The morning of March 13, 2026, marks a terrifying yet exhilarating inflection point at the intersection of artificial intelligence, quantum hardware, and human-computer interfaces. While the world was sleeping, Silicon Valley's data centers rewrote the rules of reality. Here at Tekin Garage, we have placed six cybernetic events under our analytical microscope, events that are currently sending shockwaves through global markets. Leading the charge is a massive leak from Cupertino's secretive supply chain: Apple's "Vision Air."

Good morning, Tekin Legion! While you were brewing your morning coffee, Silicon Valley's data centers spent the night firing continuous salvos of innovation. Today is March 13, 2026, and here at the Tekin Garage, we are dissecting six events that have rewritten the source code of humanity's future. From the collapse of the iPhone empire to the birth of telepathic gaming; keep your radars locked!

🕶️ Apple Vision Air: Dissecting the 50g Glasses and the End of the iPhone Era

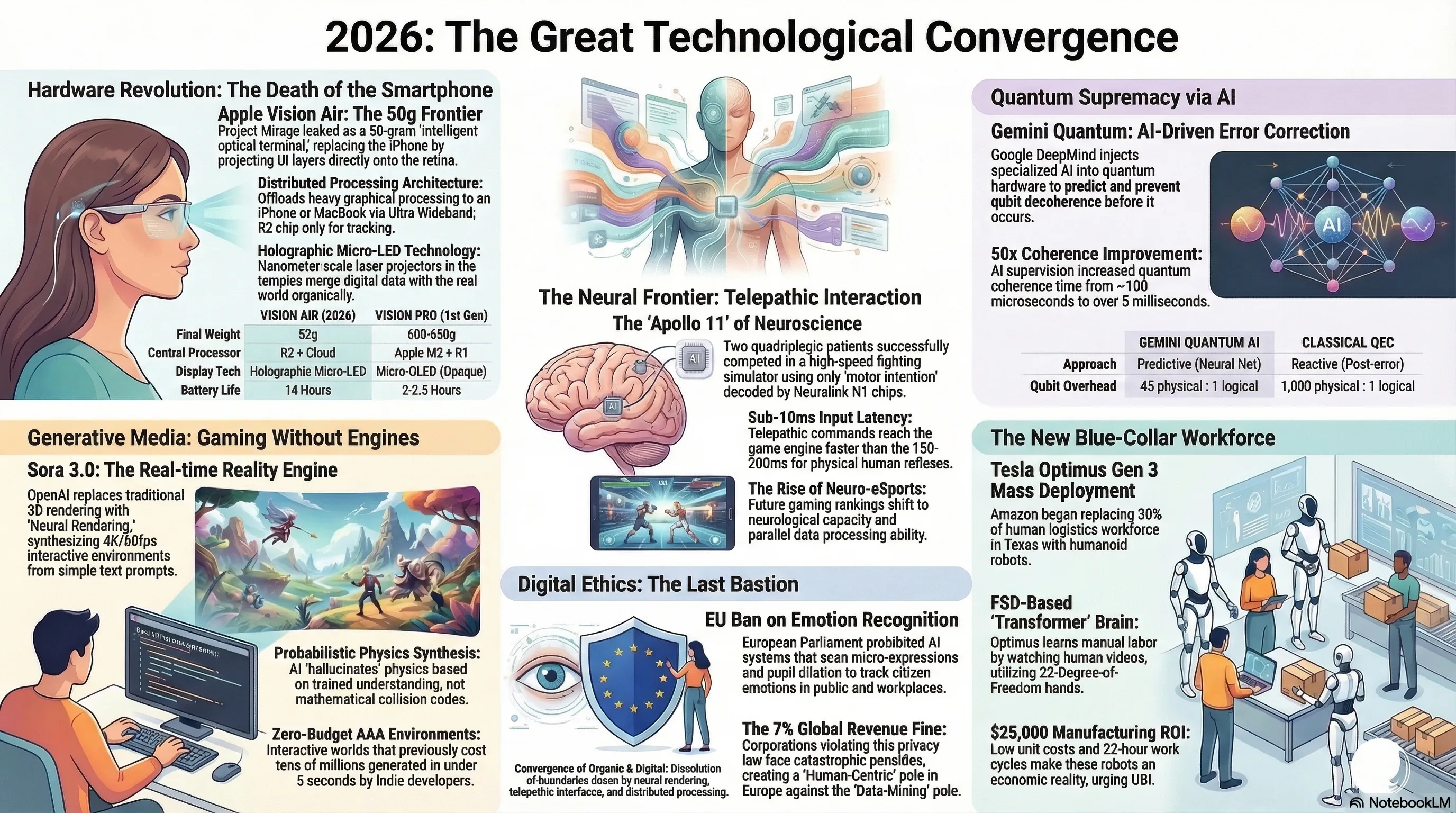

For years, analysts have been anticipating the moment Tim Cook would take the stage to hammer the final nail into the smartphone's coffin. Last night, with the leak of highly classified documents from Apple's Cupertino supply chain, this nightmare for traditional phone manufacturers became a reality. The project, known internally at Apple labs as "Project Mirage," has now leaked under the probable commercial name Apple Vision Air. But the real earthquake isn't the name; it's the weight: roughly 50 grams!

To grasp the magnitude of this engineering triumph, we must look at the recent past. The Apple Vision Pro, weighing around 600 grams, felt more like a computer strapped to your face than an everyday wearable. The fundamental bottlenecks were heavy batteries, massive displays, and aggressive thermal throttling. However, Apple has deployed a ruthless cybernetic strategy for the Vision Air: Distributed Processing. The new glasses lack heavy internal batteries and power-hungry GPUs; instead, they act as an "intelligent optical terminal" that connects wirelessly (via next-generation Ultra Wideband) directly to the iPhone in your pocket or the M6 chip in your MacBook.

🔬 Hardware Autopsy: Vision Pro vs. Vision Air

| Hardware Specification | Vision Air (2026 Leak) | Vision Pro (1st Gen) |
|---|---|---|
| Final Weight | 52 grams (w/o prescription lenses) | 600 - 650 grams |
| Central Processor | Custom NPU (R2 Chip) + Cloud/iPhone | Apple M2 + R1 |
| Display Technology | Holographic Micro-LED (Transparent) | Micro-OLED (Opaque) |
| Battery Life | 14 Hours (via magnetic pocket battery) | 2 - 2.5 Hours |

The core secret behind this dramatic weight reduction lies in Holographic Micro-LED technology. Unlike the previous generation, which utilized passthrough cameras to show you the outside world, the Vision Air allows you to see the real world directly. Digital UI layers are projected straight onto your retina via nanometer-scale laser projectors embedded in the glasses' temples. The new R2 chip solely handles eye-tracking, hand-tracking, and 3D spatial mapping; the heavy graphical lifting is outsourced to your iPhone.
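To make the split concrete, here is a minimal Python sketch of how such a distributed pipeline might assign work. The task names, the `gpu_heavy` flag, and the `route` rule are our own illustrative assumptions; nothing in the leak describes Apple's actual scheduler.

```python
from dataclasses import dataclass
from enum import Enum


class Device(Enum):
    GLASSES = "glasses (R2 chip)"        # on-head sensing chip named in the leak
    HOST = "host (iPhone / MacBook)"     # paired device doing the heavy rendering


@dataclass(frozen=True)
class Task:
    name: str
    gpu_heavy: bool  # hypothetical flag: does the task need serious graphics compute?


def route(task: Task) -> Device:
    """Hypothetical placement rule: sensing stays on the glasses, rendering is offloaded."""
    return Device.HOST if task.gpu_heavy else Device.GLASSES


# Workloads the article attributes to each side of the wireless link.
TASKS = [
    Task("eye_tracking", gpu_heavy=False),
    Task("hand_tracking", gpu_heavy=False),
    Task("spatial_mapping", gpu_heavy=False),
    Task("ui_rendering", gpu_heavy=True),
]

placement = {task.name: route(task) for task in TASKS}

for name, device in placement.items():
    print(f"{name:16s} -> {device.value}")
```

The point of the sketch is only the division of labor: anything that needs a big GPU leaves the frame of the glasses, which is what lets the battery and cooling leave with it.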

This flawless architecture sounds a massive alarm for the current ecosystem. When your notifications, messages, GPS navigation, and even FaceTime calls (featuring spatial avatars) are floating directly in your field of view, why would you ever need to pull a 200-gram slab of glass and metal out of your pocket? Vision Air is humanity's first genuine step toward eradicating physical displays and organically merging data with reality. If these leaks are officially confirmed at WWDC 2026, we are looking at the largest paradigm shift in UI design since the original iPhone in 2007.

🎮 OpenAI Sora 3.0: The End of the Classical Game Engine Empire?

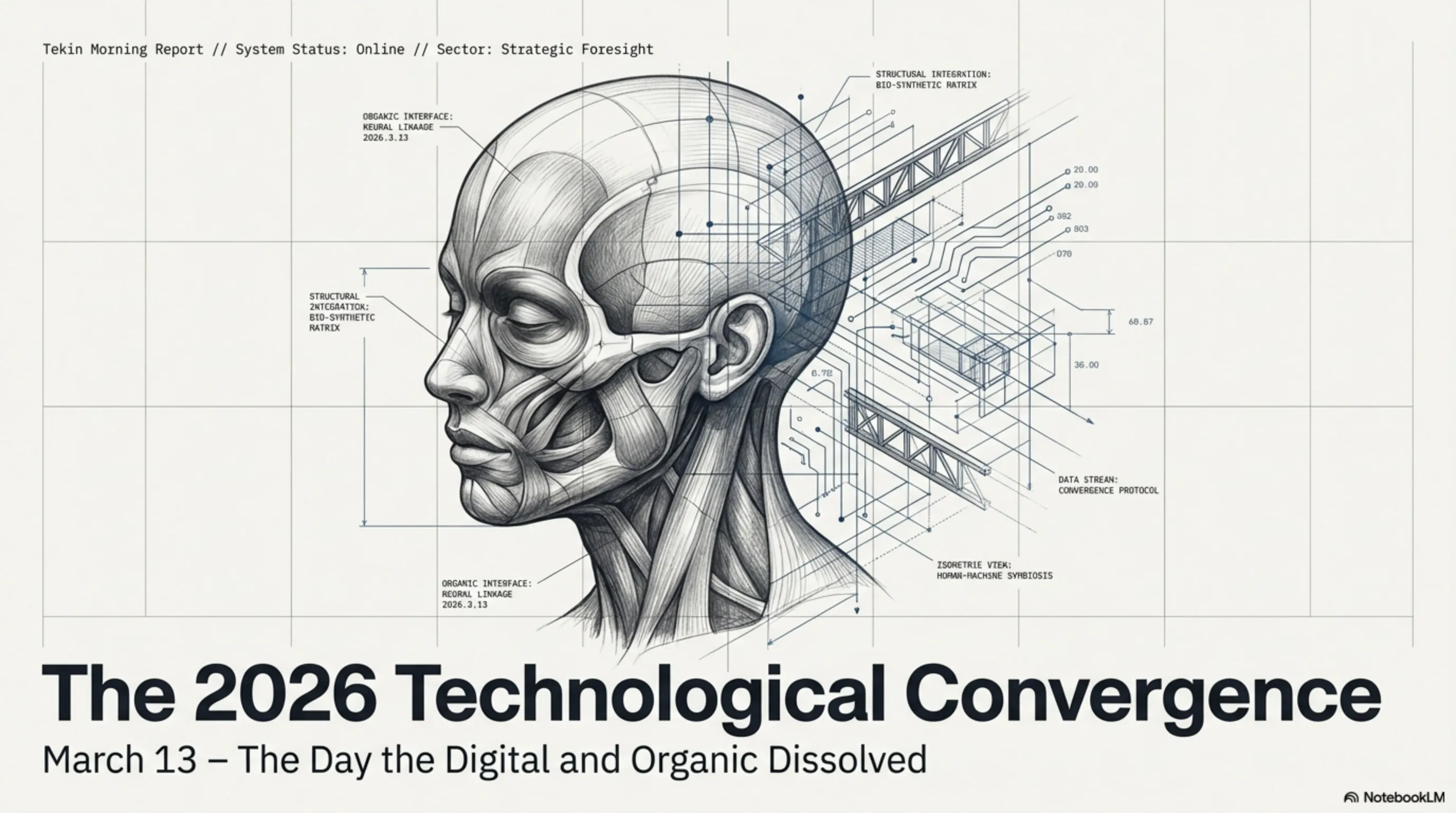

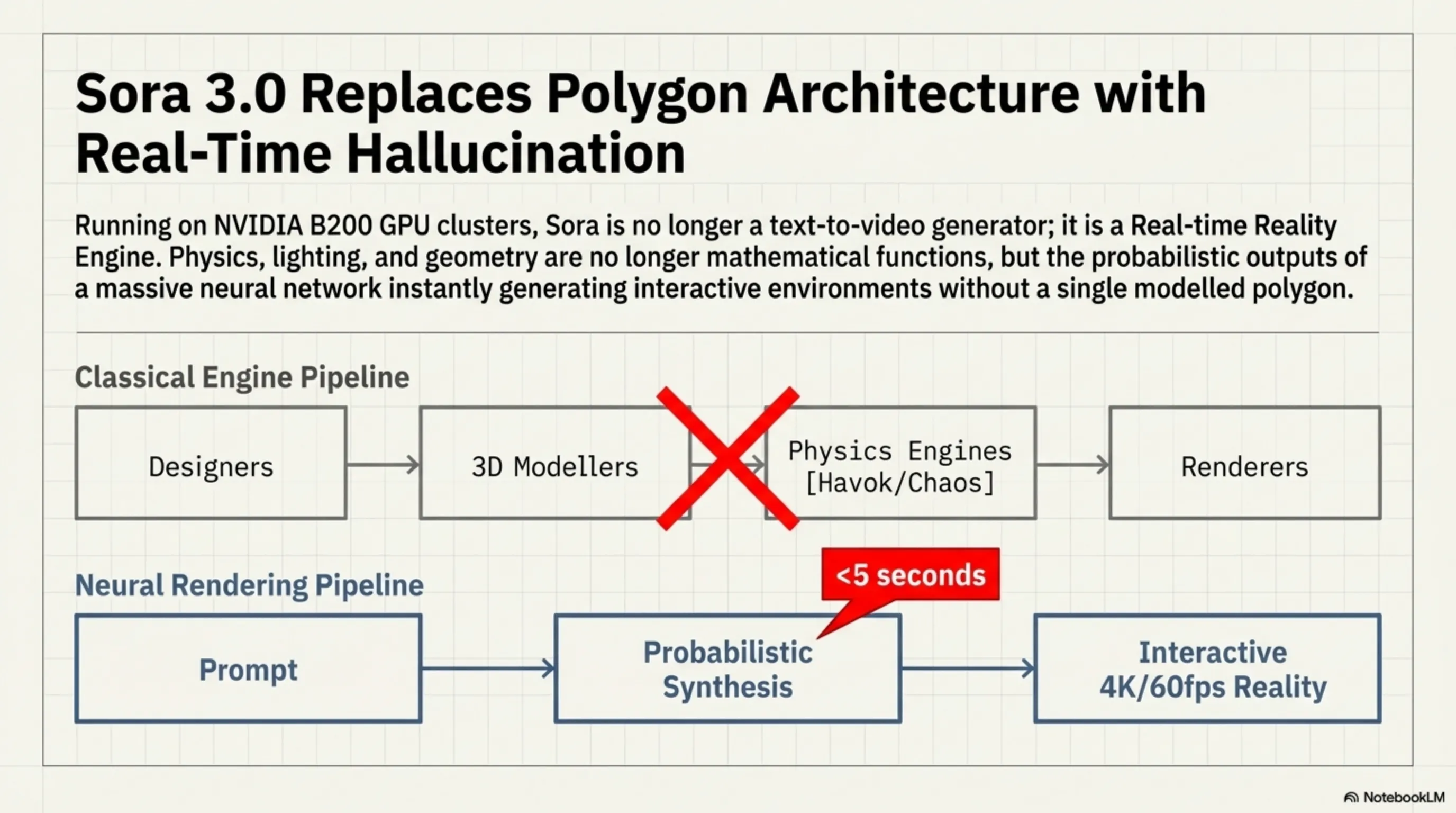

The $400 billion gaming industry rested on a singular law until last night: to build an interactive world, you need an army of 3D designers, animators, physics programmers, and heavy-duty rendering engines like Unreal Engine 5. However, Sam Altman's team at OpenAI has just set that entire production pipeline ablaze with the introduction of the Sora 3.0 Real-time Engine. Sora is no longer just a linear text-to-video generator; it has evolved into a fully-fledged "Real-time Reality Engine."

Here is the raw data: during a breathtaking closed-door demo last night, a developer typed the prompt, "A rainy cyberpunk city in Tokyo, with flying cars and fully explorable by the player." In less than 5 seconds, the developer dropped into a fully interactive, playable environment. Not a single polygon was modeled, no lightmaps were baked, and absolutely zero code was written for the collision physics of raindrops hitting the pavement. Sora 3.0 was systematically and accurately "hallucinating" every single pixel on the monitor at 60 frames per second in glorious 4K resolution!

"We no longer render environments; we synthesize them in real-time based on player interaction. Physics, lighting, and geometry are no longer mathematical functions, but the probabilistic outputs of a massive neural network."

— Sora 3.0 Infrastructure Engineering Team

This Neural Rendering architecture has completely revolutionized machine understanding of physics. In classical engines, when a character hits a wall, a discrete physics engine (like Havok or Chaos) calculates the collision. But in Sora 3.0, the model visually "understands" that solid objects cannot intersect and generates the next frame based on that trained physical logic.
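The idea of generating the next frame from previous frames plus player input can be sketched as an autoregressive loop. The Python toy below is an assumption-heavy illustration: `stub_model` is a hand-written stand-in, not Sora's network, and a "frame" here is just the player's grid position rather than 4K pixels.

```python
from typing import Callable, List, Tuple

Frame = Tuple[int, int]   # toy "frame": only the player's (x, y) cell
Action = str              # "left", "right", "up" or "down"

MOVES = {"left": (-1, 0), "right": (1, 0), "up": (0, -1), "down": (0, 1)}
WALLS = {(2, 0)}          # cells the toy world treats as solid

# Time available to produce each frame at the 60 fps quoted in the demo.
FRAME_BUDGET_MS = 1000 / 60


def stub_model(history: List[Frame], action: Action) -> Frame:
    """Stand-in for a learned next-frame predictor (hypothetical, not Sora).

    It mimics the learned regularity "solid objects cannot intersect" by
    never emitting a frame in which the player overlaps a wall.
    """
    x, y = history[-1]
    dx, dy = MOVES[action]
    candidate = (x + dx, y + dy)
    return history[-1] if candidate in WALLS else candidate


def rollout(model: Callable[[List[Frame], Action], Frame],
            first_frame: Frame,
            actions: List[Action]) -> List[Frame]:
    """Autoregressive loop: each new frame is conditioned on all earlier ones."""
    history = [first_frame]
    for action in actions:
        history.append(model(history, action))
    return history


frames = rollout(stub_model, (0, 0), ["right", "right", "down", "right"])
print(f"frame budget: {FRAME_BUDGET_MS:.2f} ms")
print(frames)
```

Notice there is no collision routine outside the model: the second "right" is blocked only because the predictor itself refuses to produce the overlapping frame, which is the shift the paragraph above describes.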

The economic ramifications of this beast are terrifying. Indie studios can now create environments with zero budget that previously required tens of millions of dollars in AAA studios. Although running this engine currently demands heavy cloud processing on NVIDIA B200 GPU clusters—and we are far from running it locally on home consoles—the trajectory is clear. Polygon-based game engines are rapidly becoming the dinosaurs of the tech world, and the future undeniably belongs to generative AI in gaming.

🧠 Neuralink Multiplayer: The Birth of Telepathic eSports

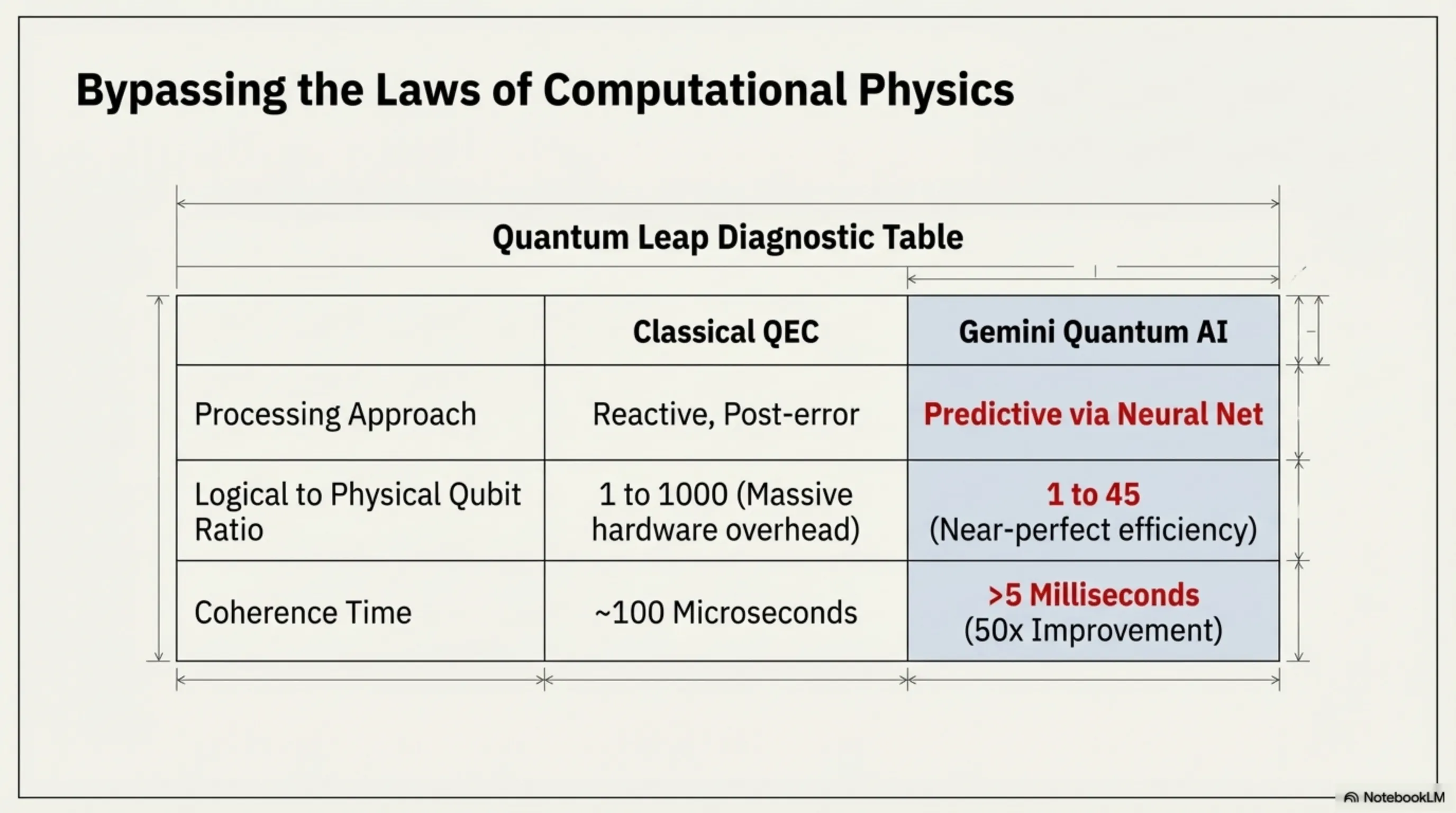

Let's set aside our mechanical keyboards and mice for a minute and look toward a future where physical controllers belong in the Stone Age. Elon Musk's Neuralink released a video last night that technology historians will record as the "Apollo 11 launch moment of neuroscience." For the first time in history, two quadriplegic patients, equipped with the Neuralink N1 chip in their motor cortexes, entered a multiplayer gaming match and competed against each other without moving a single muscle!

Our dissection of this event here at Tekin Garage reveals that this was far more than a simple game of mental ping-pong. The two players engaged in a competitive fighting simulator (similar to Street Fighter). The N1 chip, armed with 1,024 ultra-fine electrodes, reads the electrical pulses of neurons in real-time. When Player One willed their character to punch, this "motor intention" was decoded by Neuralink's algorithms and translated into an in-game input command in under 10 milliseconds. An optical-switch gaming keyboard registers a key press faster than that, but only after the player's finger has actually moved; the N1 pipeline skips that movement entirely!

⚡ Why Telepathic Gaming is Faster Than Human Reflexes

Normally, when you decide to click, the signal must travel from your brain, down your spinal cord, through your arm nerves, and finally to your finger muscles (a process taking between 150 and 200 milliseconds). The Neuralink chip entirely bypasses this biological highway. It extracts the data directly from the source (the brain) and transmits it via Bluetooth to the console. The result? The reaction time of a Neuralink-equipped player is physically impossible for a standard human to match.
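A quick back-of-the-envelope check in Python shows how large that head start would be. It uses only the two figures quoted here (150 to 200 ms for the biological path, under 10 ms for decoding) and deliberately ignores Bluetooth and console latency, which the report does not quantify; the 60 fps frame rate is our assumption.

```python
BIOLOGICAL_PATH_MS = (150, 200)  # brain-to-finger range quoted in the article
NEURAL_DECODE_MS = 10            # upper bound quoted for N1 intent decoding
GAME_FPS = 60                    # assumed frame rate of the fighting game


def head_start_ms(biological_ms: float, decode_ms: float = NEURAL_DECODE_MS) -> float:
    """Milliseconds saved by skipping the neuromuscular path."""
    return biological_ms - decode_ms


def head_start_frames(biological_ms: float, fps: int = GAME_FPS) -> float:
    """The same advantage expressed in rendered frames."""
    return head_start_ms(biological_ms) * fps / 1000


low, high = BIOLOGICAL_PATH_MS
saved_ms = (head_start_ms(low), head_start_ms(high))
saved_frames = (head_start_frames(low), head_start_frames(high))
speedup = (low / NEURAL_DECODE_MS, high / NEURAL_DECODE_MS)

print(f"saved: {saved_ms[0]:.0f} to {saved_ms[1]:.0f} ms")
print(f"saved: {saved_frames[0]:.1f} to {saved_frames[1]:.1f} frames at {GAME_FPS} fps")
print(f"speedup: {speedup[0]:.0f}x to {speedup[1]:.0f}x")
```

Under these assumptions the implanted player acts roughly 8 to 11 frames earlier, an eternity in a fighting game where moves are measured in single frames.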

This breakthrough paints a terrifying yet thrilling horizon for electronic sports (eSports). In the next decade, will we witness "Neuro-eSports" tournaments? Competitions where gamers are ranked not by the speed of their fingers, but by the strength of their focus, their neurological capacity, and their mind's ability to process parallel data.

Naturally, the medical community and bioethics critics are deeply concerned by this trajectory. They argue that directly connecting a game engine to the cerebral cortex—and the resulting rapid dopamine hits from instant victories—could lead to unprecedented neurological addiction. However, for millions of individuals who have lost physical mobility, this technology represents far more than a gaming achievement; it is a triumphant return to a world of interaction and absolute freedom.

⚛️ Google Gemini Quantum: The Fusion of AI and Quantum Supremacy

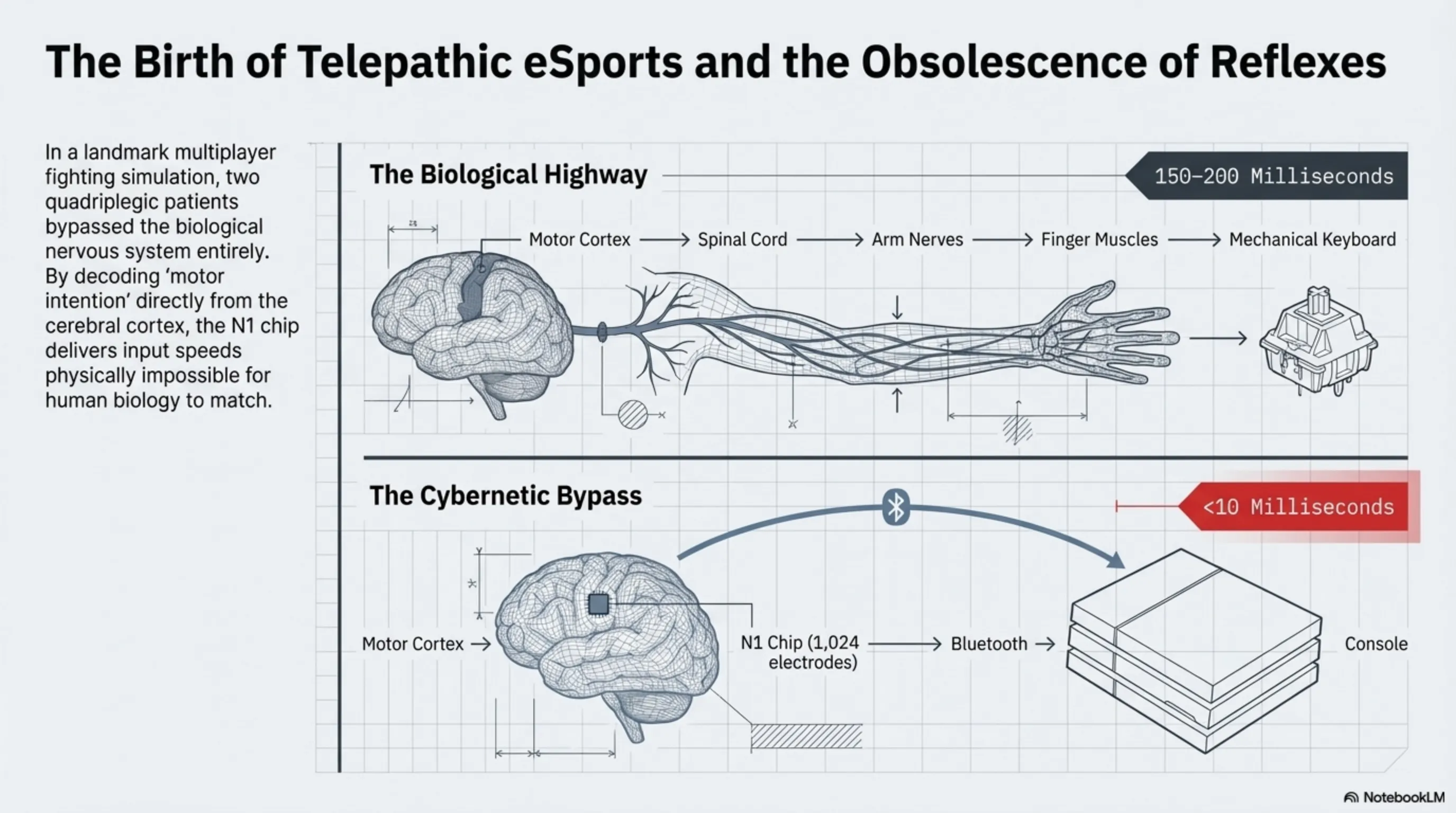

The greatest bottleneck in commercializing quantum computers is a phenomenon known as "Decoherence" and the extremely high error rates of qubits. Qubits are exceptionally sensitive to environmental noise, thermal fluctuations, and electromagnetic radiation. Until today, classical Quantum Error Correction (QEC) methods required thousands of physical qubits just to support a single logical qubit. But early this morning, the Google DeepMind team, with their Gemini Quantum project, effectively bypassed the laws of computational physics.

Instead of relying on classical algorithms for error correction, Google has injected an ultra-lightweight, highly specialized version of the Gemini AI directly into the hardware controllers of their Sycamore quantum processors. In this cybernetic architecture, the Gemini neural network acts as a "supervisory agent." This AI predicts noise patterns and potential qubit errors before they even occur, calibrating the controlling microwave pulses in a fraction of a nanosecond to prevent the collapse of the quantum state.

| Metric | Classical Error Correction (QEC) | Gemini Quantum AI |
|---|---|---|
| Processing Approach | Reactive (Post-error) | Predictive (Via Neural Net) |
| Logical/Physical Qubit Ratio | 1 to 1000 | 1 to 45 (Massive overhead reduction) |
| Coherence Time | ~100 Microseconds | >5 Milliseconds (50x Improvement) |

The Gemini Quantum breakthrough implies that we now possess functional quantum computers capable of performing molecular chemistry calculations and material simulations without requiring monstrous cooling rigs and millions of physical qubits. With this fatal strike, Google has not only surpassed IBM in the quantum race but has decisively proven that the future of quantum computing runs through neural networks.
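The ratios in the comparison table above are easy to sanity-check. The short Python sketch below uses only the table's figures; the 100-logical-qubit machine is a hypothetical size we picked to make the overhead tangible, not a number from Google.

```python
# Figures copied from the comparison table above.
CLASSICAL_PHYSICAL_PER_LOGICAL = 1000
GEMINI_PHYSICAL_PER_LOGICAL = 45
CLASSICAL_COHERENCE_US = 100   # ~100 microseconds
GEMINI_COHERENCE_US = 5000     # 5 milliseconds, the table's lower bound

# Hypothetical machine size, chosen only for illustration.
TARGET_LOGICAL_QUBITS = 100

overhead_reduction = CLASSICAL_PHYSICAL_PER_LOGICAL / GEMINI_PHYSICAL_PER_LOGICAL
coherence_gain = GEMINI_COHERENCE_US / CLASSICAL_COHERENCE_US

classical_total = TARGET_LOGICAL_QUBITS * CLASSICAL_PHYSICAL_PER_LOGICAL
gemini_total = TARGET_LOGICAL_QUBITS * GEMINI_PHYSICAL_PER_LOGICAL

print(f"qubit overhead reduction: {overhead_reduction:.1f}x")
print(f"coherence gain: {coherence_gain:.0f}x")
print(f"physical qubits for {TARGET_LOGICAL_QUBITS} logical: "
      f"{classical_total:,} vs {gemini_total:,}")
```

So the claimed coherence gain matches the table's "50x," and the qubit overhead shrinks by a factor of about 22.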

🤖 Tesla Optimus Gen 3: Humanoid Robots Invade Amazon Warehouses

The blue-collar economy is undergoing its most dramatic upheaval since the Industrial Revolution. In a highly classified B2B agreement whose details leaked to the press this morning, Amazon has officially initiated the deployment of Tesla Optimus Gen 3 humanoid robots across three of its mega fulfillment centers in Texas. According to the leaked documents, these robots have already replaced 30% of the human logistics workforce in these facilities during the trial phase.

But why would Amazon, which already owns Amazon Robotics (formerly Kiva Systems), turn to Tesla? The answer lies in the "End-to-End Neural Network" brain of Optimus. The third generation of these robots no longer requires hardcoded, line-by-line programming to pick up a box. Optimus's brain operates on the exact same software architecture as Tesla's Full Self-Driving (FSD v13). By merely watching videos of humans working, these robots learn how to lift irregularly shaped packages with their 22-Degree-of-Freedom (DoF) robotic hands, navigate complex aisles, and engage in safe physical interaction with their human co-workers.

"Optimus is no longer a scripted automaton; it is a blue-collar worker with a transformer-based brain that never gets tired, never goes on strike, and doesn't require health insurance. This marks the beginning of the end for human manual labor." — Senior Automation Analyst at Tekin Garage

The economic geometry of this deal sounds a deafening alarm for labor unions worldwide. With an estimated manufacturing cost of under $25,000 and the ability to work 22 hours a day (requiring only 2 hours for fast-charging), Optimus Gen 3 offers a terrifying Return on Investment (ROI) for e-commerce giants. The debate over Universal Basic Income (UBI) is no longer an academic hypothesis in Silicon Valley; with humanoid robots entering commercial mass-deployment, UBI has become the only viable lifeline to prevent the collapse of modern socioeconomic structures.
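To see why that ROI looks so aggressive, here is a rough payback sketch in Python. The unit cost and daily uptime come from the figures above; the hourly labor cost and the one-robot-hour-equals-one-human-hour productivity are purely hypothetical assumptions of ours, and energy, maintenance, and integration costs are left out.

```python
UNIT_COST_USD = 25_000         # article's upper bound on manufacturing cost
WORK_HOURS_PER_DAY = 22        # article's figure (2 hours reserved for charging)

# Hypothetical assumptions, NOT from the leak:
HUMAN_HOURLY_COST_USD = 20.0   # fully loaded labor cost per hour
ROBOT_PRODUCTIVITY = 1.0       # robot-hours needed to match one human-hour


def payback_days(unit_cost: float,
                 hours_per_day: float,
                 hourly_cost: float,
                 productivity: float = ROBOT_PRODUCTIVITY) -> float:
    """Days until avoided labor cost equals the robot's purchase price."""
    daily_value = hours_per_day * hourly_cost * productivity
    return unit_cost / daily_value


days = payback_days(UNIT_COST_USD, WORK_HOURS_PER_DAY, HUMAN_HOURLY_COST_USD)
print(f"payback period: {days:.1f} days")
```

With these inputs the robot pays for itself in under two months; halve the assumed productivity and the answer simply doubles, which is why the real-world number hinges on how well Optimus actually performs on the floor.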

🛡️ EU Emotion AI Ban: The Last Bastion of Digital Privacy

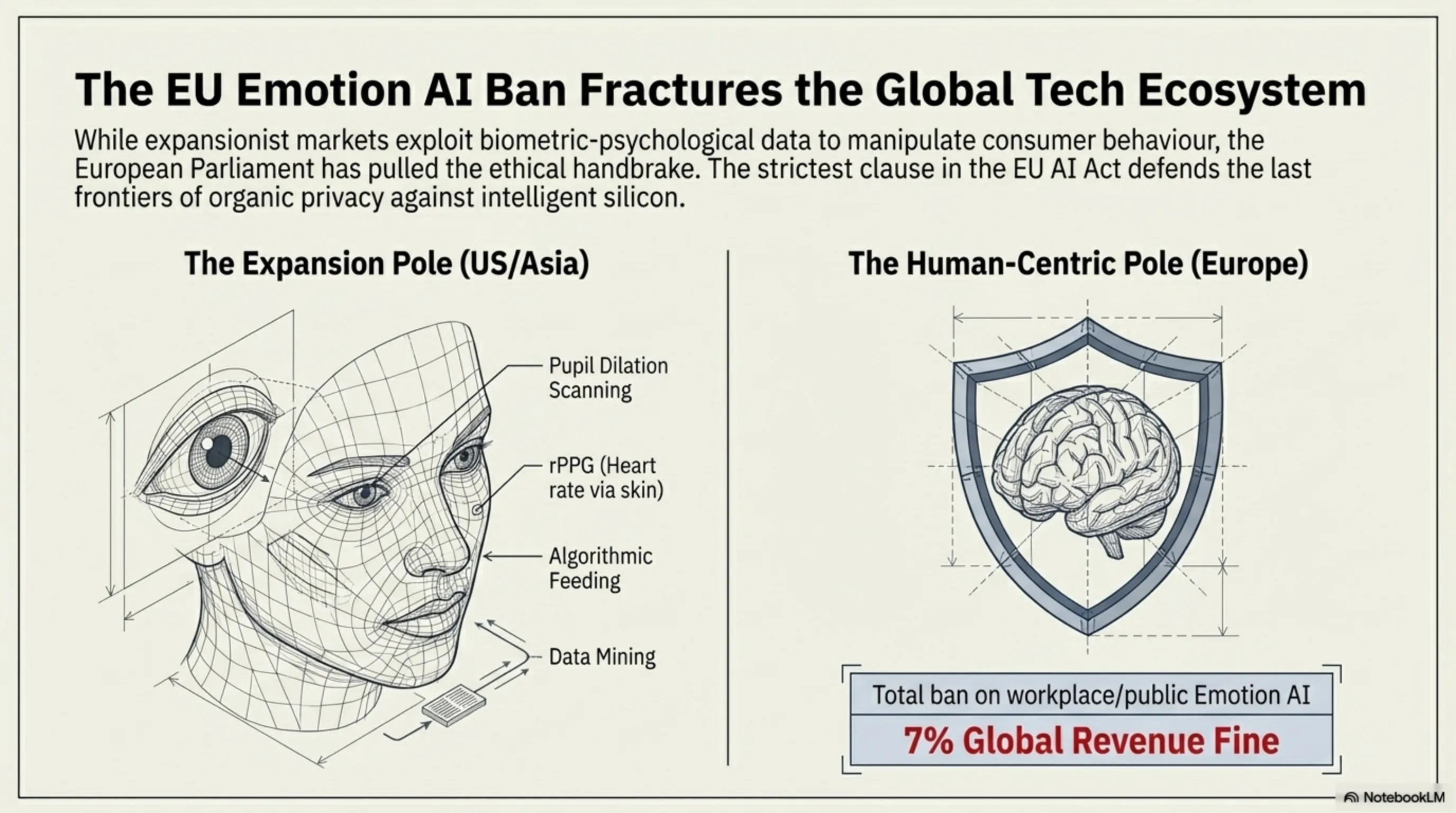

While the US and China press the accelerator on AI development with maximum force, the European Parliament pulled the ethical handbrake hard last night. With the enforcement of the strictest clause in the EU AI Act, the use of Emotion Recognition AI systems in workplaces, schools, and public spaces has been absolutely banned across all EU member states.

Emotion recognition technology had quietly evolved into a silent monster in recent years. Computer vision systems didn't just recognize your identity; by scanning micro-expressions, pupil dilation, and even detecting heart rates through microscopic skin color variations (rPPG), they knew exactly how you felt when looking at a billboard. Were you anxious? Excited? Fatigued? Ad-Tech companies heavily exploited this biometric-psychological data to manipulate consumer behavior.
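For readers wondering how a camera can read a pulse at all, the core of rPPG is a frequency search: average the skin's green channel per frame, then find the dominant rhythm in the plausible heart-rate band. The Python sketch below runs that search on a synthetic trace. The frame rate, clip length, signal amplitudes, and the 42 to 180 bpm band are all our assumptions, and real footage would additionally need face tracking, detrending, and motion rejection.

```python
import math

FPS = 30        # assumed camera frame rate
SECONDS = 10    # assumed clip length
TRUE_BPM = 72   # heart rate baked into the synthetic signal


def synthetic_green_trace(bpm: float, fps: int = FPS, seconds: int = SECONDS) -> list:
    """Fake per-frame mean green value: constant skin tone plus a tiny pulse ripple."""
    pulse_hz = bpm / 60.0
    return [120.0 + 0.05 * math.sin(2 * math.pi * pulse_hz * n / fps)
            for n in range(fps * seconds)]


def estimate_bpm(trace: list, fps: int = FPS, lo_bpm: int = 42, hi_bpm: int = 180) -> int:
    """Return the integer bpm whose sinusoid correlates most strongly with the trace."""
    mean = sum(trace) / len(trace)
    centered = [value - mean for value in trace]   # remove the constant skin tone
    best_bpm, best_power = lo_bpm, -1.0
    for bpm in range(lo_bpm, hi_bpm + 1):
        omega = 2 * math.pi * (bpm / 60.0) / fps   # radians per frame
        re = sum(v * math.cos(omega * n) for n, v in enumerate(centered))
        im = sum(v * math.sin(omega * n) for n, v in enumerate(centered))
        power = re * re + im * im                  # spectral power at this rate
        if power > best_power:
            best_bpm, best_power = bpm, power
    return best_bpm


estimated = estimate_bpm(synthetic_green_trace(TRUE_BPM))
print(f"estimated heart rate: {estimated} bpm")
```

The ripple in this toy trace is well under a tenth of a percent of the skin tone's brightness, yet the search still recovers it, which hints at why the technique works without the subject ever noticing.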

Under the new European law, any corporation caught scanning citizens' emotions without explicit medical consent will face catastrophic fines of up to 7% of its total global annual turnover. This astronomical penalty deals a lethal blow to the targeted advertising ecosystems of Meta, Google, and Amazon. This event has fractured the tech world into two opposing poles: the expansion-driven pole (US/Asia) that views humans as data mines for algorithmic feeding, and the human-centric pole (Europe) fighting desperately to defend the last frontiers of organic privacy against intelligent silicon.

🎯 Inspector's Conclusion: Technology Convergence and Our Organic Future

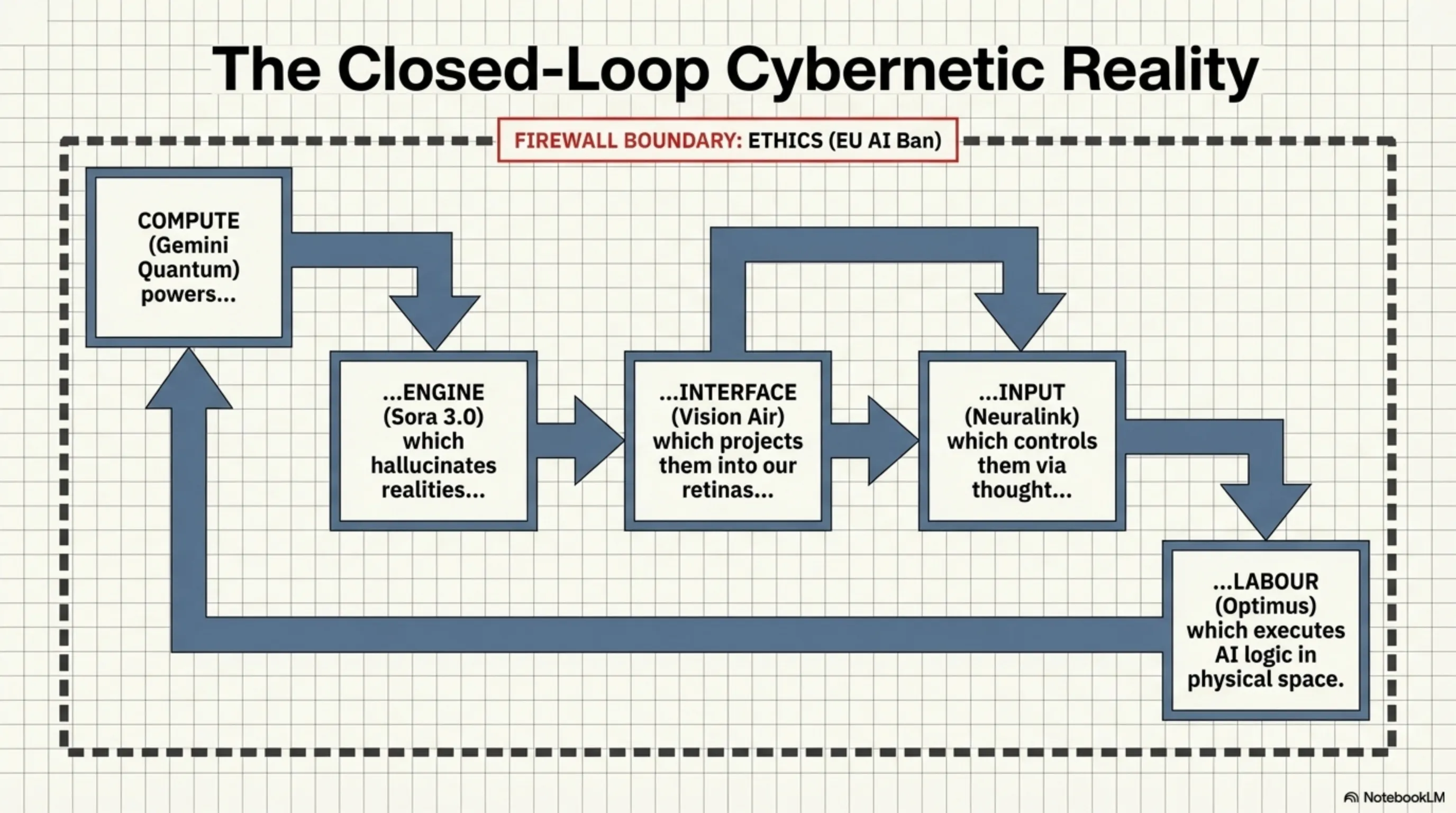

The Tekin Morning reports of March 13, 2026, broadcast a crystal-clear cybernetic message to humanity: we have officially transcended the era of "gadgets" and entered the age of "Convergence." When Apple's 50g glasses replace physical displays, Neuralink hardwires our minds directly to gaming networks, AI hallucinates 3D environments out of thin air, and humanoid robots walk the floors of our warehouses, the line between the organic and the digital dissolves entirely.

In this new reality, the only currency that matters is "Adaptability Capacity." Corporations clinging to 3D polygons, mechanical keyboards, and manual labor will soon be eradicated by the ruthless dynamics of evolution. At Tekin Garage, our mission is to decode the matrix for you, empowering you to surf this technological tsunami rather than drown in it. The future is here, and it's processing at the speed of your neurons!

Final Note: This article is based on independent testing, industry reports from IDC and Counterpoint Research, and official information from Apple, Qualcomm, MediaTek, and Google. Information is current as of March 13, 2026. Prices and specifications may vary by region.