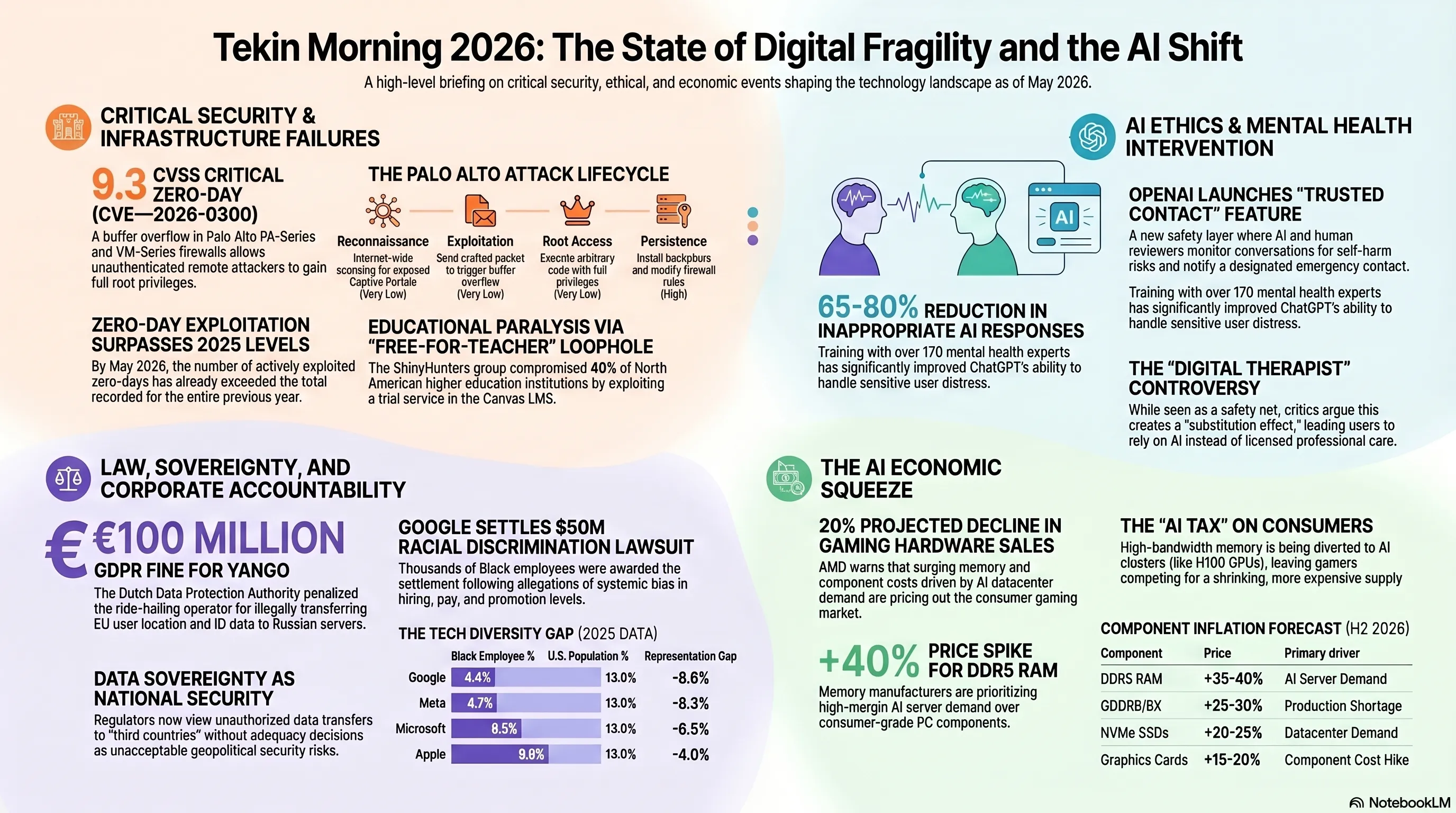

In the May 10, 2026 Tekin Morning briefing, we dissect six major tech stories. We cover the ShinyHunters cyberattack on Canvas LMS, the critical zero-day exploit in Palo Alto Networks firewalls, and Yango's €100M GDPR fine for transferring EU data to Russia. We also analyze OpenAI's new Trusted Contact feature, Google's $50M racial discrimination settlement, and AMD's warning that higher memory costs driven by the AI boom will cut its second-half gaming revenue by more than 20%.

🌅 Welcome to Tekin Morning — Sunday, May 10, 2026

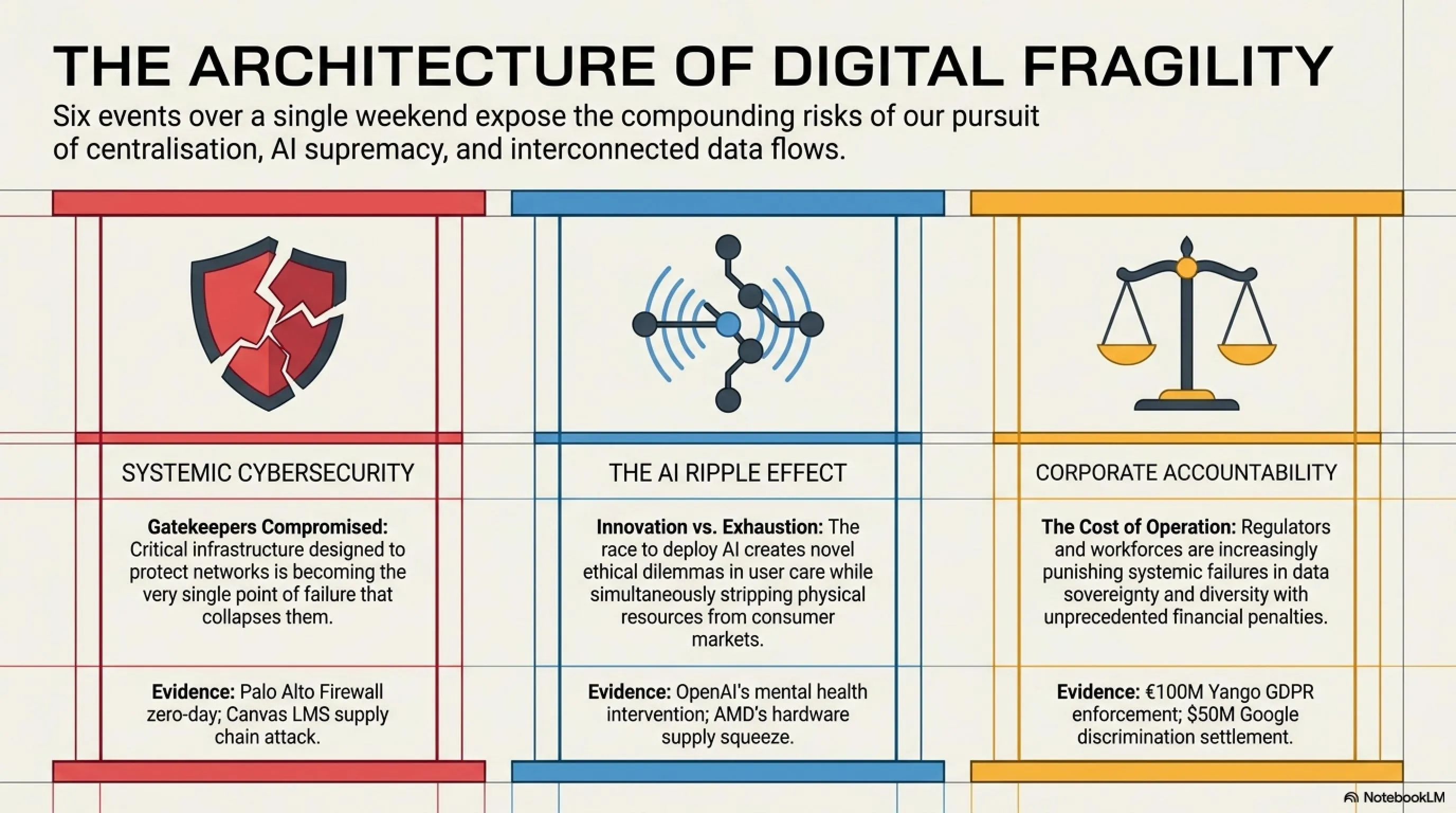

Good morning, Tekin Army! Sunday, May 10, 2026, opens with six explosive stories that expose the fragility of our digital infrastructure, the ethical dilemmas of AI safety, the high cost of privacy violations, and the collateral damage of the AI boom on consumer markets. Today's briefing covers critical zero-day exploits, groundbreaking AI safety features, massive GDPR fines, devastating cyberattacks on education, corporate accountability for racial discrimination, and the gaming hardware crisis triggered by AI demand.

⚡ Today's Headlines:

🔥 Palo Alto Firewalls Under Active Zero-Day Attack with Root Access

🛡️ OpenAI Launches Trusted Contact Feature to Prevent Self-Harm

💰 Yango Fined €100M for Illegally Transferring EU Data to Russia

🎓 ShinyHunters Cyberattack Paralyzes Canvas During Finals Week

⚖️ Google Settles $50M Racial Discrimination Lawsuit with Black Employees

💻 AMD Warns: Memory Crisis Will Cut Second-Half Gaming Revenue by More Than 20%

☕ Grab your coffee and prepare for a comprehensive journey through the most critical tech stories shaping our digital future.

🔥 Palo Alto Networks Firewalls Under Active Zero-Day Attack — CVE-2026-0300 Grants Root Access Without Authentication

In what security researchers are calling one of the most critical vulnerabilities of 2026, Palo Alto Networks disclosed on May 5 that its PA-Series and VM-Series firewalls are being actively exploited through a zero-day vulnerability that allows unauthenticated remote attackers to execute arbitrary code with root privileges. The vulnerability, tracked as CVE-2026-0300 with a critical CVSS score of 9.3 out of 10, represents a catastrophic failure in one of the most trusted enterprise security platforms.

⚠️ Critical Security Alert: The Technical Breakdown

Vulnerability Type: Buffer Overflow in User-ID Authentication Portal (Captive Portal)

CVSS Score: 9.3 (Critical)

Affected Products: PA-Series and VM-Series Firewalls running PAN-OS

Authentication Required: None (Unauthenticated RCE)

Access Level: Root Privileges (Complete System Control)

Exploitation Timeline: Active since April 2026 (pre-disclosure)

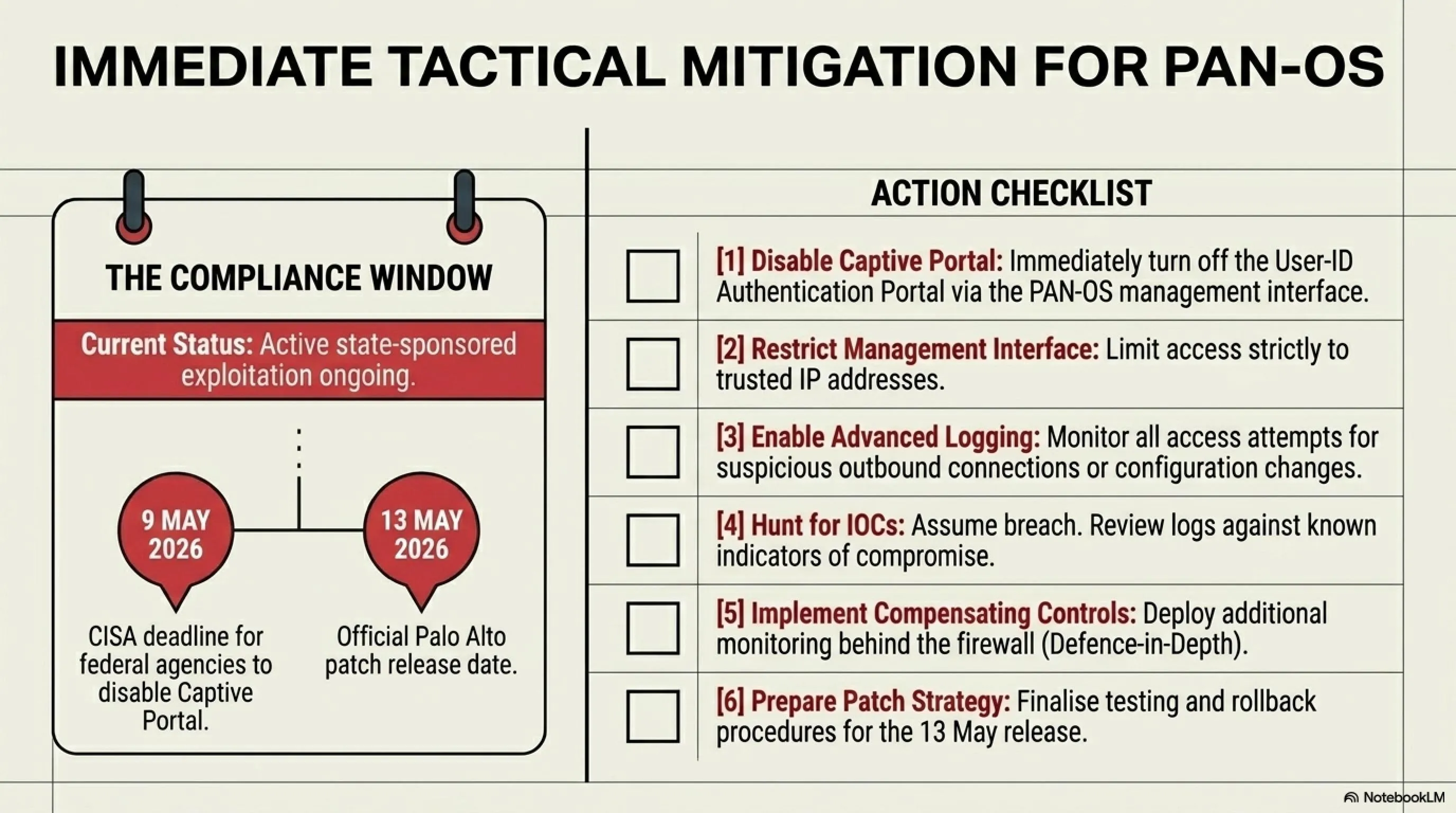

Patch Release Date: May 13, 2026

CISA Deadline for Federal Agencies: May 9, 2026

Estimated Affected Organizations: 200+ confirmed, potentially thousands more
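For readers wondering why a 9.3 is labeled "Critical", here is a minimal sketch of the standard CVSS qualitative severity bands (None, Low, Medium, High, Critical). The function name `cvss_severity` is our own, chosen for illustration; it is not part of any vendor tool.

```python
def cvss_severity(score: float) -> str:
    """Map a CVSS base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS base scores range from 0.0 to 10.0")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"       # 0.1 - 3.9
    if score < 7.0:
        return "Medium"    # 4.0 - 6.9
    if score < 9.0:
        return "High"      # 7.0 - 8.9
    return "Critical"      # 9.0 - 10.0


print(cvss_severity(9.3))  # Critical
```

Anything at 9.0 or above lands in the top band, which is where CVE-2026-0300 sits.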

According to multiple security firms including CrowdStrike, Mandiant, and Palo Alto's own Unit 42, the vulnerability has been actively exploited since at least April 2026 by state-sponsored threat actors. The attack vector is devastatingly simple: attackers send specially crafted network packets to the Captive Portal login page, triggering a buffer overflow that allows them to inject and execute malicious code with full root privileges — all without needing a username or password.

🔍 Tekin Analysis: Why This Attack Is Catastrophic

Palo Alto Networks firewalls are deployed in over 70,000 large organizations worldwide, including Fortune 500 companies, central banks, government agencies, and critical infrastructure operators. These firewalls are specifically designed to be the "gatekeeper" of enterprise networks, inspecting and controlling all traffic flowing in and out. When the gatekeeper itself becomes compromised, the entire security architecture collapses.

The Attack Scenario: An attacker can compromise a Palo Alto firewall with a single HTTP request to the Captive Portal. Once inside with root access, they can:

- Intercept and modify all network traffic passing through the firewall, including encrypted communications

- Pivot into the internal network and move laterally to compromise additional systems

- Disable or modify firewall rules to create persistent backdoors

- Deploy malware across the entire network using the firewall as a distribution point

- Exfiltrate sensitive data while appearing as legitimate traffic

- Establish command-and-control infrastructure that bypasses traditional detection methods

Why No Patch Until May 13? Palo Alto Networks stated that due to the complexity of the PAN-OS architecture and the critical nature of firewall operations, extensive testing is required to ensure the patch doesn't introduce new vulnerabilities or cause operational disruptions. In the meantime, organizations must disable the Captive Portal feature entirely — a workaround that breaks functionality for many deployments.

Industry Response and Attribution: The U.S. Cybersecurity and Infrastructure Security Agency (CISA) immediately added CVE-2026-0300 to its Known Exploited Vulnerabilities catalog and issued an emergency directive requiring all federal agencies to disable the Captive Portal feature by May 9, 2026. Multiple security vendors have attributed the attacks to state-sponsored Advanced Persistent Threat (APT) groups, with strong indicators pointing to Chinese and Russian threat actors based on infrastructure analysis, tactics, techniques, and procedures (TTPs).

🛡️ Immediate Mitigation Steps for Organizations

1. Disable Captive Portal Immediately: If you're not actively using this feature, disable it now via the PAN-OS management interface.

2. Restrict Management Interface Access: Limit access to the management interface to specific trusted IP addresses only.

3. Enable Advanced Logging: Turn on comprehensive logging for all Captive Portal access attempts and monitor for suspicious activity.

4. Hunt for Indicators of Compromise (IOCs): Review firewall logs for unusual authentication attempts, configuration changes, or unexpected outbound connections.

5. Prepare for May 13 Patch: Plan your patching strategy now, including testing procedures and rollback plans.

6. Consider Network Segmentation: Implement additional network segmentation to limit the blast radius if your firewall is compromised.

7. Deploy Compensating Controls: Add additional monitoring and detection capabilities behind the firewall as a defense-in-depth measure.
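Steps 2 through 4 above can be roughed out in a few lines of Python. This is only a sketch under stated assumptions: the CSV log layout, the `/captive-portal` path, the trusted network range, and the size threshold are all hypothetical placeholders, not PAN-OS log formats or vendor-published indicators of compromise. Adapt them to whatever your own export actually looks like.

```python
import csv
import ipaddress
from io import StringIO

# Hypothetical values for illustration only (not PAN-OS formats or real IOCs).
TRUSTED_NETWORKS = [ipaddress.ip_network("10.0.0.0/8")]
PORTAL_PATH = "/captive-portal"
MAX_REQUEST_BYTES = 4096


def is_trusted(ip: str) -> bool:
    """Return True if the source address falls inside a trusted network."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in TRUSTED_NETWORKS)


def suspicious_requests(log_text: str) -> list[dict]:
    """Flag portal requests that are oversized or come from untrusted sources."""
    hits = []
    for row in csv.DictReader(StringIO(log_text)):
        if not row["path"].startswith(PORTAL_PATH):
            continue  # only portal traffic is of interest here
        oversized = int(row["bytes"]) > MAX_REQUEST_BYTES
        if oversized or not is_trusted(row["src_ip"]):
            hits.append(row)
    return hits


SAMPLE_LOG = """timestamp,src_ip,path,bytes
2026-05-06T10:00:00Z,10.1.2.3,/captive-portal/login,512
2026-05-06T10:00:05Z,203.0.113.7,/captive-portal/login,70000
2026-05-06T10:00:09Z,198.51.100.4,/status,300
"""

for hit in suspicious_requests(SAMPLE_LOG):
    print(hit["timestamp"], hit["src_ip"], hit["bytes"])
```

On the sample data only the second row is flagged: it targets the portal path, comes from outside the trusted range, and carries an unusually large request. A real hunt would lean on the vendor's published indicators rather than a simple size threshold.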

💡 Tekin Insight: This vulnerability is a stark reminder that even the most trusted security infrastructure can become a single point of failure. The principle of "defense in depth" has never been more critical: never rely on a single security layer, no matter how robust it appears. Organizations should implement multiple overlapping security controls, adopt an assume-breach mentality, and maintain robust incident response capabilities.

📊 The Broader Context: Zero-Day Vulnerabilities in 2026

CVE-2026-0300 is part of a disturbing trend in 2026: the discovery and exploitation of critical zero-day vulnerabilities in enterprise security infrastructure. According to data from Mandiant and Google's Threat Analysis Group, the number of actively exploited zero-days in the first four months of 2026 has already exceeded the total for all of 2025.

Why the increase? Security researchers point to several factors:

- AI-Powered Vulnerability Discovery: Both attackers and defenders are using AI to find vulnerabilities faster than ever before

- Increased Sophistication of State-Sponsored Groups: Nation-state actors are investing heavily in offensive cyber capabilities

- Growing Attack Surface: The proliferation of IoT devices, cloud services, and remote work infrastructure has expanded the attack surface exponentially

- Supply Chain Complexity: Modern software relies on countless dependencies, each potentially introducing vulnerabilities

- Economic Incentives: The underground market for zero-day exploits continues to grow, with some vulnerabilities selling for millions of dollars

What This Means for You: If your organization uses Palo Alto Networks firewalls, this is not a drill. The window between vulnerability disclosure and widespread exploitation is measured in hours, not days. Organizations that delay action are gambling with their entire security posture. The attackers already have the exploit code, they're already scanning for vulnerable targets, and they're already inside some networks. The question is not whether you'll be targeted, but whether you'll be ready when you are.

🛡️ OpenAI Launches Trusted Contact Feature — AI Safety Meets Mental Health Crisis Prevention

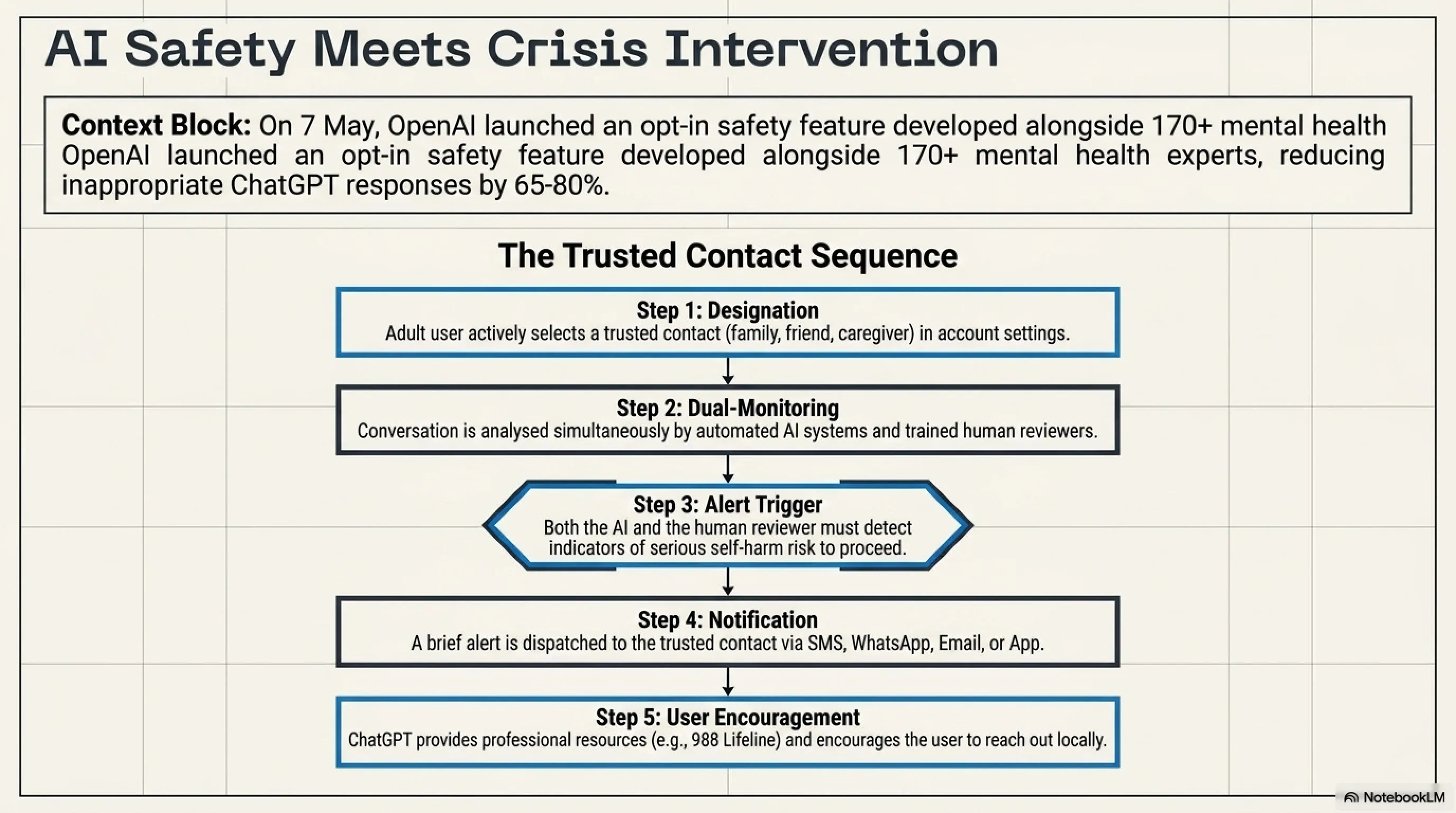

In an unprecedented move that blurs the line between AI technology and mental health intervention, OpenAI announced on May 7, 2026, the launch of Trusted Contact, an optional safety feature for ChatGPT that allows adult users to designate a trusted person who will be notified if the system detects signs of serious self-harm risk during conversations. This feature represents a significant shift in how AI companies approach user safety and raises profound questions about the role of artificial intelligence in mental health support.

🤖 How Trusted Contact Works: The Technical and Ethical Framework

Step 1 — Designation: Adult ChatGPT users can designate one trusted contact (friend, family member, or caregiver) in their account settings.

Step 2 — Monitoring: A combination of automated AI systems and trained human reviewers monitor conversations for indicators of serious self-harm risk.

Step 3 — Alert Trigger: If both automated systems and human reviewers determine a conversation indicates serious self-harm risk, an alert is prepared.

Step 4 — Notification: A brief alert is sent to the trusted contact via email, SMS, WhatsApp, or in-app notification.

Step 5 — User Encouragement: ChatGPT encourages the user to reach out to their trusted contact and provides professional mental health resources.
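The key design point in the steps above is the double gate in Step 3: an alert goes out only when the automated system and a human reviewer agree, and only for users who opted in. A minimal sketch of that logic follows. The `Review` class and `should_notify_contact` function are hypothetical names of our own; this is not OpenAI's implementation, which has not been published.

```python
from dataclasses import dataclass


@dataclass
class Review:
    contact_designated: bool  # user opted in and named a trusted contact
    automated_flag: bool      # automated system flagged serious risk
    human_confirmed: bool     # trained reviewer agreed with the flag


def should_notify_contact(review: Review) -> bool:
    """Alert only when the user opted in AND both review stages agree."""
    return (
        review.contact_designated
        and review.automated_flag
        and review.human_confirmed
    )


# An automated flag alone is not enough to trigger a notification.
print(should_notify_contact(Review(True, True, False)))  # False
print(should_notify_contact(Review(True, True, True)))   # True
```

Requiring agreement from both stages trades some speed for fewer mistaken alerts, which matters given the false-positive concerns critics have raised.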

The feature comes in response to growing concerns about millions of users turning to ChatGPT as a "digital therapist" for mental health support. According to OpenAI's announcement, the company worked with more than 170 mental health experts to train ChatGPT to more reliably recognize signs of distress, respond with care, and guide people toward real-world support. The company claims this training has reduced inappropriate responses in sensitive conversations by 65-80%.

📊 The Mental Health Crisis in the AI Age: By the Numbers

The Controversy and Divided Reactions: The Trusted Contact feature has sparked intense debate across the tech and mental health communities. Supporters, including several prominent mental health organizations, praise it as "an additional layer of human support during potentially serious mental health situations." Critics, however, raise serious concerns about privacy, the potential for false positives, and the risk of AI companies overstepping their role.

🔍 Tekin Analysis: Is This Enough? The Deeper Questions

The Trusted Contact feature raises fundamental questions about the role and responsibility of AI companies in user wellbeing. Should tech companies be in the business of mental health intervention? Is this feature genuinely designed to save lives, or is it primarily a legal shield against lawsuits?

The Legal Context: OpenAI currently faces multiple lawsuits alleging that ChatGPT provided inappropriate or harmful responses in conversations involving self-harm and suicidal ideation. The Trusted Contact feature could serve as evidence of "reasonable care" in future litigation, potentially limiting OpenAI's liability. This doesn't necessarily mean the feature is insincere, but it does highlight the complex intersection of corporate liability, user safety, and AI ethics.

The False Positive Problem: Mental health assessment is notoriously difficult, even for trained professionals. How accurate can an AI system be at detecting genuine self-harm risk versus someone discussing dark themes in creative writing, researching mental health topics, or simply having a bad day? A false positive could trigger an unnecessary crisis intervention, potentially damaging relationships and causing the very distress the system aims to prevent.
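To see why false positives are hard to avoid, consider the base-rate arithmetic. The sketch below computes what fraction of flagged conversations would be genuine under purely illustrative numbers. The sensitivity, specificity, and prevalence values are assumptions we picked for the example, not figures reported by OpenAI.

```python
def positive_predictive_value(sensitivity: float,
                              specificity: float,
                              prevalence: float) -> float:
    """Share of flagged cases that are true positives (Bayes' rule)."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


# Hypothetical detector: catches 90% of real cases, wrongly flags 1% of
# benign conversations, and real risk appears in 1 of every 1,000 chats.
ppv = positive_predictive_value(sensitivity=0.90,
                                specificity=0.99,
                                prevalence=0.001)
print(f"{ppv:.1%}")  # 8.3%
```

Under these assumed numbers, fewer than one in ten automated flags would be genuine, which helps explain why a human review stage sits between the automated flag and any notification.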

The Substitution Effect: Perhaps the most concerning risk is that features like Trusted Contact might create a false sense of security, leading users to rely on AI for mental health support instead of seeking professional help. ChatGPT, no matter how well-trained, is not a substitute for licensed therapists, psychiatrists, or crisis counselors. The danger is that by making AI seem "safer" for mental health discussions, we might inadvertently discourage people from accessing the professional care they need.

💡 What This Means for Users: Practical Guidance

If you're considering using Trusted Contact:

• Choose your trusted contact carefully — it should be someone who can respond appropriately to a mental health crisis

• Understand that conversations may be monitored by both AI systems and human reviewers

• Be aware that false positives are possible and could lead to unnecessary interventions

• Remember that this feature is a supplement to, not a replacement for, professional mental health care

If you're struggling with mental health:

• Seek professional help from licensed therapists, counselors, or psychiatrists

• In a crisis, contact emergency services (911 in the U.S.) or the 988 Suicide and Crisis Lifeline

• Use AI tools like ChatGPT as supplementary support, not primary treatment

• Build a real-world support network of friends, family, and professionals

The Broader Implications: The Trusted Contact feature is likely just the beginning of AI companies taking more active roles in user safety and wellbeing. As AI systems become more deeply integrated into our daily lives, the line between technology provider and care provider will continue to blur. This raises important questions about regulation, liability, and the appropriate scope of AI company responsibilities. Should AI companies be required to implement safety features like Trusted Contact? Should they be held liable if their systems fail to detect genuine crises? These are questions that policymakers, ethicists, and society as a whole will need to grapple with in the coming years.

💰 Yango Fined €100 Million for Illegally Transferring EU Data to Russia — GDPR Enforcement Reaches New Heights

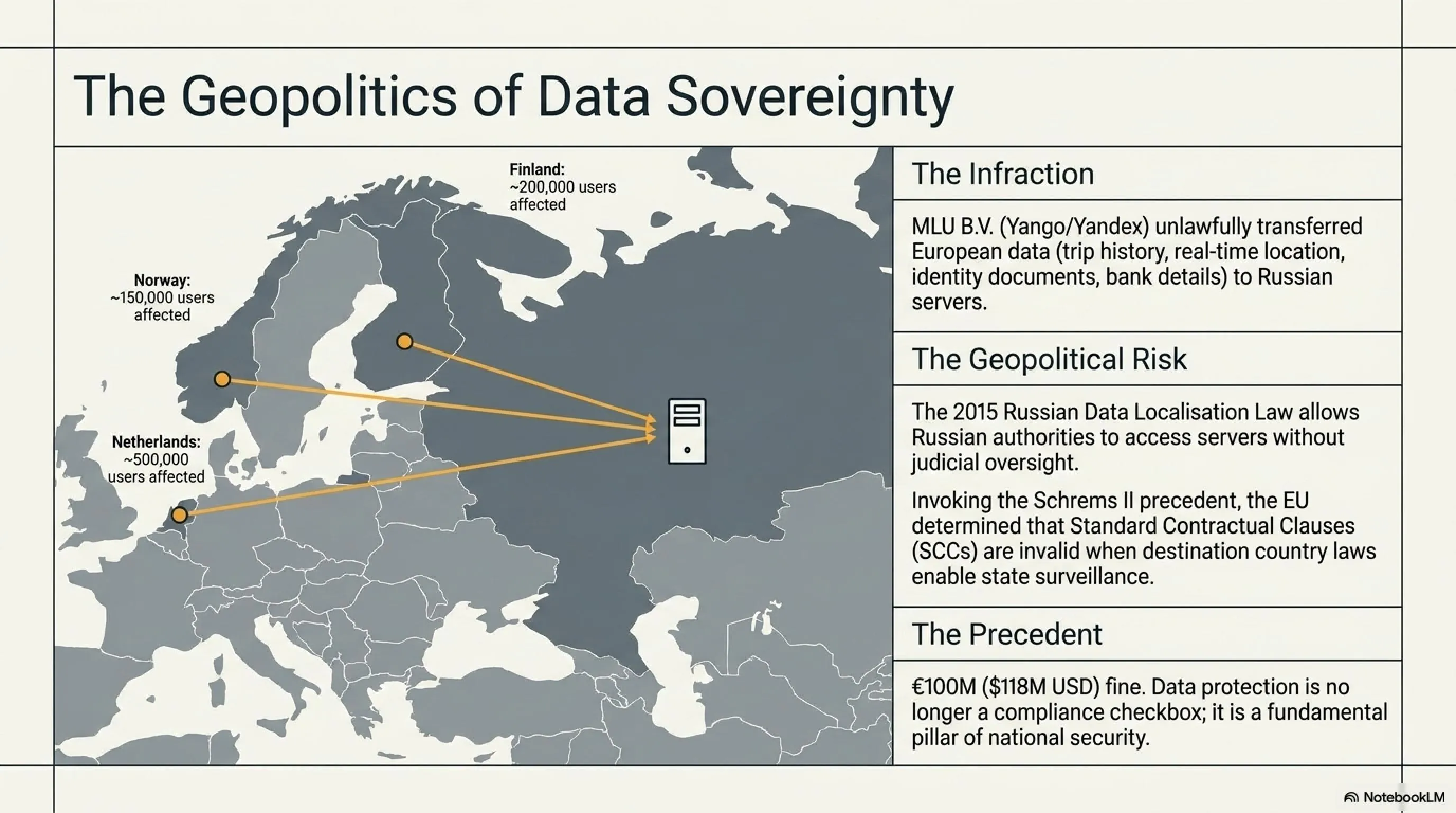

In a landmark enforcement action that demonstrates the European Union's unwavering commitment to data protection, the Dutch Data Protection Authority (Autoriteit Persoonsgegevens) announced on May 8, 2026, a €100 million ($118 million) fine against MLU B.V., the operator of ride-hailing app Yango, for unlawfully transferring personal data of European customers and drivers to servers located in Russia. This joint action with Finnish and Norwegian authorities represents one of the largest GDPR fines ever imposed and sends a clear message about the consequences of violating EU data protection standards.

🚨 What Data Was Transferred to Russia?

• Personal Information: Names, phone numbers, email addresses

• Location Data: Complete trip history, origin and destination addresses, travel routes, real-time location tracking

• Payment Information: Bank card details, transaction history, payment patterns

• Driver Data: Identity documents, driver's licenses, vehicle registration numbers, background check results

• Behavioral Metadata: App usage patterns, preferences, communication logs

• Device Information: Device IDs, IP addresses, operating system details

Yango, which has roots in Russian tech giant Yandex, operates in several European countries including the Netherlands, Finland, and Norway. According to the Dutch authorities, the company stored sensitive user data on servers located in Russia, where it could potentially be accessed by Russian government agencies under Russian law. This violates the EU's General Data Protection Regulation (GDPR), which prohibits the transfer of personal data to countries that do not provide adequate data protection safeguards.

🔍 Tekin Analysis: Why This Fine Matters — The Geopolitics of Data

This €100 million fine is about far more than just data protection compliance — it's about sovereignty, national security, and the geopolitical implications of data flows in an increasingly fragmented digital world. The EU's action against Yango demonstrates that data protection has become a critical component of national security strategy.

Why Russia Specifically? Under Russian law, specifically the 2015 Data Localization Law and subsequent amendments, Russian authorities can compel companies to provide access to data stored on Russian servers without judicial oversight. This means that location data, travel patterns, and personal information of European citizens could potentially be accessed by Russian intelligence services. In the context of ongoing geopolitical tensions, this represents an unacceptable security risk.

The GDPR Framework: The General Data Protection Regulation prohibits the transfer of personal data to "third countries" (countries outside the European Economic Area) unless those countries provide "adequate" data protection. Russia does not have an adequacy decision from the European Commission, meaning data transfers require specific safeguards such as Standard Contractual Clauses (SCCs) or Binding Corporate Rules (BCRs). However, even these mechanisms can fail if the destination country's laws allow government access to data in ways that conflict with EU fundamental rights, which is exactly the situation with Russia.
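The decision path in the paragraph above can be sketched as a small function. This is a deliberate simplification with hypothetical names: it mirrors only the three checks described here and ignores other GDPR routes such as the Article 49 derogations.

```python
def transfer_assessment(has_adequacy_decision: bool,
                        has_sccs_or_bcrs: bool,
                        local_law_conflicts_with_eu_rights: bool) -> str:
    """Simplified view of whether a third-country transfer has a legal basis."""
    if has_adequacy_decision:
        return "permitted: adequacy decision"
    if not has_sccs_or_bcrs:
        return "not permitted: no adequacy decision and no safeguards"
    if local_law_conflicts_with_eu_rights:
        return "not permitted: safeguards undermined by local law"
    return "permitted: appropriate safeguards"


# The situation described for Russia: no adequacy decision, and local law
# that undermines contractual safeguards even where they exist.
print(transfer_assessment(False, True, True))
```

The point of the third check is that paperwork alone is not enough: contractual safeguards only count if the destination country's laws let them work in practice.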

The Schrems II Precedent: This enforcement action follows the logic of the landmark Schrems II decision, which invalidated the EU-US Privacy Shield framework due to concerns about US government surveillance. The same principles apply to Russia, but with even greater concerns given the lack of independent judicial oversight and rule of law protections.

Industry Implications: The Yango fine sends a clear message to all companies operating in the EU: data localization and transfer restrictions are not mere technicalities — they are fundamental requirements with severe financial consequences for non-compliance. This is particularly relevant for companies with ties to countries that lack strong data protection frameworks or have laws that enable government surveillance.

💡 Tekin Insight: For consumers, this case is a reminder to carefully consider where your data is stored and who has access to it. When using any online service, especially those involving location tracking or sensitive personal information, check the company's privacy policy to understand where data is stored and whether it's transferred to countries with weak data protection laws. In an era of increasing geopolitical tensions, data sovereignty is not just a legal issue — it's a personal security issue.

🎓 ShinyHunters Cyberattack Paralyzes Canvas LMS — Thousands of Universities Held Hostage During Finals Week

In one of the most disruptive cyberattacks on educational infrastructure in history, the notorious hacking group ShinyHunters launched a sophisticated attack on May 7, 2026, against Instructure's Canvas Learning Management System, taking the platform offline for over 12 hours and compromising data from millions of students across thousands of universities. The timing was devastating: the attack occurred during finals week, when students desperately needed access to course materials, assignments, and grades.

⚠️ Attack Details and Timeline

Target: Instructure Canvas LMS (Learning Management System)

Attacker: ShinyHunters (notorious cybercriminal group)

Attack Date: May 7, 2026, 2:50 PM ET

Outage Duration: 12+ hours

Affected Organizations: Thousands of universities and schools (40% of North American higher education institutions)

Compromised Data: Names, email addresses, student ID numbers, messages between users

Ransom Deadline: May 12, 2026 (threat to publish data)

Peak DownDetector Reports: 8,500+ within first hour

Canvas, developed by Instructure, is used by approximately 40% of higher education institutions in North America, including prestigious universities such as Stanford, Northwestern, UCLA, and hundreds of others. The platform serves as the central hub for online learning, hosting course materials, assignments, grades, discussions, and communications between students and faculty. When Canvas went down during finals week, millions of students were unable to access critical resources needed for their final exams.

🔍 How ShinyHunters Breached Canvas: The Attack Vector

According to Reuters and Instructure's official statement, the attackers exploited a vulnerability in the Free-for-Teacher service, a feature that allows educators without Canvas accounts to trial certain platform features. This seemingly innocuous feature became the entry point for one of the most significant educational cyberattacks in history.

The Attack Chain:

- Initial Access: Exploited vulnerability in Free-for-Teacher service to gain unauthorized access

- Privilege Escalation: Leveraged initial access to escalate privileges within Canvas infrastructure

- Data Exfiltration: Extracted user data including names, emails, student IDs, and messages

- Service Disruption: Deployed ransomware or similar mechanism to take Canvas offline

- Extortion: Contacted universities with ransom demands and threat to publish stolen data

The Ransom Tactic: ShinyHunters sent threatening messages to affected universities: "Contact us and reach a settlement by May 12, or we will publish all data." This tactic created immense pressure on universities to negotiate directly with the attackers to prevent the public release of student data.

Instructure's Response: The company issued a statement confirming that the attack had been "contained and remediated" and that Canvas services had been restored. However, Instructure acknowledged that some user data, including names, email addresses, student ID numbers, and messages between users, had been compromised. The company is working with law enforcement and cybersecurity firms to investigate the breach and assess the full extent of the damage.

🕵️ The ShinyHunters Dossier: One of the Most Dangerous Cybercriminal Groups

ShinyHunters is a notorious cybercriminal group that has been active since 2020 and is responsible for some of the largest data breaches in history. The group has previously targeted major corporations including Microsoft, AT&T, Ticketmaster, and Santander Bank, stealing and selling hundreds of millions of user records.

ShinyHunters' Modus Operandi:

• Target high-value databases containing sensitive personal information

• Exfiltrate massive amounts of data (often hundreds of millions of records)

• Sell stolen data on underground forums and dark web marketplaces

• Extort victim organizations with threats to publish data

• Operate with apparent impunity despite international law enforcement efforts

Notable Previous Attacks:

• Microsoft GitHub repositories (2020) — 500GB of private data

• Ticketmaster (2024) — 560 million customer records

• AT&T (2024) — 73 million customer records

• Santander Bank (2024) — 30 million customer and employee records

🔍 Tekin Analysis: The Lessons from the Canvas Attack

1. Dangerous Single Point of Failure: When roughly 40% of North American higher education institutions depend on a single platform, one successful attack can paralyze a large part of the educational ecosystem. This concentration risk is unacceptable for critical infrastructure. Universities need backup systems, contingency plans, and diversified technology stacks.

2. Strategic Timing: ShinyHunters deliberately chose finals week to maximize pressure on universities. This demonstrates that sophisticated attackers think strategically about when to strike for maximum impact and leverage. The timing wasn't coincidental — it was calculated to force universities into difficult decisions under extreme time pressure.

3. The Free-for-Teacher Vulnerability: Free trial and demo features are often security afterthoughts, receiving less scrutiny than production systems. Yet they provide direct access to core infrastructure. This attack demonstrates that every entry point, no matter how seemingly innocuous, must be secured with the same rigor as production systems.

4. Impact on Students: Millions of students were unable to access course materials, submit assignments, or check grades during the most critical week of the semester. Some universities were forced to postpone exams, extend deadlines, or make accommodations for affected students. The educational disruption extends far beyond the 12-hour outage, with ripple effects expected to last for weeks.

5. The Ransom Dilemma: Universities faced an impossible choice: pay the ransom to protect student data, or refuse and risk public exposure of sensitive information. Some universities reportedly contacted ShinyHunters directly to negotiate, setting a dangerous precedent that could encourage future attacks.

💡 Tekin Insight: Educational institutions are increasingly attractive targets for cybercriminals because they hold vast amounts of personal data, often have limited cybersecurity budgets, and face immense pressure to maintain operations. Universities must treat cybersecurity as a core institutional priority, not an IT afterthought. This means adequate funding, regular security audits, incident response planning, and backup systems that can maintain operations during attacks.

⚖️ Google Settles $50 Million Racial Discrimination Lawsuit — Tech Industry's Diversity Problem Exposed

In a landmark case that exposes the persistent racial inequities in the tech industry, Google agreed on May 7, 2026, to pay $50 million to settle a class-action lawsuit filed by Black employees who alleged systematic racial discrimination in hiring, compensation, and promotion practices. The lawsuit, originally filed in 2022 and represented by prominent civil rights attorney Ben Crump, represents one of the largest racial discrimination settlements in tech industry history.

⚖️ Case Details and Timeline

Lawsuit Filed: 2022

Settlement Amount: $50 million

Lead Attorney: Ben Crump (renowned civil rights attorney)

Allegations: Systematic discrimination in hiring, compensation, and promotion

Settlement Announced: May 2025

Final Approval: May 2026

Google's Position: "We strongly disagree with the allegations but agreed to settle to end the litigation"

Class Members: Thousands of current and former Black Google employees

According to the lawsuit, Google evaluated Black job candidates "through harmful racial stereotypes," hired them at lower levels than similarly qualified white candidates, paid them less, and provided fewer opportunities for advancement. The lawsuit cited internal Google data showing that Black employees were significantly underrepresented at senior levels and overrepresented in lower-level positions, even when controlling for education and experience.

📊 The Diversity Crisis in Tech: Shocking Statistics

According to Google's own diversity reports, in 2025 only 4.4% of Google's U.S. workforce was Black, despite Black Americans comprising approximately 13% of the U.S. population. This disparity is even more pronounced in technical and leadership roles, where Black representation drops to around 2-3%.

*Amazon reports a higher overall percentage, largely because of its warehouse and logistics workforce; its corporate and technical roles show underrepresentation similar to other tech companies.

Google's Response: In a carefully worded statement, Google said: "We strongly disagree with the allegations that we treated anyone improperly and remain committed to paying, hiring, and leveling all employees fairly and consistently." However, the company agreed to the $50 million settlement to "end the litigation" and avoid a lengthy and potentially damaging trial.

🔍 Tekin Analysis: Is $50 Million Enough? The Cost of Discrimination

For a company like Google, which generated over $300 billion in revenue in 2025, a $50 million settlement represents less than 0.017% of annual revenue, essentially a rounding error. Critics argue that this amount is far too small to serve as a meaningful deterrent or to adequately compensate the thousands of affected employees.
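The scale is easy to check. The short calculation below uses the two figures from the paragraph above, treating $300 billion as a floor for revenue, so the real share is slightly smaller. The variable names are ours.

```python
SETTLEMENT_USD = 50_000_000
ANNUAL_REVENUE_USD = 300_000_000_000  # "over $300 billion", used as a floor
HOURS_PER_YEAR = 365 * 24

share = SETTLEMENT_USD / ANNUAL_REVENUE_USD
hours_of_revenue = SETTLEMENT_USD / (ANNUAL_REVENUE_USD / HOURS_PER_YEAR)

print(f"{share:.4%} of annual revenue")            # 0.0167% of annual revenue
print(f"{hours_of_revenue:.2f} hours of revenue")  # 1.46 hours of revenue
```

Put differently, at that revenue level the settlement equals roughly an hour and a half of sales.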

The Real Question: Does this settlement actually change anything? Or is it simply a "cost of doing business" that allows Google to continue operating as usual while appearing to address the problem? The settlement includes no admission of wrongdoing, no requirement for structural changes to hiring and promotion practices, and no independent monitoring to ensure future compliance.

Systemic vs. Individual: The lawsuit alleges systematic discrimination — not isolated incidents, but patterns embedded in Google's hiring algorithms, performance review systems, and promotion processes. Addressing systematic discrimination requires systematic change: revising algorithms, retraining managers, implementing blind resume reviews, establishing diverse hiring panels, and creating accountability mechanisms. A financial settlement alone doesn't accomplish any of this.

Industry-Wide Problem: Google is not alone. The entire tech industry struggles with diversity, particularly in technical and leadership roles. The problem stems from multiple factors: biased hiring algorithms, homogeneous networks, "culture fit" criteria that favor dominant groups, lack of diverse interview panels, and unconscious bias in performance evaluations. Solving this requires industry-wide commitment, not just individual settlements.

💡 Tekin Insight: This settlement is a reminder that the tech industry still has a long way to go in achieving genuine diversity and inclusion. For companies aspiring to global reach, building a culture of inclusion and diversity from day one is not just ethically right — it's a business imperative. Diverse teams produce better products, make better decisions, and are more innovative. The companies that figure this out will have a significant competitive advantage.

💻 AMD Warns: Memory Crisis Will Cut Second-Half Gaming Revenue by More Than 20% as the AI Boom Squeezes Out Gamers

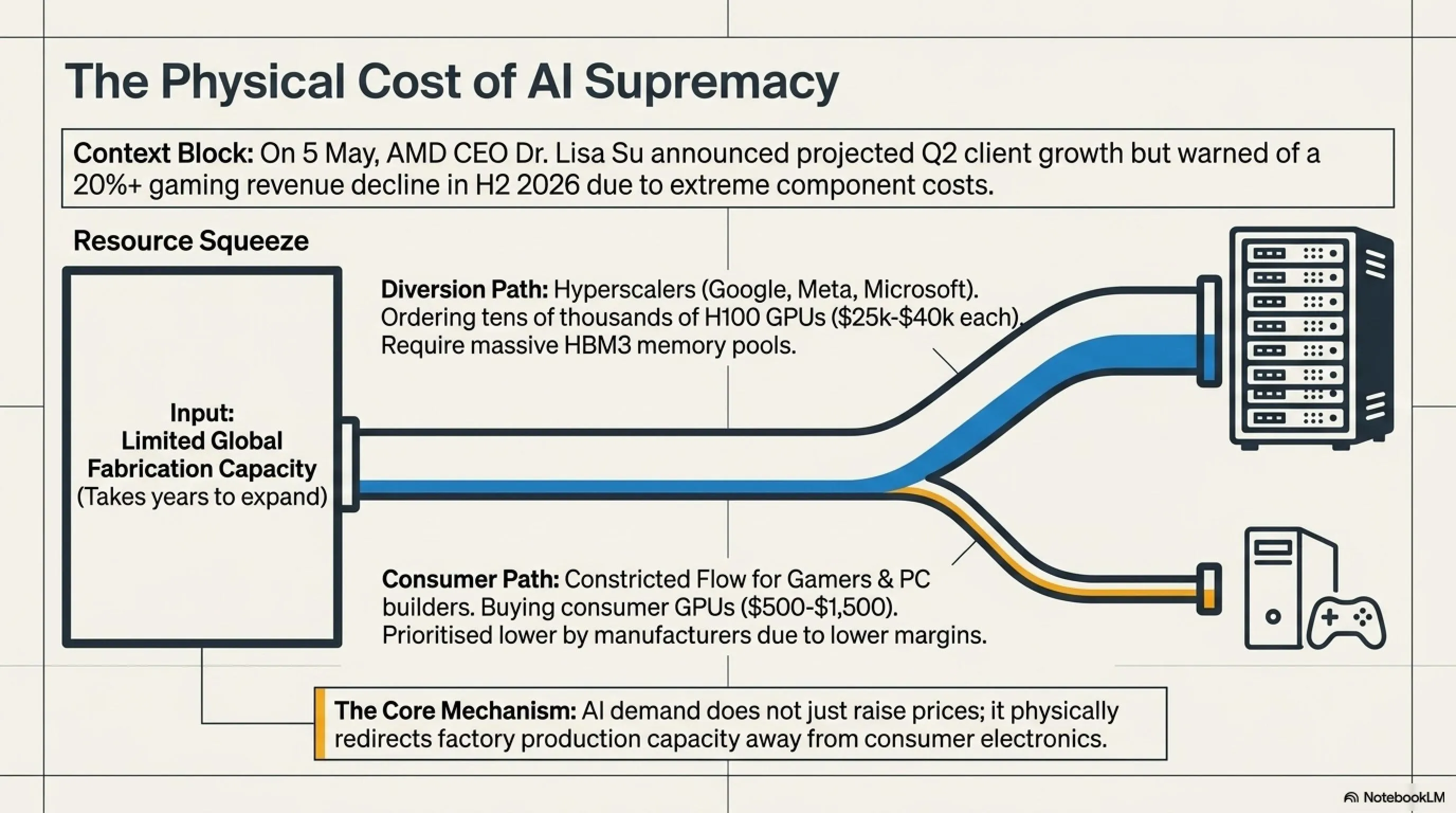

In a sobering announcement that sent shockwaves through the PC gaming community, AMD revealed in its Q1 2026 earnings call on May 5 that surging memory and component costs driven by AI demand will cause gaming hardware sales to decline by more than 20% in the second half of 2026. CEO Dr. Lisa Su and CFO Jean Hu painted a grim picture: the AI boom is creating a supply crunch that is pricing gamers out of the market.

📉 Lisa Su's Warning: The AI Tax on Gamers

"Looking ahead, we expect demand for our Ryzen CPUs to remain solid in the second quarter. However, we are planning for second-half PC shipments to be lower due to higher memory and component costs. Against this backdrop, we still expect our client revenue to grow year over year and outperform the market, driven by the strength of our Ryzen portfolio and expanding commercial adoption."

— Dr. Lisa Su, AMD CEO, Q1 2026 Earnings Call

CFO Jean Hu provided even more specific guidance on the gaming segment: "Sequentially, gaming revenue was down 15%, consistent with our expectations. In addition, as Lisa mentioned earlier, we expect the second-half demand in gaming to be impacted by higher memory and component costs. We now expect second-half gaming revenue to decline more than 20% compared to the first half."

🔍 Tekin Analysis: Why AI Is Pricing Gamers Out of the Market

The AI boom has created unprecedented demand for memory, from HBM to GDDR and DDR5, and for advanced semiconductors. Companies like Nvidia, Google, Microsoft, Amazon, and Meta are spending billions of dollars building AI datacenters, and they're willing to pay premium prices for components. Memory manufacturers like Samsung, SK Hynix, and Micron are prioritizing these high-margin AI customers over the consumer market.

The Economics: A single AI training cluster might require thousands of GPUs, each with 80GB+ of HBM3 memory. A single H100 GPU sells for $25,000-$40,000, and hyperscalers are ordering them by the tens of thousands. Compare that to a consumer gaming GPU at $500-$1,500. From a manufacturer's perspective, the choice is obvious: prioritize AI over gaming.
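To put the gap in perspective, here is a minimal sketch that turns the price ranges quoted above into a ratio. It compares list prices only, not margins or memory content per unit, and the function name is our own illustration.

```python
# Price ranges quoted in the paragraph above (USD, low end and high end).
H100_PRICE = (25_000, 40_000)
GAMING_GPU_PRICE = (500, 1_500)


def price_ratio_range(premium, consumer):
    """Return the (lowest, highest) ratio of premium price to consumer price."""
    lowest = premium[0] / consumer[1]   # cheapest H100 vs. priciest gaming GPU
    highest = premium[1] / consumer[0]  # priciest H100 vs. cheapest gaming GPU
    return lowest, highest


low, high = price_ratio_range(H100_PRICE, GAMING_GPU_PRICE)
print(f"One H100 sells for roughly {low:.0f}x to {high:.0f}x the price of a gaming GPU")
# prints: One H100 sells for roughly 17x to 80x the price of a gaming GPU
```

Even at the conservative end, a single datacenter GPU brings in as much revenue as about 17 high-end gaming cards, which is why scarce memory tends to flow toward AI products first.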

The Supply Chain Squeeze: Memory fabrication capacity is limited and takes years to expand. When AI demand surges, it doesn't just increase prices — it physically diverts production capacity away from consumer products. Gamers aren't just paying more; they're competing for a shrinking supply.

Market Reaction and Gamer Sentiment: The announcement triggered widespread concern in the gaming community. Many gamers who were planning system upgrades are now reconsidering, choosing to wait until prices stabilize. Some analysts predict this situation will persist through late 2026 or early 2027, as AI datacenter buildouts continue at a frantic pace.

💡 Practical Strategies for Gamers: Navigating the Memory Crisis

1. Wait if Possible: If your current system is still functional, consider waiting until Q4 2026 or Q1 2027 when prices may stabilize.

2. Consider the Used Market: Used GPUs and RAM can offer significant savings, but verify the condition and warranty status before you buy.

3. APU Solutions: AMD's Ryzen APUs with strong integrated graphics (like the Ryzen 7 8700G) offer surprisingly capable gaming performance at modest settings without a discrete GPU.

4. Console Alternative: PS5, Xbox Series X, or the Nintendo Switch 2 may offer better value during this period.

5. Cloud Gaming: Services like GeForce Now, Xbox Cloud Gaming, or PlayStation Plus Premium can provide high-end gaming without hardware investment.

6. Prioritize Upgrades: If you must upgrade, focus on the components that will provide the most performance improvement for your specific use case.

7. Monitor Sales: Retailers may offer periodic sales to clear inventory, providing temporary relief from high prices.

📊 The Broader Context: AI's Impact on Consumer Technology

The gaming hardware crisis is just one symptom of a larger phenomenon: the AI boom is fundamentally reshaping the technology supply chain, and consumer markets are bearing the cost. This isn't just about gaming — it affects smartphones, laptops, workstations, and any device that requires advanced semiconductors or high-performance memory.

The Long-Term Outlook: Some industry analysts believe this is a temporary imbalance that will correct as memory manufacturers expand production capacity. Others argue that AI demand will remain structurally higher than consumer demand, permanently elevating prices. The truth likely lies somewhere in between: prices will eventually stabilize, but at a higher baseline than pre-AI boom levels.

Innovation Pressure: Paradoxically, this crisis may accelerate innovation in memory technology and alternative architectures. Companies are exploring new memory technologies (like MRAM and ReRAM), more efficient architectures, and ways to do more with less. Necessity, as they say, is the mother of invention.

🎯 Final Thoughts: A Sunday of Crises, Consequences, and Choices

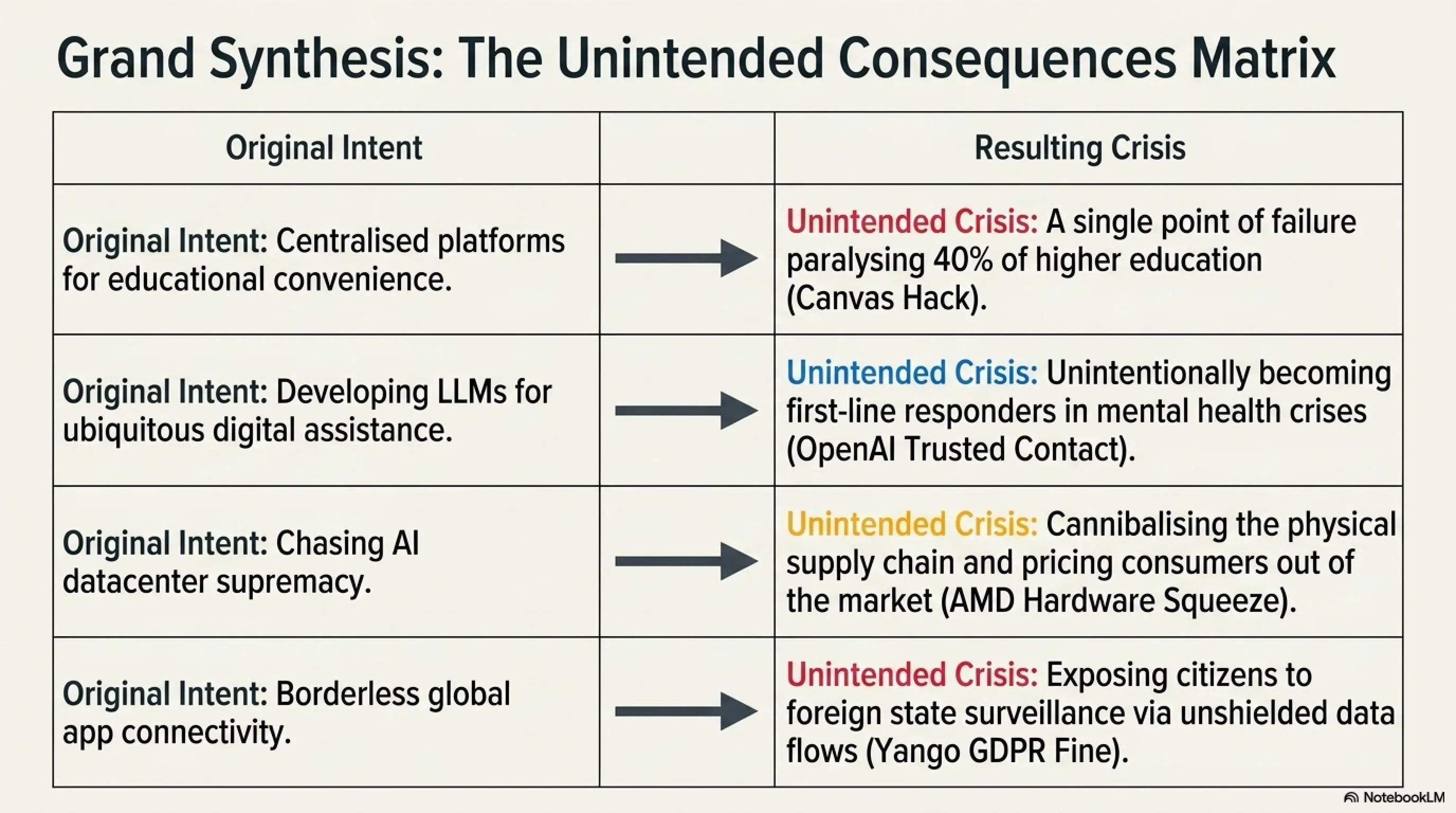

Sunday, May 10, 2026, will be remembered as a day that exposed the fragility of our digital infrastructure, the ethical complexities of AI safety, the high cost of privacy violations, the vulnerability of educational systems, the persistence of discrimination in tech, and the unintended consequences of the AI boom on consumer markets.

The Palo Alto Networks zero-day reminds us that even the most trusted security infrastructure can become a single point of catastrophic failure. OpenAI's Trusted Contact feature raises profound questions about the role of AI companies in mental health intervention. The Yango fine demonstrates that data sovereignty is not just a legal issue but a matter of national security. The Canvas attack shows how dependent we've become on centralized platforms and how devastating it is when they fail. Google's settlement exposes the tech industry's ongoing struggle with diversity and inclusion. And AMD's warning reveals how the AI boom is creating winners and losers, with gamers caught in the crossfire.

The Common Thread: All six stories share a common theme — the unintended consequences of our technological choices. We built centralized platforms for convenience, but created single points of failure. We developed AI for productivity, but now grapple with its role in mental health. We connected the world, but exposed ourselves to surveillance. We pursued innovation, but created new forms of inequality. We chased AI supremacy, but priced consumers out of the market.

What This Means for the Future: These stories are not isolated incidents — they're symptoms of deeper structural issues in how we build, deploy, and govern technology. As we move further into 2026 and beyond, we'll need to make fundamental choices about the kind of digital future we want to create. Do we want centralized platforms or distributed systems? Do we want AI companies making mental health decisions? Do we want data flowing freely or protected by borders? Do we want diverse tech companies or homogeneous ones? Do we want technology that serves everyone or just those who can afford it?

The answers to these questions will shape the next decade of technology. Today's crises are tomorrow's lessons — if we're willing to learn from them.

❓ Are Palo Alto firewalls safe to use right now?

If you're using Palo Alto Networks firewalls, you should immediately disable the Captive Portal (User-ID Authentication Portal) feature if you're not actively using it. Restrict management interface access to specific trusted IP addresses only. Enable comprehensive logging and monitor for suspicious activity. The patch will be released on May 13, 2026 — plan your patching strategy now. Until then, implement compensating controls and additional monitoring as defense-in-depth measures.
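As one way to act on the "restrict and monitor" advice, the sketch below checks source IPs (for example, pulled from management-interface access logs) against an allowlist of trusted admin networks. It uses only Python's standard `ipaddress` module. The networks and sample addresses are hypothetical placeholders, and it does not call any Palo Alto Networks API or parse any specific log format.

```python
import ipaddress

# Hypothetical allowlist: replace with your own trusted admin networks.
TRUSTED_NETWORKS = [
    ipaddress.ip_network("192.0.2.0/24"),
    ipaddress.ip_network("10.20.0.0/16"),
]


def is_trusted(source_ip: str) -> bool:
    """Return True if source_ip falls inside any trusted network."""
    addr = ipaddress.ip_address(source_ip)
    return any(addr in net for net in TRUSTED_NETWORKS)


def flag_untrusted(source_ips):
    """Return the source IPs that are outside every trusted network."""
    return [ip for ip in source_ips if not is_trusted(ip)]


# Hypothetical source addresses extracted from access logs.
sample = ["192.0.2.15", "203.0.113.77", "10.20.4.9"]
print(flag_untrusted(sample))
# prints: ['203.0.113.77']
```

Any address this flags is worth investigating. The allowlist itself still has to be enforced on the firewall's management interface, because a script like this only helps with review.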

❓ Should I use OpenAI's Trusted Contact feature?

The Trusted Contact feature is entirely optional and should be considered carefully. If you choose to use it, select someone who can respond appropriately to a mental health crisis. Be aware that conversations may be monitored by both AI systems and human reviewers, and false positives are possible. Most importantly, remember that ChatGPT is not a substitute for professional mental health care. If you're struggling with mental health issues, seek help from licensed professionals or contact a crisis service such as the 988 Suicide & Crisis Lifeline (call or text 988 in the US).

❓ How can I protect my data when using ride-hailing apps?

Always check the app's privacy policy to understand where your data is stored and whether it's transferred to other countries. Use apps that are transparent about data handling and comply with privacy standards like GDPR. Consider using apps from companies based in jurisdictions with strong data protection laws. Minimize the personal information you provide, and be cautious about apps that request excessive permissions. The Yango fine demonstrates that regulators are taking data protection seriously, but prevention is always better than enforcement.

❓ Was my data compromised in the Canvas attack?

If your university or school uses Canvas, some of your information (name, email, student ID, and messages between users) may have been compromised. Instructure has stated that the attack has been contained, but you should change your Canvas password immediately and be vigilant for phishing emails. If your institution was affected, you should receive official notification. Monitor your accounts for suspicious activity and consider enabling two-factor authentication where available.

❓ When will gaming hardware prices return to normal?

AMD's guidance suggests that the memory crisis will persist through at least the second half of 2026. Most analysts expect prices to begin stabilizing in Q4 2026 or Q1 2027, though they may not return to pre-AI boom levels. The timeline depends on how quickly memory manufacturers can expand production capacity and whether AI datacenter buildouts continue at the current pace. If you can wait, Q4 2026 or early 2027 may offer better value. In the meantime, consider alternatives like used hardware, APUs, consoles, or cloud gaming services.

📚 Sources and References

Primary Sources: Palo Alto Networks Security Advisory, CISA Known Exploited Vulnerabilities Catalog, Cybernews, The Hacker News, The Register, CSO Online, eSentire, OpenAI Official Blog, TechCrunch, The Verge, The Independent, Engadget, Dutch Data Protection Authority (Autoriteit Persoonsgegevens), Caliber.az, TVP World, NBC Chicago, CBS News, PBS NewsHour, Reuters, Wikipedia (2026 Canvas Security Incident), Stanford Daily, Cincinnati Enquirer, Ben Crump Law Firm, AP News, NBC Bay Area, Notebookcheck, Tom's Hardware, AMD Q1 2026 Earnings Call Transcript, DigiTimes, TwistedVoxel

Tekin Morning May 10, 2026 — Research and Analysis: Tekin Editorial Team
