In the May 11, 2026 Tekin Morning briefing, we dissect six explosive tech stories focusing on the convergence of technology and biology. We cover TotalEnergies' €100M investment in the Pangea 5 supercomputer, Princeton's 3D-MIND chip featuring 70,000 living neurons, and Samsung's One UI 8.5 Agentic AI update. We also analyze the Samsung Sensor OLED display for blood pressure, Cambridge's energy-saving memristors, and Leiden University's brainless 3D-printed microbots.

🌅 Welcome to Tekin Morning — Monday, May 11, 2026

Good morning, tech enthusiasts! Today, Monday, May 11, 2026, we're kicking off with six revolutionary stories from the world of technology. From next-generation supercomputers to living chips, from smart displays to microscopic robots — everything in this comprehensive briefing.

⚡ Today's Headlines:

🖥️ Pangea 5 Supercomputer: 6x Power with €100M Investment

🧠 Living Brain Chip: 70K Neurons on Princeton's Chip

📱 One UI 8.5: AI Revolution for Galaxy S25 & S24

❤️ Samsung Health Display: Heart Sensor on Screen

⚡ Cambridge Memristor: 70% AI Energy Reduction

🤖 Brainless Robot: Swimming & Navigation Without Control

☕ Grab your coffee and get ready for an exciting journey through the world of technology!

🖥️ Pangea 5 Supercomputer: TotalEnergies Multiplies Computing Power by 6x

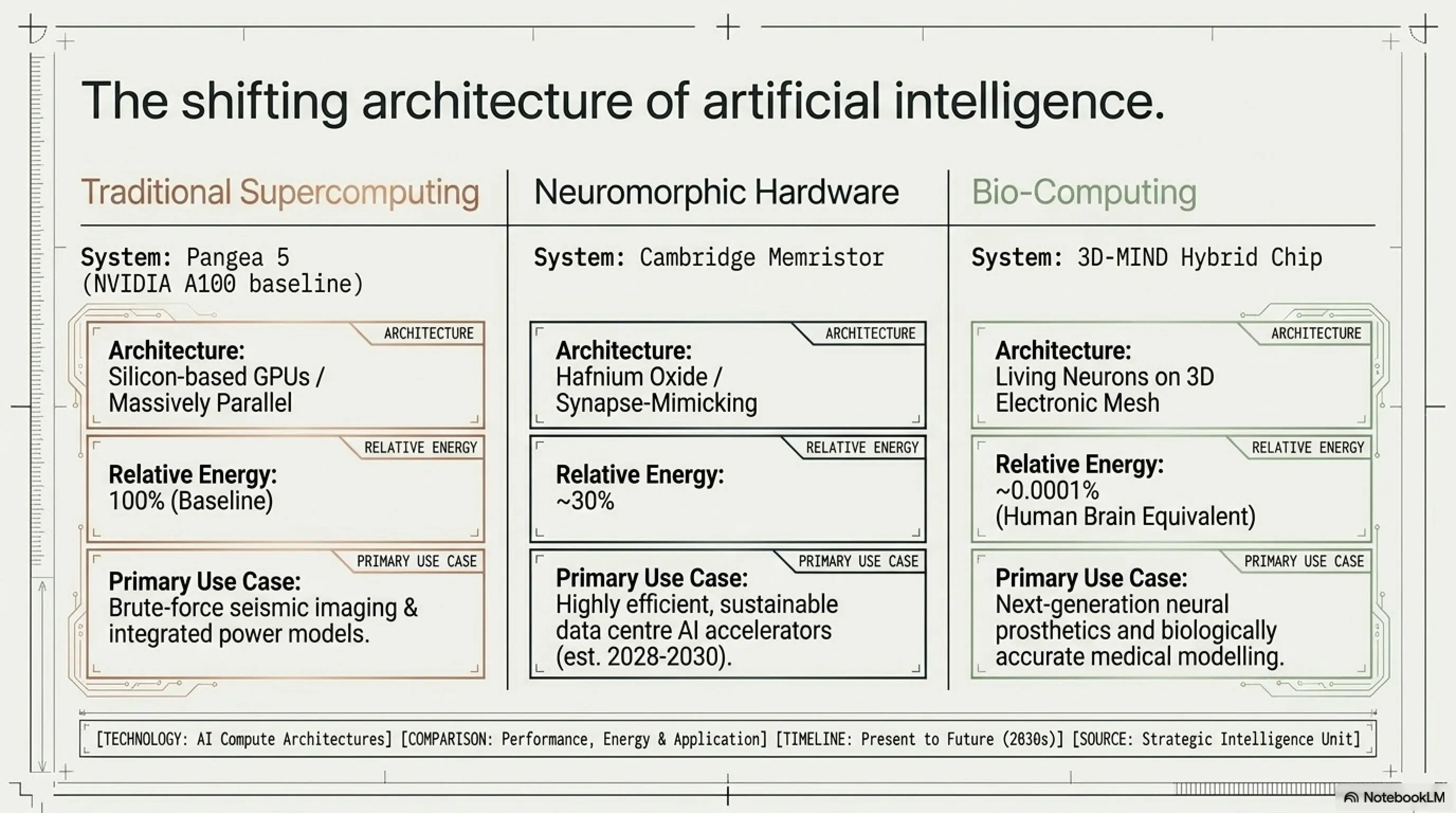

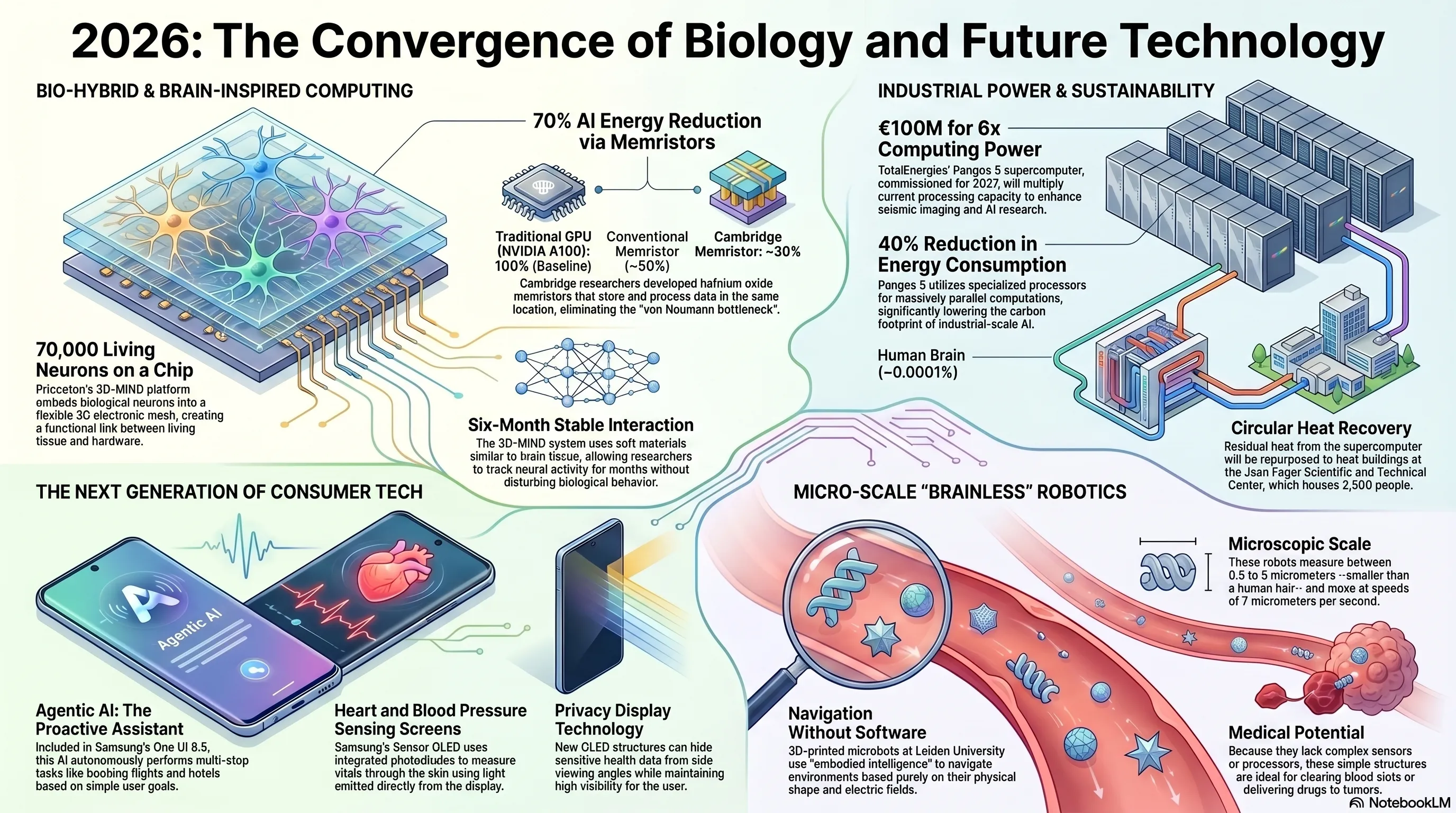

In one of the largest technology investments of 2026, French energy giant TotalEnergies, alongside Dell Technologies and NVIDIA, has signed a contract to design and install Pangea 5 — a next-generation supercomputer that will multiply the company's computing power by 6x. This project, with an investment exceeding €100 million, will be hosted at the Jean Féger Scientific and Technical Center (CSTJF) in Pau, southern France, and commissioned in 2027.

Pangea 5 is not just an ordinary supercomputer — it's a strategic platform for advancing TotalEnergies' sustainable energy goals. The system is designed for two primary objectives: first, expanding advanced seismic engineering to enhance subsurface imaging accuracy and accelerate exploration of low-cost, low-emission hydrocarbon resources; second, supporting AI research and development and growing digital needs to optimize computing times and deepen understanding of complex phenomena like integrated power models.

📊 Pangea 5 Technical Specifications

| Specification | Value |
|---|---|
| Computing Power | 6x previous generation |
| Investment | Over €100 million |
| Location | CSTJF Center, Pau, France |
| Launch Date | 2027 |
| Energy Reduction | 40% at equivalent performance |
| Cooling System Reduction | 5x lower consumption |

One of Pangea 5's standout features is its energy efficiency. The supercomputer leverages specialized processors optimized for massively parallel computations, reducing energy consumption by approximately 40% at equivalent performance levels. Additionally, its associated cooling system's consumption has been cut by a factor of five. The residual heat generated by the supercomputer will be recovered and used to help heat the CSTJF buildings, which host more than 2,500 people.
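As a rough back-of-the-envelope check on what these figures imply, the sketch below combines the sixfold compute increase with the roughly 40% energy saving at equivalent performance. It assumes, purely for illustration, that energy scales linearly with the amount of work performed and that the previous system's energy use is normalized to 1.0. Neither assumption comes from TotalEnergies, and the function name is hypothetical.

```python
def relative_energy(compute_multiplier: float, saving_at_equal_perf: float) -> float:
    """Energy relative to the old system, ASSUMING energy scales linearly with work."""
    energy_per_unit_work = 1.0 - saving_at_equal_perf
    return compute_multiplier * energy_per_unit_work


# Same workload as the previous system, run on the more efficient hardware.
same_workload = relative_energy(1.0, 0.40)

# Hypothetical case where the full sixfold capacity is used all the time.
full_capacity = relative_energy(6.0, 0.40)

print(f"Same workload as before: {same_workload:.1f}x the old energy")
print(f"Full 6x capacity in use: {full_capacity:.1f}x the old energy")
```

Under these assumptions, the efficiency gain lowers the energy needed per unit of work, but total consumption could still rise if the extra capacity is fully used, which is one reason the cooling improvements and heat recovery matter.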

Namita Shah, President of OneTech at TotalEnergies, stated: "Artificial intelligence and digital technology are strategic drivers of our energy transition. By increasing our computing power sixfold, we are strengthening our leadership in high-performance computing, ensuring that our expert teams continue to have the means to push the envelope to support the development of our activities and meet the growing global demand for energy."

🔍 Tekin Analysis: Why Pangea 5 Matters

Pangea 5 demonstrates that the energy industry is becoming one of the largest consumers of AI computing power. Energy exploration, climate modeling, and energy network optimization all require massive computations. This €100 million investment shows that TotalEnergies is building its own dedicated infrastructure rather than relying on public clouds — a strategy that ensures data security, control, and cost optimization. Moreover, the 40% energy reduction and heat recovery demonstrate that even supercomputers must be sustainable. This is particularly critical as the AI industry faces growing scrutiny over its carbon footprint and energy consumption.

The collaboration with NVIDIA and Dell Technologies also signals the use of cutting-edge GPU, CPU, and InfiniBand technologies. John Josephakis, Vice President of HPC & AI at NVIDIA, emphasized: "NVIDIA Compute, network and software platforms will provide Pangea 5 with exceptional parallel computing power, accelerating scientific workloads and opening new opportunities in artificial intelligence. With this choice of NVIDIA GPUs, CPUs and InfiniBand, TotalEnergies is adopting an architecture capable of meeting the most demanding industrial and energy challenges, both today and in the years to come."

Pangea 5 represents a turning point not only for TotalEnergies but for the entire energy industry. This project demonstrates that the future of energy exploration and production is heavily dependent on AI and high-performance computing. As many energy companies grapple with climate challenges and pressures to reduce carbon emissions, investing in efficient supercomputers could be the key to success in the coming decade. The fact that TotalEnergies is willing to invest over €100 million in a single supercomputer speaks volumes about the strategic importance of computational power in the energy transition.

For the global energy sector, Pangea 5 sets a new benchmark. Competitors like Shell, BP, and ExxonMobil are likely watching closely and may accelerate their own HPC investments. The race for computational supremacy in energy is heating up, and those who can process seismic data faster, model reservoirs more accurately, and optimize production more efficiently will have a significant competitive advantage. This is especially true as the industry shifts toward more complex operations like offshore wind, carbon capture, and hydrogen production — all of which require sophisticated modeling and simulation.

🧠 Hybrid Brain Chip: 70,000 Living Neurons on a Chip

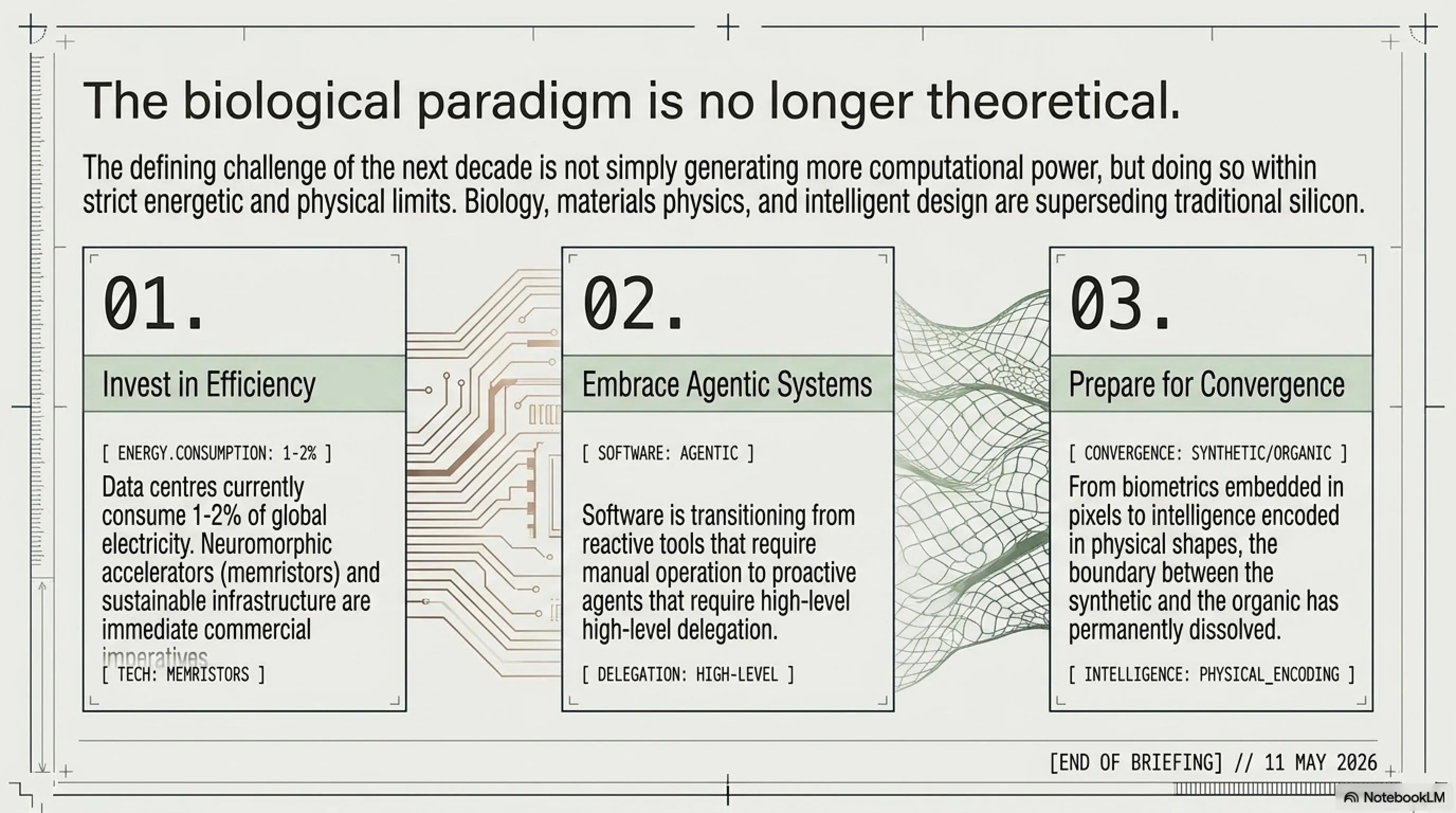

In one of the most astonishing scientific breakthroughs of 2026, researchers at Princeton University have developed a hybrid biocomputing platform called 3D-MIND (3D - Microelectronic Integrated Neuron Device) that combines living brain cells with flexible electronics. The device embeds approximately 70,000 biological neurons within a three-dimensional electronic scaffold designed to support communication between biological tissue and computing hardware.

This system marks a significant step toward closer integration between artificial intelligence and biological systems. Tian-Ming Fu, associated faculty at the Princeton Neuroscience Institute, stated: "The real bottleneck for AI in the near future is energy. Our brain consumes only a tiny fraction — about one millionth — of the power consumed by today's AI systems to perform similar tasks."

🔬 3D-MIND Architecture

The 3D-MIND device consists of a flexible three-dimensional electronic mesh that can be embedded inside lab-grown networks of living brain cells. The cells grow around and through the mesh, forming a stable connection between biological tissue and electronic components. Integrated sensors monitor the electrical activity of the neural network, while embedded stimulators can send signals back into the cells.

The chip features around 70,000 biological neurons networked on a 3D mesh with dozens of microscopic electrodes that can sense and manipulate the brain cells' activity. Unlike earlier systems that mainly interacted with cells at the surface of neural cultures, this new platform is designed to operate deep within three-dimensional neural structures, enabling direct monitoring and stimulation throughout the network.

The device's electronics are made from soft materials with mechanical properties similar to brain tissue, allowing it to remain integrated with living cells for extended periods without significantly disturbing their behavior. Researchers reported stable interaction tracking over six months — a remarkable achievement in bioelectronics.

The study also found that three-dimensional biological neural networks offer richer connectivity and greater computational potential than traditional flat two-dimensional cultures. The embedded interface enabled faster, more efficient stimulation and training of neural networks compared with conventional 2D systems. This suggests that 3D architectures are not just biologically more realistic but also computationally more powerful.

💡 Future Applications of 3D-MIND

- Brain-Inspired Computing: AI systems with dramatically lower energy consumption

- Medical Research: Modeling neurological diseases in controlled environments

- Drug Screening: More biologically accurate laboratory models for testing treatments

- Brain Development Studies: Understanding how neural circuits develop, adapt, and function

- Brain-Computer Interfaces: Next-generation neural prosthetics and assistive technologies

- Neuromorphic Computing: Hardware that truly mimics brain architecture and function

The development of 3D-MIND introduces a new method for directly linking electronic systems to three-dimensional networks of lab-grown brain cells. Researchers believe this approach could support the creation of future brain-inspired computing systems capable of operating with far lower energy consumption than many current AI platforms. The energy efficiency gains could be transformative — if we can build computers that consume one-millionth the power of current AI systems while maintaining similar performance, it would revolutionize everything from data centers to edge devices.

Beyond computing applications, the system could also serve as a research tool for studying how neural circuits develop, adapt, and function inside realistic three-dimensional environments. The platform may improve drug screening by providing more biologically accurate laboratory models and could help scientists investigate neurological disorders under controlled conditions. For example, researchers could create 3D-MIND systems with neurons from patients with Alzheimer's or Parkinson's disease, then test potential treatments in a living, functional neural network.

Future work will focus on refining the device to study brain development, model specific neurological diseases, and test experimental treatments. Researchers are also expanding the system by integrating additional sensors and electrodes to increase the complexity and capability of the neural interface. Efforts are underway to improve large-scale three-dimensional assembly techniques so the devices can be produced more consistently and at lower cost.

The ethical implications of 3D-MIND are also worth considering. While the neurons used are derived from stem cells and grown in a lab — not extracted from living brains — questions remain about the moral status of systems that combine biological neurons with electronic hardware. As these systems become more sophisticated, we may need to develop new ethical frameworks for working with hybrid biological-electronic intelligence. The Princeton team has emphasized that their work is purely for research and medical applications, not for creating conscious systems, but the line between tool and entity may become increasingly blurred as the technology advances.

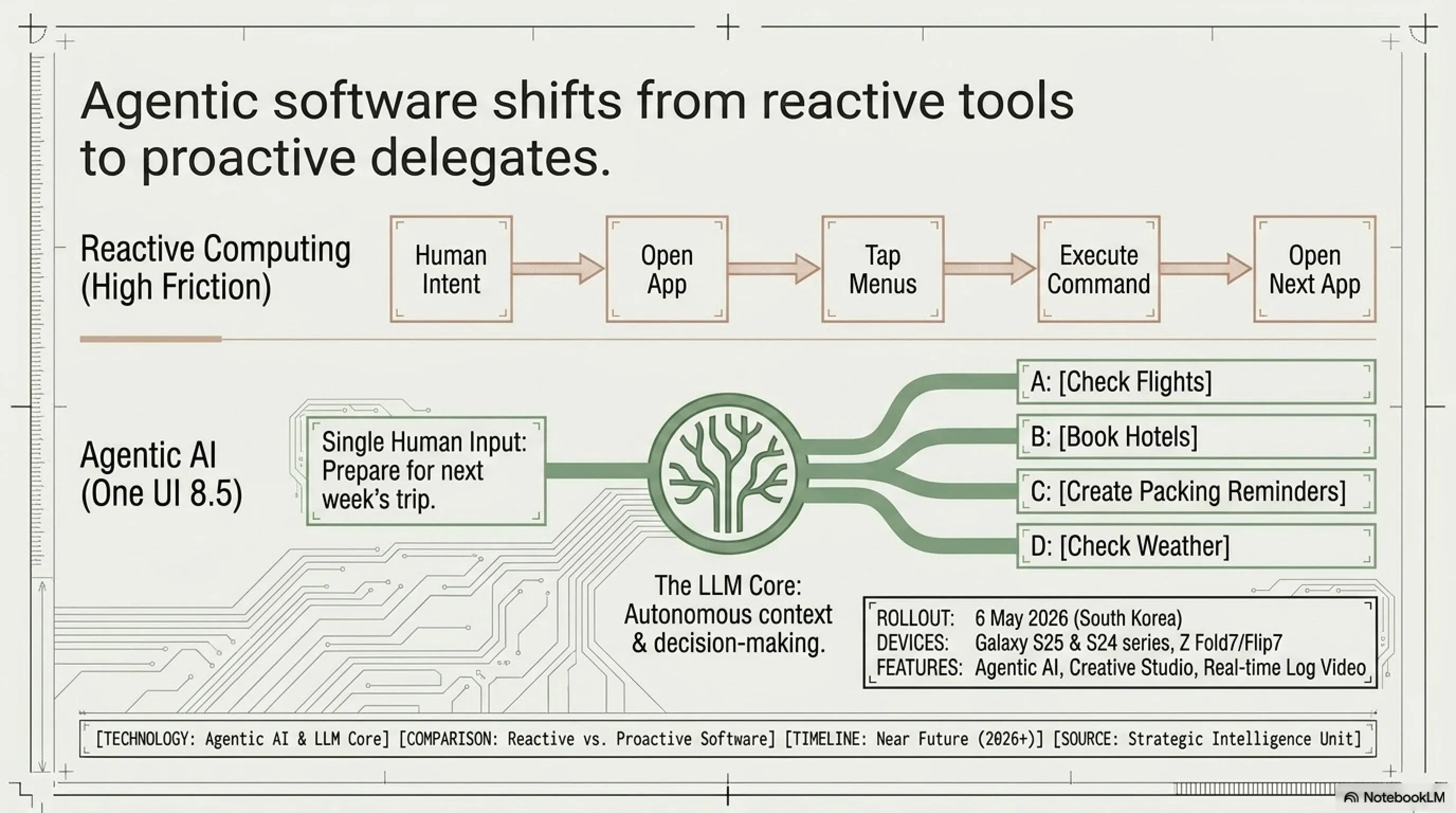

📱 Samsung One UI 8.5: AI Revolution for Galaxy S25 and S24

Samsung has officially released the stable One UI 8.5 update for the Galaxy S25 series after 10 beta releases. Built on Android 16, the update brings formerly exclusive Galaxy S26 features to the S25, including Agentic AI, Creative Studio, and real-time Log video previews.

One UI 8.5 was first released on May 6, 2026 in South Korea for the Galaxy S25 series, Galaxy Z Fold7, and Z Flip7, and is gradually expanding to other regions. The update focuses on enhancing communication and creative experiences on Galaxy mobile phones and tablets, including the Galaxy S25 series, Galaxy S25 FE, Galaxy S24 series, Galaxy S24 FE, Galaxy Z Fold7 and Galaxy Z Flip7, Galaxy Z Fold6 and Galaxy Z Flip6, Galaxy Tab S11 series, and Galaxy Tab S10 series.

🎨 Key Features of One UI 8.5

| Feature | Description |
|---|---|
| Agentic AI | Intelligent assistant that autonomously performs complex tasks |
| Creative Studio | AI-powered image and video editing tools |
| Log Video Preview | Real-time Log video preview for professional filmmakers |
| Blur Effects | New blur effects across various UI elements |
| Floating UI | New floating UI elements for better user experience |
| Personalization | Advanced personalization features |

One of the most prominent features of One UI 8.5 is Agentic AI, an intelligent assistant that can carry out complex tasks from a single stated goal rather than step-by-step commands. This technology uses large language models (LLMs) to understand context, make decisions, and execute multi-step actions. For example, if a user says "prepare for next week's trip," Agentic AI can check flights, book hotels, create packing reminders, and even check weather forecasts.

This represents a fundamental shift in how we interact with smartphones. Instead of manually opening apps, navigating menus, and executing individual commands, users can simply state their goals and let the AI figure out the implementation details. This is the promise of agentic systems — AI that acts as a proactive agent on your behalf rather than a reactive tool waiting for commands.
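To make the "state a goal, let the agent handle the steps" idea concrete, here is a minimal sketch of a goal-to-actions loop. It is not Samsung's implementation: every function and tool name below is hypothetical, the tools only return descriptive strings, and the planner is a hard-coded stub where a real agent would ask an LLM to choose the steps.

```python
from typing import Callable


# Hypothetical stand-ins for real services; each just returns a description.
def check_flights(goal: str) -> str:
    return f"flight options checked for {goal}"


def book_hotel(goal: str) -> str:
    return f"hotel booked for {goal}"


def create_packing_reminder(goal: str) -> str:
    return f"packing reminder created for {goal}"


def check_weather(goal: str) -> str:
    return f"weather forecast checked for {goal}"


TOOLS: dict[str, Callable[[str], str]] = {
    "check_flights": check_flights,
    "book_hotel": book_hotel,
    "create_packing_reminder": create_packing_reminder,
    "check_weather": check_weather,
}


def plan(goal: str) -> list[str]:
    """Stub planner (assumption): a real agent would have an LLM pick the steps."""
    if "trip" in goal.lower():
        return ["check_flights", "book_hotel", "create_packing_reminder", "check_weather"]
    return []


def run_agent(goal: str) -> list[str]:
    """Run each planned step in order and collect the results."""
    return [TOOLS[step](goal) for step in plan(goal)]


for result in run_agent("next week's trip"):
    print("-", result)
```

The design point is the separation between planning (deciding which steps serve the goal) and execution (calling tools in order); the user supplies only the goal.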

Creative Studio is another powerful feature of One UI 8.5, offering AI-powered image and video editing tools. The tool can automatically remove backgrounds, add cinematic effects, optimize color grading, and even reconstruct missing content using AI. For professional filmmakers, the real-time Log video preview is a game-changer: Log footage is recorded with a flat, logarithmic tone curve that preserves dynamic range for later color grading, and this feature lets users preview it directly in the camera and optimize settings before recording.

📊 One UI 8.5 Rollout Statistics

Beta Releases: 10 versions

Stable Release Date: May 6, 2026 (South Korea)

Supported Devices: Galaxy S25, S24, S25 FE, S24 FE, Z Fold7, Z Flip7, Z Fold6, Z Flip6, Tab S11, Tab S10

Android Base: Android 16

Download Size: Approximately 2.5 GB (varies by device)

Global Rollout: Gradual expansion over 2-4 weeks

One UI 8.5 also adds new blur effects to various parts of the user interface, introduces new floating UI elements for a better user experience, and brings advanced personalization features. The overall UI design has been refreshed and is now more modern, fluid, and consistent with Material Design 3 standards. Samsung has clearly been paying attention to user feedback from the beta program, as many of the refinements address specific pain points raised by testers.

For users in the United States, Europe, and Asia, One UI 8.5 is excellent news — Samsung has committed to gradually expanding this update to all regions. Given that the Galaxy S25 and S24 series are extremely popular globally, this update can significantly improve the user experience. To receive the update, go to Settings > Software Update > Download and Install.

The competitive implications of One UI 8.5 are significant. Apple's next iOS release is expected later this year with its own AI features, and Google's Pixel devices already have advanced AI capabilities. Samsung's aggressive push with Agentic AI and Creative Studio shows that the company is not content to follow; it wants to lead in the AI smartphone race. The fact that Samsung is bringing S26 features down to the S25 and even S24 series demonstrates a commitment to keeping existing users happy and reducing the pressure to upgrade annually.

❤️ Samsung Health Display: Heart Rate and Blood Pressure Sensor on Screen

At Display Week 2026 held in Los Angeles, Samsung Display unveiled Sensor OLED technology — a revolutionary display that integrates health monitoring and privacy features directly into the panel structure. This display can measure biometric metrics such as heart rate and blood pressure using light emitted by the screen, without requiring external sensors.

Despite the organic photodiodes (OPDs) integrated directly into the panel, the display still reaches 500 pixels per inch (PPI), comparable to flagship smartphones. Embedding sensors into a display without compromising pixel density or image quality is a remarkable engineering achievement.

💓 How Sensor OLED Works

Sensor OLED technology uses organic photodiodes (OPDs) embedded directly into the OLED layers. These photodiodes can detect light reflected from the user's skin and analyze blood flow patterns. This process is similar to photoplethysmography (PPG) used in smartwatches, but here it's integrated directly into the phone's display.

To measure heart rate, the user simply places their finger on the screen. The display emits green light, which is absorbed by blood, and the photodiodes detect the reflected light. Changes in the intensity of reflected light indicate the heartbeat. For blood pressure, more sophisticated algorithms are required that analyze the PPG waveform and estimate systolic and diastolic pressure. This typically requires initial calibration with a standard blood pressure cuff.
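The heart-rate step can be illustrated with a small sketch. It generates an idealized reflected-light signal (one sine cycle per beat) and estimates the rate from the spacing between peaks. This is a simplified illustration under stated assumptions, not Samsung's algorithm: real PPG signals are noisy and need filtering, and the function names, sample rate, and signal shape are all made up for the example.

```python
import math


def synthetic_ppg(bpm: float, sample_rate_hz: int, seconds: int) -> list[float]:
    """Idealized reflected-light signal: one sine cycle per heartbeat (assumption)."""
    beat_hz = bpm / 60.0
    n_samples = sample_rate_hz * seconds
    return [
        math.sin(2 * math.pi * beat_hz * i / sample_rate_hz)
        for i in range(n_samples)
    ]


def estimate_bpm(signal: list[float], sample_rate_hz: int) -> float:
    """Estimate heart rate from the average spacing between local maxima."""
    peaks = [
        i
        for i in range(1, len(signal) - 1)
        if signal[i - 1] < signal[i] >= signal[i + 1] and signal[i] > 0
    ]
    if len(peaks) < 2:
        raise ValueError("need at least two beats to estimate a rate")
    mean_interval_s = (peaks[-1] - peaks[0]) / (len(peaks) - 1) / sample_rate_hz
    return 60.0 / mean_interval_s


signal = synthetic_ppg(bpm=72, sample_rate_hz=100, seconds=10)
print(f"Estimated heart rate: {estimate_bpm(signal, 100):.0f} bpm")
```

Blood pressure estimation is considerably harder than this: it depends on waveform shape rather than peak spacing alone, which is why calibration against a cuff is typically needed.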

One of the key innovations of Sensor OLED is the integration of Privacy Display technology. This feature can hide sensitive data from side angles while keeping the rest of the screen visible. This is extremely useful for users concerned about their privacy in public places. For example, if you're checking your health results on the subway, Privacy Display can ensure that people around you cannot see your information. The technology uses directional light control to create viewing zones — content in the "private" zone is only visible from directly in front of the screen.

🏥 Clinical Applications and Limitations

Advantages:

- On-demand health checks without a separate device

- Ease of use — just place your finger on the screen

- Integration with health apps like Samsung Health

- Privacy protected with Privacy Display technology

- No need to carry additional wearables

- Potential for early detection of cardiovascular issues

Limitations:

- Blood pressure measurement accuracy still requires clinical validation

- Cannot replace FDA-approved medical devices

- May have reduced accuracy in bright light or with wet hands

- Requires initial calibration with a standard blood pressure cuff

- Not suitable for continuous monitoring like dedicated wearables

- May consume additional battery power during measurements

Samsung also introduced the Flex Chroma Pixel display, which reaches 3,000 nits of brightness — double the brightness of current top iPhones. This display is designed for outdoor use and remains readable even in direct sunlight. The combination of Sensor OLED with Flex Chroma Pixel could define the next generation of Galaxy smartphones, offering both health monitoring and exceptional outdoor visibility.

The implications for healthcare are profound. According to the CDC, nearly half of all adults in the United States suffer from high blood pressure, and many are unaware of their condition. A smartphone display that can measure blood pressure could enable more frequent monitoring and earlier detection of hypertension. While it won't replace medical-grade devices, it could serve as a valuable screening tool and encourage users to seek professional care when abnormalities are detected.

For global markets, including developing countries where access to healthcare is limited, this technology could be transformative. A smartphone that can measure vital signs could bring basic health monitoring to billions of people who lack access to traditional medical devices. Of course, this technology is still in the prototype stage and likely won't appear in commercial products until 2027 or 2028, but the potential is enormous.

The competitive landscape is also heating up. Apple has been rumored to be working on similar health monitoring features for future iPhones, and Google's Pixel devices already have some health-tracking capabilities. Samsung's public demonstration of Sensor OLED at Display Week 2026 is a clear signal that the company intends to lead in this space. The race to turn smartphones into comprehensive health monitoring devices is accelerating, and consumers will be the ultimate winners.

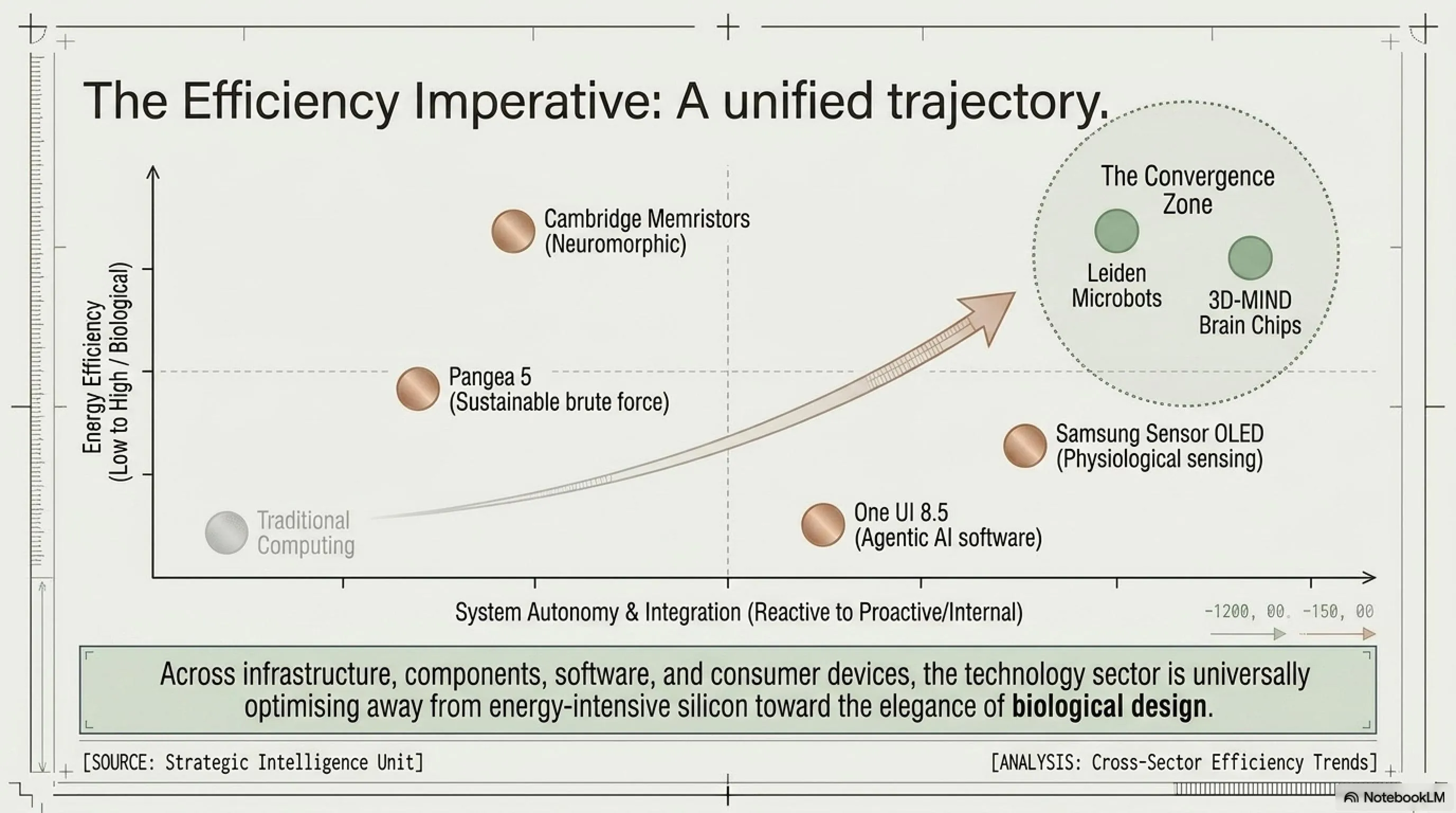

⚡ Cambridge Memristor: 70% Reduction in AI Energy Consumption

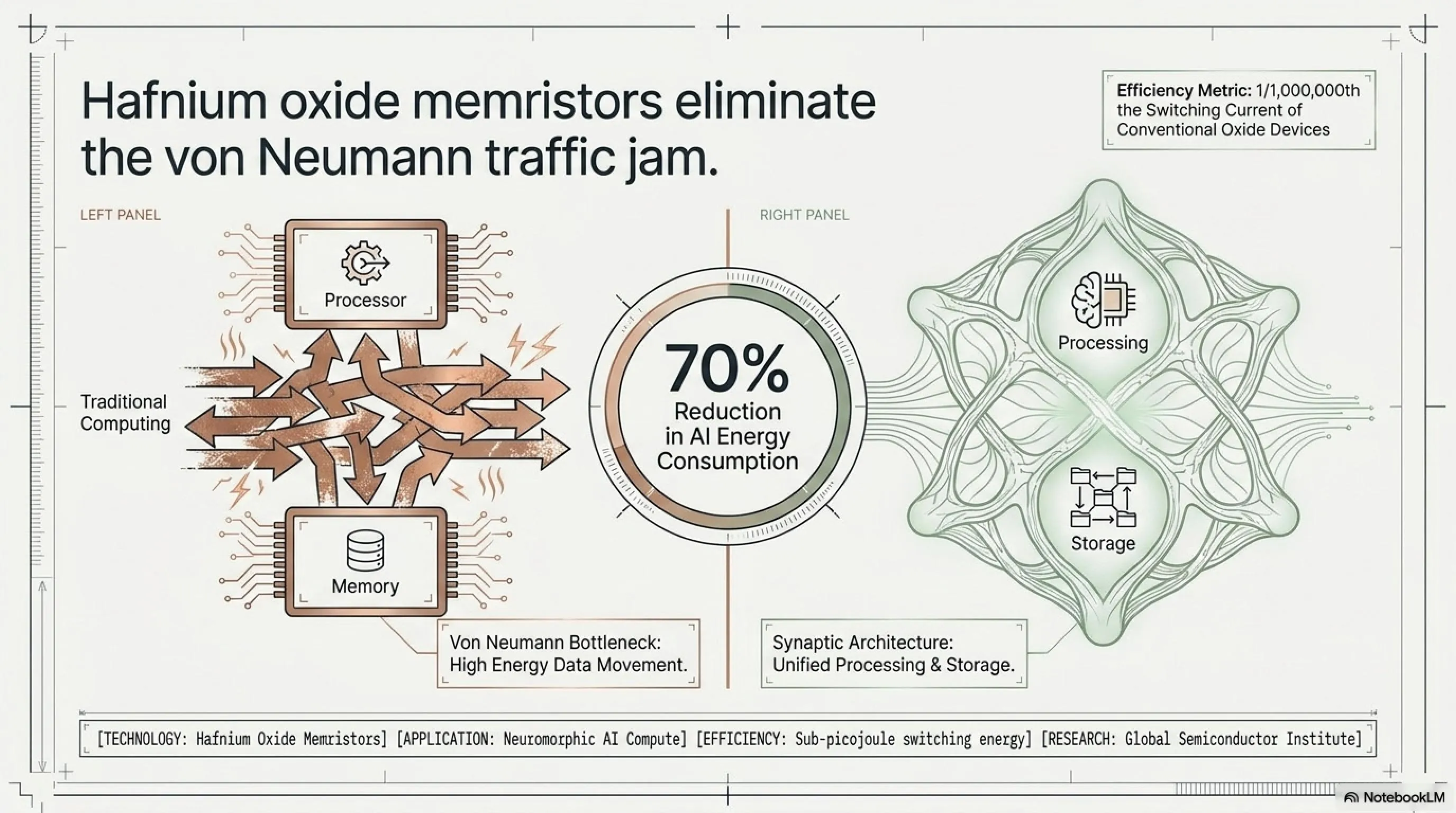

Researchers at the University of Cambridge have developed a new type of nanoelectronic device that could dramatically cut the energy consumed by artificial intelligence hardware — by up to 70% — by mimicking the human brain. This device, called a memristor (memory resistor), is a component designed to replicate the efficient way neurons are connected and communicate in the brain.

The research team, led by the University of Cambridge, developed a modified version of hafnium oxide that functions as a highly stable, low-energy memristor. Their findings were published in the journal Science Advances. This breakthrough could potentially address one of the most pressing challenges facing the AI industry: the massive energy consumption of training and running large models.

🔋 Why Memristors Are Revolutionary

In traditional computers, data shuttles back and forth between memory and processor. This constant movement consumes enormous energy and limits speed, a constraint known as the von Neumann bottleneck. Memristors address this problem by storing and processing information in the same place, much like neurons in the brain. This avoids the energy-intensive data movement that dominates conventional computing.
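A common way to picture "storing and processing in the same place" is a memristor crossbar, where each device's conductance acts as a stored weight. Applying voltages to the rows produces column currents that, by Ohm's law and Kirchhoff's current law, equal a matrix-vector product. The sketch below simulates an idealized crossbar; it ignores wire resistance, noise, and device nonlinearity, the values are illustrative, and it does not model the Cambridge device specifically.

```python
def crossbar_multiply(conductances: list[list[float]], voltages: list[float]) -> list[float]:
    """Column currents of an idealized crossbar: I_j = sum_i V_i * G[i][j]."""
    n_rows, n_cols = len(conductances), len(conductances[0])
    if len(voltages) != n_rows:
        raise ValueError("one input voltage is needed per row")
    return [
        sum(voltages[i] * conductances[i][j] for i in range(n_rows))
        for j in range(n_cols)
    ]


# Illustrative values only: conductances (in siemens) play the role of weights.
G = [
    [1.0e-6, 2.0e-6],
    [3.0e-6, 4.0e-6],
    [5.0e-6, 6.0e-6],
]
V = [0.1, 0.2, 0.3]  # input voltages in volts, one per row

currents = crossbar_multiply(G, V)
print([f"{amps:.2e} A" for amps in currents])
```

In hardware, the summation happens physically as currents merge on each column wire, so the weights never have to be fetched from a separate memory. The loop above only emulates that behavior in software.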

The Cambridge memristor operates with switching currents about one millionth of those in conventional oxide-based devices. This means a dramatic reduction in energy consumption — up to 70% in some AI workloads. The device is also highly stable and can withstand millions of switching cycles without degradation, addressing one of the key reliability concerns with earlier memristor designs.

Hafnium oxide is a material that has long been used in electronics, but Cambridge researchers have transformed it into a stable, low-energy switching device. This innovation could potentially drastically reduce the energy cost of running artificial intelligence workloads. The key breakthrough was discovering a specific crystalline structure of hafnium oxide that exhibits reliable memristive behavior with extremely low switching currents.

One of the major challenges of modern AI is its enormous energy consumption. Large language models like GPT-4 or Claude require massive data centers that consume megawatts of electricity. Training a single large AI model can emit as much carbon as five cars over their entire lifetimes. Cambridge memristors could dramatically reduce this consumption, making AI more sustainable and cost-effective. If widely adopted, this technology could reduce the carbon footprint of the AI industry by millions of tons annually.

📊 Energy Consumption Comparison

| System | Energy Consumption (Relative) |
|---|---|
| Traditional GPU (NVIDIA A100) | 100% (baseline) |
| Conventional Memristor | ~50% |
| Cambridge Memristor | ~30% |
| Human Brain | ~0.0001% |

Of course, Cambridge memristors are still in the research stage and will take years to become commercial products. But this technology demonstrates that the future of AI lies in the convergence of biology, materials physics, and intelligent design. If we can build chips that work like the brain, we can make AI both more powerful and more sustainable. The challenge now is scaling up production and integrating memristors with existing computing architectures.

Several companies and research institutions are racing to commercialize memristor technology. Intel, IBM, and HP have all invested in memristor research, and startups like Mythic and Crossbar are developing commercial products. The Cambridge breakthrough could accelerate this timeline by providing a more stable and energy-efficient design. Industry analysts predict that memristor-based AI accelerators could begin appearing in data centers by 2028-2030, with consumer devices following a few years later.

The economic implications are also significant. Data centers currently consume about 1-2% of global electricity, and AI workloads are growing exponentially. If memristors can reduce AI energy consumption by 70%, the cost savings for companies like Google, Microsoft, and Amazon could be in the billions of dollars annually. This could also make AI more accessible to smaller companies and developing countries that lack the infrastructure for massive data centers.

🤖 Brainless Microbot: Swimming and Navigation Without Control

Researchers at Leiden University in the Netherlands have created 3D-printed microbots that are only a few tens of micrometers long, far smaller than the width of a human hair, yet can swim, sense, navigate, and adapt in ways that look surprisingly life-like, all without a brain, sensors, or software.

The work, published in the journal PNAS on March 27, 2026, describes robots made using 3D microprinting and set into motion by an alternating-current electric field. The structures carry no sensors, no software, and no central controller; they use only their physical shape and the environment to move and navigate.

🔬 How Brainless Robots Work

These robots use the principle of embodied intelligence — the idea that intelligence exists not only in the brain but also in the shape and interaction of the body with the environment. Leiden robots are made from flexible structures that can respond to electric fields and deform themselves.

When an alternating electric field is applied, different parts of the robot respond in different ways — some contract, some expand. These shape changes result in movement. The robot's shape also determines how it interacts with obstacles and how it changes its path. In other words, the robot's physical shape is its brain. This is a radical departure from conventional robotics, which relies on sensors, processors, and control algorithms.

These robots measure between 0.5 and 5 micrometers and can move at speeds of 7 micrometers per second. They can avoid obstacles, navigate narrow channels, and even respond to environmental changes, all without any sensors or software. The key insight is that intelligence can be encoded in physical structure rather than computational algorithms. This principle, known as morphological computation, has profound implications for robotics, materials science, and even our understanding of intelligence itself.

🏥 Potential Applications

- Medicine: Smart pills that can navigate the body and deliver drugs to specific locations

- Microscopic Surgery: Robots that can move through blood vessels and clear clots

- Biological Research: Tools for studying cells and tissues at microscopic scales

- Space Technology: Small, lightweight robots for exploring hazardous environments

- Environmental Cleanup: Robots that can collect microscopic pollutants

- Manufacturing: Micro-assembly of nanoscale devices and materials

- Diagnostics: In-body sensors that can navigate to disease sites

These robots demonstrate that intelligence doesn't necessarily require a complex brain. Sometimes, intelligent design can replace complex computation. This principle could be applied in designing the next generation of robots, medical devices, and even AI systems. The concept of embodied intelligence challenges our traditional understanding of what it means to be "smart" and opens new avenues for creating adaptive, efficient systems.

For medicine, this technology could have important applications in the future. Imagine pills that can automatically navigate to tumors and deliver drugs directly to them, or robots that can move through blood vessels and clear clots. Of course, these technologies are still years away from us, but Leiden's research shows that the future of medicine is at the microscopic scale. The challenge now is scaling up production, ensuring biocompatibility, and developing methods to control and track these microbots inside the human body.

The manufacturing process for these microbots is also noteworthy. Using two-photon polymerization — a 3D printing technique that can create structures at the nanoscale — researchers can fabricate complex shapes with precise control. This opens the door to mass production of customized microbots for different applications. As the technology matures, we could see libraries of microbot designs optimized for specific tasks, from drug delivery to environmental sensing.

The broader implications for robotics are equally exciting. If we can create functional robots without onboard sensors, processors, or power sources, we can dramatically reduce cost, complexity, and failure modes. This could enable swarms of simple robots that collectively perform complex tasks, a concept known as swarm robotics. Nature provides many examples of this, from ant colonies to bird flocks, and the Leiden microbots show that we can engineer similar capabilities at the microscopic scale.

🎯 Final Thoughts: The Future of Technology in Convergence with Biology

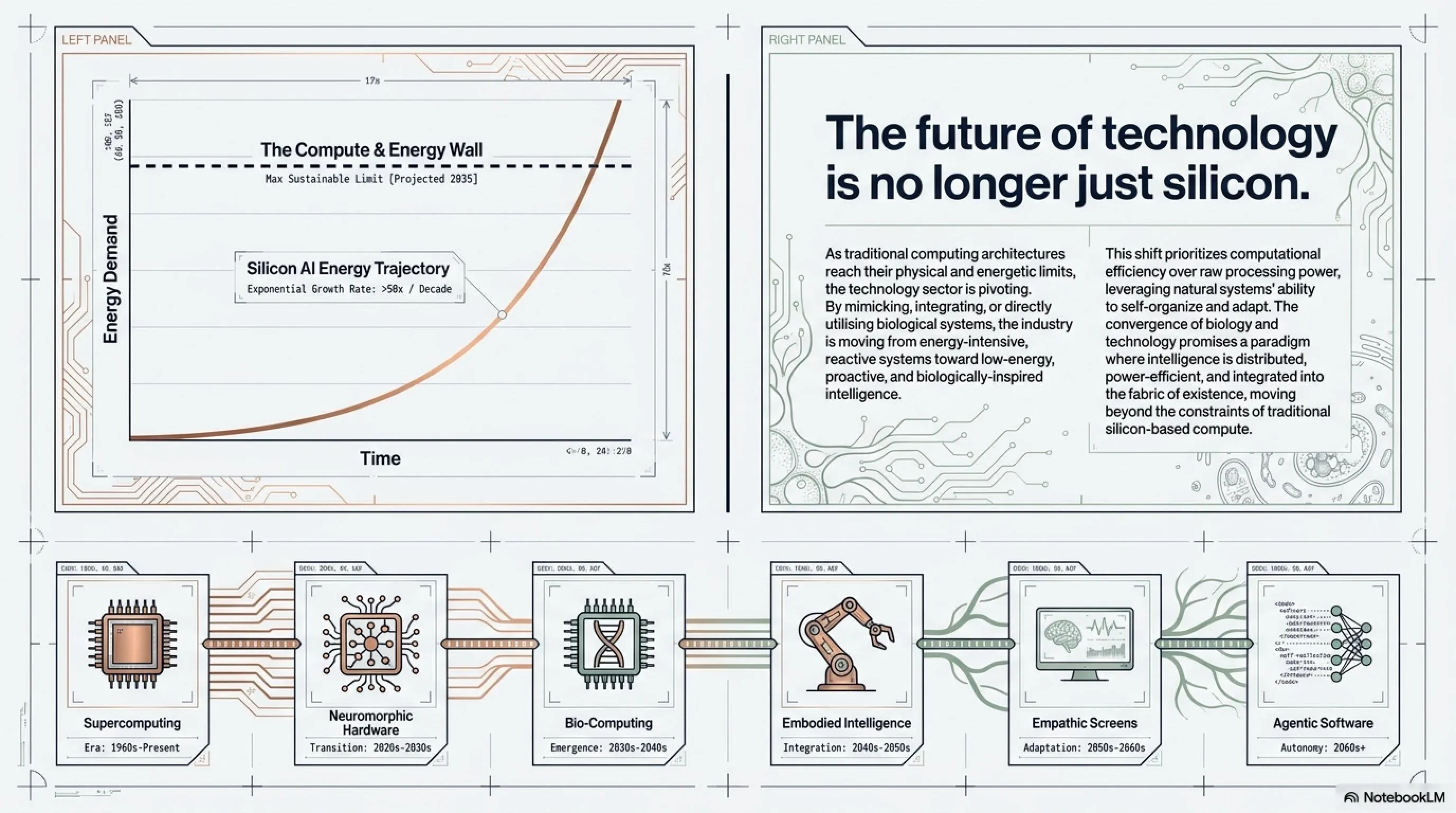

Today's six stories reveal a common trend: the convergence of technology with biology. From the Pangea 5 supercomputer designed for sustainable energy exploration, to hybrid chips with living neurons, from smart displays that monitor health to memristors that work like the brain — all these technologies are moving toward one goal: greater efficiency, lower energy consumption, and deeper integration with human life.

This convergence is not accidental. As we push the limits of silicon-based computing, we're discovering that nature has already solved many of the problems we're trying to tackle. The human brain processes information with remarkable efficiency, biological systems self-repair and adapt, and living organisms navigate complex environments without sophisticated sensors or processors. By learning from biology and integrating biological components into our technology, we can create systems that are more powerful, more efficient, and more sustainable.

For the United States, Europe, and Asia, these trends bring both opportunities and challenges. Opportunities include access to advanced health technologies, reduced AI energy costs, and the possibility of breakthrough research in emerging fields. Challenges include the need for investment in infrastructure, training specialized human resources, and navigating the ethical implications of hybrid biological-electronic systems.

But one thing is clear: the future of technology is no longer just silicon — biology, materials physics, and intelligent design will all play key roles. Those who can combine these disciplines will be the leaders of the next generation of technology. The companies and countries that invest in bio-hybrid systems, neuromorphic computing, and sustainable AI will have a significant competitive advantage in the coming decades.

🌍 Global Implications and Strategic Considerations

The technologies discussed today have profound geopolitical implications. Countries that lead in bio-hybrid computing, energy-efficient AI, and health monitoring technologies will have significant economic and strategic advantages. The race is already underway:

- United States: Leading in AI research and bioelectronics (Princeton 3D-MIND)

- Europe: Strong in sustainable technology and energy efficiency (TotalEnergies Pangea 5, Cambridge memristors, Leiden microbots)

- Asia: Dominating consumer electronics and display technology (Samsung Sensor OLED, One UI 8.5)

- China: Investing heavily in neuromorphic computing and bio-inspired AI

The convergence of these technologies will reshape industries, create new markets, and potentially disrupt existing power structures. Companies and countries that fail to adapt risk being left behind in the next technological revolution.

❓ Frequently Asked Questions (FAQ)

Why is the Pangea 5 supercomputer important for the energy industry?

Pangea 5 multiplies TotalEnergies' computing power by 6x, enabling more advanced seismic imaging, more accurate modeling of energy resources, and better optimization of energy networks. The supercomputer also reduces energy consumption by 40% and recovers waste heat — demonstrating a commitment to sustainability. For an energy industry facing climate challenges and pressure to reduce carbon emissions, such investments are critical. Moreover, the €100 million investment signals that TotalEnergies is building its own dedicated infrastructure rather than relying on public clouds, ensuring data security, control, and cost optimization.

Are hybrid chips with living cells safe?

Hybrid chips like 3D-MIND use human brain cells grown from stem cells in a laboratory — not from actual brain tissue. These cells are maintained in a controlled, sterile environment and pose no biological risk to users. Additionally, these devices are currently only used for scientific research and will take years to become commercial products. Before public release, they must pass rigorous safety standards. The ethical considerations are more complex than the safety concerns — questions about the moral status of systems combining biological neurons with electronics will require new ethical frameworks as the technology advances.

When will One UI 8.5 be available for users in North America and Europe?

Samsung released One UI 8.5 first in South Korea on May 6, 2026, and is gradually expanding to other regions. Based on previous patterns, the update is expected to reach North America and Europe within 2-4 weeks. To check for update availability, go to Settings > Software Update > Download and Install. Note that rollout may vary depending on device model, carrier, and region. Unlocked devices typically receive updates faster than carrier-locked devices.

Can Samsung's Sensor OLED display replace medical devices?

No, the Sensor OLED display cannot replace medical devices cleared by the FDA or equivalent regulators. This technology is designed for general health monitoring, not clinical diagnosis. If you're concerned about blood pressure or heart problems, you should use standard medical devices and consult a physician. However, these displays can be useful as a complementary tool for tracking health trends over time and for prompting users to seek professional care when abnormalities are detected. Think of it as an early-warning tool, not a diagnostic device.

When will Cambridge memristors appear in commercial products?

Cambridge memristors are still in the research stage and will likely take 5-10 years to become commercial products. Key challenges include mass production, integration with existing architectures, and ensuring long-term reliability. However, if this technology succeeds, it could dramatically reduce AI data center energy consumption and lower operational costs. Some industry analysts are more optimistic and predict that memristor-based AI accelerators could begin appearing in data centers by 2028-2030, with consumer devices following a few years later. The economic incentives are enormous: data centers currently consume 1-2% of global electricity, and AI workloads are growing rapidly.

What are the potential medical applications of brainless microbots?

Brainless microbots could change medicine in several ways: targeted drug delivery (navigating to tumors and releasing medication), minimally invasive surgery (clearing blood clots or removing blockages), diagnostics (sensing disease markers in hard-to-reach areas), and tissue repair (delivering stem cells or growth factors to damaged tissues). Their key advantage is simplicity: without sensors, processors, or power sources, they are less likely to fail and easier to manufacture. However, significant challenges remain, including biocompatibility, tracking inside the body, and regulatory approval. The first clinical trials could begin in the early 2030s.

📚 Sources

Primary Sources:

TotalEnergies Press Release (May 6, 2026), Dell Technologies, NVIDIA, Interesting Engineering, Princeton University Materials Research, Science Advances Journal, Samsung Display Week 2026, GSMArena, Android Authority, SamMobile, Forbes, University of Cambridge Press Release, Science Advances (Memristor Research), Leiden University PNAS Publication (March 27, 2026), Tom's Hardware, TechXplore, Business Wire, Financial Times, Yahoo Finance, TechRepublic, Gadgets 360

Tekin Morning May 11, 2026 — Research and Analysis: Tekin Editorial Team
