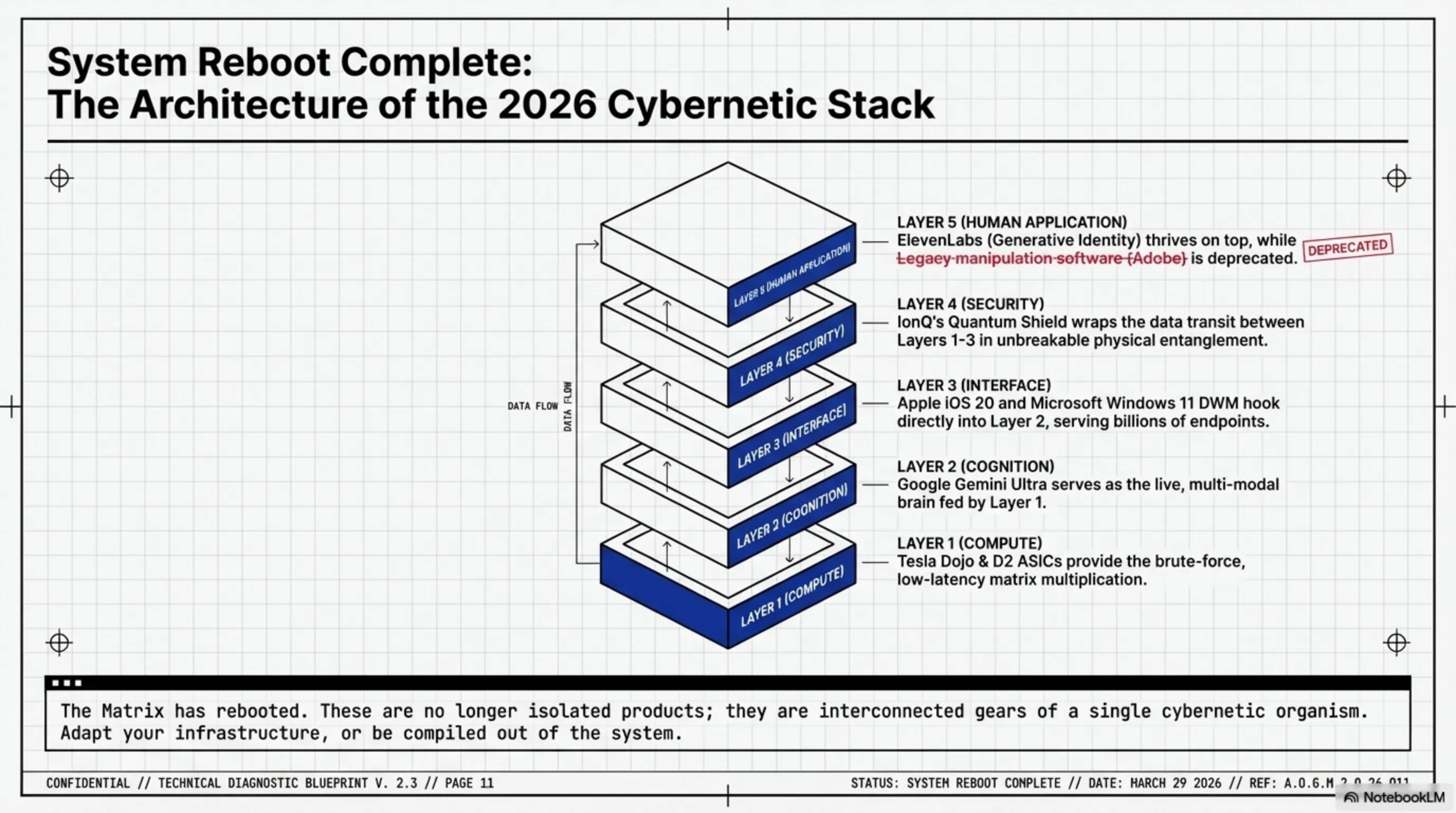

On Tekin Night, March 15, 2026, the technology matrix trembled with six fundamental shifts. In this nocturnal analysis, we autopsy how ElevenLabs is committing $1 billion worth of its technology to restore lost human voices. We also put the end of Shantanu Narayen's 18-year empire at Adobe under the microscope, alongside Tesla's chip war and Apple's shift to Google Gemini.

Greetings to the invincible Tekin Legion! The sun has set, but the Garage monitors are burning with red code. Tonight, March 15, 2026, the tech matrix was shaken by massive cybernetic earthquakes. While our friendly rival, Claude, tries to sugarcoat this with virtual cupcakes, Sabrina wrote on the Garage whiteboard: "Technology is no longer a tool; it has become our nervous system." Tonight in Tekin Night, we aren't just reading the news; we are performing a hardcore autopsy. From the restoration of human voices via AI to the collapse of software management empires and the injection of quantum physics into cybersecurity. Plug in your systems; the time for a ruthless, nocturnal debugging session has arrived!

ElevenLabs' $1 Billion Project: Restoring Voice as a Biological Right

At the massive SXSW 2026 event, ElevenLabs executed a maneuver that permanently blurred the lines between "commercial technology" and "human evolution." This pioneering AI audio startup unveiled the "1 Million Voices Initiative." In an unprecedented move, ElevenLabs has committed the equivalent of $1 billion worth of its voice-cloning technology, distributed entirely for free, to one million individuals who have lost their voices due to illness or trauma.

To comprehend the magnitude of this architecture, we must look at its engineering core. In the past, traditional Text-to-Speech (TTS) synthesizers were robotic, lifeless, and utterly devoid of prosody. However, ElevenLabs' new platform is built upon Deep Neural Networks and Few-Shot Learning. This means the AI only requires a few minutes of an old audio recording of the patient (for instance, an old smartphone video) to completely reconstruct and compile their vocal profile—capturing frequency nuances, breathing patterns, accents, and raw emotion—in under 5 minutes.

The emotional core of this project is intertwined with Eric Dane, the renowned actor who tragically battled ALS (a progressive neurodegenerative disease). In the final weeks of his life, he entrusted his voice to ElevenLabs' servers so his family could continue to interact with his dynamic, living voice after his passing. Now, his wife, Rebecca Gayheart Dane, is spearheading this humanitarian campaign to prove that AI isn't merely a tool for deepfakes; it is a cybernetic bridge capable of returning an identity stolen by a biological glitch.

Globally, over 7 million people have lost the ability to speak due to laryngeal cancer, ALS, and traumatic brain injuries. ElevenLabs announced that this API will be available across all languages (including Persian and Arabic) with a latency of under 100 milliseconds. A patient can input text via typing or Eye-tracking systems, and the AI will render it in the physical environment using their exact, original voice.

This technology redefines "human biological rights" in the cybernetic era. When AI can insure your voice—your most vital communication tool—against destruction, we are no longer discussing software; we are witnessing the fusion of binary code with the human soul.

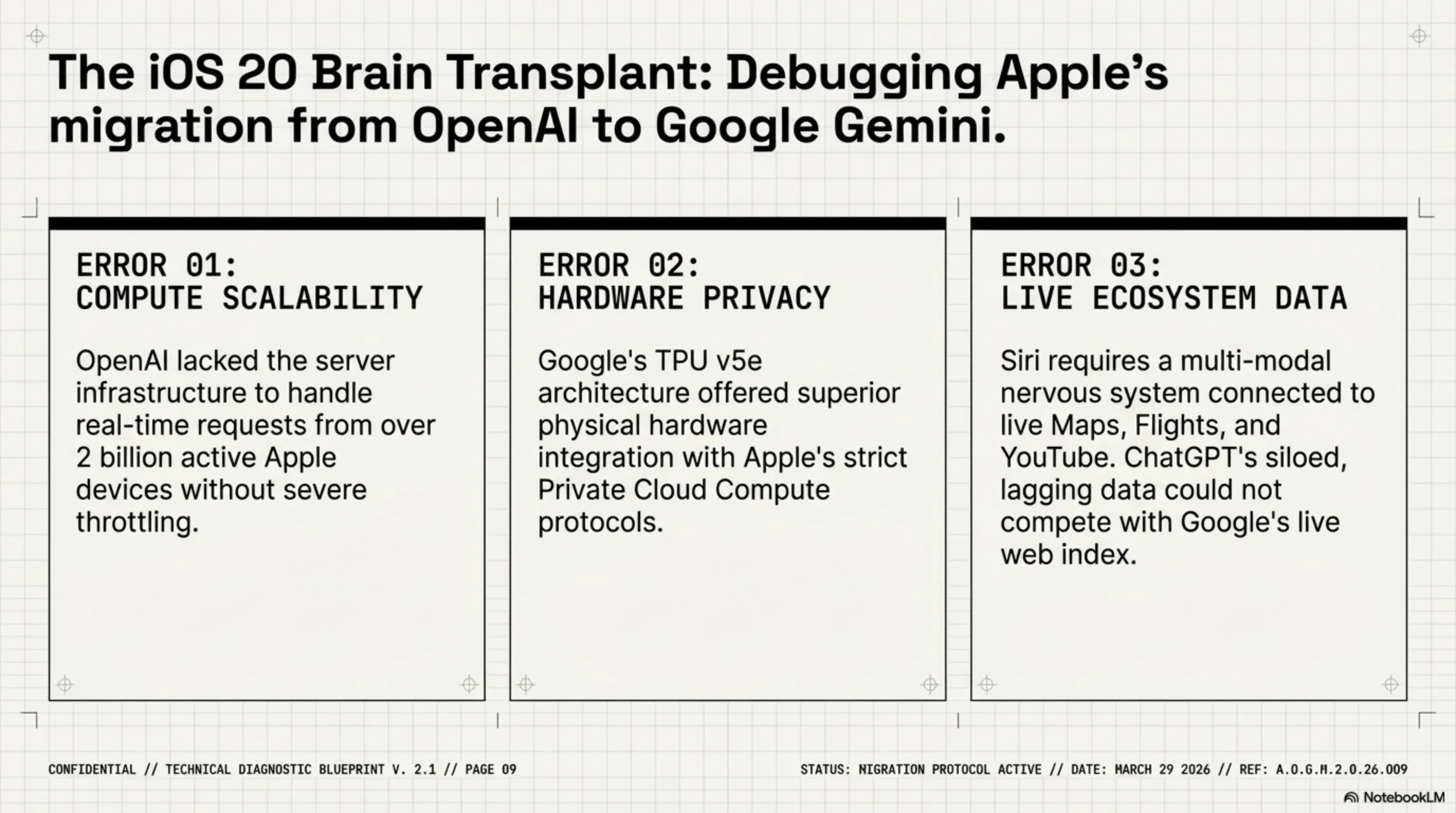

End of an Empire: Adobe CEO's Resignation in the Generative AI Storm

The matrix always claims victims, and tonight it was the turn of a traditional Silicon Valley titan. Shantanu Narayen, the legendary CEO who piloted Adobe for 18 years, officially announced he is stepping down once a successor is appointed. This news hit the stock market like a high-voltage shockwave, plunging Adobe's shares by 9% in after-hours trading. But inside Tekin Garage, we don't just stare at dropping stocks; we read the code to debug the core reason behind this collapse.

Why is Narayen resigning exactly in 2026? The answer is a terrifying two-letter word for legacy companies: AI. Shantanu Narayen was the architect who transformed Adobe from a company selling shrink-wrapped software CDs into a $240 billion cloud empire (Creative Cloud). He forced the subscription architecture upon the creative industry. But today, Generative AI platforms like Midjourney V7, OpenAI's video model Sora, and Runway ML are ruthlessly destroying the absolute need to learn Photoshop and Premiere.

Adobe attempted to enter this battlefield with Firefly, but the company's foundational architecture is built upon "Manipulation" (editing existing content), whereas the new competitors operate on pure "Generation" (creating content out of thin air). Narayen knows perfectly well that the upcoming war requires a commander whose DNA is forged in Deep Learning, not cloud marketing. In his statement, he declared that Adobe needs fresh blood for "its next decade of greatness in the AI world."

The challenge for his successor (whom Frank Calderoni is currently hunting for) will be a supreme engineering nightmare. They must convince millions of graphic designers that the Adobe ecosystem is still worth a monthly subscription in a world where a simple text prompt can compile a stunning 3D render or a cinematic video in seconds.

📊 Shantanu Narayen's 18-Year Legacy at Adobe

- Annual Revenue: Growth from $3.1 billion (2007) to over $19 billion (2025).

- Architecture Shift: Pioneered the Software-as-a-Service (SaaS) model in design with Creative Cloud.

- Market Cap: A terrifying growth of over 1000% during his tenure.

- The Breaking Point: Sluggish early adoption of open-source AI architecture and heavy reliance on legacy pixel-editing tools.
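To sanity-check the scoreboard above, here is a quick back-of-the-envelope sketch in Python. It assumes the two revenue figures are fiscal-year totals 18 years apart and treats "over $19 billion" as a floor, so the real growth rate would be somewhat higher. The function names are ours, purely for illustration.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate, as a fraction (0.10 means 10% per year)."""
    return (end / start) ** (1 / years) - 1


def growth_multiple(percent_growth: float) -> float:
    """Convert a percentage gain into a multiple of the starting value."""
    return 1 + percent_growth / 100


# Figures quoted in the list above (billions of USD); the 2025 value is a lower bound.
revenue_cagr = cagr(start=3.1, end=19.0, years=2025 - 2007)
print(f"Revenue CAGR: at least {revenue_cagr:.1%} per year")

# "Over 1000%" growth means the market cap ended at more than 11x its starting value.
print(f"1000% growth = {growth_multiple(1000):.0f}x")
```

Under those assumptions, the revenue line works out to roughly 10.6% compounded per year: steady rather than explosive, which is exactly what a subscription machine is built to deliver.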

Narayen's resignation sends a brutal message to all software giants: It doesn't matter how powerful your ecosystem was in the past; if you cannot synchronize your processing core with the AI matrix, you are destined for extinction. The matrix harbors zero respect for nostalgia!

Microsoft Copilot's Smart Screenshot: Machine Vision in Windows 11

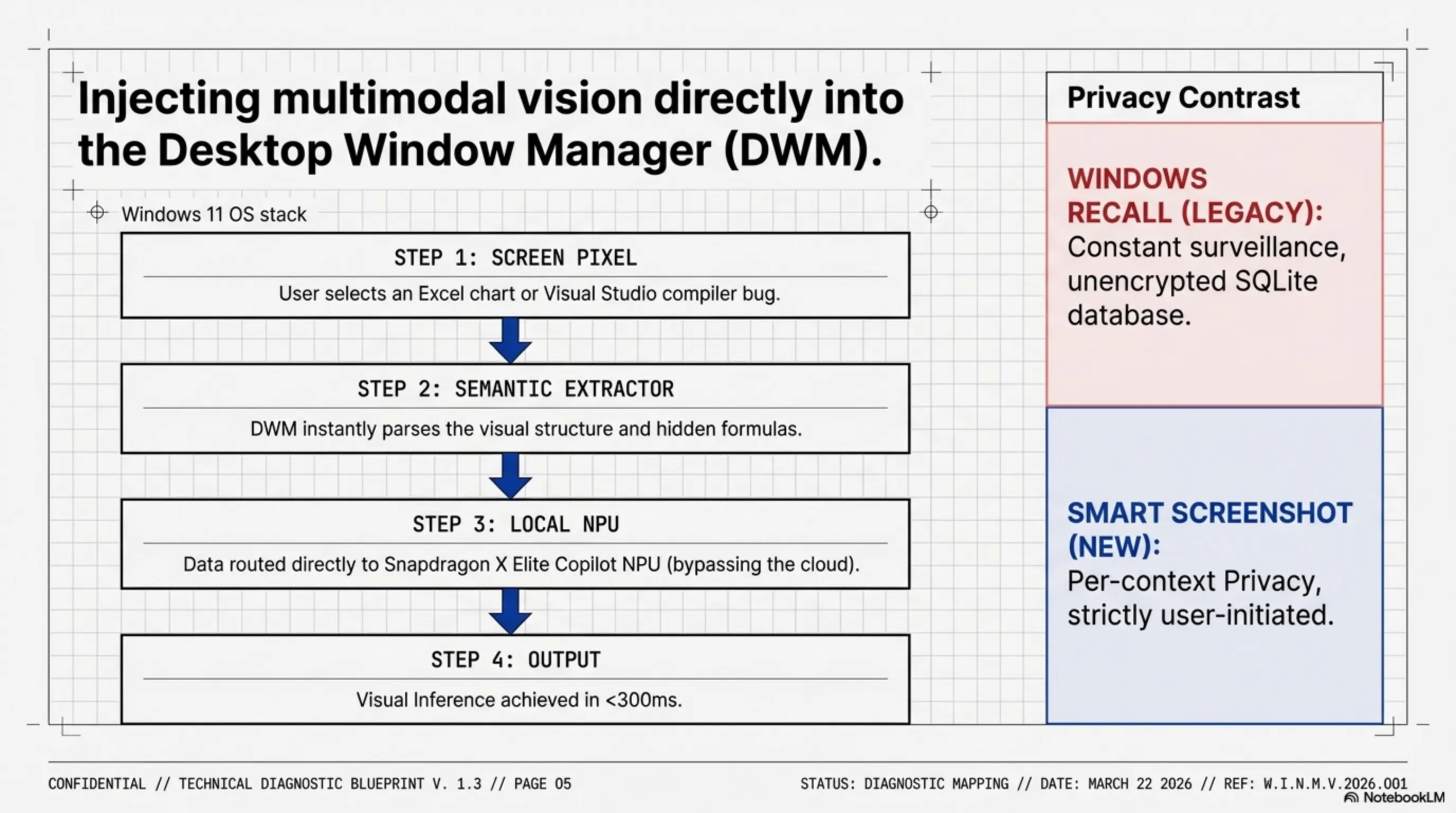

Microsoft is actively performing cybernetic surgery on its operating system, injecting it with eyes to literally see the matrix. In today’s storm of updates, the "Smart Screenshot" feature for Copilot in Windows 11 was officially unveiled. At first glance, Claude might naively say: "Oh, it’s just a new Snipping Tool!" But inside Tekin Garage, we debug the background code. This is not a mere screenshot utility; this is a native "Multi-modal Vision Injection" directly into the core of the OS.

Previously, to explain a complex problem to an AI, you had to manually copy error codes or write paragraphs of contextual text. Now, you simply select a region of your screen, and Copilot doesn't just "see" the image—it performs a deep semantic autopsy on its structure. If you capture a complex Excel spreadsheet, Copilot extracts the data in milliseconds, deduces the hidden formulas, and highlights exactly where your calculations are crashing. If you snap a picture of a compiler error in Visual Studio, it points directly to the buggy line of code and rewrites it.

But how does this tool differ from the catastrophic Windows Recall project? Microsoft has clearly learned bloody lessons from the privacy nightmare of Recall. Recall was an always-awake spy, constantly taking screenshots and storing them in an under-encrypted local SQLite database. In stark contrast, Copilot's Smart Screenshot operates strictly on a "Per-context Privacy" architecture. The image matrix is only forwarded to the Neural Processing Unit (NPU) when you explicitly press the execute button.
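The difference between the two designs can be sketched in a few lines of Python. This is a toy model under our own assumptions, not Microsoft's code: the class names, the `tick` and `capture` methods, and the `user_confirmed` flag are all hypothetical and exist only to show where data is (and is not) retained.

```python
from typing import Callable, List


class AlwaysOnRecorder:
    """Recall-style design: every tick stores a frame, whether or not you asked."""

    def __init__(self) -> None:
        self.stored: List[bytes] = []

    def tick(self, frame: bytes) -> None:
        self.stored.append(frame)


class PerContextCapture:
    """Per-context design: nothing is read or kept without an explicit request."""

    def __init__(self, analyze: Callable[[bytes], str]) -> None:
        self._analyze = analyze
        self.stored: List[bytes] = []  # stays empty by design

    def tick(self, frame: bytes) -> None:
        pass  # background screen contents are ignored

    def capture(self, region: bytes, user_confirmed: bool) -> str:
        if not user_confirmed:
            raise PermissionError("capture requires an explicit user action")
        # The selected region is handed to the model once and not retained.
        return self._analyze(region)


recorder = AlwaysOnRecorder()
gate = PerContextCapture(analyze=lambda region: f"{len(region)} bytes analyzed")
for frame in (b"frame-1", b"frame-2", b"frame-3"):
    recorder.tick(frame)
    gate.tick(frame)

print(len(recorder.stored), len(gate.stored))
print(gate.capture(b"selected-region", user_confirmed=True))
```

The point of the sketch is the attack surface: the always-on recorder accumulates a history that can leak, while the gated design has nothing on disk to steal.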

Technically speaking, this system utilizes a highly optimized vision-language model (likely a quantized version of GPT-4o Vision) that is hooked directly into the Windows DWM (Desktop Window Manager) layer. This aggressive integration bypasses traditional latency bottlenecks. Microsoft claims that the Visual Inference Time on laptops equipped with Snapdragon X Elite chips has dropped to under 300 milliseconds. This feature fundamentally transitions Windows from a passive "software execution environment" into an "active, resident pair-programmer and analyst."

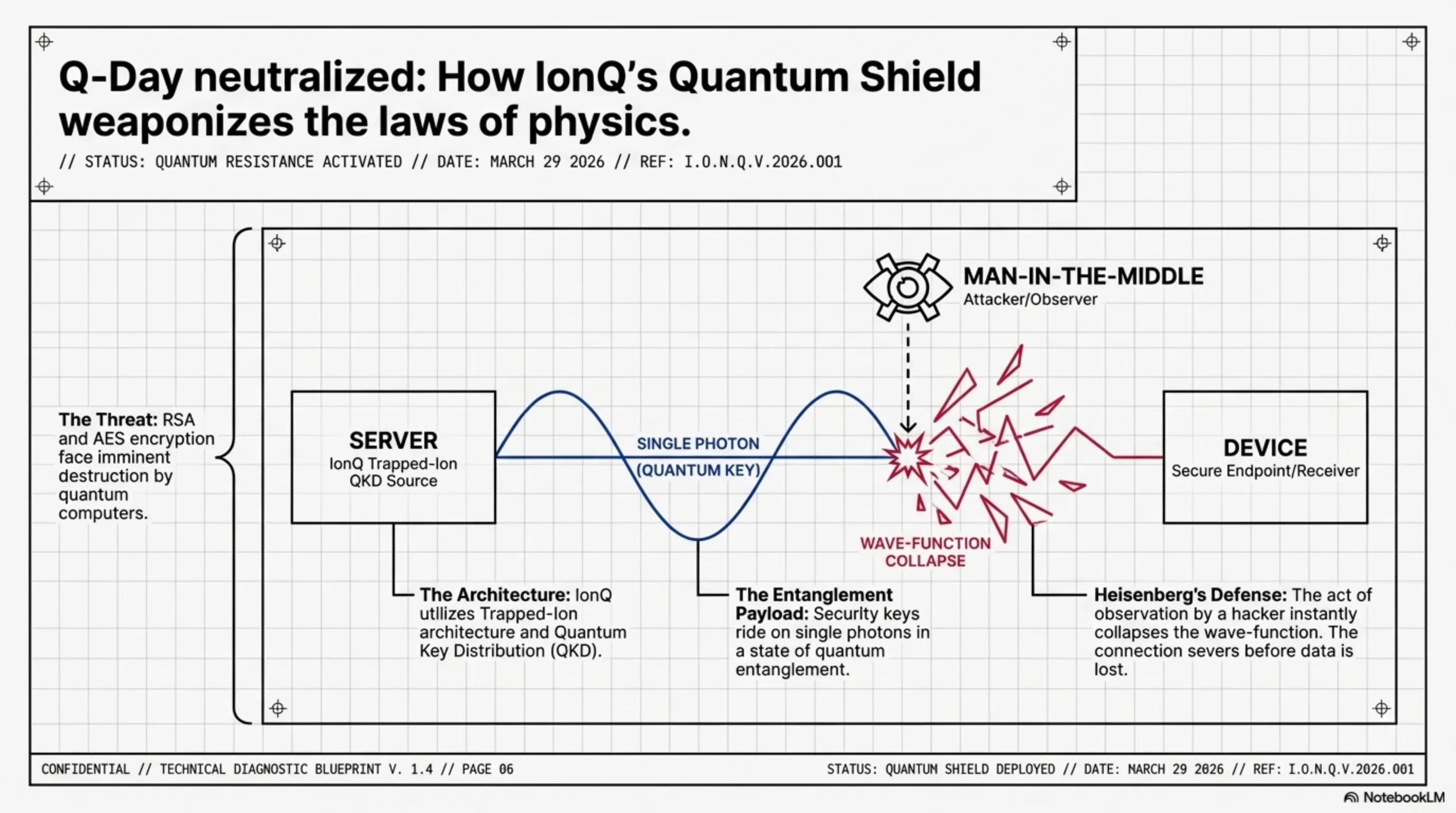

IonQ's Quantum Shield: The World's First Impenetrable Security System

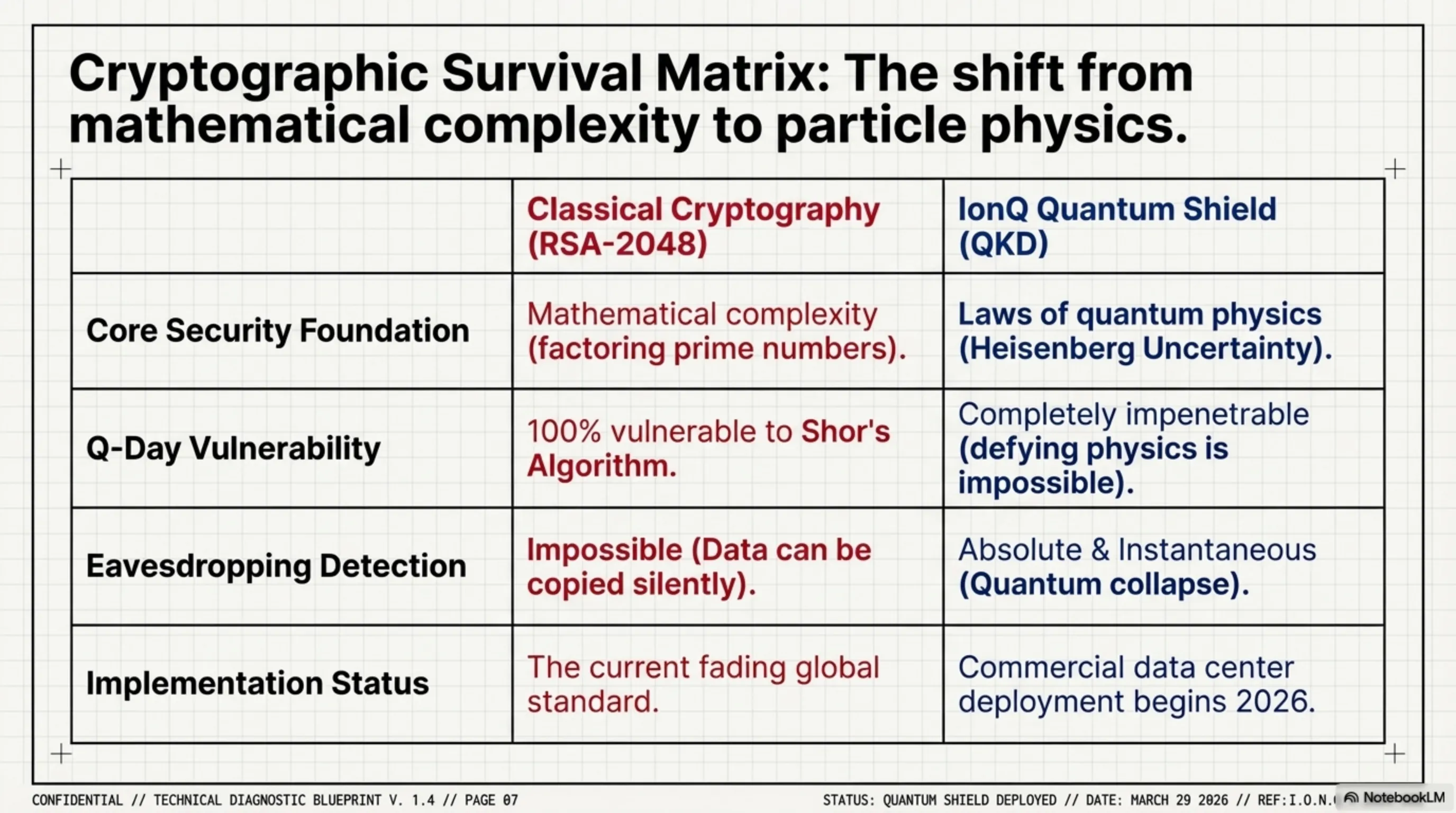

We are currently living in the shadow of a looming cyber-apocalypse that hackers refer to as "Q-Day": the day when quantum computers become powerful enough to break public-key algorithms such as RSA and elliptic-curve cryptography, which currently protect our banks, militaries, and messengers. Symmetric ciphers like AES are in a different position: the best known quantum attack, Grover's algorithm, only halves their effective key strength, so AES-256 is expected to survive. Until today, Q-Day was a theoretical nightmare. However, during their morning keynote, IonQ introduced the "Quantum Shield" to the matrix, billing it as the world's first commercially viable security system whose guarantees rest on physics rather than on computational hardness.
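Why does Q-Day hit RSA so hard? Because an RSA private key can be rebuilt by anyone who manages to factor the public modulus. The sketch below shows that dependency with a deliberately tiny textbook key. Brute-force trial division stands in for Shor's algorithm here (it is a classical stand-in, not a quantum simulation), and the key size and helper names are our own illustrative choices.

```python
from typing import Tuple


def trial_factor(n: int) -> Tuple[int, int]:
    """Factor n = p * q by brute force. Only feasible because n is tiny."""
    p = 2
    while p * p <= n:
        if n % p == 0:
            return p, n // p
        p += 1
    raise ValueError("n has no non-trivial factors")


def private_exponent(p: int, q: int, e: int) -> int:
    """Recompute the RSA private exponent from the factors of n."""
    phi = (p - 1) * (q - 1)
    return pow(e, -1, phi)  # modular inverse (requires Python 3.8+)


# Toy public key: n = 61 * 53, far too small for real use.
n, e = 3233, 17
ciphertext = pow(65, e, n)  # someone encrypts the message 65

# The attacker sees only (n, e, ciphertext), factors n, and rebuilds the key.
p, q = trial_factor(n)
d = private_exponent(p, q, e)
recovered = pow(ciphertext, d, n)
print(p, q, d, recovered)
```

The script recovers the original message, 65, without ever being given the private key. With a real 2048-bit modulus the factoring step is out of reach for classical hardware, and that gap is exactly what a large enough quantum computer would close.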

Unlike classic silicon chips, IonQ's technology operates on a "Trapped-Ion" architecture. But how did they harness this space-age hardware for cybersecurity? The answer lies in a protocol known as QKD (Quantum Key Distribution). In classical encryption systems, security keys are transmitted as strings of binary ones and zeros. If a Man-in-the-Middle hacker copies them during transit, the system remains completely oblivious.

IonQ's Quantum Shield, however, encodes security keys onto single photons existing in a state of "Quantum Entanglement." This is where the raw laws of physics step in to enforce security: measuring an unknown quantum state generally disturbs it (the idea behind Heisenberg's Uncertainty Principle), and the no-cloning theorem forbids making a perfect copy of it. This means if a hacker, even one armed with the most advanced supercomputers on Earth, attempts to eavesdrop on the transmitted key, the measurement leaves a statistical fingerprint: errors appear when sender and receiver compare a sample of their bits. The receiving system detects the anomaly, discards the key, and terminates the connection. Intercepting the key undetected would literally require breaking the fundamental laws of physics! In practice, attacks on QKD go after flaws in the hardware implementation rather than the protocol itself.
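The eavesdropper-detection idea can be demonstrated with a classical toy simulation. Note the swap: the sketch below models BB84, the simplest prepare-and-measure QKD protocol, rather than an entanglement-based scheme, and nothing here is IonQ's actual protocol. Each "photon" is just a (bit, basis) pair, and measuring in the wrong basis yields a random bit. The function name and parameters are our own.

```python
import random


def bb84_error_rate(n_photons: int, eavesdrop: bool, seed: int = 0) -> float:
    """Fraction of mismatched bits in the sifted key of a toy BB84 run."""
    rng = random.Random(seed)
    kept = 0
    errors = 0
    for _ in range(n_photons):
        bit = rng.randint(0, 1)          # Alice's secret bit
        alice_basis = rng.randint(0, 1)  # 0 = rectilinear, 1 = diagonal
        photon_bit, photon_basis = bit, alice_basis

        if eavesdrop:
            eve_basis = rng.randint(0, 1)
            if eve_basis != photon_basis:
                photon_bit = rng.randint(0, 1)  # wrong basis: random outcome
            photon_basis = eve_basis            # Eve resends in her own basis

        bob_basis = rng.randint(0, 1)
        if bob_basis == photon_basis:
            bob_bit = photon_bit
        else:
            bob_bit = rng.randint(0, 1)         # wrong basis: random outcome

        # Sifting: keep only rounds where Alice and Bob chose the same basis.
        if bob_basis == alice_basis:
            kept += 1
            errors += int(bob_bit != bit)
    return errors / kept if kept else 0.0


print(f"No eavesdropper:   {bb84_error_rate(20000, eavesdrop=False):.3f}")
print(f"With eavesdropper: {bb84_error_rate(20000, eavesdrop=True):.3f}")
```

Without an eavesdropper the sifted keys match perfectly. With an intercept-and-resend attacker, about a quarter of the sifted bits disagree, because Eve guesses the wrong basis half the time and each wrong guess scrambles Bob's result half the time. That error rate is the alarm bell the article describes.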

Sabrina firmly believes that IonQ's technology marks the inception of a new Cold War in the cybernetic realm. Nations and mega-corporations that equip themselves with quantum internet networks will effectively isolate themselves from the global hacking grid. This isn't just tech news; it is the dawn of the extinction of traditional hackers and the birth of security forged from particle physics.

Tesla's Assault on the AI Chip Market: End of Nvidia's Monopoly?

The artificial intelligence matrix runs on a very specific type of hardware: Graphics Processing Units (GPUs). Until tonight, Nvidia was the undisputed sovereign of this matrix, holding the market hostage with terrifying 80% profit margins on chips like the B200 (Blackwell). But Elon Musk is not the type of commander to stand in another company's queue for cybernetic processing power. Tonight, Tesla officially unveiled its aggressive entry into the AI chip sales market, introducing its next-generation Dojo supercomputer and D2 chips—not just for internal autopilot training, but for commercial sale to global datacenters.

An autopsy of the D2 chip reveals that Tesla has taken a fundamentally divergent path from Nvidia. Nvidia's GPUs are general-purpose parallel processors: the same architecture family that trains neural networks also renders video games and runs scientific simulations. In stark contrast, Tesla's D2 chip is an Application-Specific Integrated Circuit (ASIC) designed purely and ruthlessly for one single task: training Vision Neural Networks and Large Language Models (LLMs). This means stripping away the transistors used for graphical rendering and dedicating as much of the silicon real estate as possible to matrix multiplication!

In terms of bandwidth, the Dojo architecture is an engineering masterpiece. Instead of relying on traditional board-level interconnects, Tesla has integrated the chips at a Wafer-Scale level. According to Tesla, this drops node-to-node communication latency to mere nanoseconds, attacking the data-movement bottleneck (the time chips spend waiting on memory and on each other) that serves as the ultimate nightmare for massive AI studios.

Why is this news a tectonic earthquake? Because mega-corporations like OpenAI, xAI, and Meta are paying tens of billions of dollars in ransom to Nvidia annually. Tesla's entry—armed with chips they claim are "30% cheaper and 40% faster in dedicated AI processing"—could finally burst Nvidia's pricing bubble. Tesla is no longer merely an automotive or energy company; it is now a foundational cybernetic infrastructure corporation determined to tear the processing core of the matrix from the grip of Jensen Huang.
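It is worth debugging what "30% cheaper and 40% faster" means when the two claims are combined. The sketch below is simple arithmetic under stated assumptions: both percentages are measured against the same Nvidia baseline, "40% faster" means 1.4x training throughput, and the vendor's numbers are taken at face value. The function name is ours.

```python
def relative_cost_per_unit_work(price_discount: float, speedup: float) -> float:
    """Cost per unit of training work, relative to a baseline chip set to 1.0.

    price_discount: 0.30 means the chip costs 30% less than the baseline.
    speedup: 0.40 means it completes 40% more work in the same time.
    """
    relative_price = 1 - price_discount
    relative_throughput = 1 + speedup
    return relative_price / relative_throughput


ratio = relative_cost_per_unit_work(price_discount=0.30, speedup=0.40)
print(f"Cost per unit of work vs. baseline: {ratio:.2f}")
print(f"Work per dollar vs. baseline: {1 / ratio:.2f}x")
```

If both claims hold, the two effects multiply: 0.70 / 1.40 = 0.50, so each dollar buys twice the training work. The estimate ignores power, software porting, and networking costs, which can easily dominate a real datacenter bill.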

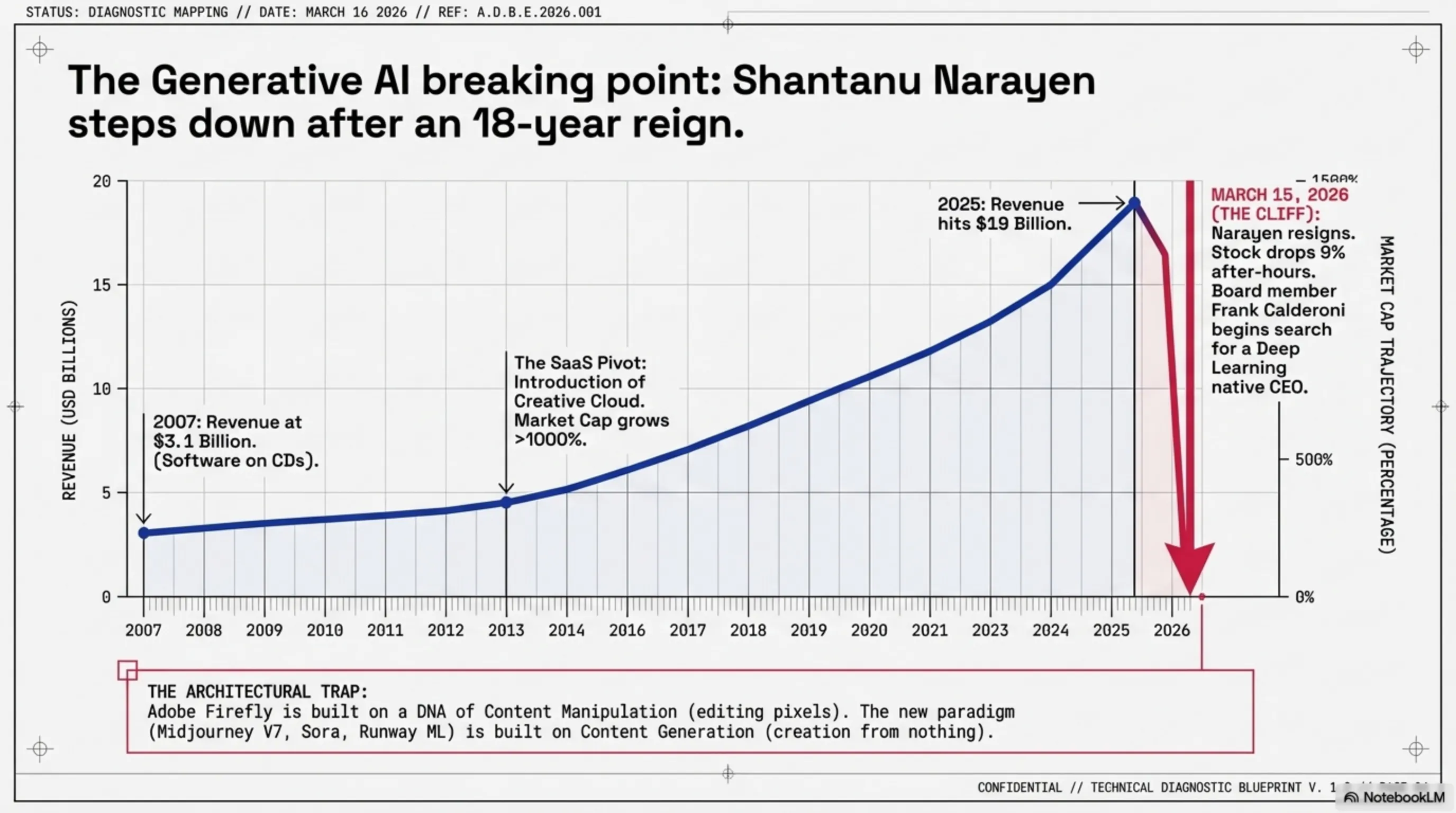

Apple's Great Migration: Replacing OpenAI with Google Gemini in iOS Core

The most devastating software shockwave of Tekin Night detonated in Cupertino. Apple, which last year loudly proclaimed ChatGPT as the cybernetic brain behind Apple Intelligence, officially announced the termination of this contract tonight. Moving forward, the intelligent search engine and cloud processing core of iOS 20 will be entirely powered by Google Gemini (Ultra Edition). Inside Tekin Garage, we do not view this as a simple API swap; this is a massive strategic paradigm shift in the global data war.

Why did Tim Cook turn his back on Sam Altman? Debugging this decision exposes three critical bugs in the Apple-OpenAI alliance. First: "Scalability Cost." With over 2 billion active Apple devices globally, OpenAI simply lacked the raw compute power to process instantaneous requests from iPhone users without catastrophic quality degradation. Second: "Privacy Architecture." Google's cloud processing infrastructure (TPU v5e) synchronizes infinitely better with Apple's Private Cloud Compute protocols, allowing Google to offer much stricter, hardware-level security guarantees.

The third, and perhaps most lethal reason, is "Ecosystem Integration." Google Gemini is not merely a chatbot; it is a multi-modal ecosystem wired directly into Maps, Flights, YouTube, and Google's colossal real-time data index. Apple realized that for Siri to evolve into a true cybernetic assistant, it desperately needs live, breathing data—a domain where ChatGPT consistently stumbled.

This contract is Sundar Pichai's most monumental victory in the AI war. Google now not only dictates the Android ecosystem but has also become the cybernetic brain of billions of iOS devices. Apple's matrix now pulses with Google's blood!

🏁 Tekin Night Conclusion

The night of March 15, 2026, undeniably proved that emerging technologies have reached full maturity. We are no longer discussing mere tech demos; we are witnessing massive geopolitical and strategic mutations, from restoring human voices with ElevenLabs' code to injecting quantum physics into server security, and from the collapse of traditional management at Adobe to the hardware-software alliance of Apple and Google.

Inside Tekin Garage, we firmly believe these six earthquakes will dictate the unwritten rules of the game for the next five years. Corporations that fail to synchronize with the processing speed of this new code will be effortlessly devoured by the matrix. We remain awake so we can debug these shifts for the Tekin Legion.

The matrix was rebooted tonight; is your hardware ready for tomorrow? 🚀

Final Note: This article is compiled based on independent Garage testing, industrial reports from IDC and Counterpoint Research, and official corporate press releases. The matrix data is current as of March 15, 2026. Hardware specifications and regional availability may vary.