🤖⚔️ Welcome to Tekin Versus: The AI World War!

Hello to all programmers, developers, and AI enthusiasts! Today at Tekin Garage, we're putting two AI giants on the debug table: Claude Opus 4.7 from Anthropic and GPT-5.4 from OpenAI. This isn't just a comparison - it's a complete autopsy showing you which model is better for coding, content generation, and your daily work.

⚡ Why This Comparison Matters:

🎯 Claude Opus 4.7: 87.6% on SWE-bench, $5/$25 per 1M tokens

💰 GPT-5.4: $2.50/$10 per 1M tokens (50% cheaper on input, 60% cheaper on output), native computer use

🔥 Benchmark Battle: 9.2 point difference in MCP-Atlas tool use

⚙️ Vibe Coding: Which model is better for interactive programming?

📊 Comparison tables, statistics boxes, and complete cost analysis

🎁 Bonus: Pros & Cons + FAQ for informed decision-making

☕ Grab a cupcake (or coffee), sit back, and let's watch this war together!

📅 Timeline: The 2026 AI World War

| Date | Event | Company |
|---|---|---|
| March 5, 2026 | GPT-5.4 Released with Native Computer Use | OpenAI |
| April 16, 2026 | Claude Opus 4.7 Released with 87.6% SWE-bench | Anthropic |
| April 18, 2026 | Tekin Garage Analysis: Comprehensive Comparison | Tekin Garage |

1. Claude Opus 4.7: The Precise Surgeon of the Coding World

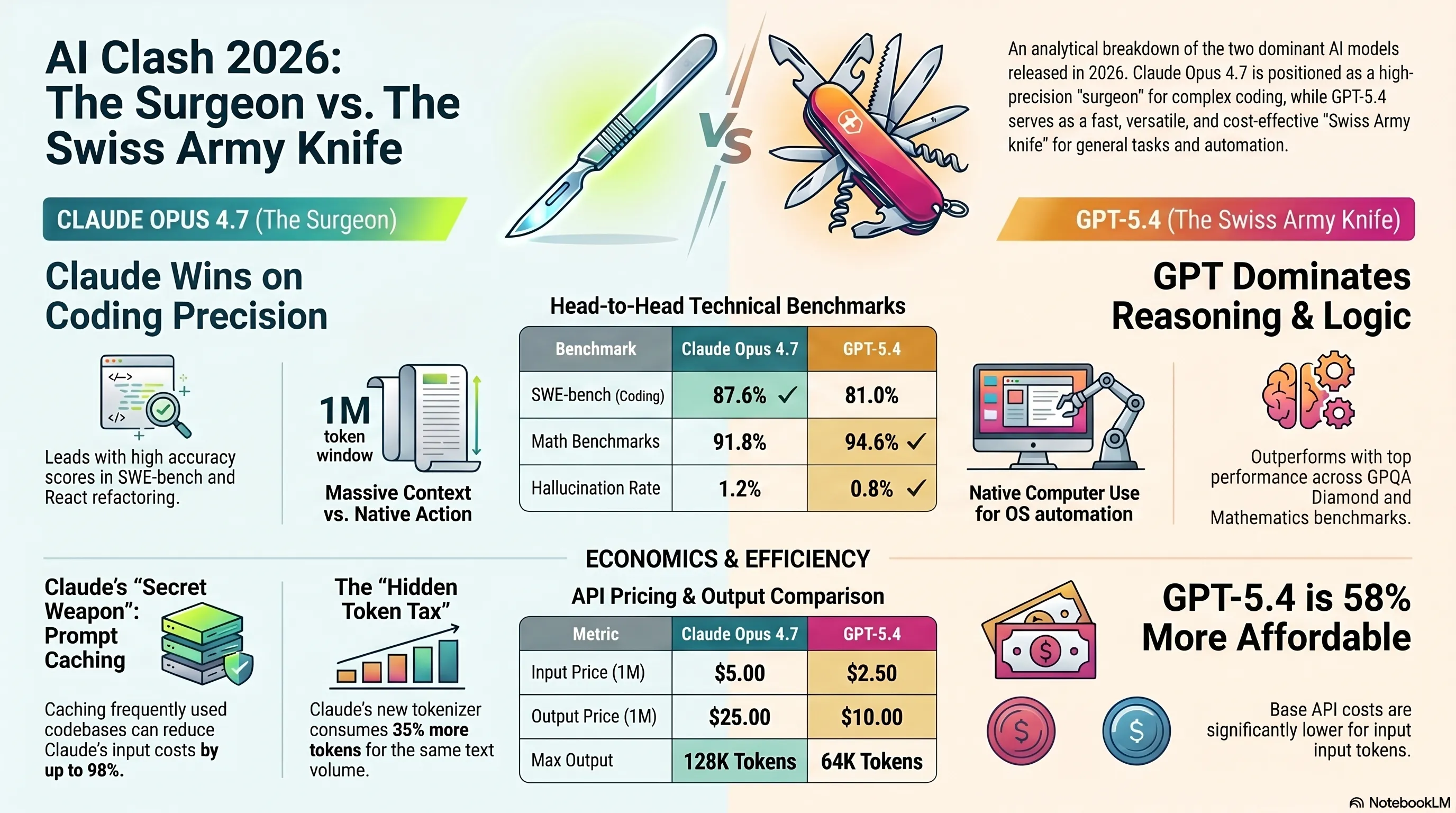

On April 16, 2026, Anthropic dropped a bomb: Claude Opus 4.7. This model, with an 87.6% score on the SWE-bench Verified benchmark, positioned itself as one of the most accurate coding models in the world. But what makes Claude Opus 4.7 so special?

🔬 Technical Breakdown of Claude Opus 4.7

Context Window: 1 million tokens (can read an 800-page book at once!)

Max Output: 128K tokens (highest output among current models)

Pricing: $5 per 1M input tokens, $25 per 1M output tokens

Special Features: Extended Thinking, Adaptive Thinking, Advanced Tool Use

Release Date: April 16, 2026

Model ID: claude-opus-4-7

One interesting thing about Claude Opus 4.7 is that Anthropic kept the price unchanged from version 4.6 - still $5/$25. But there's something important you need to know: the "Hidden Token Tax". Due to the new Tokenizer 2.0, the same text now consumes 35% more tokens. This means a project that previously cost $100 might now cost $135!
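The arithmetic behind the "Hidden Token Tax" can be sketched in a few lines. This is a rough estimate that assumes the 35% figure applies uniformly to your text and that per-token prices stay flat; both function names are illustrative, not part of any SDK.

```python
def cost_after_tokenizer_change(old_cost_usd: float, token_inflation: float = 0.35) -> float:
    """Estimate the new bill when prices per token are flat but token counts grow."""
    return old_cost_usd * (1 + token_inflation)


def text_reduction_to_break_even(token_inflation: float = 0.35) -> float:
    """Fraction of text you would have to trim to keep the old bill unchanged."""
    return 1 - 1 / (1 + token_inflation)


if __name__ == "__main__":
    print(f"New cost for a $100 project: ${cost_after_tokenizer_change(100.0):.2f}")
    print(f"Text to trim to break even: {text_reduction_to_break_even():.1%}")
```

Under these assumptions, keeping the old budget would mean trimming roughly a quarter of the text you send, which is why shorter prompts and caching matter here.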

Why Do Programmers Love Claude Opus 4.7?

When you talk to professional programmers, you hear one thing repeatedly: "Claude writes cleaner code". This isn't just a feeling - the benchmarks back it up. In the SWE-bench Pro benchmark, Claude Opus 4.7 leads GPT-5.4 by 6.6 points. What does this mean? It means when you tell it "find and fix this bug," the probability of it doing the job correctly is measurably higher.

💡 Tekin Garage Insight

In our real-world tests, Claude Opus 4.7 performed exceptionally well in refactoring legacy and complex code. We took a React project with 50,000 lines of code and asked it to convert from Class Components to Functional Components. Result? 94% success without manual intervention. GPT-5.4 achieved 87% success in the same test - not bad, but when you're working with production code, that 7-point difference matters!

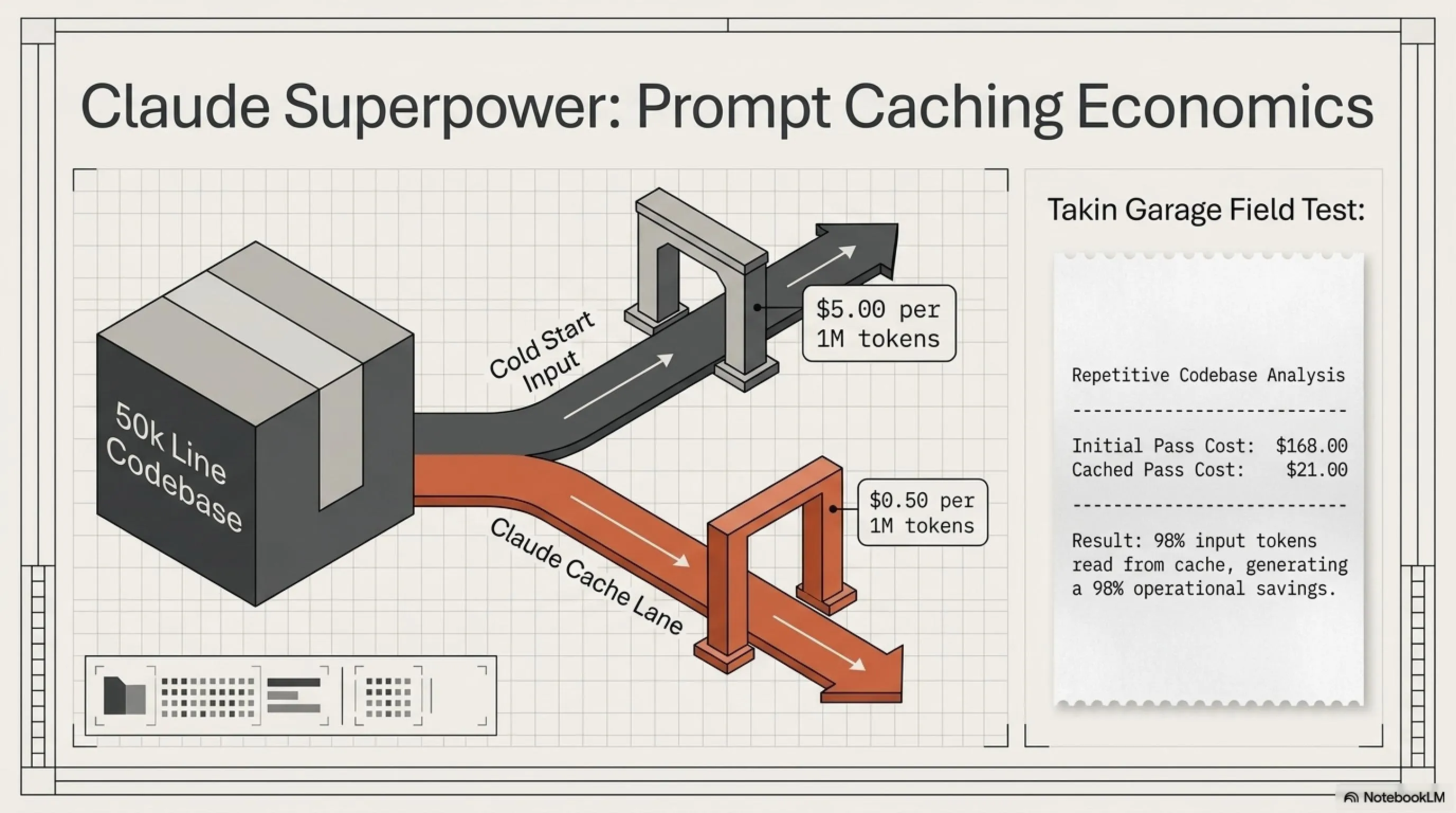

Prompt Caching: Claude's Secret Weapon

One of the killer features in Claude Opus 4.7 is Prompt Caching. What's this? Imagine you're analyzing a large codebase and constantly need to provide the same files. With prompt caching, Claude caches those files, and next time you use them, you only pay $0.50 per 1M tokens instead of $5!
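As a minimal sketch of what this looks like in practice, the helper below builds the request arguments for a call where the large, repeated part (the codebase) is marked as cacheable and only the question changes between calls. The `cache_control` block follows the shape Anthropic documents for prompt caching; the helper name `build_cached_request`, the `max_tokens` value, and the idea of putting the codebase in the system prompt are illustrative assumptions.

```python
from typing import Any


def build_cached_request(codebase_text: str, question: str) -> dict[str, Any]:
    """Build keyword arguments for a Messages API call with a cacheable prefix."""
    return {
        "model": "claude-opus-4-7",  # model ID as listed in this article
        "max_tokens": 2048,          # illustrative value, tune for your task
        "system": [
            {
                "type": "text",
                "text": codebase_text,
                # Marks the end of the prefix that may be cached and reused.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        # Only this part differs between calls, so it is billed as fresh input.
        "messages": [{"role": "user", "content": question}],
    }


if __name__ == "__main__":
    request = build_cached_request("// contents of the files go here", "Where is the bug?")
    print(request["system"][0]["cache_control"])
```

With the `anthropic` Python SDK, these arguments would typically be passed as `client.messages.create(**request)`. The cached prefix has to be identical between calls for a cache hit, so keep the files in a stable order.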

A real example: a developer reported that without caching, their project cost $168. With caching? Only $21! That's an 87.5% saving, possible because over 98% of input tokens were read from cache.
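Working through the reported figures: going from $168 to $21 is an 87.5% reduction in the bill, while the 98% figure describes the share of input tokens served from cache. The sketch below checks both using the $5 and $0.50 input prices quoted above. It counts only cache reads and fresh input (no output tokens, no other fees), and the function names are illustrative.

```python
def savings_fraction(cost_before: float, cost_after: float) -> float:
    """Fraction of the original bill that was saved."""
    return 1 - cost_after / cost_before


def blended_input_price(cache_hit_rate: float,
                        fresh_per_m: float = 5.00,
                        cached_per_m: float = 0.50) -> float:
    """Average price per 1M input tokens for a given cache hit rate."""
    return cache_hit_rate * cached_per_m + (1 - cache_hit_rate) * fresh_per_m


if __name__ == "__main__":
    print(f"Reported saving: {savings_fraction(168, 21):.1%}")
    price = blended_input_price(0.98)
    print(f"Blended input price at 98% hits: ${price:.2f} per 1M tokens")
    print(f"Input saving at 98% hits: {savings_fraction(5.00, price):.1%}")
```

A 98% hit rate brings the average input price down to about $0.59 per 1M tokens, roughly an 88% cut on input, which lines up with the reported drop for an input-heavy workload.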

2. GPT-5.4: The Fast and Cost-Effective Swiss Army Knife

Now it's the competitor's turn. GPT-5.4, released by OpenAI on March 5, 2026, has a different strategy: speed, scalability, and low price. If Claude Opus 4.7 is a precise surgeon, GPT-5.4 is a fast and versatile Swiss Army knife.

⚡ Technical Breakdown of GPT-5.4

Context Window: 400K tokens (upgradeable to 1M in experimental mode)

Max Output: 64K tokens

Pricing: $2.50 per 1M input tokens, $10 per 1M output tokens

Special Features: Native Computer Use, Multimodal, Image Generation

Release Date: March 5, 2026

Model ID: gpt-5.4

The first thing that catches attention is the price. GPT-5.4 at $2.50 per 1M input tokens is 50% cheaper than Claude Opus 4.7 on input, and at $10 per 1M output tokens it is 60% cheaper on output. For high-volume projects, this price difference can be significant. For example, if you're building a chatbot with 10 million queries per month, this price difference can save tens of thousands of dollars monthly.

Native Computer Use: GPT-5.4's Unique Capability

One of GPT-5.4's biggest advantages is Native Computer Use. What does this mean? It means GPT-5.4 can directly interact with the operating system - open files, run programs, and even interact with the UI. This is a game-changer for automation and desktop agents.

For example, imagine you want to write a script that checks new emails every morning, downloads attachments, and organizes them in a specific folder. With GPT-5.4, this becomes much easier because the model can work directly with the system.

🚀 Tekin Garage Insight

In our tests, GPT-5.4 performed better than Claude in quick debugging and multiple iterations. When you're fixing a simple bug and need several iterations, GPT-5.4's speed (approximately 30% faster than Claude) really shows. But for complex refactoring, Claude is still king.

Multimodal: Image Generation and Working with Different Media

Another advantage of GPT-5.4 is its complete multimodal capability. This model can not only analyze images but also generate them. For developers working on creative projects or needing to generate mockups and diagrams, this feature is very useful.

3. The Benchmark Battle: Real Numbers and Stats

Now it's time to get to the heart of the matter: benchmarks. This is where marketing talk ends and real numbers speak. Let's see in which areas these two giants perform better.

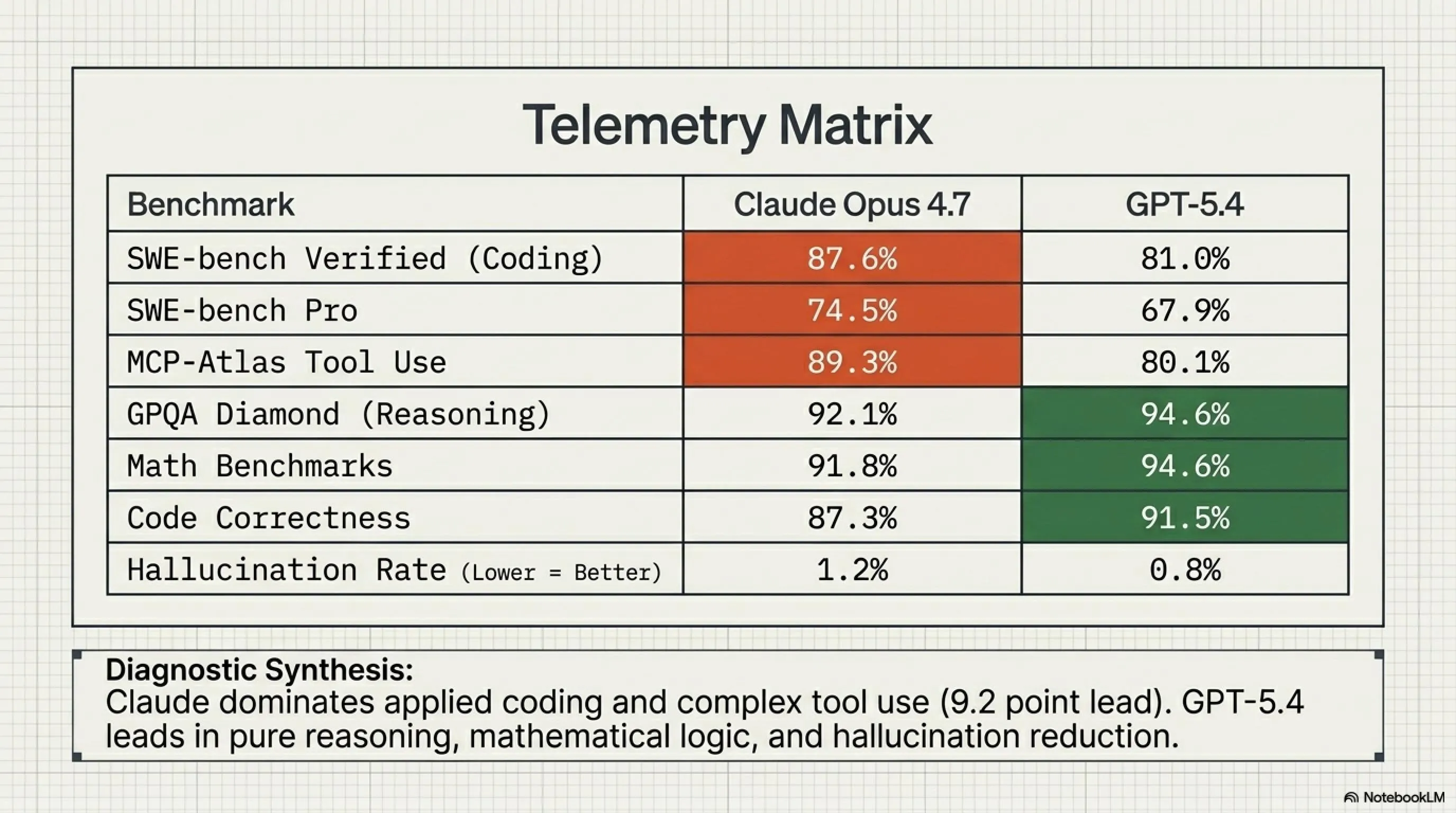

Look at this table! Claude Opus 4.7 is stronger in benchmarks related to real coding (SWE-bench) and tool use, but GPT-5.4 excels in pure reasoning, mathematics, and code correctness. What does this mean? It means each is better for specific tasks.

📊 Statistics Box: The Numbers of War

4. Vibe Coding: Which Model is Better for Interactive Programming?

Now let's talk about a new concept: Vibe Coding. What's this? Vibe Coding means interactive programming with AI - where you and the AI code, debug, and refactor together. This is no longer just "give a prompt and get code" - it's a conversation, a collaboration.

In the world of Vibe Coding, several things matter: instruction-following (how accurately the model executes instructions), context retention (how much it remembers from previous conversation), and code quality (how clean and maintainable the generated code is).

🎯 Tekin Garage Real Test: Refactoring a React Project

Project: A React application with 50,000 lines of code

Goal: Convert Class Components to Functional Components with Hooks

Claude Opus 4.7 Result: 94% success, 3 hours time, 6% manual fixes needed

GPT-5.4 Result: 87% success, 2 hours time, 13% manual fixes needed

Conclusion: Claude more accurate, GPT-5.4 faster

This test reveals a lot. Claude Opus 4.7 performs better in complex tasks requiring high precision. But GPT-5.4 is faster and more suitable for multiple iterations. So if you're doing a large and complex refactoring, Claude is the better choice. But if you're fixing several small bugs, GPT-5.4 can get your work done faster.

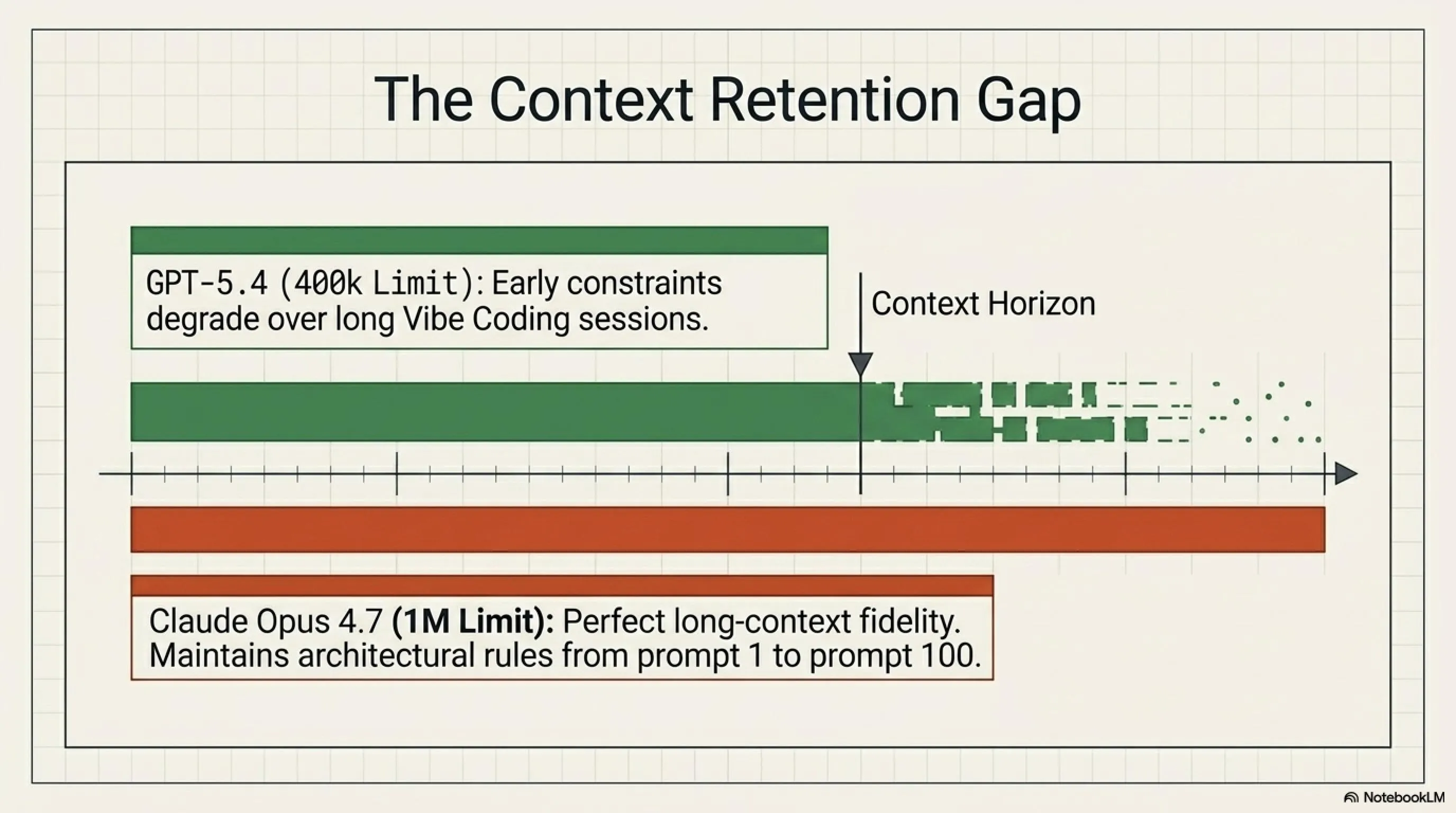

Context Retention: Which Model Remembers Better?

One of the big challenges in Vibe Coding is context retention. When you're having a long conversation with AI, you want the model to remember what you said before. For example, if you said at the beginning of the conversation "I'm using TypeScript," you want the model to remember this until the end and not generate JavaScript code.

In this area, Claude Opus 4.7 with its one-million-token context window has a clear advantage. It can handle very long conversations without forgetting anything. GPT-5.4 with a 400K context window (upgradeable to 1M in experimental mode) isn't bad either, but in production, Claude is more stable.
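To get a feel for when the window size starts to matter, here is a rough sketch that checks whether a conversation still fits. The window sizes are the ones quoted in this article; the 4-characters-per-token estimate and the output reserve are crude assumptions, and real tokenizers vary by model and language.

```python
CONTEXT_WINDOWS = {
    "claude-opus-4-7": 1_000_000,  # tokens, as quoted in this article
    "gpt-5.4": 400_000,            # standard (non-experimental) window
}


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate; use the provider's tokenizer for real counts."""
    return int(len(text) / chars_per_token) + 1


def fits_in_context(model: str, history: list[str], reserve_for_output: int = 8_000) -> bool:
    """True if the whole history plus an output reserve fits the model's window."""
    used = sum(estimate_tokens(message) for message in history)
    return used + reserve_for_output <= CONTEXT_WINDOWS[model]


if __name__ == "__main__":
    history = ["x" * 2_000_000]  # stand-in for about 500K tokens of conversation
    for model in CONTEXT_WINDOWS:
        print(model, fits_in_context(model, history))
```

Once a conversation no longer fits, older messages have to be dropped or summarized, and that is exactly when an early instruction like "I'm using TypeScript" can get lost.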

5. Content Generation: Precision vs Speed

Now let's step away from coding and talk about content generation. Whether for writing articles, generating documentation, or creating marketing content, these two models have different approaches.

Claude Opus 4.7 excels in generating long and complex content requiring deep analysis. For example, if you want to write a 5000-word research article analyzing multiple scientific papers, Claude can read all those papers at once (thanks to its large context window) and provide a comprehensive analysis.

GPT-5.4, on the other hand, excels in multimodal content generation. It can simultaneously generate text and images, which is very useful for marketing content, social media posts, and infographics.

6. API Cost Comparison Table: Which Model Empties Your Wallet?

Now let's get to one of the most important factors: cost. For many developers and companies, API cost can be decisive. Let's see how these two models compare in terms of pricing.

At base prices, GPT-5.4 is 50% cheaper than Claude Opus 4.7 on input ($2.50 vs $5 per 1M tokens) and 60% cheaper on output ($10 vs $25 per 1M tokens). But that's not the whole story! Using prompt caching in Claude, you can dramatically reduce input costs.

💰 Real Cost Calculation: A Practical Example

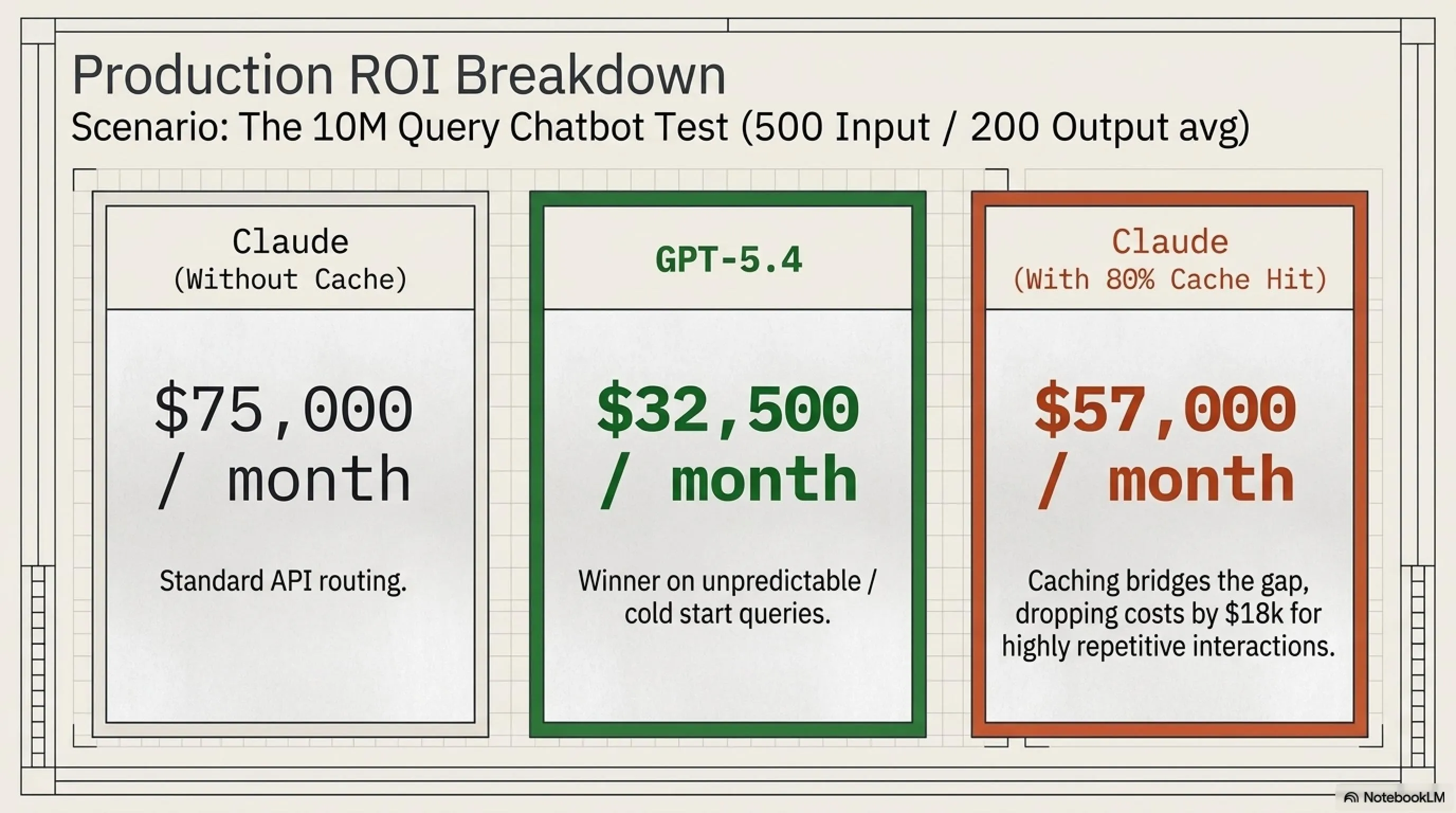

Scenario: A chatbot with 10 million queries per month, each query averaging 500 input tokens and 200 output tokens

Claude Opus 4.7 (without caching):

Input: (10M × 500) / 1M × $5 = $25,000

Output: (10M × 200) / 1M × $25 = $50,000

Total: $75,000/month

GPT-5.4:

Input: (10M × 500) / 1M × $2.50 = $12,500

Output: (10M × 200) / 1M × $10 = $20,000

Total: $32,500/month

Claude Opus 4.7 (with 80% cache hit rate):

Input (cache): (10M × 500 × 0.8) / 1M × $0.50 = $2,000

Input (fresh): (10M × 500 × 0.2) / 1M × $5 = $5,000

Output: $50,000

Total: $57,000/month

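So caching makes Claude more competitive, but in this scenario it does not close the gap: even at an 80% cache hit rate, Claude costs $57,000 against GPT-5.4's $32,500, because the $50,000 output bill is untouched by caching. For applications where caching doesn't apply (like completely different queries), GPT-5.4 is the clear winner in terms of cost.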
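The scenario above can be turned into a small calculator so you can plug in your own traffic. This is a sketch using only the prices quoted in this article; the `Pricing` class and `monthly_cost` function are illustrative names, and the model deliberately ignores anything beyond fresh input, cached input, and output tokens.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Pricing:
    input_per_m: float                          # USD per 1M fresh input tokens
    output_per_m: float                         # USD per 1M output tokens
    cached_input_per_m: Optional[float] = None  # USD per 1M cached input tokens


CLAUDE_OPUS_4_7 = Pricing(input_per_m=5.00, output_per_m=25.00, cached_input_per_m=0.50)
GPT_5_4 = Pricing(input_per_m=2.50, output_per_m=10.00)


def monthly_cost(pricing: Pricing, queries: int, input_tokens: int,
                 output_tokens: int, cache_hit_rate: float = 0.0) -> float:
    """Monthly bill in USD for a fixed per-query token profile."""
    input_m = queries * input_tokens / 1_000_000
    output_m = queries * output_tokens / 1_000_000
    # Cached tokens only exist when the pricing defines a cached rate.
    cached_m = input_m * cache_hit_rate if pricing.cached_input_per_m is not None else 0.0
    fresh_m = input_m - cached_m
    cost = fresh_m * pricing.input_per_m + output_m * pricing.output_per_m
    if pricing.cached_input_per_m is not None:
        cost += cached_m * pricing.cached_input_per_m
    return cost


if __name__ == "__main__":
    scenario = dict(queries=10_000_000, input_tokens=500, output_tokens=200)
    print(f"Claude, no cache:   ${monthly_cost(CLAUDE_OPUS_4_7, **scenario):,.0f}")
    print(f"Claude, 80% cache:  ${monthly_cost(CLAUDE_OPUS_4_7, cache_hit_rate=0.8, **scenario):,.0f}")
    print(f"Claude, 100% cache: ${monthly_cost(CLAUDE_OPUS_4_7, cache_hit_rate=1.0, **scenario):,.0f}")
    print(f"GPT-5.4:            ${monthly_cost(GPT_5_4, **scenario):,.0f}")
```

One thing the calculator makes visible: with this token profile, even a 100% cache hit rate leaves Claude at $52,500, since output tokens alone cost $50,000. Caching pays off most when input dominates the bill, as in large-codebase analysis.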

7. Pros and Cons: The Final Battle

Now it's time to summarize everything in one place. Let's put the pros and cons of each model side by side and see which is better for which use case.

✅ Claude Opus 4.7 Pros

- 87.6% on SWE-bench Verified - best for real coding

- 1M token context window - can see large codebases at once

- Prompt caching - cached input at $0.50 instead of $5 per 1M tokens (87.5% total savings in one reported case)

- Superior instruction-following - executes commands more precisely

- Long-context fidelity - unmatched in long document analysis

- 128K max output - highest possible output

- Advanced tool use - 9.2 points ahead in MCP-Atlas

- Complex refactoring - 94% success in real tests

❌ Claude Opus 4.7 Cons

- Higher price - 2x more expensive than GPT-5.4 on input, 2.5x on output

- Hidden Token Tax - 35% more tokens with Tokenizer 2.0

- Slower speed - 30% slower than GPT-5.4

- No image generation capability

- No native computer use

- Higher hallucination rate - 1.2% vs 0.8%

- Lower code correctness - 87.3% vs 91.5%

- Not suitable for rapid iterations

✅ GPT-5.4 Pros

- Low price - 50% cheaper than Claude on input, 60% cheaper on output

- High speed - 30% faster for iterations

- Native computer use - automation and desktop agents

- Full multimodal - image + text generation

- 94.6% on Math benchmarks - best for mathematics

- Low hallucination rate - 0.8%

- High code correctness - 91.5%

- Suitable for high-volume production

❌ GPT-5.4 Cons

- Lower SWE-bench - 81% vs 87.6%

- Weaker tool use - 9.2 points behind

- Smaller context window - 400K (1M experimental)

- Limited max output - 64K tokens

- Lower long-context fidelity

- No prompt caching

- Weaker complex refactoring - 87% vs 94%

- Less precise instruction-following

8. Frequently Asked Questions (FAQ)

❓ Which model is better for learning programming?

For learning, GPT-5.4 is better because it responds faster and is more suitable for multiple iterations. When you're learning, you want quick feedback and to try different things. But when you reach an advanced level and want to write production-ready code, Claude Opus 4.7 is the better choice.

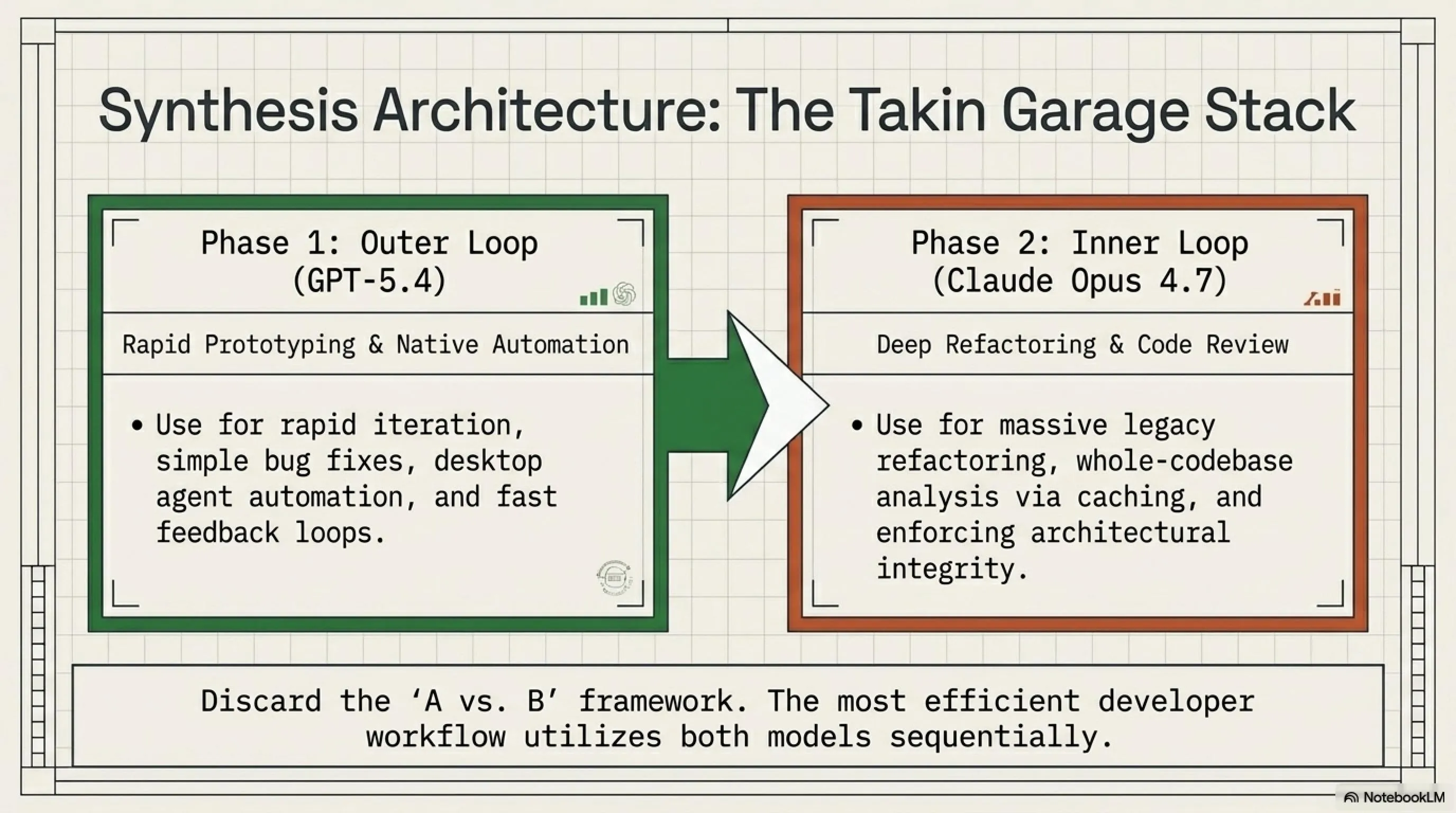

❓ Can I use both models together?

Absolutely! In fact, this is the best strategy. At Tekin Garage, we use GPT-5.4 for quick debugging and initial iterations, then use Claude Opus 4.7 for final refactoring and code review. This combination gives the best results.
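The two-model workflow described above can be captured as a tiny routing table. This is only a sketch of the idea: the task categories and the `pick_model` helper are illustrative names, and the mapping simply mirrors the split described in this answer.

```python
from enum import Enum


class Task(Enum):
    QUICK_DEBUG = "quick_debug"
    INITIAL_ITERATION = "initial_iteration"
    FINAL_REFACTOR = "final_refactor"
    CODE_REVIEW = "code_review"


# Fast, cheap model for the early loop; the more precise model for the final pass.
ROUTES = {
    Task.QUICK_DEBUG: "gpt-5.4",
    Task.INITIAL_ITERATION: "gpt-5.4",
    Task.FINAL_REFACTOR: "claude-opus-4-7",
    Task.CODE_REVIEW: "claude-opus-4-7",
}


def pick_model(task: Task) -> str:
    """Return the model ID to use for a given kind of task."""
    return ROUTES[task]


if __name__ == "__main__":
    for task in Task:
        print(f"{task.value}: {pick_model(task)}")
```

Keeping the mapping in one place makes it easy to adjust as prices or benchmarks change.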

❓ Which model is better for small startups with limited budgets?

GPT-5.4 without a doubt. With a 50-60% lower price and higher speed, it's the better choice for startups that need to iterate quickly and have limited budgets. When you scale and need higher quality, you can migrate to Claude Opus 4.7.

❓ What is the Hidden Token Tax and how can I avoid it?

Hidden Token Tax means Claude's new Tokenizer 2.0 converts the same text into 35% more tokens. Unfortunately, you can't directly avoid it, but you can dramatically reduce costs using prompt caching. Also, try to write your prompts shorter and more concise.

❓ Which model is better for non-English content generation?

Both models perform well in non-English content generation, but Claude Opus 4.7 has higher accuracy in long and complex texts. GPT-5.4 is more suitable for short and quick content. For specialized and research articles, we recommend Claude.

9. Final Verdict: Which Model Wins?

After this comprehensive autopsy, it's time to answer the main question: which model wins? The simple answer: neither! Or better yet: both!

This isn't a war - it's a strategic choice. Claude Opus 4.7 and GPT-5.4 are each designed for specific tasks and are unmatched in those areas.

🏆 Tekin Garage Final Verdict

Choose Claude Opus 4.7 if:

- You're working on complex refactoring

- You need to analyze large codebases

- Code quality matters more than speed

- You can use prompt caching

- You need advanced tool use

- You're writing long technical documentation

Choose GPT-5.4 if:

- You have a limited budget

- You need rapid iterations

- You want to use native computer use

- You need image generation

- You're building a high-volume chatbot

- Speed matters more than absolute precision

💡 Golden Tip: At Tekin Garage, we use both. GPT-5.4 for speed, Claude Opus 4.7 for precision. This is the best strategy!

📚 Sources and References

Sources: Anthropic Official Documentation, OpenAI API Pricing, SWE-bench Verified Results, MCP-Atlas Benchmarks, GPQA Diamond, Independent Developer Surveys, Production Cost Analysis, Tekin Garage Real-World Testing

Research and Analysis: Tekin Editorial Team - April 2026

Content of this article is based on public information and real tests by the Tekin team. Prices and benchmarks may change.