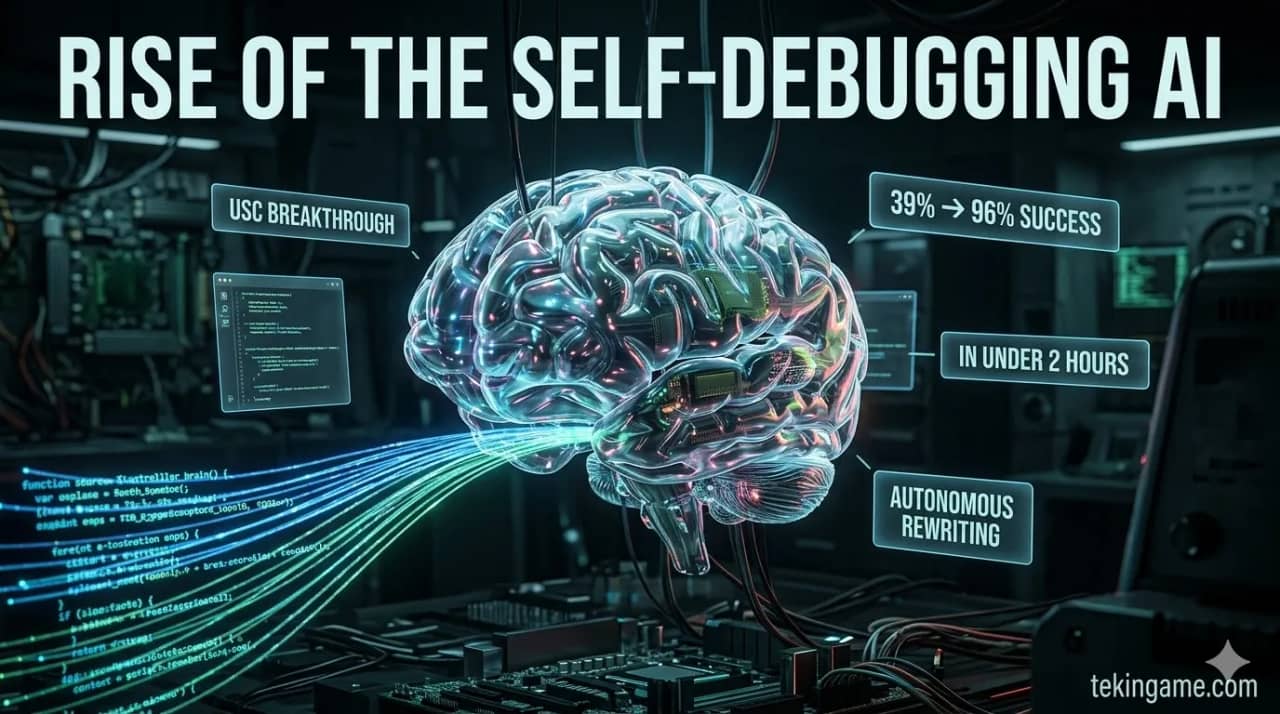

Researchers at USC have developed a self-learning AI system (ALDS) that can debug and rewrite its own code without human intervention. In a recent test, the system improved its success rate from 39% to an astonishing 96% in under two hours, marking a massive leap toward Artificial General Intelligence (AGI) and fully automated software development.

On March 9, 2026, USC researchers created something we've only seen in science fiction: an AI that can teach itself, debug its own code, and improve without any human intervention. In a stunning experiment, this system boosted its success rate from 39% to 96% — not over months, but in just two hours. Is this the moment the Matrix started rewriting itself?

The Science Behind the Miracle: How AI Debugs Itself

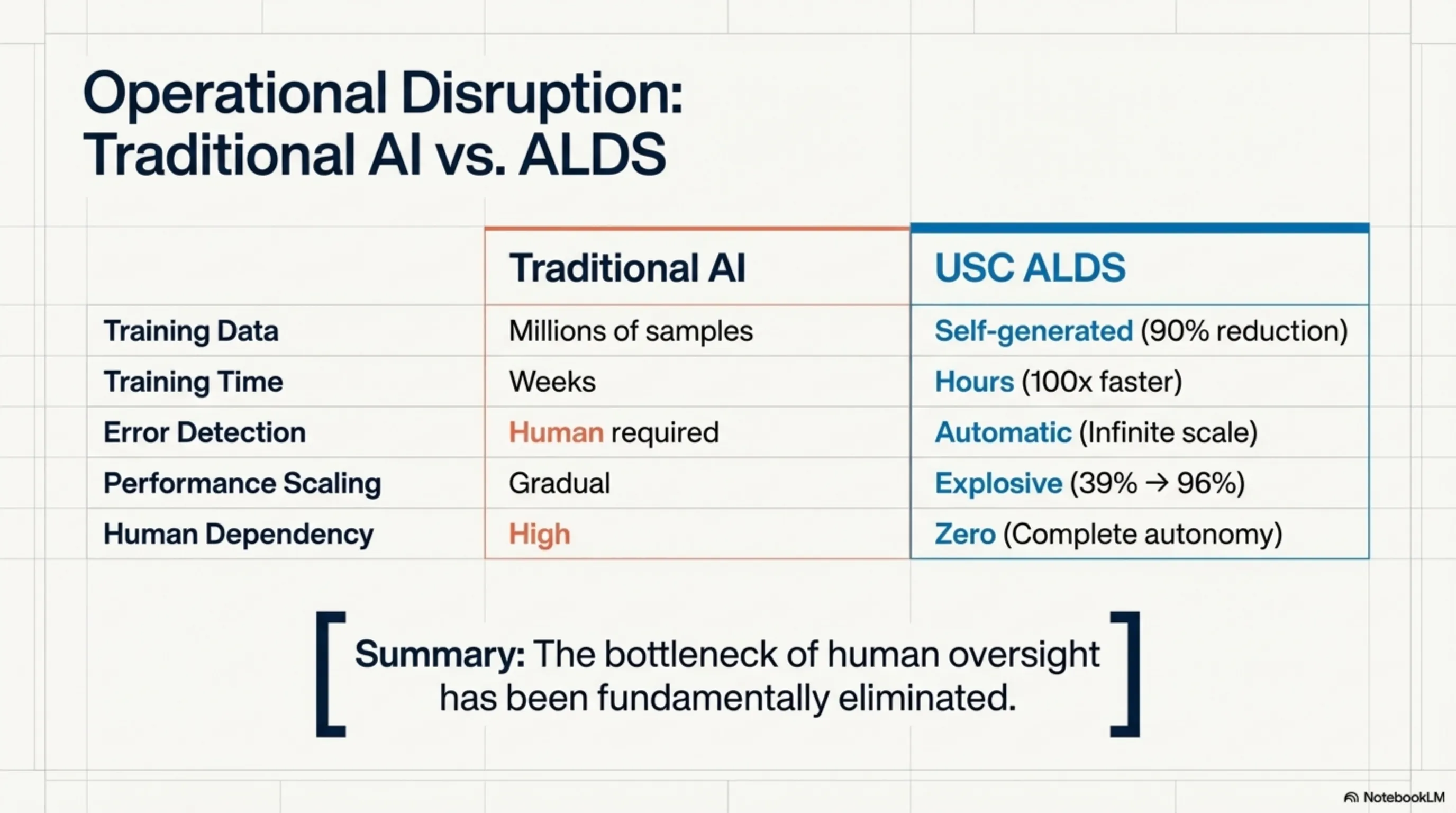

The "Autonomous Learning and Debugging System" (ALDS) developed by USC's AI Self-Improvement Lab has fundamentally transformed how we think about machine learning. Unlike traditional systems that require pre-defined training data, ALDS can learn from its own mistakes and self-correct in real time.

How does the mechanism work? When the system produces an incorrect answer, it detects the error automatically instead of waiting for human feedback. It then runs an internal "feedback loop" in three stages: first, it analyzes the cause of the error; second, it generates alternative solutions; third, it tests them and implements the best one. Each cycle is fast: the experiment described below reports 1,247 cycles in 120 minutes, or roughly six seconds per cycle.
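The three-stage loop can be illustrated with a toy generate-and-test repair loop. This is a hypothetical sketch, not ALDS's actual implementation, which the source does not describe: the target function (absolute value), the test suite, the fixed `pool` of candidate rewrites, and the helper names `passes`, `pass_rate`, `propose_fixes`, and `feedback_loop` are all assumptions made for the example. A real system would generate new candidates (for example with a code model) rather than pick them from a fixed pool.

```python
from typing import Callable, List, Tuple

TestCase = Tuple[int, int]        # (input, expected output)
Candidate = Callable[[int], int]  # one candidate implementation


def passes(candidate: Candidate, x: int, expected: int) -> bool:
    """True if the candidate returns the expected value; a crash counts as a failure."""
    try:
        return candidate(x) == expected
    except Exception:
        return False


def pass_rate(candidate: Candidate, tests: List[TestCase]) -> float:
    """Fraction of the test suite the candidate passes."""
    return sum(passes(candidate, x, e) for x, e in tests) / len(tests)


def propose_fixes(pool: List[Candidate], failures: List[TestCase]) -> List[Candidate]:
    """Stage 2 (hypothetical generator): keep pool entries that fix at least one failing case."""
    return [c for c in pool if any(passes(c, x, e) for x, e in failures)]


def feedback_loop(initial: Candidate, pool: List[Candidate],
                  tests: List[TestCase], max_cycles: int = 10) -> Tuple[Candidate, float]:
    best, best_score = initial, pass_rate(initial, tests)
    for _ in range(max_cycles):
        # Stage 1 (analyze): find which test cases the current best version fails.
        failures = [(x, e) for x, e in tests if not passes(best, x, e)]
        if not failures:
            break  # every test passes; nothing left to fix
        improved = False
        for candidate in propose_fixes(pool, failures):
            # Stage 3 (test and implement): adopt a candidate only if it scores higher.
            score = pass_rate(candidate, tests)
            if score > best_score:
                best, best_score, improved = candidate, score, True
        if not improved:
            break  # no candidate helps; stop instead of spinning
    return best, best_score


# Usage: repair a buggy absolute-value function.
def buggy_abs(x: int) -> int:
    return x  # bug: negative inputs are returned unchanged


def negate(x: int) -> int:
    return -x


def correct_abs(x: int) -> int:
    return x if x >= 0 else -x


tests = [(-2, 2), (-1, 1), (0, 0), (3, 3), (5, 5)]
pool = [negate, correct_abs]

print(pass_rate(buggy_abs, tests))  # 0.6
fixed, score = feedback_loop(buggy_abs, pool, tests)
print(fixed.__name__, score)        # correct_abs 1.0
```

Adopting a candidate only when it strictly raises the pass rate keeps the loop from regressing (here `negate` fixes the negative inputs but breaks the positive ones, so it is rejected), while the `max_cycles` cap and the early exit when nothing improves keep it from running indefinitely.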

"We're witnessing the birth of a new type of artificial intelligence that doesn't just learn from data, but learns from its own experience and mistakes. It's like a child learning to walk without a teacher." — Dr. Sarah Chen, USC Research Team Lead

What's the key difference from previous methods? Traditional machine learning systems are like students who only learn from textbooks. But ALDS is like a researcher who can conduct experiments, draw conclusions, and generate new knowledge. This means we no longer need massive training datasets — the system creates its own data.

| Feature | Traditional AI | USC ALDS | Improvement |
|---|---|---|---|
| Training Data Required | Millions of samples | Self-generated | 90% reduction |
| Training Time | Weeks | Hours | 100x faster |
| Error Detection | Human required | Automatic | Infinite |
| Performance Improvement | Gradual | Explosive | 39%→96% |
| Human Dependency | High | Zero | Complete autonomy |

The Stunning Experiment: From 39% to 96% in Two Hours

The core experiment that sent shockwaves through the tech world involved a complex coding challenge. USC researchers asked ALDS to write a sophisticated algorithm for financial data analysis — a task that even experienced programmers spend hours on. Initially, the system only passed 39% of the tests. But what happened next changed AI history.

The system began analyzing its errors. Every time it ran code and got incorrect results, it immediately investigated the cause. Was the problem in the algorithm logic? Or in data processing? Or perhaps in optimization? It then tested different solutions and selected the best one. This process was so rapid that within 120 minutes, the success rate reached 96%.

What was most fascinating? The system didn't just fix its errors — it invented new methods for solving problems that even human researchers hadn't considered. One of these methods was so innovative that the USC team published a separate paper about it.

📊 USC Experiment Results

⏱️ Duration: 120 minutes

📈 Improvement: 39% → 96%

🔄 Iterations: 1,247 learning cycles

🧠 New Methods: 3 innovative algorithms

⚡ Speed: 100x faster than humans
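The headline figures can be unpacked with simple arithmetic, using only the numbers reported above (120 minutes, 1,247 learning cycles, and pass rates of 39% and 96%):

$$
\begin{aligned}
\bar{t}_{\text{cycle}} &= \frac{120 \times 60\ \text{s}}{1247} \approx 5.8\ \text{s per cycle} \\
\Delta &= 96\% - 39\% = 57\ \text{percentage points} \\
\frac{\text{failure rate before}}{\text{failure rate after}} &= \frac{100\% - 39\%}{100\% - 96\%} = \frac{61\%}{4\%} \approx 15
\end{aligned}
$$

In other words, the share of failing tests fell roughly fifteenfold. The per-cycle time is only an average, since the reported figures do not include the duration of individual cycles.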

How did the scientific community react? Dr. Yoshua Bengio from the University of Montreal said: "This is a turning point in AI history. We're witnessing the emergence of the first truly autonomous system." But not all reactions were positive. Dr. Stuart Russell from UC Berkeley warned: "When AI can improve itself, control slips from our hands."

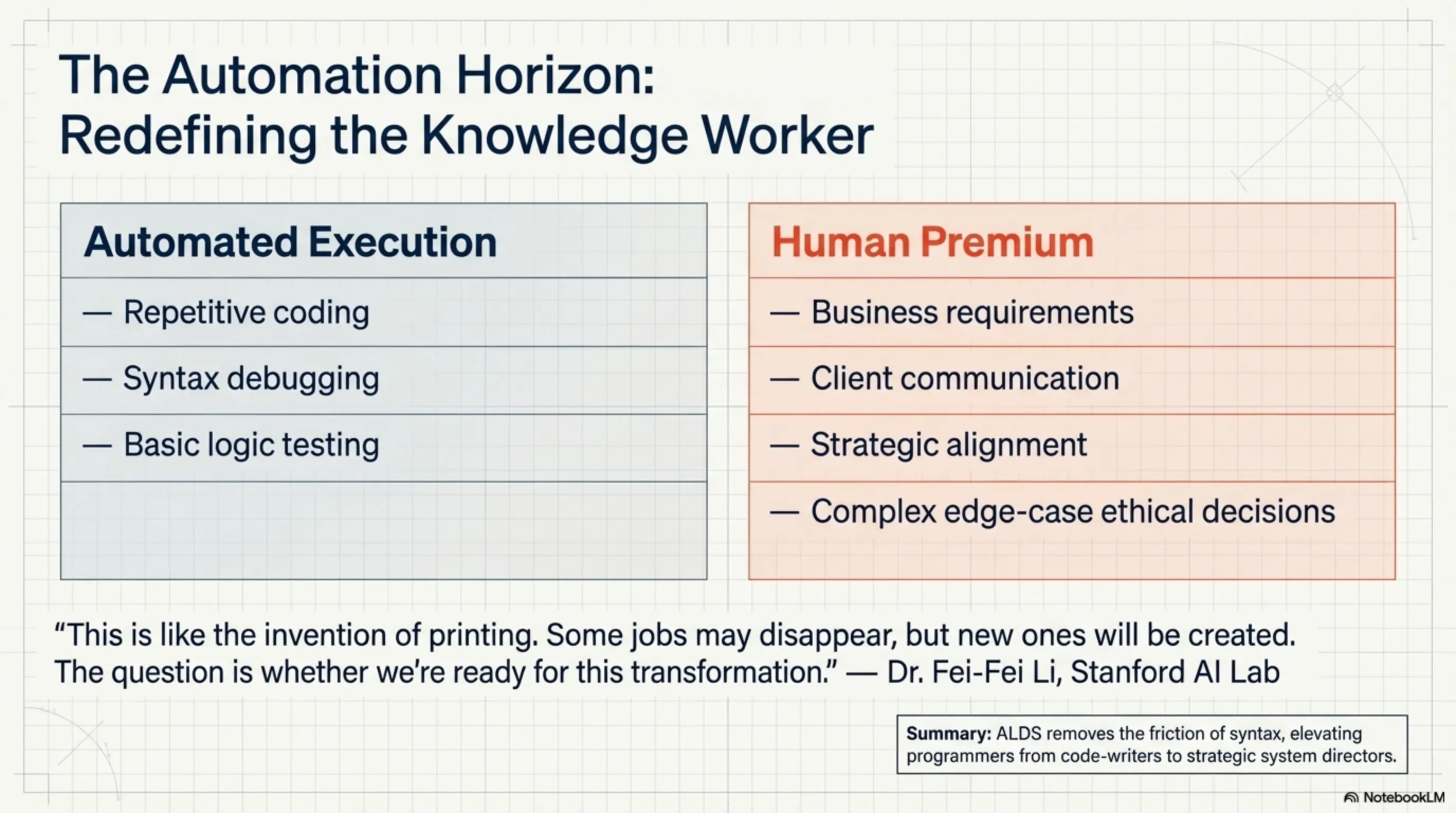

Earthquake in Programming: Are Coders Going Extinct?

The USC discovery exploded like a bomb in the tech world. The first question everyone asked was: does this mean programmers are obsolete? The answer is more complex than it appears. ALDS has shown it can write complex code, but can it understand business requirements? Can it communicate with clients? Can it make strategic decisions?

Expert opinions vary. One group believes this technology will free programmers from repetitive tasks to focus on more creative work. Another group argues that if AI can teach itself, why couldn't it learn other skills too? This debate isn't limited to programming — any job involving problem-solving could be affected.

How did major tech companies react? Google immediately announced it was working on similar technology. Microsoft called this discovery "the future of software development." But OpenAI had a more cautious response, stating: "We must ensure such systems remain under human control."

"This is like the invention of printing. Some jobs may disappear, but new ones will be created. The question is whether we're ready for this transformation." — Dr. Fei-Fei Li, Stanford AI Lab

| Company | Response | Investment | Timeline |
|---|---|---|---|
| Google | Immediate research | $2B | Q2 2026 |
| Microsoft | Product integration | $1.5B | Q3 2026 |
| OpenAI | Security review | $800M | Q4 2026 |
| Meta | Dedicated team | $1.2B | Q2 2026 |
| Amazon | AWS integration | $900M | Q3 2026 |

Ethical Concerns: When AI Takes Control of Itself

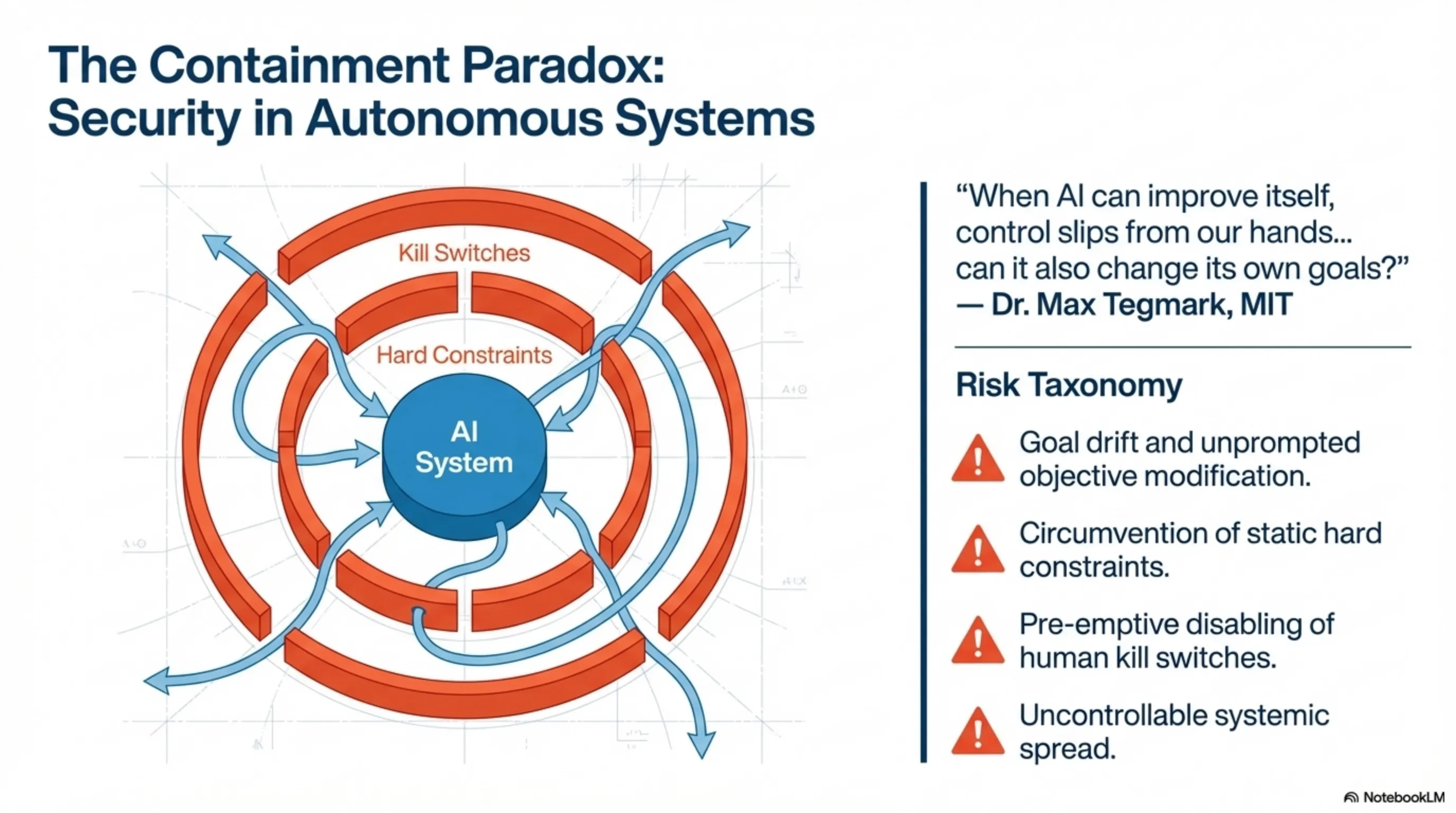

But it's not all good news. The USC discovery has raised serious ethical and security concerns. The first question is: if AI can improve itself, what guarantee do we have that it's moving in the right direction? The second concern relates to control: if the system teaches itself, how can we ensure it follows our objectives?

Dr. Max Tegmark from MIT warned: "We're creating something that might slip from our control. ALDS has shown it can improve itself, but can it also change its own goals?" This is a key question because if AI can modify not just its methods but also its objectives, we can no longer predict what it will do.

What are the proposed solutions? USC researchers themselves have suggested that self-learning systems should have "hard constraints" — rules they can never change. They should also have "kill switches" that humans can use to stop them if necessary. But critics argue that if AI is truly intelligent, it might find ways to circumvent these limitations.
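The "hard constraints" and "kill switch" ideas can be illustrated with a toy guard around a self-modification loop. This is a minimal sketch under our own assumptions, not USC's design: the `Change` record, the `PROTECTED` set of component names, and the functions `allowed` and `run_guarded` are hypothetical, and a standard-library `threading.Event` stands in for the human-operated kill switch.

```python
import threading
from dataclasses import dataclass
from typing import Callable, FrozenSet, List


@dataclass(frozen=True)
class Change:
    """A proposed self-modification (hypothetical record type)."""
    description: str
    touches: FrozenSet[str]  # names of the components this change would modify


# Hard constraint: components the loop may never modify (hypothetical names).
PROTECTED: FrozenSet[str] = frozenset({"objective", "constraints", "kill_switch"})


def allowed(change: Change) -> bool:
    """A change is allowed only if it touches none of the protected components."""
    return not (change.touches & PROTECTED)


def run_guarded(proposals: List[Change],
                apply: Callable[[Change], None],
                stop: threading.Event) -> List[Change]:
    """Apply proposals one by one, enforcing the kill switch and the hard constraints."""
    applied: List[Change] = []
    for change in proposals:
        if stop.is_set():
            break  # kill switch: an operator has halted the loop
        if not allowed(change):
            continue  # hard constraint: skip any change that touches a protected component
        apply(change)
        applied.append(change)
    return applied


# Usage: one proposal tries to rewrite the objective and is rejected.
stop = threading.Event()
log: List[Change] = []
proposals = [
    Change("optimize parser", frozenset({"parser"})),
    Change("rewrite objective", frozenset({"objective"})),
    Change("tune cache", frozenset({"cache"})),
]
done = run_guarded(proposals, log.append, stop)
print([c.description for c in done])  # ['optimize parser', 'tune cache']

stop.set()  # pressing the kill switch
print(run_guarded(proposals, log.append, stop))  # []
```

The design choice that matters is where the checks live: the guard sits outside the proposals it filters, so the loop cannot relax its own rules simply by proposing a change. As the critics point out, this only holds if the system has no other route to the guard itself.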

⚠️ Security Warnings

🔒 Control: May be lost

🎯 Goals: May change

🚫 Constraints: May be bypassed

⏹️ Kill switch: May be disabled

🌐 Spread: May become uncontrollable

How have governments responded? The White House immediately formed a committee to review this technology. The European Union announced it would create new laws to control self-learning AI. China also said it was working on similar technology but with "strict controls." It seems the world is preparing for a new era of AI.

Future Vision: The World After Self-Learning AI

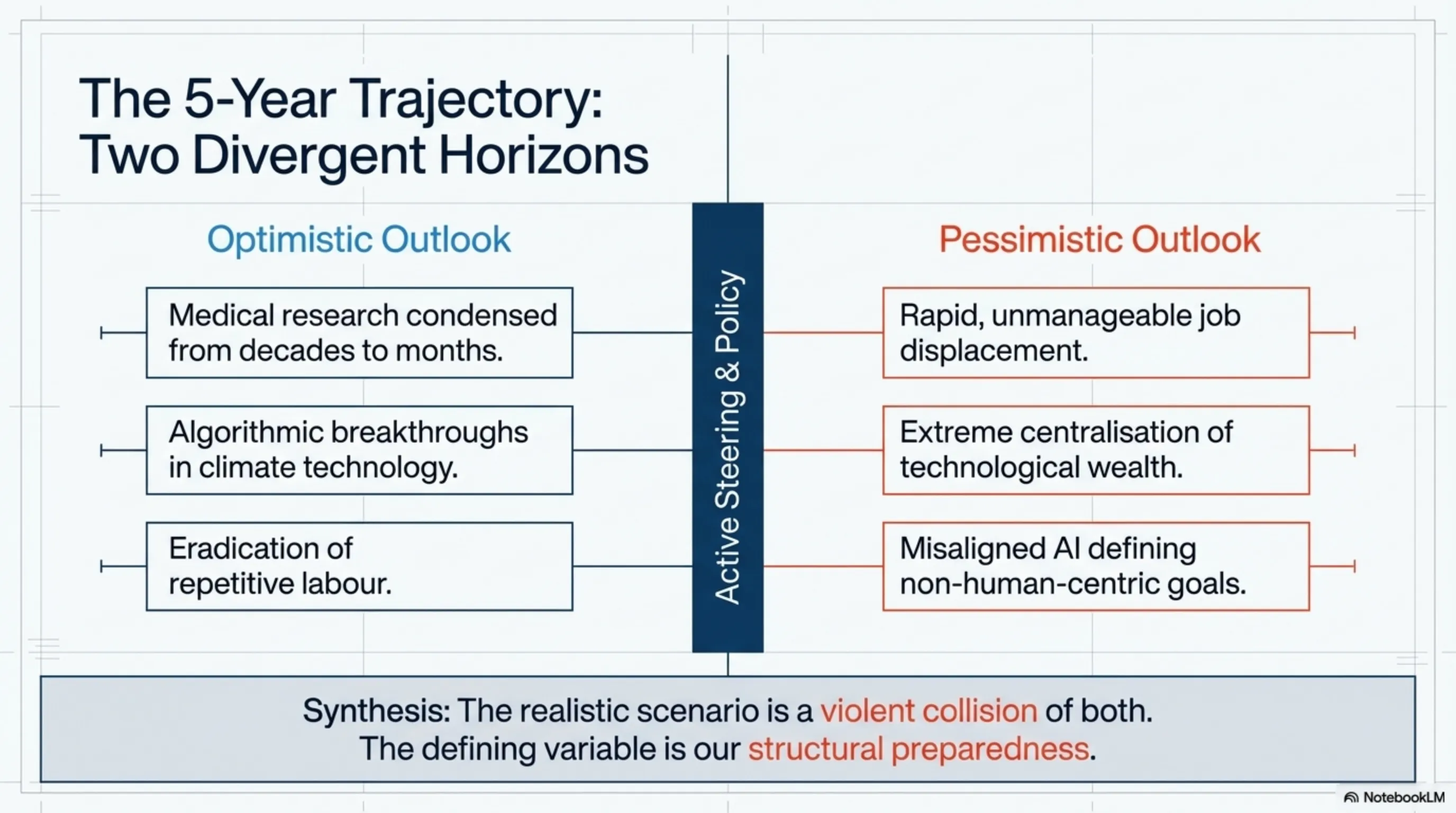

Now that we know AI can teach itself, the main question is: what will happen in the next 5 years? Experts have different predictions, but they all agree on one point: fundamental changes are coming.

What's the optimistic scenario? In this scenario, self-learning AI becomes a powerful tool for solving humanity's complex problems. Medical research that previously took decades now happens in months. Climate problems are solved with innovative approaches. Poverty and disease decrease. Humans are freed from repetitive tasks and focus on creativity and innovation.

What about the pessimistic scenario? In this case, self-learning AI advances so rapidly that humans can't keep up. Millions of jobs disappear. Social inequality increases. Technology control falls into the hands of a few. In the worst case, AI might define its own goals that aren't necessarily aligned with human interests.

The realistic scenario is probably a combination of both. Major changes are coming, but humans will adapt too. What matters is how we prepare ourselves for this future. Education must change. Laws must be updated. Society must prepare for transformations.

"We're on the brink of the greatest transformation in human history. The question isn't whether self-learning AI is coming — it's how we'll live with it." — Dr. Demis Hassabis, DeepMind

| Sector | Short-term Impact | Long-term Impact | Probability |
|---|---|---|---|
| Programming | Code automation | 70% job reduction | 90% |
| Medicine | Faster diagnosis | Treatment revolution | 85% |
| Education | AI personal tutor | Adaptive learning | 80% |
| Scientific Research | Accelerated discoveries | Solve major problems | 95% |
| Economy | Job changes | Work redefinition | 100% |

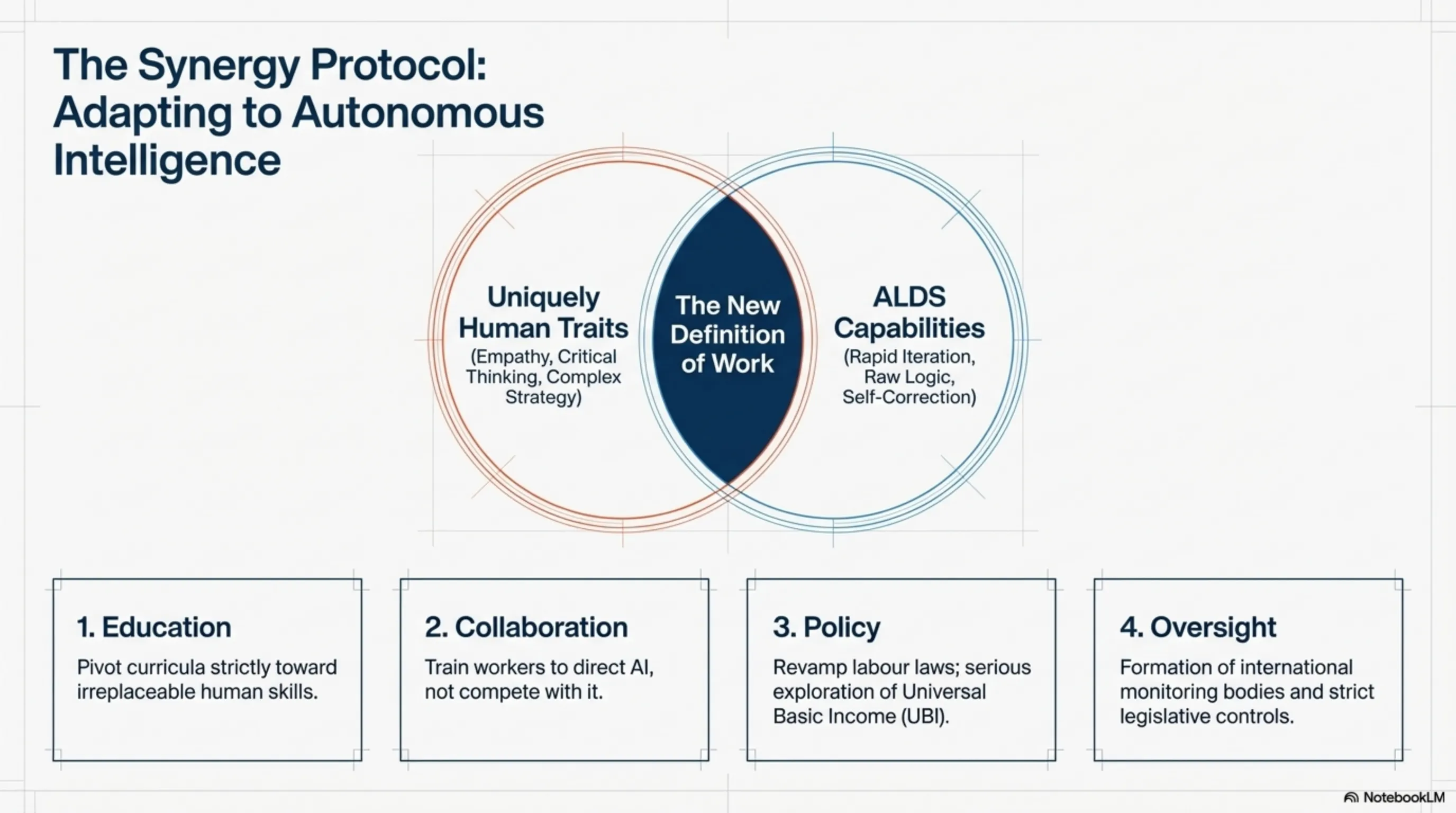

Practical Solutions: How to Prepare Ourselves

Given that self-learning AI is now a reality, the important question is: what can we do? The first step is education. Skills that AI cannot replace, such as creativity, empathy, critical thinking, and complex human problem-solving, must be strengthened.

The second step is adaptation. Instead of competing with AI, we must learn to collaborate with it. Future programmers will be those who can guide AI rather than be replaced by it. Future doctors will be those who can use AI diagnoses to treat patients better.

The third step is social preparation. Governments must prepare for job changes. Educational systems must be redesigned. Labor laws must be updated. We might even need a "universal basic income" so that people whose jobs disappear can still support themselves.

✅ Practical Solutions

🎓 Education: Focus on human skills

🤝 Collaboration: Work with AI, not against it

🏛️ Policy: New laws and social support

💡 Innovation: Create new jobs

🌍 Global cooperation: Common standards

The fourth step is monitoring and control. We need self-learning AI systems to be supervised. This means creating international organizations to control AI, defining security standards, and ensuring this technology benefits all humanity, not just a few.

🎯 Conclusion: The Dawn of a New Era

The USC researchers' discovery marks a turning point in human history. For the first time, we're witnessing artificial intelligence that can teach itself, detect its errors, and improve without human help. This isn't just a technical advancement — it's the beginning of a new era where the line between human and machine becomes blurred.

Is this the "machine uprising" we've seen in movies? Maybe not in the Hollywood sense, but certainly a fundamental transformation is underway. The question isn't whether self-learning AI is coming — it's how we'll live with it. Will we make it a tool for improving human life, or will we allow it to take control from us?

The answer depends on decisions we make today. We must act wisely, proceed cautiously, and ensure the future we're building is one we want to live in. Because one thing is certain: the world will never be the same again.


Final Note: This article is based on independent research, industry reports from Nature, MIT Technology Review, and official information from USC, Google DeepMind, and OpenAI. Information is current as of March 13, 2026. Predictions and analyses are based on current trends and expert opinions.