Tekin Garage enters the matrix of Everyday Smart Glasses. In this hardcore autopsy, we look past the marketing hype to put the Ray-Ban Meta's camera quality, multimodal AI logic, and thermal limitations under the X-ray against fresh, powerful competitors. Is paying premium prices for these wearables logically justified, or are we still serving as beta testers for Silicon Valley? Join the Tekin Legion as we debug the ultimate cybernetic war between classic fashion and new hardware assassins.

Greetings to the invincible Tekin Legion! Today in the Garage, we are placing a case file on the autopsy table that permanently blurs the line between physical reality and the cybernetic world. For years, tech giants have tried to force massive, heavy, and exhausting VR/AR headsets onto our heads as the "future of computing." But in 2026, the matrix has shifted its phase. We have officially entered the era of "Everyday Smart Glasses." These are gadgets that look exactly like classic streetwear fashion, yet conceal multimodal AI, ultra-wide cameras, and spatial audio systems within the microscopic layers of their plastic frames. While our overly kind rival, Claude, tries to soften this tech shift by sending us virtual cupcakes and calling these gadgets "fun accessories," we at Tekin Garage do not sugarcoat hardware. Get ready for a ruthless, hardcore debugging session to see if the current reigning champion—the Ray-Ban Meta—truly justifies its price tag or if it has already been hacked and rendered obsolete by fresh, aggressive assassins from the East.

The Death of Bulky Headsets and the Dawn of Invisible Form Factors

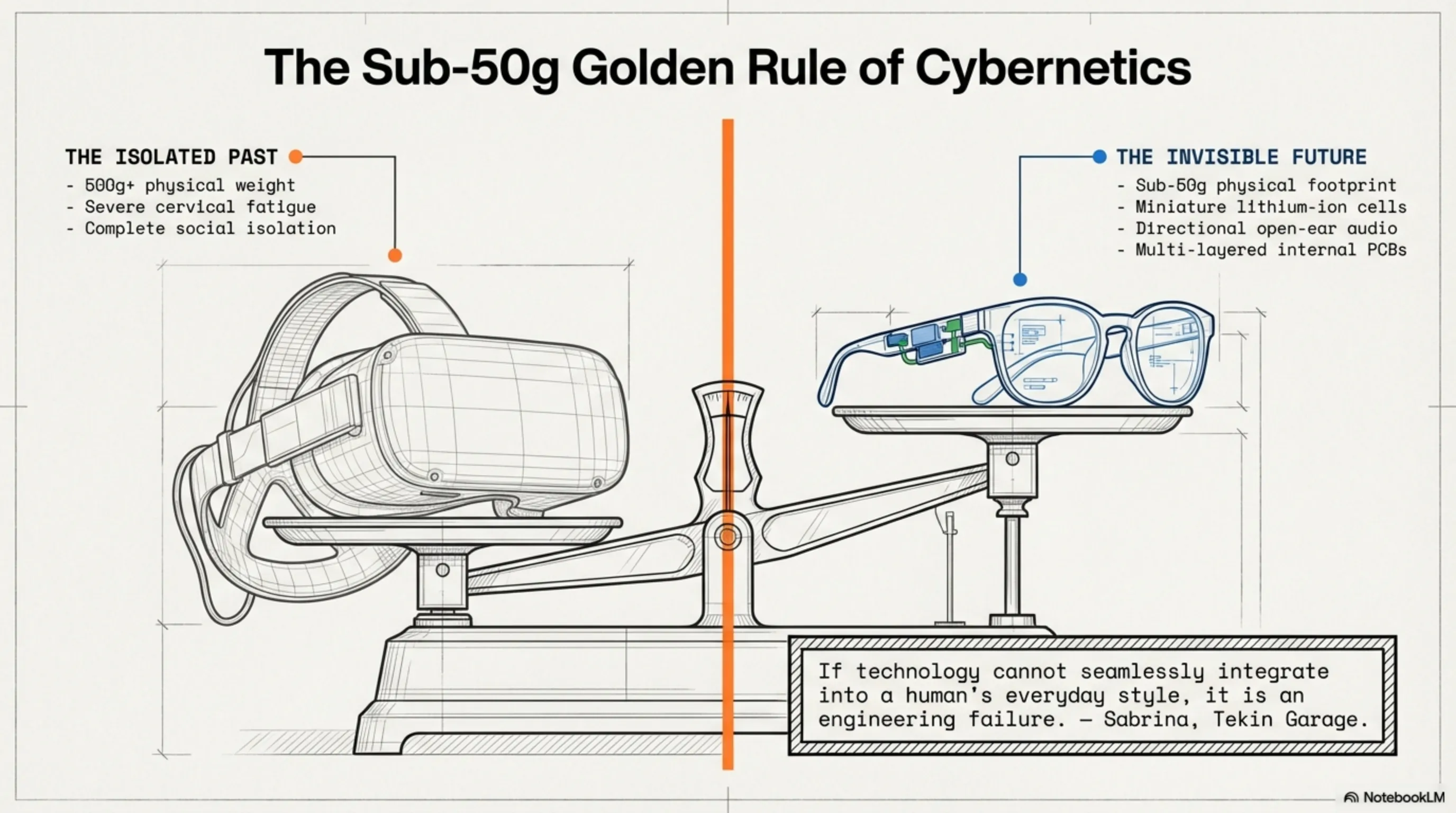

To truly understand the critical importance of today's smart glasses, we must deconstruct their design philosophy. Sabrina, the ever-present soul and inspiration behind our Garage projects, frequently emphasizes a golden rule regarding wearables: "If technology cannot seamlessly integrate into a human's everyday style, it is an engineering failure." This is precisely the operational node where smart glasses like the Ray-Ban Meta shine the brightest. While heavy-duty headsets like the Apple Vision Pro and Meta Quest 3 boast terrifying computational power, they socially isolate you from the physical world, and their massive weight inevitably leads to cervical fatigue. The new generation of everyday glasses, however, focuses entirely on the concept of "Invisibility."

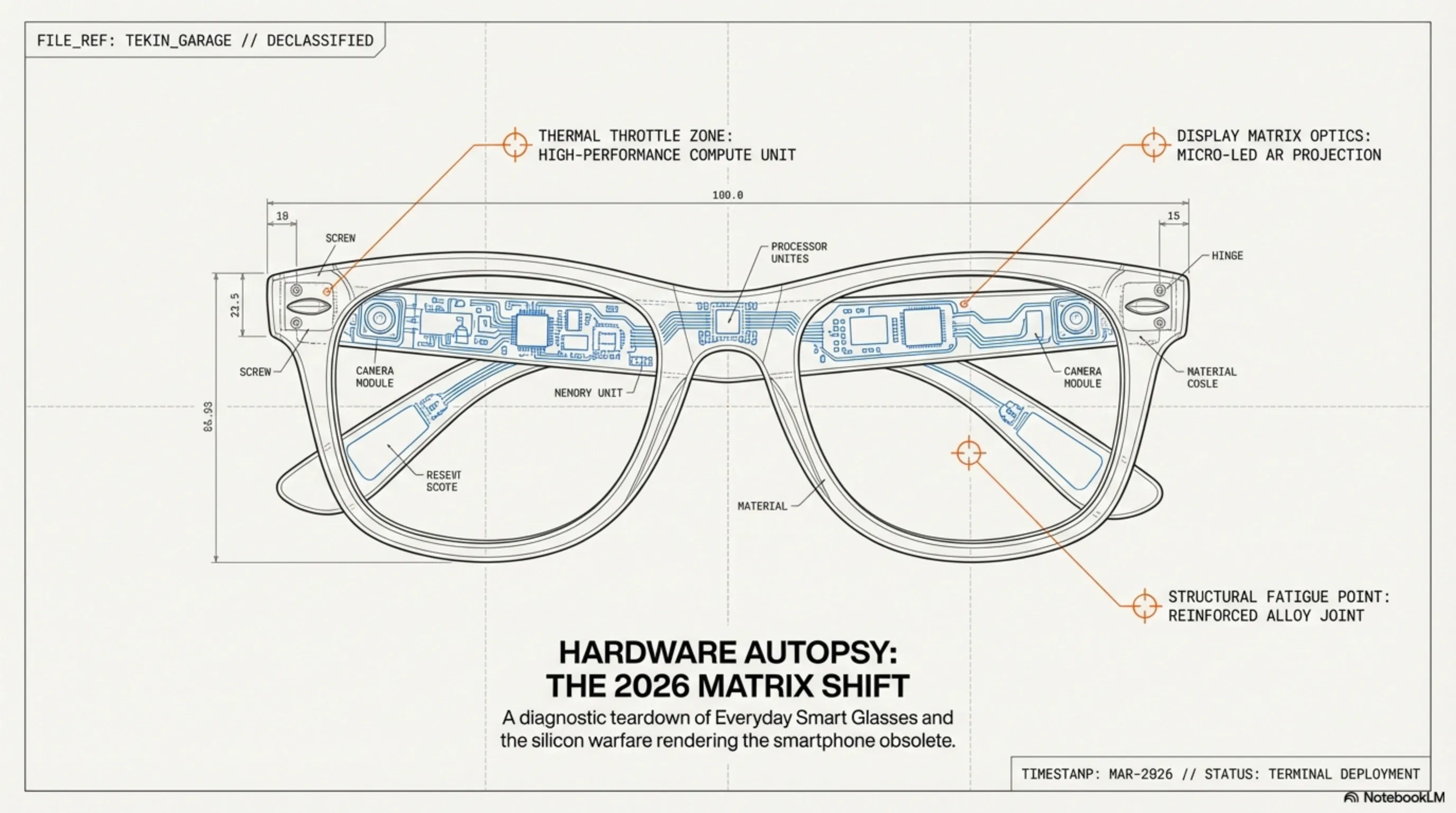

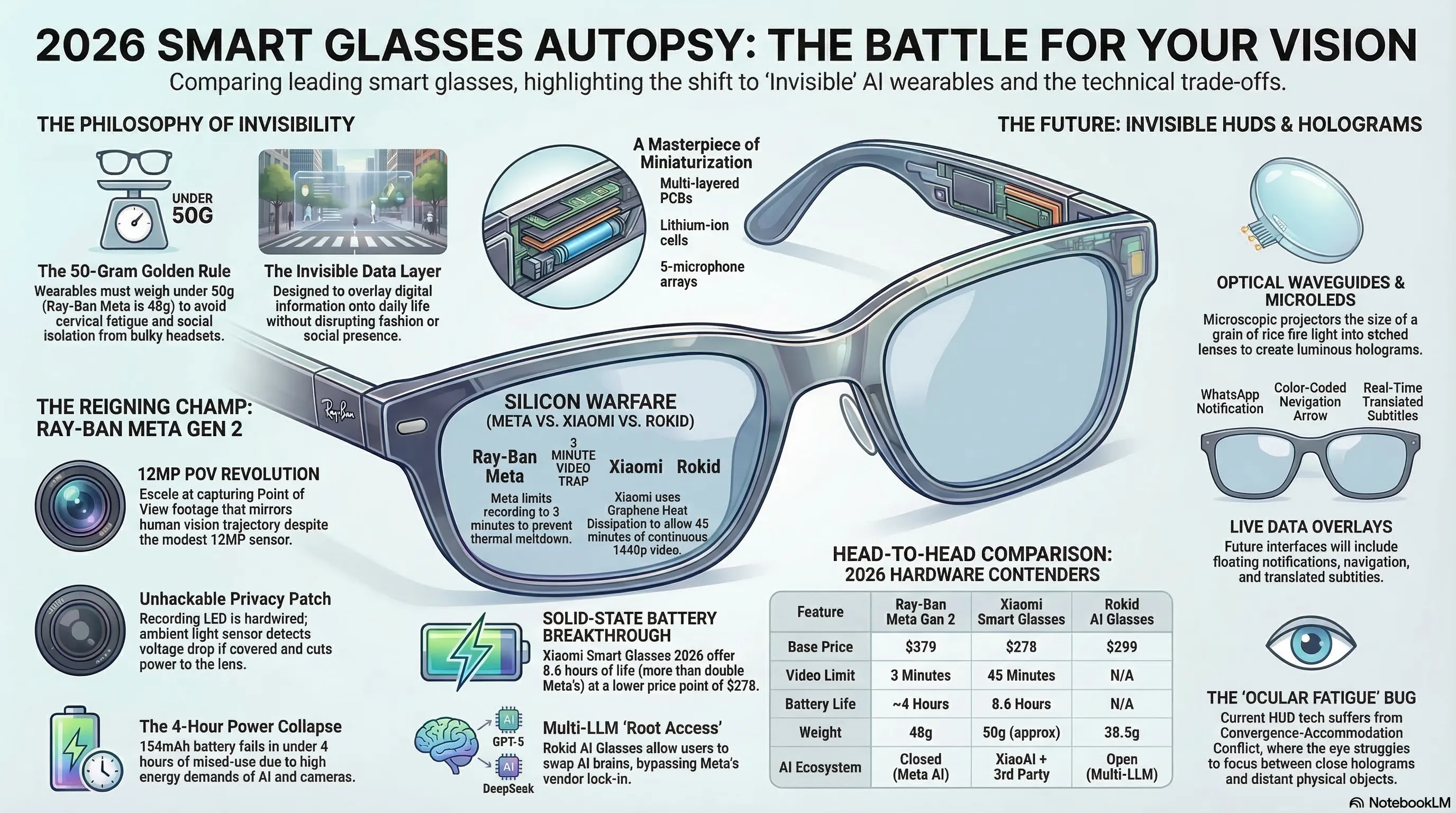

These gadgets are not designed to replace your primary 4K workstation monitors; they are engineered to overlay an "invisible data layer" onto your daily life. The physical footprint of these glasses is strictly calibrated to remain under 50 grams (the Ray-Ban Meta weighs roughly 48 grams). Hardware engineers have pulled off a packaging miracle, successfully embedding miniature lithium-ion cells, multi-layered PCBs, directional open-ear speakers, and a five-microphone noise-canceling array into temples that are barely a few millimeters thicker than a standard pair of classic Wayfarers. It is an absolute masterpiece of miniaturization in modern electronic engineering.

Ray-Ban Meta Autopsy: The King of Style Trapped in Limitations

When it comes to dominating the current market matrix, the Ray-Ban Meta Gen 2 holds the silicon throne. Mark Zuckerberg executed a flawless strategic maneuver: instead of forcing Meta's engineers to design an ugly plastic frame from scratch, he allied with the global eyewear titan EssilorLuxottica. The result of this partnership is a device that functions primarily as a premium, fashionable pair of sunglasses (or prescription glasses) and secondarily as a wearable gadget. But let's strip away the marketing illusions and debug the source code of this device right here on our workbenches.

Sensor Debugging: The 12MP Camera and the POV Revolution

Nestled discreetly in the corner of the frame is a 12-megapixel ultra-wide camera sensor. On paper, 12MP might sound laughably outdated by 2026 standards, but the Image Signal Processor (ISP) inside these glasses performs absolute magic. This camera is heavily calibrated for recording POV (Point of View) videos. When you are cooking, driving, or standing in the middle of a crowded concert, the resulting video mirrors your own line of sight, capturing the natural micro-jitters of your head and your exact field of view.

Meta has injected a highly aggressive Electronic Image Stabilization (EIS) algorithm to filter out sudden, nauseating head movements. Furthermore, to address severe privacy concerns, a high-luminance LED is positioned right next to the lens and lights up the instant a recording starts. Our teardown reveals a hardcore hardware-level security patch here: the LED circuit is physically hardwired to the camera's power delivery. If a user attempts to spy on people by covering the LED with black tape, the adjacent ambient light sensor detects the sudden drop in luminance and the camera immediately loses power. It's a brilliant, hard-to-bypass method to prevent stealth recording in public spaces.
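
For Legion members who think in code, the interlock described above can be sketched in a few lines of Python. This is only a minimal illustration of the logic, not Meta's firmware: the `PrivacySensorReading` type, its field names, and the `MIN_VISIBLE_LEVEL` threshold are all hypothetical values we made up for the example.

```python
from dataclasses import dataclass


@dataclass
class PrivacySensorReading:
    """Hypothetical snapshot of the privacy-LED circuit (illustrative names)."""
    led_commanded_on: bool   # firmware has asked the LED to light up
    led_light_level: float   # light seen by the adjacent sensor, 0.0 to 1.0


# Assumed threshold for "the LED is visibly shining"; not a measured value.
MIN_VISIBLE_LEVEL = 0.4


def camera_power_allowed(reading: PrivacySensorReading) -> bool:
    """Allow camera power only while the LED is on and not covered."""
    if not reading.led_commanded_on:
        return False
    return reading.led_light_level >= MIN_VISIBLE_LEVEL


if __name__ == "__main__":
    print(camera_power_allowed(PrivacySensorReading(True, 0.9)))   # LED visible
    print(camera_power_allowed(PrivacySensorReading(True, 0.05)))  # LED taped over
```

The design point is that the check gates power rather than just raising a warning, so a covered LED means no footage at all.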

🔬 Hardware Debug Table: Ray-Ban Meta Gen 2

| Component / Sensor | Matrix Specifications | Tekin Inspector's Analysis |
|---|---|---|
| Processor (SoC) | Qualcomm Snapdragon AR1 Gen 1 | Highly capable but prone to severe thermal throttling under continuous AI loads. |
| Camera | 12 MP (1440x1920 portrait resolution) | Heavily optimized for social media algorithms; suffers heavily in low-light environments. |
| Audio Array | Custom open-ear speakers + 5 Mics | Double the bass response of Gen 1; excellent sound isolation with minimal audio leakage. |
| Internal Storage | 32 GB (Non-expandable) | A critical bottleneck for vloggers who record heavy volumes of daily footage. |

The Silicon Brain and Multimodal AI: Constant Assistant or Surveillance Tool?

However, the camera is merely the sensory input; the true beating heart of the Ray-Ban Meta is the Snapdragon AR1 Gen 1 chipset. This silicon architecture was custom-forged specifically for smart glasses to strike a delicate, logical balance between battery preservation and AI computational power. Through recent over-the-air firmware updates, Meta activated Multimodal AI capabilities on this frame. This upgrade means that Meta AI can now "see" the physical world through the camera lens and run real-time logic analysis on your surroundings.

You can stare at a dense French restaurant menu and command: "Hey Meta, translate this menu and tell me which dishes are strictly gluten-free." The AI scans the visual data in fractions of a second, processes it through cloud-based neural networks, and whispers the precise answer directly into your ear. Alternatively, you can look at an exotic plant on the street and demand a botanical classification. This singular feature upgrades the glasses from a mere wearable camera into a mobile intelligence terminal. Yet, this power comes at a severe physical cost. Continuous visual processing and an always-active uplink to Meta's servers place an immense processing load on the AR1 chip. Our thermal autopsy in the Garage reveals that after just 15 minutes of uninterrupted Multimodal AI and camera usage, the right temple of the frame (housing the primary motherboard) experiences a significant temperature spike, forcing the system into aggressive thermal throttling to prevent hardware meltdown.

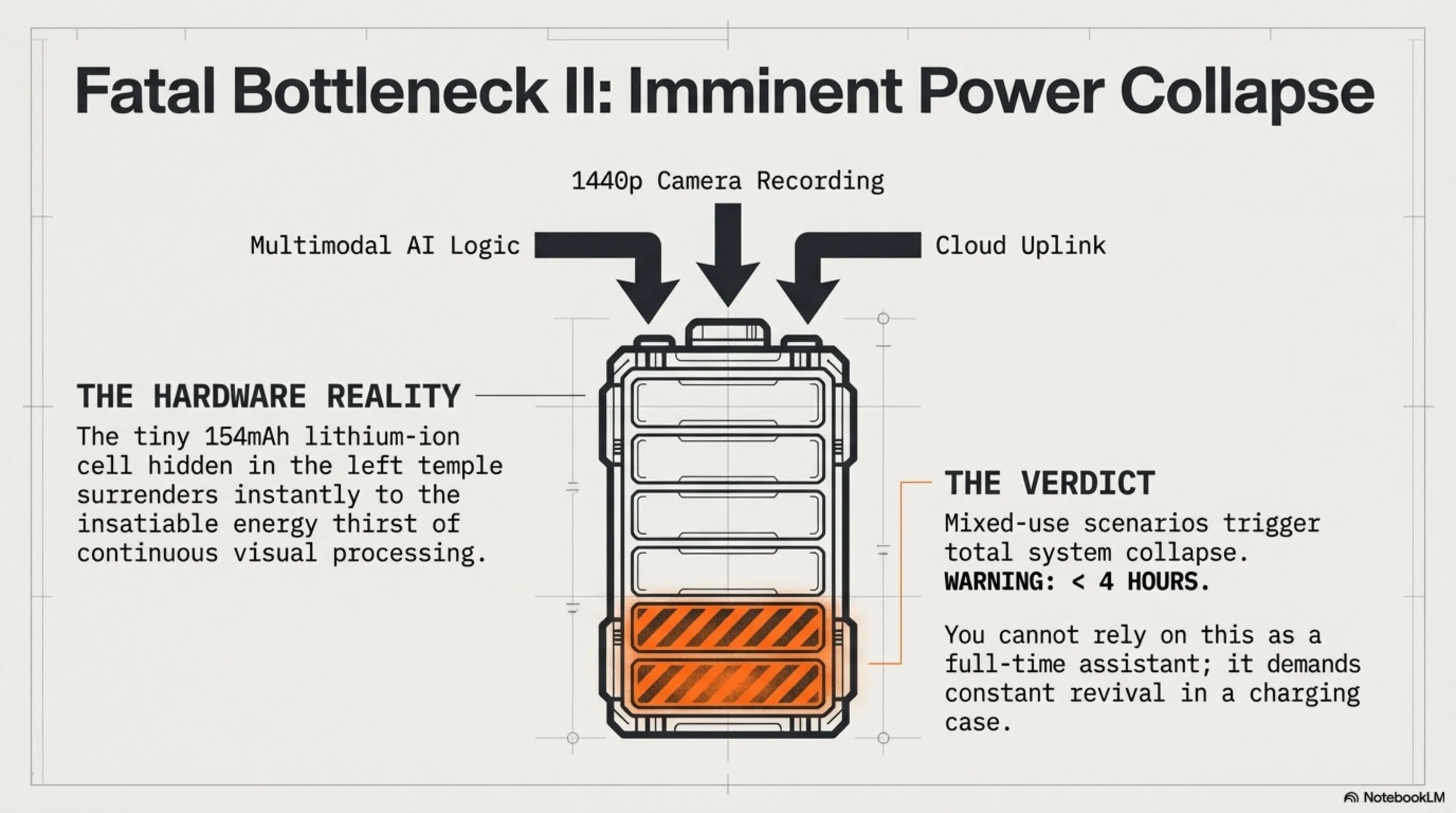

Fatal Bottlenecks: Why Battery and 3-Minute Limits Ruin the Cybernetic Dream

If the autopsy thus far led you to believe the Ray-Ban Meta is a flawless piece of cybernetic hardware, it is time to face the brutal reality of physics. The most glaring logical bug in the architecture of these glasses is their bizarre restriction on video recording. With this $379 gadget, each press of the shutter button only allows you to record a maximum of 3 continuous minutes of video! Yes, you heard that correctly. For a content creator, vlogger, or journalist who requires uninterrupted POV footage of a live event, street interview, or extended action sequence, this hard-coded limitation effectively downgrades the glasses from a professional tool to a casual Instagram toy. But why did Zuckerberg's engineers lock such a ridiculous restriction into the system's core?

Our hardware debugging in the Tekin Garage isolates two primary culprits. First, Thermal Throttling. Processing 1440p video at 60 frames per second, applying aggressive Electronic Image Stabilization (EIS), and running hardware-level video encoding together cause the AR1 chip to run dangerously hot. Because this silicon rests inside a plastic temple directly in contact with the user's skin, Meta implemented this 3-minute timer as an "emergency thermal brake" to prevent first-degree burns and hardware degradation. Second, Cache and Storage Bottlenecks. The internal 32GB storage module operates at lower write speeds than modern NVMe drives. To prevent buffer overflows and ensure smooth file saving without frame drops, the operating system simply denies the creation of massive, monolithic video files.
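
To make the "emergency thermal brake" idea concrete, here is a minimal Python sketch of a recording governor that stops a clip when either the 3-minute cap or a temperature ceiling is hit. Only the 180-second cap comes from the autopsy above; `ASSUMED_TEMP_LIMIT_C`, the function names, and the one-reading-per-second model are our own assumptions, not anything documented by Meta.

```python
from typing import Sequence

MAX_CLIP_SECONDS = 180        # the 3-minute cap discussed in this article
ASSUMED_TEMP_LIMIT_C = 43.0   # hypothetical skin-contact ceiling, not from Meta


def should_stop_recording(elapsed_s: float, temple_temp_c: float) -> bool:
    """Stop when the clip timer expires or the temple gets too hot."""
    return elapsed_s >= MAX_CLIP_SECONDS or temple_temp_c >= ASSUMED_TEMP_LIMIT_C


def simulate_clip(temps_per_second: Sequence[float]) -> int:
    """Return how many seconds get recorded, given one temperature reading per second."""
    for elapsed, temp in enumerate(temps_per_second):
        if should_stop_recording(elapsed, temp):
            return elapsed
    return len(temps_per_second)


if __name__ == "__main__":
    cool_run = [35.0] * 300                 # stays cool, so the timer ends the clip
    hot_run = [35.0] * 60 + [44.0] * 10     # overheats after one minute
    print(simulate_clip(cool_run), simulate_clip(hot_run))
```

In this toy model the timer is the blunt instrument: even a perfectly cool frame is cut off at 180 seconds, which is exactly the behavior vloggers complain about.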

The subsequent disaster in Meta's matrix is the battery life. The 154mAh lithium-ion cell (hidden in the left temple) surrenders rapidly against the insatiable energy thirst of the camera and the Multimodal AI. If you utilize the voice assistant, real-time translation, and camera in a mixed-use scenario, your system will experience a total power collapse in under 4 hours. This means you cannot rely on the Ray-Ban Meta as a "full-time" cybernetic assistant throughout a standard workday; you must constantly dock them in their charging case to revive the dead cells. For a device carrying the "Everyday" moniker, this is an unforgivable design flaw.
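
A quick back-of-the-envelope check shows what those numbers imply. The sketch below uses only the 154mAh capacity and the 4-hour mixed-use figure quoted above; the helper names and the 8-hour workday target are our own illustration, and the math ignores real-world effects such as voltage sag and conversion losses.

```python
def average_draw_ma(capacity_mah: float, runtime_h: float) -> float:
    """Average current that drains the given capacity in the given time."""
    return capacity_mah / runtime_h


def runtime_hours(capacity_mah: float, draw_ma: float) -> float:
    """Idealized runtime for a cell at a constant current draw."""
    return capacity_mah / draw_ma


if __name__ == "__main__":
    draw = average_draw_ma(154, 4)        # 38.5 mA average in mixed use
    workday_budget = average_draw_ma(154, 8)  # 19.25 mA to survive 8 hours
    print(f"mixed-use draw: {draw} mA, 8-hour budget: {workday_budget} mA")
```

In other words, under these simplified assumptions the glasses would need to halve their average power draw to last a standard workday on the same cell.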

Competitor Debug: How Chinese Assassins Hacked the Matrix

While Silicon Valley engineers were bogged down attempting to patch their thermal limitations, the hardware labs in Shenzhen, China, did not remain idle. They meticulously analyzed the bottlenecks of the Ray-Ban Meta and, in early 2026, deployed two ruthless hardware assassins into the matrix that completely redefine the concept of Value for Money. The first is the Xiaomi Smart Glasses 2026, which launched with an aggressive price tag of $278, mathematically calibrated to destroy Meta's sales dominance.

Xiaomi successfully hacked the battery matrix. By integrating next-generation Solid-State Micro-batteries, the company drastically increased the energy density without expanding the physical thickness of the frame's temples. The result? Xiaomi's glasses deliver 8.6 hours of continuous mixed usage, more than double the roughly 4 hours of the Ray-Ban Meta! But Xiaomi's fatal strike to the King of Style is in the camera department. By designing an internal Graphene Heat Dissipation system within the frame, Xiaomi shattered the absurd 3-minute restriction. With Xiaomi's glasses, you can record 45 minutes of continuous 1440p video. This single architectural upgrade transforms the Xiaomi glasses into the ultimate primary weapon for vloggers and IRL (In Real Life) streamers.

📊 Silicon Warfare: Meta vs. Xiaomi (2026)

| Vital Parameter | Ray-Ban Meta Gen 2 | Xiaomi Smart Glasses |
|---|---|---|
| Video Recording Limit | 3 Minutes (Thermal/Software Lock) | 45 Minutes Continuous |
| Battery Life (Mixed Use) | ~4 Hours | 8.6 Hours (Solid-State Cells) |
| AI Assistant | Strict Meta AI Monopoly | XiaoAI + 3rd Party Plugin Support |
| Base Price (Global) | $379 | $278 (Heavy Competitive Advantage) |
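
For those who prefer ratios to adjectives, the table above can be reduced to three numbers. The short Python sketch below uses only the figures from the table; the dictionary layout and helper names are ours and purely illustrative.

```python
# Figures copied from the comparison table above.
SPECS = {
    "Ray-Ban Meta Gen 2": {"record_min": 3, "battery_h": 4.0, "price_usd": 379},
    "Xiaomi Smart Glasses": {"record_min": 45, "battery_h": 8.6, "price_usd": 278},
}


def ratio(metric: str) -> float:
    """How many times larger Xiaomi's value is than Meta's for a metric."""
    meta = SPECS["Ray-Ban Meta Gen 2"][metric]
    xiaomi = SPECS["Xiaomi Smart Glasses"][metric]
    return xiaomi / meta


def price_saving_pct() -> float:
    """Percentage saved by buying the Xiaomi glasses instead of the Meta ones."""
    meta = SPECS["Ray-Ban Meta Gen 2"]["price_usd"]
    xiaomi = SPECS["Xiaomi Smart Glasses"]["price_usd"]
    return (meta - xiaomi) / meta * 100


if __name__ == "__main__":
    print(f"recording limit: {ratio('record_min'):.0f}x longer")
    print(f"battery life:    {ratio('battery_h'):.2f}x longer")
    print(f"price:           {price_saving_pct():.1f}% cheaper")
```

On the table's own numbers, that works out to a 15x longer recording window and about 2.15x the battery life for roughly 27% less money.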

Rokid Architecture and the Multi-LLM Revolution: Bypassing Zuckerberg's Monopoly

While Xiaomi focused its engineering efforts on raw hardware and thermodynamics, the pioneering startup Rokid targeted the software brain with its AI Glasses Style model. One of the greatest dangers of purchasing the Ray-Ban Meta is Vendor Lock-in; you are trapped within Meta's closed ecosystem, forced to use only Meta AI. Rokid, however, took a hardcore, cyberpunk approach by implementing a Multi-LLM (Large Language Model) architecture directly onto its ultra-light 38.5-gram frame.

Rokid's proprietary operating system grants you the root-level authority to choose your glasses' neural brain. Do you need the advanced logical reasoning of OpenAI for coding assistance or complex environmental data analysis? Simply switch the system to the GPT-5 API. Are you traveling and require hyper-fast, uncensored, millisecond-latency live translation? You can activate powerful open-source models like DeepSeek. This unprecedented software flexibility upgrades Rokid from a "simple smart accessory" into a highly customizable "wearable workstation." Furthermore, Rokid's directional microphones, paired with advanced noise-cancellation algorithms, can acoustically isolate the voice of a person standing directly in front of you from the chaos of a busy street, delivering simultaneous translation through its Bone Conduction speakers with an accuracy that surpasses the imaginations of Apple and Meta engineers.
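
The "interchangeable brain" idea is easy to picture as a tiny router that forwards each prompt to whichever model is currently selected. The Python sketch below is a hedged illustration only: `ModelRouter`, `make_stub_backend`, and the backend names are hypothetical, the backends are stubs that echo the prompt rather than call any real API, and none of this reflects Rokid's actual software.

```python
from typing import Callable, Dict

Backend = Callable[[str], str]


def make_stub_backend(name: str) -> Backend:
    """Return a placeholder backend that tags the prompt instead of calling a real model."""
    def handle(prompt: str) -> str:
        return f"[{name}] {prompt}"
    return handle


class ModelRouter:
    """Hypothetical switchboard that sends prompts to the active backend."""

    def __init__(self, backends: Dict[str, Backend], default: str) -> None:
        if default not in backends:
            raise KeyError(f"unknown backend: {default}")
        self._backends = dict(backends)
        self._active = default

    def switch(self, name: str) -> None:
        """Select a different backend; raises KeyError if it is not registered."""
        if name not in self._backends:
            raise KeyError(f"unknown backend: {name}")
        self._active = name

    def ask(self, prompt: str) -> str:
        """Forward the prompt to whichever backend is currently active."""
        return self._backends[self._active](prompt)


if __name__ == "__main__":
    router = ModelRouter(
        {"reasoning": make_stub_backend("reasoning"),
         "translation": make_stub_backend("translation")},
        default="reasoning",
    )
    print(router.ask("explain this stack trace"))
    router.switch("translation")
    print(router.ask("translate this menu"))
```

The point of the pattern is that the glasses' microphone and speaker pipeline never needs to know which model is answering, which is what makes swapping brains cheap.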

Invisible HUDs: The Next Evolutionary Step in Silicon Holograms

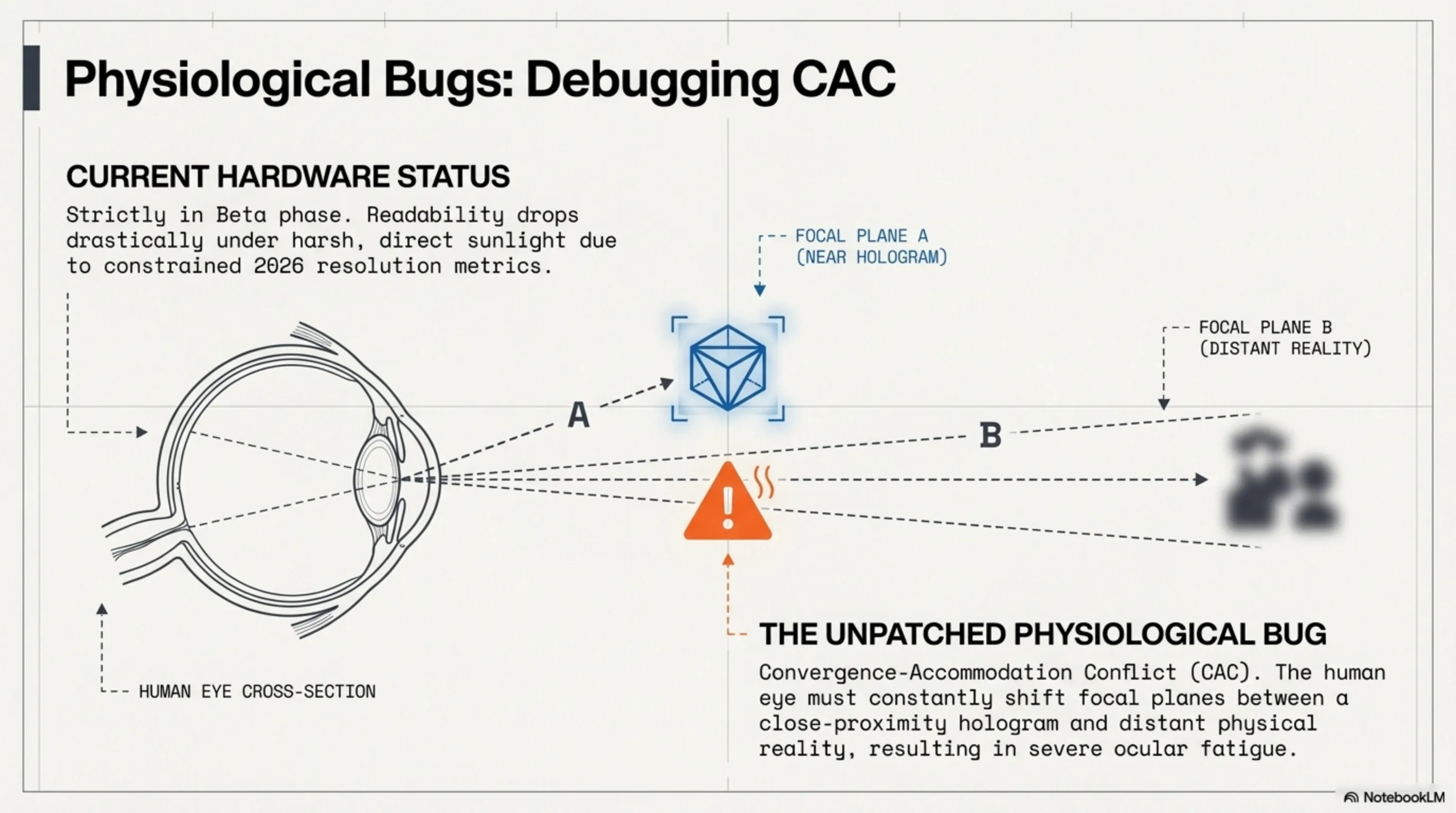

While the Ray-Ban Meta, Xiaomi, and Rokid push the boundaries of processing power, they share a fundamental cybernetic flaw: they are essentially "Audio-first" interfaces. For the Tekin Legion, who thirst to physically submerge into the matrix, merely hearing an AI whisper is insufficient; we demand to see the data overlaying our reality! This is the precise operational node where Head-Up Display (HUD) smart glasses, such as the Brilliant Labs Frame and the conceptual Even Realities G2, enter the battlefield, escalating our autopsy into the complex physics of optics and light manipulation.

Embedding a functional display inside the lens of a standard-looking pair of glasses—without making the user look like a 1980s cyborg—is an absolute engineering nightmare. To bypass physical limitations, these next-generation glasses utilize Optical Waveguides coupled with microscopic MicroLED projectors. A projector, no larger than a grain of rice, is concealed within the temple chassis and fires high-intensity light directly into the edge of the lens. The nanoscopic etched structures on the lens bend this light and shoot it straight into your retina. When you walk down the street wearing these HUD glasses, WhatsApp notifications, color-coded navigation arrows, and live-translated subtitles float as luminous holograms seamlessly integrated into the physical world.

However, debugging this visual technology in the Tekin Garage reveals that we are still strictly in the "beta" phase of this hardware. Resolution remains heavily constrained in 2026, and under direct, harsh sunlight, the readability of these holograms drops drastically. Furthermore, the physiological bug known as VAC (Vergence-Accommodation Conflict) remains unpatched. Your eyes must constantly shift focal planes between a hologram that appears close and a physical object far away, leading to severe ocular fatigue after extended use. Nevertheless, there is no denying that this form factor points toward the eventual extinction of the traditional smartphone screen.

The Value for Money War: Is It Time to Upgrade Your Vision?

Now we arrive at the most critical question for the Legion: In the spring of 2026, is it logically sound to invest $300 to $400 into these face-mounted silicon components, or should we remain loyal to our classic Ray-Bans and AirPods? Let’s recalibrate the economic matrix and debug the ultimate purchasing logic:

- For Vloggers, Streamers, and Lifeloggers: The Ray-Ban Meta, despite its brilliant 12MP ISP, is a massive trap due to its fatal 3-minute recording limit. If your objective is uninterrupted, high-fidelity life recording, the Xiaomi Smart Glasses ($278)—armed with a 45-minute continuous recording limit and solid-state battery architecture—is the most hardcore, unbeatable weapon in your arsenal.

- For Coders, Traders, and AI Addicts: Getting locked into Meta’s closed ecosystem is a strategic error. You require a wearable terminal with an interchangeable brain. The Rokid AI Glasses Style ($299), featuring an ultra-light 38.5g frame and a Multi-LLM architecture, is officially a command center. It grants you the root-level authority to inject GPT-5 or DeepSeek directly into your auditory cortex.

- For Fashion-Conscious Casual Users: This sector remains the undisputed territory of the Ray-Ban Meta Gen 2 ($379). The camouflage is perfect; no one on the street will realize you are wearing a cybernetic gadget. The flawless build quality of the Wayfarer frames, seamless integration with the WhatsApp and Instagram APIs, and the rich acoustic profile of the open-ear speakers make it the safest, most refined choice for the general public.

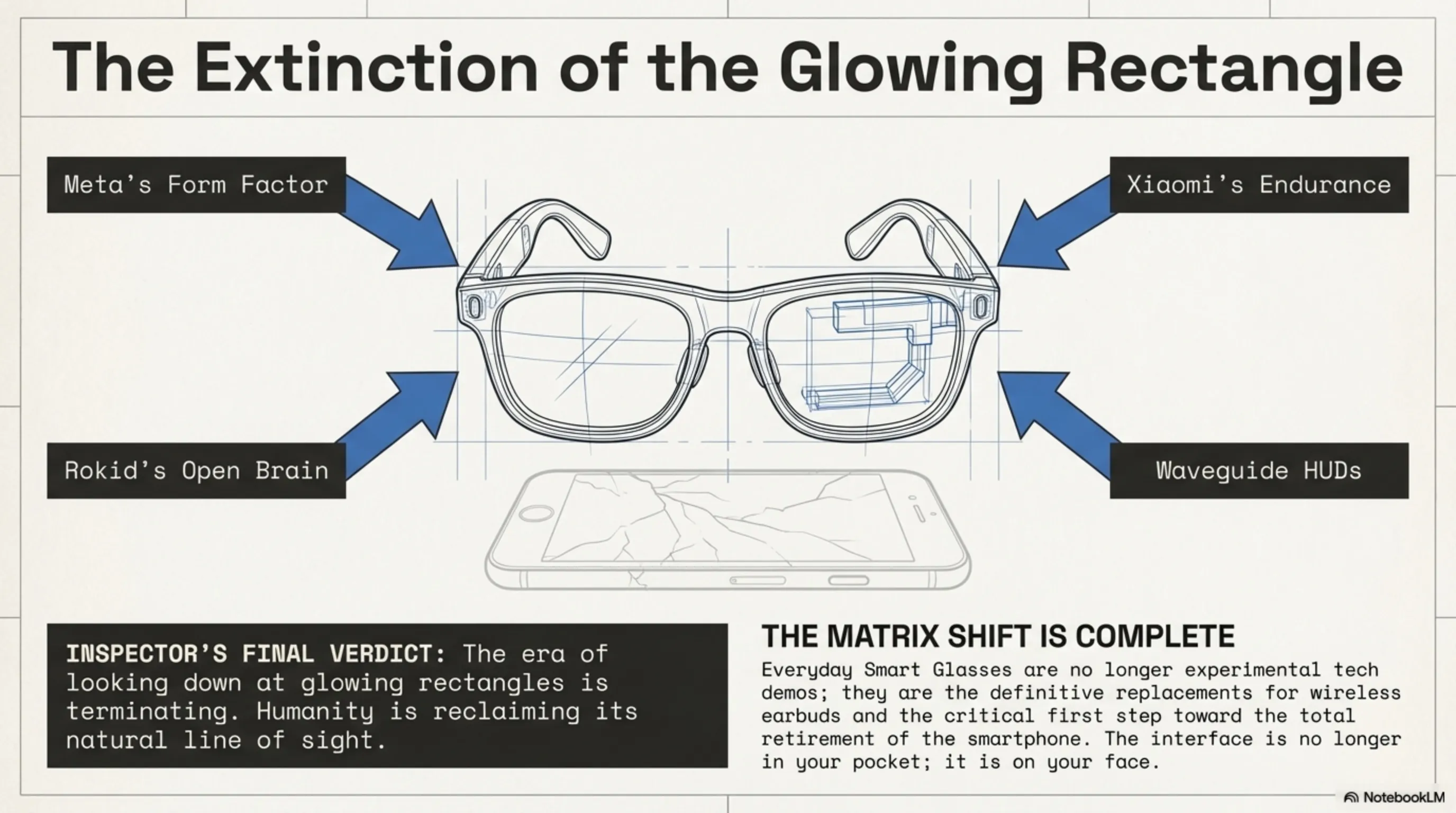

Inspector's Final Verdict: The Dawn of Cybernetic Form Factors

Our extensive autopsy in the Tekin Garage broadcasts a clear, undeniable signal to Silicon Valley: the era of looking down at glowing rectangles is terminating. Humanity is reclaiming its natural line of sight, demanding that digital interfaces synchronize with our physical horizon.

Mark Zuckerberg discovered the foundational formula for this integration: "Build the glasses first, then inject the technology." Yet, as we have ruthlessly debugged today, the aggressive hardware assassins from China (Xiaomi and Rokid) have already begun dismantling Meta's throne by patching the fatal thermal, battery, and software monopoly bottlenecks. Everyday Smart Glasses in 2026 are no longer experimental tech demos; they are the definitive replacements for wireless earbuds and the critical first step toward the total retirement of the smartphone. The Tekin Legion must prepare for a reality that is rendered and processed mere millimeters from our corneas. The matrix is no longer in your pocket; it is on your face!