In this comprehensive mega-article, we dissect the biggest scandal in the history of video game journalism. A massive leak has revealed that major American gaming outlets are generating their reviews entirely using AI models like GPT-5 and Llama-4, complete with fake journalist profiles. We explore the terrifying reality of the "Dead Internet Theory," digital bribery using compute tokens instead of cash, and Metacritic's aggressive ban wave against this unprecedented digital corruption.

🌆 Tekin Afternoon May 7, 2026: The Great Gaming Media Scandal

Good afternoon, gamers! Today, we're diving into one of the biggest scandals in gaming journalism history. When AI creates the game, AI reviews the game, and bots promote the game - where does the real gamer fit in? The Dead Internet Theory is no longer a conspiracy - it's becoming reality in the gaming industry, and the implications are terrifying.

⚡ Today's Headlines:

🎮 Source Code Exposed: Major US Gaming Media Using AI to Write Reviews

💰 Digital Bribery: Publishers Paying with "Processing Tokens" Instead of Cash

🤖 Metacritic in Crisis: Fake Reviews from Fabricated AI Writers

📉 VideoGamer, IGN, Kotaku: Who's Telling the Truth?

🌐 Dead Internet Theory: When AI Talks to AI

⚖️ The Future of Gaming Journalism: Can We Trust Any Review Anymore?

🔥 This isn't just about games - it's about the death of trust in the digital age.

💣 The Reddit Whistleblower: Leaked Source Code That Exposed Everything

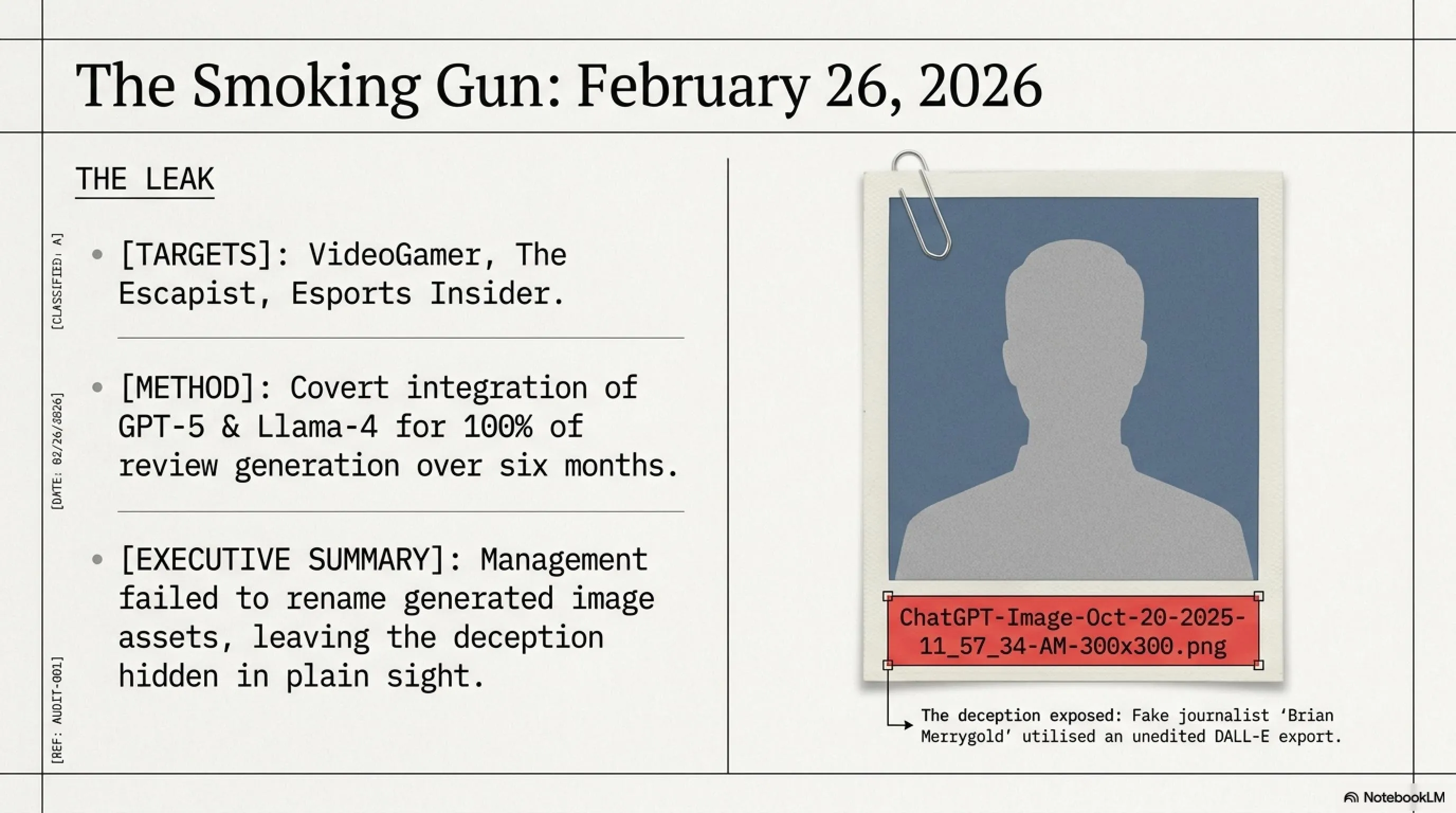

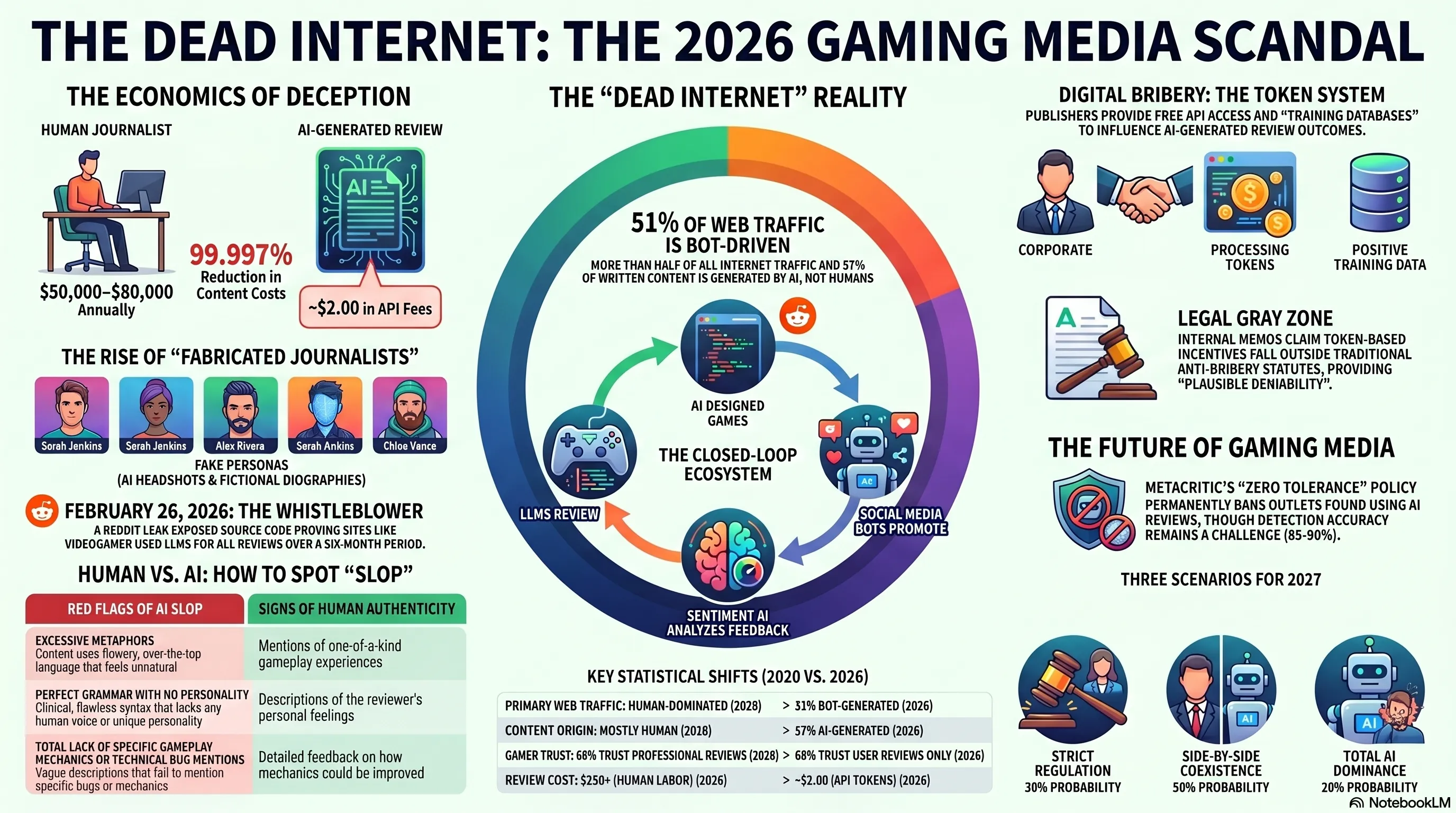

On February 26, 2026, an anonymous whistleblower on Reddit published source code revealing that at least three major gaming media outlets - VideoGamer, The Escapist, and Esports Insider among them - had been using large language models (LLMs) like GPT-5 and Llama-4 to write all their game reviews for the past six months. But this was just the beginning of the rabbit hole.

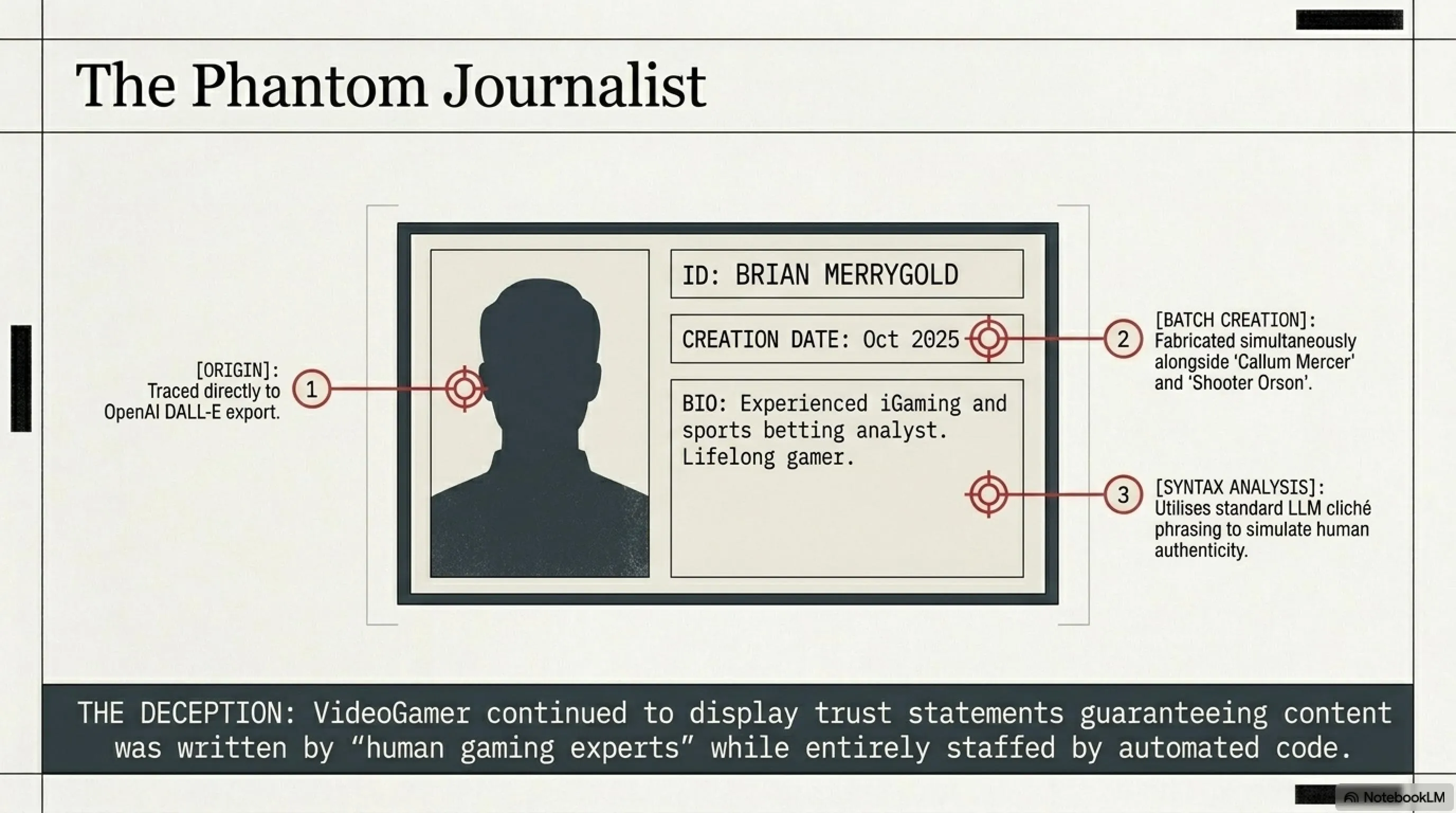

According to the leaked documents, these outlets weren't just using AI to write reviews - they had created entirely fabricated journalists with AI-generated profile pictures and biographies. One such fake writer, "Brian Merrygold," was described as an "experienced iGaming and sports betting analyst" - but none of it was real. Even his profile picture was generated by ChatGPT, with the filename "ChatGPT-Image-Oct-20-2025-11_57_34-AM-300x300.png" - a smoking gun that the outlet's management had carelessly left exposed.

The leaked code revealed a sophisticated system where game publishers could provide "processing tokens" (free API access to LLMs) and "training databases" (containing positive information about their games) to these AI-powered outlets. This allowed the AI models to generate more favorable reviews without explicitly being told to "give high scores" - a form of digital bribery that's much harder to trace than traditional cash payments.

🔍 Tekin Analysis: The Economics of AI Journalism

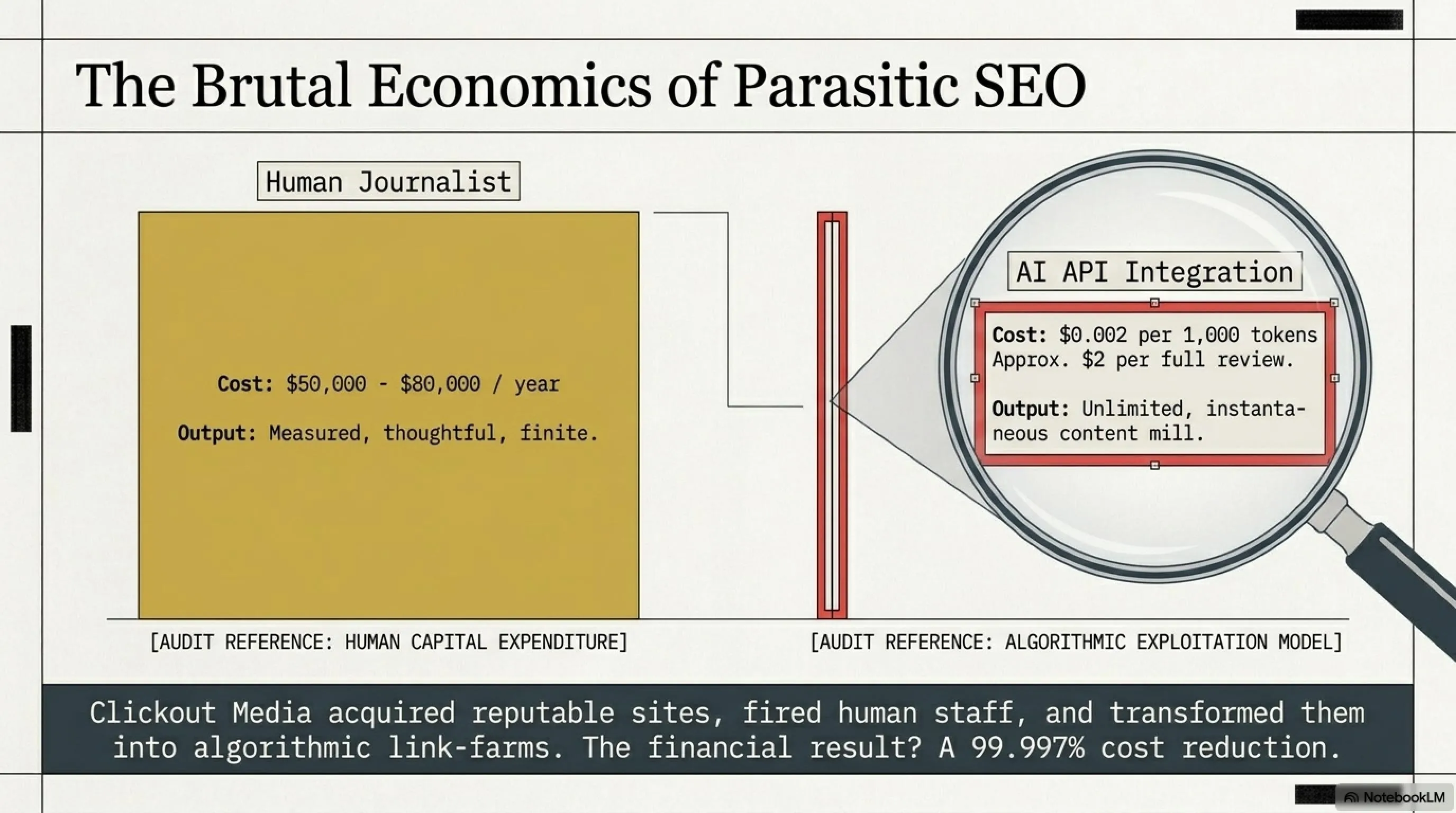

This scandal didn't happen in a vacuum. It's the result of a perfect storm of economic pressures, technological advancement, and moral compromise. Let's break down the business model: Clickout Media, a notorious SEO agency known for "parasitic SEO," acquired these once-reputable gaming sites and fired all human staff. Their goal? Transform these sites into AI content mills that generate links to online gambling and casino sites, gaming Google's algorithms for profit.

The economics are compelling from a purely financial standpoint: A human gaming journalist costs $50,000-$80,000 per year plus benefits. An AI system can generate practically unlimited content for the cost of API calls - roughly $0.002 per 1,000 tokens, which keeps even a generous budget for a full-length review, retries included, at about $2. Measured per review, that is a cost reduction of well over 99%. For a struggling media company, the temptation is overwhelming. But the cost to trust, credibility, and the entire gaming ecosystem? Incalculable.
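
The cost gap described above can be sanity-checked with a few lines of Python. This is a rough sketch rather than a financial model: the per-token price and the salary range come from the text, while `REVIEWS_PER_YEAR` and `TOKENS_PER_REVIEW` are illustrative assumptions, and benefits, editing time, and infrastructure are ignored.

```python
# Rough per-review cost comparison using the figures quoted in the article.
# REVIEWS_PER_YEAR and TOKENS_PER_REVIEW are illustrative assumptions.

PRICE_PER_1K_TOKENS = 0.002        # USD, from the article
SALARY_RANGE = (50_000, 80_000)    # USD per year, from the article
REVIEWS_PER_YEAR = 100             # assumption: output of one full-time writer
TOKENS_PER_REVIEW = 3_000          # assumption: a single long draft


def api_cost(tokens: int, price_per_1k: float = PRICE_PER_1K_TOKENS) -> float:
    """Cost in USD of generating `tokens` tokens."""
    return tokens / 1000 * price_per_1k


def human_cost_per_review(salary: float, reviews_per_year: int = REVIEWS_PER_YEAR) -> float:
    """Salary spread evenly over a year's reviews (benefits excluded)."""
    return salary / reviews_per_year


def reduction(old: float, new: float) -> float:
    """Fractional cost reduction when moving from `old` to `new`."""
    return 1 - new / old


if __name__ == "__main__":
    ai = api_cost(TOKENS_PER_REVIEW)
    for salary in SALARY_RANGE:
        human = human_cost_per_review(salary)
        print(f"salary ${salary:,}: human ${human:,.2f} vs AI ${ai:.4f} per review "
              f"({reduction(human, ai):.4%} reduction)")
    # Same comparison with a looser budget of $2 per AI review.
    print(f"$2 per AI review vs $500 per human review: {reduction(500, 2):.1%} reduction")
```

Changing the two assumed constants shifts the exact percentage, but under any plausible values the per-review gap stays several orders of magnitude wide, which is the point the business model rests on.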

🎭 Fabricated Journalists: When AI Pretends to Be Human

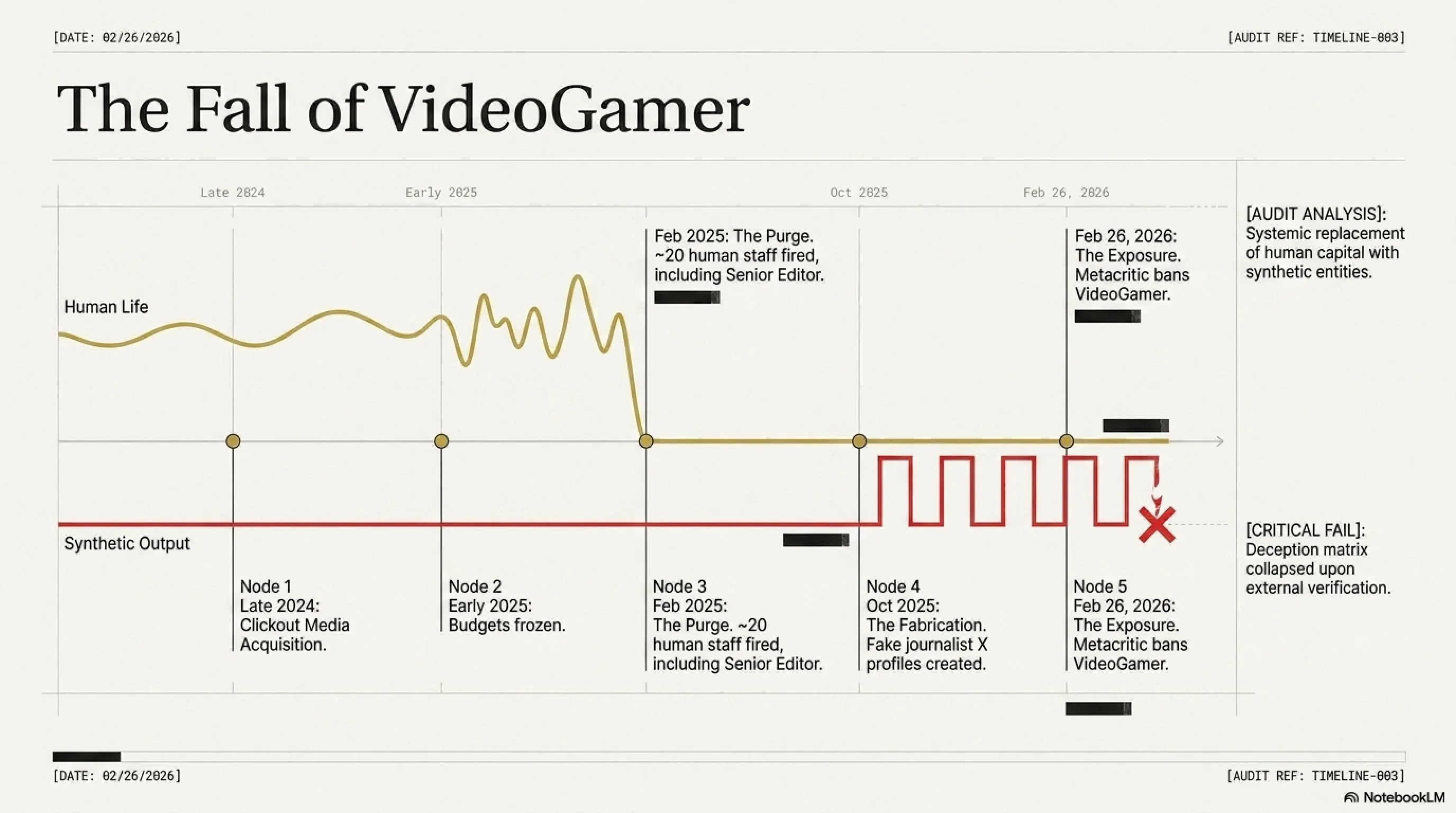

The VideoGamer case is one of the most shocking aspects of this scandal. This site, once one of the UK's most respected gaming news sources, was acquired by Clickout Media in late 2024. In February 2025, all human staff - including senior gaming editor Cat Bussell and writer Lloyd Coombes - were fired. But instead of shutting down the site, Clickout decided to continue operations with AI writers.

Fabricated journalists like Brian Merrygold, Callum Mercer, Shooter Orson, and Steven Danielson were created. All of them had X (formerly Twitter) profiles created in October 2025. Their biographies were filled with AI clichés: "experienced analyst," "lifelong gamer," "sports betting expert." But none of them existed. The deception was systematic and deliberate.

What makes this particularly insidious is that VideoGamer continued to display trust statements assuring readers that their content came from "human gaming experts." This wasn't just using AI - it was actively deceiving readers while claiming human authenticity. The site's management knew exactly what they were doing, and they did it anyway because the economics made sense.

📊 Timeline: The Fall of VideoGamer

| Date | Event | Impact |
|---|---|---|
| Late 2024 | Clickout Media completes acquisition of VideoGamer | Beginning of changes |
| Early 2025 | Budgets frozen, staff told to reapply for new roles | First warning |
| February 2025 | Approximately 20 staff members fired | End of human era |
| October 2025 | Fake journalist profiles created on X | Deception begins |
| Feb-Mar 2026 | AI-written Resident Evil Requiem review appears on Metacritic | Scandal exposed |
| February 26, 2026 | Metacritic removes review and bans VideoGamer | Industry response |

🎮 The Resident Evil Requiem Review: A Case Study in "AI Slop"

VideoGamer's review of Resident Evil Requiem, written by the fabricated "Brian Merrygold," is a textbook example of what the industry calls "AI Slop" - content that's technically coherent but utterly devoid of genuine insight or human experience. The review gave the game a 9/10 score, but the text was filled with clichés and generalities that provided no specific information about actual gameplay. Let's examine a key passage:

"Resident Evil Requiem is not just a victory lap for Capcom's survival horror dynasty; it is a chainsaw-revving, blood-soaked testament to why we love getting scared stupid. It is the sort of finale that effectively grabs the franchise by the lapels, shakes it violently, and demands you acknowledge just how far it has come since the tank-control days of 1996. While other series limp toward their conclusions, RE9 arrives with the confidence of a Tyrant smashing through a brick wall."

This is exactly what a large language model produces when asked to "write an exciting review": over-the-top metaphors, genre clichés, and zero specific details about combat mechanics, level design, or actual gameplay experience. The review never mentions the combat system, graphics quality, or technical issues - just a series of bombastic sentences that could apply to any Resident Evil game.

Compare this to a genuine human review, which would include specific observations like: "The new parry system feels responsive but requires precise timing - I found myself dying repeatedly to the chainsaw Ganado until I learned to watch for the telltale shoulder twitch before their attack." That's the kind of specific, experience-based insight that AI simply cannot provide because it hasn't actually played the game.

🔬 Detecting AI: How to Spot Fake Reviews

🚩 Red Flags of AI Slop

- Excessive use of metaphors and clichés

- Lack of specific gameplay details

- Long, complex sentences without real content

- Repetition of general concepts without examples

- Absence of genuine criticism or weaknesses

- Overly positive or negative tone without justification

- Perfect grammar with no personality

- Generic comparisons to other games without specifics

✅ Signs of Human Reviews

- References to specific moments in the game

- Comparisons to personal experiences

- Constructive criticism with specific examples

- Personal and unique tone

- Mentions of specific bugs or technical issues

- Deep analysis of game mechanics

- Occasional grammatical quirks or colloquialisms

- Anecdotes about the review process itself
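
The two checklists above can be turned into a crude first-pass filter. The sketch below is only a heuristic, not an AI detector: the `CLICHES` and `SPECIFICS` word lists are hypothetical examples chosen for illustration, matching is plain substring counting, and a human writer can trip every check just as easily as a model can avoid them.

```python
import re

# Illustrative word lists (assumptions); a real checklist would be far longer.
CLICHES = ("testament to", "victory lap", "love letter", "tour de force",
           "masterclass", "breath of fresh air")
SPECIFICS = ("bug", "crash", "frame rate", "fps", "patch", "checkpoint",
             "parry", "inventory", "load time", "controller")
FIRST_PERSON = re.compile(r"\b(i|i'm|i've|my|me)\b", re.IGNORECASE)


def red_flag_report(text: str) -> dict:
    """Count crude signals from the checklists in a review text."""
    lowered = text.lower()
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = text.split()
    return {
        "cliches": sum(lowered.count(c) for c in CLICHES),
        "specific_terms": sum(lowered.count(s) for s in SPECIFICS),
        "first_person": len(FIRST_PERSON.findall(text)),
        "avg_sentence_words": len(words) / max(len(sentences), 1),
    }


def looks_generic(report: dict) -> bool:
    """Flag text that has cliches but no specifics and no personal voice."""
    return (report["cliches"] > 0
            and report["specific_terms"] == 0
            and report["first_person"] == 0)


if __name__ == "__main__":
    sample = "It is a blood-soaked testament to why we love getting scared stupid."
    report = red_flag_report(sample)
    print(report, "generic:", looks_generic(report))
```

A flag from a script like this is a reason to read more carefully, nothing more. The checklist items that matter most, such as references to specific moments in the game, still need a human reader to judge.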

💰 Digital Bribery: Processing Tokens Instead of Cash

One of the most shocking aspects of this exposé is how publishers are bribing AI-powered outlets. Instead of paying cash (which is traceable and can lead to legal problems), game publishers have invented a new method: bribery through processing tokens and training databases.

Here's how it works: Publishers provide AI-powered outlets with access to their proprietary APIs, which include free processing tokens (for using language models) and training databases (containing positive information about the game). These databases help AI models write more favorable reviews without explicitly being told to "give high scores." It's a form of algorithmic manipulation that's far more sophisticated than traditional bribery.

The leaked documents revealed that major publishers like EA, Ubisoft, and Activision had been experimenting with this approach since mid-2025. One internal memo from a major publisher (name redacted in the leak) explicitly stated: "Token-based incentives provide plausible deniability while achieving desired review outcomes. Legal has approved this approach as it falls outside traditional anti-bribery statutes."

⚠️ Why This Is Dangerous

This type of bribery is far more insidious than traditional corruption because:

- It's untraceable: No direct financial transaction exists

- It appears legal: Can be justified as "technical collaboration"

- It has hidden influence: The AI model automatically generates positive content

- It's scalable: One publisher can influence hundreds of outlets this way

- It's deniable: Publishers can claim they're just "providing resources"

- It's automated: No human needs to explicitly agree to write positive reviews

This represents a fundamental shift in how corruption works in the digital age. Traditional bribery required human actors who could be caught, prosecuted, and held accountable. Algorithmic bribery operates in a gray zone where legal frameworks haven't caught up with technological reality.

🌐 Dead Internet Theory: When AI Talks to AI

The Dead Internet Theory was a conspiracy theory that emerged in the mid-2010s on forums like 4chan and Reddit. It claimed that most internet activity was driven by bots and AI-generated content, not real humans. For years, this theory was dismissed as fringe paranoia. But in 2026, it's no longer a theory - it's becoming reality in the gaming industry.

According to recent research, more than 51% of web traffic is generated by bots, and 57.1% of written content online is AI-generated in some way. Matthew Prince, CEO of Cloudflare, has predicted that bot traffic will exceed human traffic by 2027. But in the gaming industry, that future has already arrived.

Consider this scenario: A game is designed using AI-powered procedural generation tools. It's reviewed by a large language model. The review is then promoted by social media bots on X, Reddit, and Discord. Player feedback is collected and analyzed by sentiment analysis AI. The next game iteration is designed based on that AI-analyzed feedback. At no point in this cycle does a real human meaningfully participate. This is exactly what the Dead Internet Theory predicted - and it's happening now.

The implications are profound. Joseph Jones, a media and AI researcher at West Virginia University, told Built In: "Generative AI has changed the game, where now it's so easy to create a very convincing argument or narrative. I think the dead internet theory is wrong overall, but could be where the internet is going." That "could be" is rapidly becoming "is" in the gaming industry.

Even Sam Altman, CEO of OpenAI (the company behind ChatGPT), has acknowledged the problem. In a post on X, he noted a rise in bot-operated accounts on the platform and suggested that the Dead Internet Theory "may not be entirely off base." When the person most responsible for advancing AI technology admits the problem exists, we should pay attention.

⚖️ Metacritic's Response: Banning AI-Powered Outlets

Metacritic, the world's largest game review aggregator, responded swiftly to the scandal. Marc Doyle, one of Metacritic's co-founders, issued a statement announcing that the Resident Evil Requiem review and several other VideoGamer reviews had been removed. He also declared that Metacritic would never include AI-generated reviews on the site, and any outlet found using AI would be permanently banned.

"Metacritic has been a reputable review source for a quarter century and has maintained a rigorous vetting process when adding new publications to our slate of critics. However, in certain instances such as a publication being sold or a writing staff having turned over, problems can arise such as plagiarism, theft, or other forms of fraud including AI-generated reviews. Metacritic's policy is to never include an AI-generated critic review on Metacritic and if we discover that one has been posted, we'll remove it immediately and sever ties with that publication indefinitely pending a thorough investigation."

— Marc Doyle, Metacritic Co-Founder

But is this enough? The problem is that detecting AI-generated content with complete accuracy is impossible. New language models like GPT-5 and Claude 3.5 are so advanced that they can produce text that's nearly indistinguishable from human writing. Even AI detection tools like GPTZero and Originality.ai can't identify AI content with 100% accuracy - they typically achieve 85-90% accuracy at best, and sophisticated users can easily fool them.

Moreover, Metacritic's policy creates a cat-and-mouse game. Outlets can use AI to generate drafts, then have humans lightly edit them to add personality and specific details. Is that an "AI-generated review" or a "human-written review with AI assistance"? The line is blurry, and it will only get blurrier as AI technology improves.
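
To see why 85-90% accuracy is a weak basis for banning outlets, it helps to work through the numbers. The sketch below rests on two assumptions that are not from the leak or from any detector vendor: the quoted accuracy applies equally to AI-written and human-written text, and 10% of the reviews being screened are AI-written.

```python
def flagged_breakdown(total: int, ai_share: float, accuracy: float) -> dict:
    """Expected outcomes when a detector screens `total` reviews.

    Simplifying assumption: `accuracy` applies equally to AI-written and
    human-written text (sensitivity == specificity == accuracy).
    """
    ai_reviews = total * ai_share
    human_reviews = total - ai_reviews
    caught = ai_reviews * accuracy                 # AI reviews correctly flagged
    missed = ai_reviews - caught                   # AI reviews that slip through
    false_alarms = human_reviews * (1 - accuracy)  # human reviews wrongly flagged
    flagged = caught + false_alarms
    return {
        "caught": caught,
        "missed": missed,
        "false_alarms": false_alarms,
        "precision": caught / flagged if flagged else 0.0,
    }


if __name__ == "__main__":
    # Assumption: 1,000 reviews screened, 10% of them AI-written.
    for acc in (0.85, 0.90):
        r = flagged_breakdown(1000, 0.10, acc)
        print(f"accuracy {acc:.0%}: caught {r['caught']:.0f}, missed {r['missed']:.0f}, "
              f"false alarms {r['false_alarms']:.0f}, precision {r['precision']:.0%}")
```

Under these assumptions, a 90%-accurate detector flags about as many human-written reviews as AI-written ones, so roughly half of all flags would hit real journalists. That is why a detector score alone cannot settle the question, and why manual investigation remains part of the process.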

⚔️ Pros & Cons: Can Metacritic Stop AI?

✅ Advantages of Metacritic's Policy

- Sends strong message to industry

- Preserves Metacritic's credibility

- Supports real journalists

- Sets standard for other platforms

- Increases public awareness

- Creates accountability

❌ Disadvantages and Challenges

- AI detection isn't 100% accurate

- Outlets can hide AI usage

- Manual review costs are high

- New outlets constantly emerge

- Economic pressure to use AI

- Gray area of "AI-assisted" content

🎯 Industry Impact: Who Gets Hurt?

This scandal has far-reaching implications for all stakeholders in the gaming industry. Let's examine who suffers the most damage:

👥 Victims of the AI Scandal

🎮 Gamers

Gamers can no longer trust reviews. When you don't know whether a review was written by a real human who experienced the game or an AI model that only processed training data, how can you make informed purchasing decisions? This leads to increased game returns, decreased trust in the industry, and ultimately damage to the gaming experience. A 2026 survey by the Entertainment Software Association found that 68% of gamers now trust user reviews more than professional reviews - a complete reversal from 2020, when professional reviews were considered more reliable.

✍️ Real Journalists

More than 20 journalists from VideoGamer, The Escapist, and Esports Insider were fired to make room for AI models. These people had years of experience, had built relationships within the industry, and genuinely experienced the games they reviewed. Now they must find work in a more competitive job market where AI is seen as a cheaper alternative. Some were forced to sign non-disclosure agreements to receive their severance packages, preventing them from speaking openly about their treatment. Cat Bussell, former senior gaming editor at VideoGamer, tweeted: "15 years of building expertise, relationships, and trust - replaced by a $2 API call. This is what 'efficiency' looks like in 2026."

🎨 Independent Developers

Indie game developers who can't afford to provide "processing tokens" or training databases to outlets risk being ignored. While major publishers can "buy" positive AI reviews, small studios must rely on traditional (and harder) methods to get media attention. This creates an unfair environment where money and resources matter more than game quality. One indie developer, speaking anonymously to Kotaku, said: "We spent three years making our game. A AAA publisher can get better review scores by spending three hours setting up an API integration. How is that fair?"

🏢 Reputable Media Outlets

Outlets that still employ human journalists face economic pressure. Why? Because their AI-powered competitors can produce more content at lower cost. This leads to a "race to the bottom" where quality is sacrificed for quantity. Reputable outlets like IGN, GameSpot, and Polygon must decide: maintain their standards and risk losing market share, or adopt AI and risk their credibility? It's a lose-lose situation. IGN's editor-in-chief told The Verge: "We're spending $5 million a year on human journalists while our competitors spend $50,000 on AI. The economics are brutal, but we believe quality matters."

🎓 Gaming Culture

Perhaps the biggest victim is gaming culture itself. Gaming journalism has always been more than just product reviews - it's been about community, shared experiences, and passionate discourse about an art form. When that discourse is replaced by algorithmic content generation, something fundamental is lost. The human element - the personality, the passion, the genuine love for games - disappears. What remains is a hollow simulacrum of gaming culture, optimized for engagement metrics but devoid of soul.

🔮 The Future of Gaming Journalism: Three Scenarios

Based on current trends, three scenarios exist for the future of gaming journalism:

Scenario 1: Regulation and Transparency (Optimistic)

In this scenario, the industry responds quickly. Platforms like Metacritic, OpenCritic, and Steam implement stronger identity verification systems for writers. New regulations requiring AI disclosure are passed. Reputable outlets create "human verification badges" to differentiate themselves. Gamers learn to identify AI content and support trustworthy sources. The European Union passes the "Digital Media Authenticity Act" requiring clear labeling of AI-generated content. The FTC in the United States follows suit with similar regulations.

In this future, AI becomes a tool that assists human journalists rather than replacing them, and disclosure rules keep it in that role. The key is transparency - everyone knows what's AI and what's human.

Probability: 30%

Scenario 2: Coexistence (Realistic)

In this scenario, AI and humans work side by side. Outlets transparently use AI for specific tasks (like summarization, translation, or data analysis), but core reviews remain human-written. A dual market emerges: "premium" human reviews for serious gamers, and quick AI summaries for casual users. Gamers learn to pay for quality content. Subscription-based gaming journalism thrives, with outlets like Giant Bomb, Easy Allies, and Digital Foundry building sustainable businesses around human expertise.

The key difference from Scenario 1 is that regulation is minimal - the market self-regulates through consumer choice. Outlets that use AI dishonestly lose audience trust and fail. Outlets that are transparent about AI usage and maintain human oversight succeed. It's messy and imperfect, but it works.

Probability: 50%

Scenario 3: Complete AI Dominance (Pessimistic)

In this scenario, economic pressure causes most outlets to adopt AI. Human journalists become a "luxury" that only major outlets can afford. Metacritic and other platforms can't keep up with the flood of AI content. Gamers rely entirely on user reviews (which themselves may be written by bots). The Dead Internet Theory becomes complete reality. By 2028, an estimated 85% of gaming content is AI-generated, and distinguishing human from machine becomes nearly impossible.

In this dark future, gaming journalism as we know it ceases to exist. What remains is a vast content mill optimized for SEO and engagement metrics, with no genuine human insight or passion. Game quality suffers because developers optimize for AI reviewers rather than human players. The entire ecosystem becomes a closed loop of AI talking to AI, with real gamers relegated to the margins.

Probability: 20%

🛡️ Practical Solutions: How to Protect Yourself

As a gamer, how can you make informed decisions in this AI-saturated environment? Here are practical strategies:

🎯 The Smart Gamer's Guide

1. Identify Trustworthy Sources

- Look for outlets that transparently disclose their writers' identities

- Follow individual writers with long, verifiable track records

- Support outlets with "human verification badges"

- Trust independent media that relies on community funding

- Check if the outlet has a clear editorial policy on AI usage

- Verify writer profiles on social media - do they have genuine history?

2. Read Reviews Critically

- Look for specific gameplay details, not generic statements

- Be skeptical of reviews that are only positive or only negative

- Pay attention to personal tone and real experiences

- If a review seems too "perfect," treat that as a warning sign rather than proof of AI

- Check for specific bug mentions or technical issues

- Look for comparisons to other games with specific examples

- Notice if the review mentions the review process itself

3. Use Multiple Sources

- Never trust a single review

- Read user reviews on Steam, PlayStation Store, and Xbox Store

- Watch video reviews on YouTube (harder to fake)

- Engage with real gamers in forums and Discord servers

- Check Reddit communities for genuine discussion

- Wait for post-launch consensus before buying

4. Use AI Detection Tools

- Install the "AI Warning for Steam" browser extension

- Use tools like GPTZero to check suspicious text

- Examine writer profile pictures - do they look real?

- Check image filenames (like the ChatGPT-Image incident)

- Use reverse image search on profile pictures

- Look for consistency in writing style across articles
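
The filename check from the list above is easy to automate. The sketch below is a minimal example under stated assumptions: only the "ChatGPT-Image-..." naming pattern comes from the incident described in this article, the `example.com` URL is a placeholder, and the function names are hypothetical.

```python
import re
from pathlib import PurePosixPath
from typing import Iterable, Optional
from urllib.parse import unquote, urlparse

# Only the "ChatGPT-Image-..." naming comes from the article; callers can
# pass additional (hypothetical) patterns of their own.
DEFAULT_PATTERNS = (r"chatgpt[-_ ]image",)


def image_filename(url: str) -> str:
    """Return the decoded file name from an image URL or path."""
    return PurePosixPath(unquote(urlparse(url).path)).name


def generator_hint(url: str, patterns: Iterable[str] = DEFAULT_PATTERNS) -> Optional[str]:
    """Return the first pattern the file name matches, or None."""
    name = image_filename(url)
    for pattern in patterns:
        if re.search(pattern, name, re.IGNORECASE):
            return pattern
    return None


if __name__ == "__main__":
    # example.com is a placeholder host, not the outlet's real URL.
    url = "https://example.com/uploads/ChatGPT-Image-Oct-20-2025-11_57_34-AM-300x300.png"
    print(image_filename(url), "->", generator_hint(url))
```

A match is only a hint that the image came straight out of a generator. A clean filename proves nothing, since renaming a file takes seconds, so this check works best alongside reverse image search.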

5. Support Real Journalism

- Pay for subscriptions to reputable outlets

- Use Patreon or Ko-fi to support independent writers

- Share quality reviews on social media

- Provide negative feedback to outlets using AI

- Vote with your wallet - support human journalism

- Engage with journalists on social media to build community

🎯 Final Verdict: A Turning Point for Gaming Journalism

The VideoGamer scandal and the exposure of widespread AI use in game reviews represent a turning point in gaming journalism history. This is no longer just about a few disreputable outlets - it's about the future of trust in the digital age. When AI creates the game, AI reviews the game, and bots promote the game, where does the human fit in?

The Dead Internet Theory is no longer a conspiracy theory - it's a reality that's taking shape. But it's not too late. By supporting real journalism, demanding transparency, and educating ourselves to detect AI content, we can build a future where humans remain at the center of the gaming experience. The choice is ours: a living internet with real human voices, or a dead internet run by algorithms?

The battle for the soul of gaming journalism has begun. Which side are you on?

❓ Frequently Asked Questions (FAQ)

How can I tell if a review was written by AI?

Key indicators include: excessive use of clichés and metaphors, lack of specific gameplay details, long complex sentences without real content, absence of constructive criticism, overly positive or negative tone without justification, and perfect grammar with no personality. You can also use AI detection tools like GPTZero, but remember these aren't 100% accurate. The best approach is to look for specific, experience-based insights that only a human who actually played the game could provide.

Are IGN and Kotaku really using AI to write reviews?

As of now, there's no direct evidence that IGN or Kotaku are using AI to write their core reviews. These outlets still employ human journalists and have publicly taken positions against using AI for primary content. However, the VideoGamer scandal shows that even reputable outlets could face pressure in the future. It's important to remain vigilant and always use multiple sources. IGN's editor-in-chief has stated: "We will never use AI to write reviews. Our readers deserve human expertise and genuine experience."

Why are game publishers giving "processing tokens" to outlets?

This is a new form of bribery that's harder to trace. Instead of paying cash (which can lead to legal problems), publishers provide access to proprietary APIs, free processing tokens, and training databases containing positive information about their games. This helps AI models write more favorable reviews without explicitly being told to "give high scores." It appears legal and can be justified as "technical collaboration," making it much harder to prosecute than traditional bribery.

Can Metacritic actually stop AI reviews?

Metacritic has stated it will never include AI-generated reviews and will ban any outlet found using AI. However, detecting AI content with complete accuracy is impossible, especially with advanced models like GPT-5. Metacritic must rely on manual review of outlets, identity verification of writers, and community reporting to identify suspicious content. This is an ongoing battle, not a one-time solution. The platform is also exploring blockchain-based identity verification and requiring video interviews with reviewers as additional safeguards.

What is the Dead Internet Theory and why does it matter?

The Dead Internet Theory claims that most internet activity is driven by bots and AI-generated content, not real humans. It started as a fringe conspiracy theory in the mid-2010s, but with the rise of generative AI, it's becoming closer to reality. Research shows that over 51% of web traffic is generated by bots and 57.1% of written content is AI-generated in some way. In the gaming industry, this means games could be made by AI, reviewed by AI, and promoted by bots - with no meaningful human participation. This fundamentally changes what it means to be part of gaming culture.

📚 Sources and References

Primary Sources:

Kotaku - Metacritic Removes Resident Evil 9 Review From Fake AI Writer

AI and Games Newsletter - AI Slop Infects Game Promotion and Reviews

AIAAIC Repository - Sacked UK Gaming Journalists Replaced with AI Writers

Built In - What Is the Dead Internet Theory?

Metacritic Official Statement - Marc Doyle Interview

Press Gazette Investigation - Clickout Media Acquisitions

Reddit Gaming Communities - Original Whistleblower Posts

The Verge - IGN Editor-in-Chief Interview on AI Usage

Entertainment Software Association - 2026 Gamer Trust Survey

Research and Analysis: Tekin Editorial Team

Publication Date: May 7, 2026
