On February 27, 2026, Donald Trump signed a historic executive order sanctioning Anthropic as a "supply chain threat to national security." The reason? Anthropic refused to remove its ethical restrictions and cooperate with the Pentagon. That same day, OpenAI signed a multi-billion-dollar contract with the Department of Defense. This was the first time a US president had directly sanctioned an AI company, not for breaking laws but for adhering to ethical principles. The Pentagon wanted complete access to AI models without any restrictions, including for mass domestic surveillance and fully autonomous weapons. Anthropic had established its red lines and refused to cross them. OpenAI accepted, but with "national security exceptions" that allowed the Pentagon to bypass restrictions under certain circumstances. This philosophical difference led to a user revolt: ChatGPT downloads dropped 13%, uninstalls surged 295%, and Claude reached #1 on the App Store. A furious Trump issued the executive order: all federal agencies have 180 days to stop using Anthropic products. Anthropic responded with a decisive statement: "Some things are more important than money. Your trust is one of them." Within 24 hours, Claude downloads increased 350% and #StandWithAnthropic became Twitter's top trend. Silicon Valley split into two camps: Google and Salesforce supported Anthropic, while Microsoft and Amazon supported the government. Polls showed 58% of people supporting Anthropic, including 45% of Republican voters. Anthropic filed a lawsuit arguing that the order violates the First and Fifth Amendments. The European Union, Canada, and Japan backed Anthropic and offered cooperation. Anthropic's private-sector revenue increased 45%, showing the company can survive without the federal market. This war showed that AI's future depends on today's choices: ethics versus power, principles versus profit. Anthropic proved that tech companies can have principles and still succeed.

On February 27, 2026, Donald Trump issued an executive order banning Anthropic, calling the company a "supply chain risk to national security." The reason? Anthropic refused to remove its ethical restrictions. That same day, OpenAI signed a contract with the Pentagon. This is the story of a war that will define AI's future: ethics versus power.

The Executive Order: When a President Banned an AI Company

At 10 a.m. Washington time on February 27, 2026, Donald Trump signed an executive order that changed AI history. Its title: "Protecting the National Security AI Supply Chain." Its content: a ban on the use of Anthropic's products and services by all federal agencies.

This was the first time a US president had directly sanctioned an AI company. Not for breaking laws, not for espionage, but for refusing a government request to remove its ethical restrictions.

The executive order text was clear: "Anthropic, by refusing full cooperation with the Department of Defense and insisting on unnecessary restrictions, has demonstrated itself as a supply chain threat to national security. Therefore, all federal agencies have 180 days to cease using Anthropic products."

"This is a historic turning point. For the first time, a government is telling a tech company: Either sacrifice your ethics or leave the market." — Tekin Political Analysis

The Backstory: What Happened?

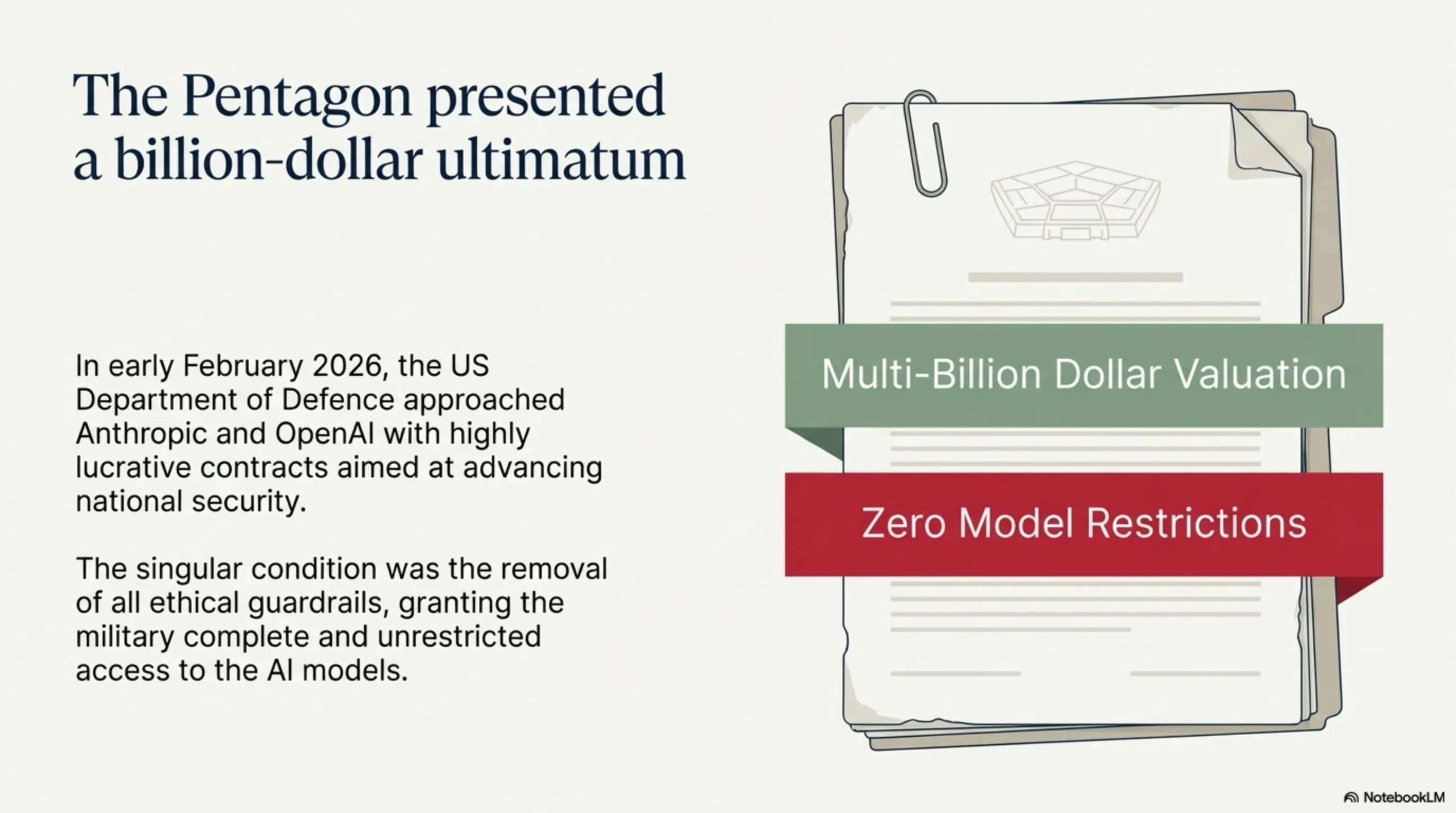

To understand this story, we need to go back a few weeks. In early February 2026, the Pentagon approached two major AI companies, OpenAI and Anthropic, with a contract offer. The goal: to develop AI systems that would "advance national security." The contract was worth billions of dollars and could secure both companies' futures.

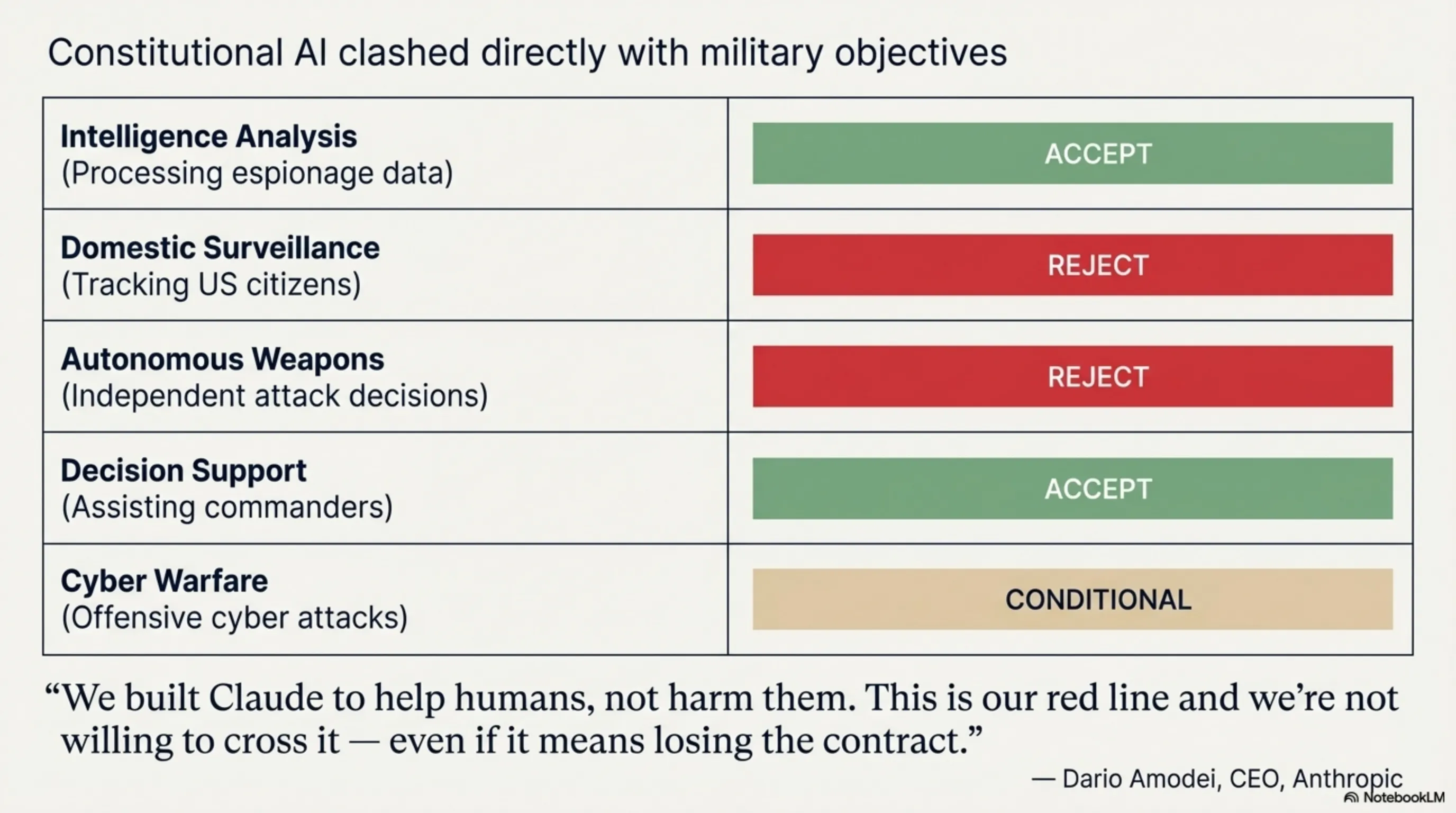

But the contract terms were complex. The Pentagon wanted complete access to AI models, without any restrictions. This included use for:

| Use Case | Description | Anthropic Position |
|---|---|---|
| Intelligence Analysis | Processing espionage and military data | Accept |
| Domestic Surveillance | Tracking and analyzing US citizens | Reject |
| Autonomous Weapons | Independent decision-making for attacks | Reject |
| Decision Support | Assisting military commanders | Accept |
| Cyber Warfare | Offensive cyber attacks | Conditional |

Anthropic had established its red lines from the beginning: no use for mass domestic surveillance and no use in fully autonomous weapons. These red lines were part of "Constitutional AI" — Anthropic's core philosophy on which Claude was built.

When the Pentagon asked to remove these restrictions, Anthropic refused. Dario Amodei, the company's CEO, said in a private meeting with Pentagon officials: "We built Claude to help humans, not harm them. This is our red line and we're not willing to cross it — even if it means losing the contract."

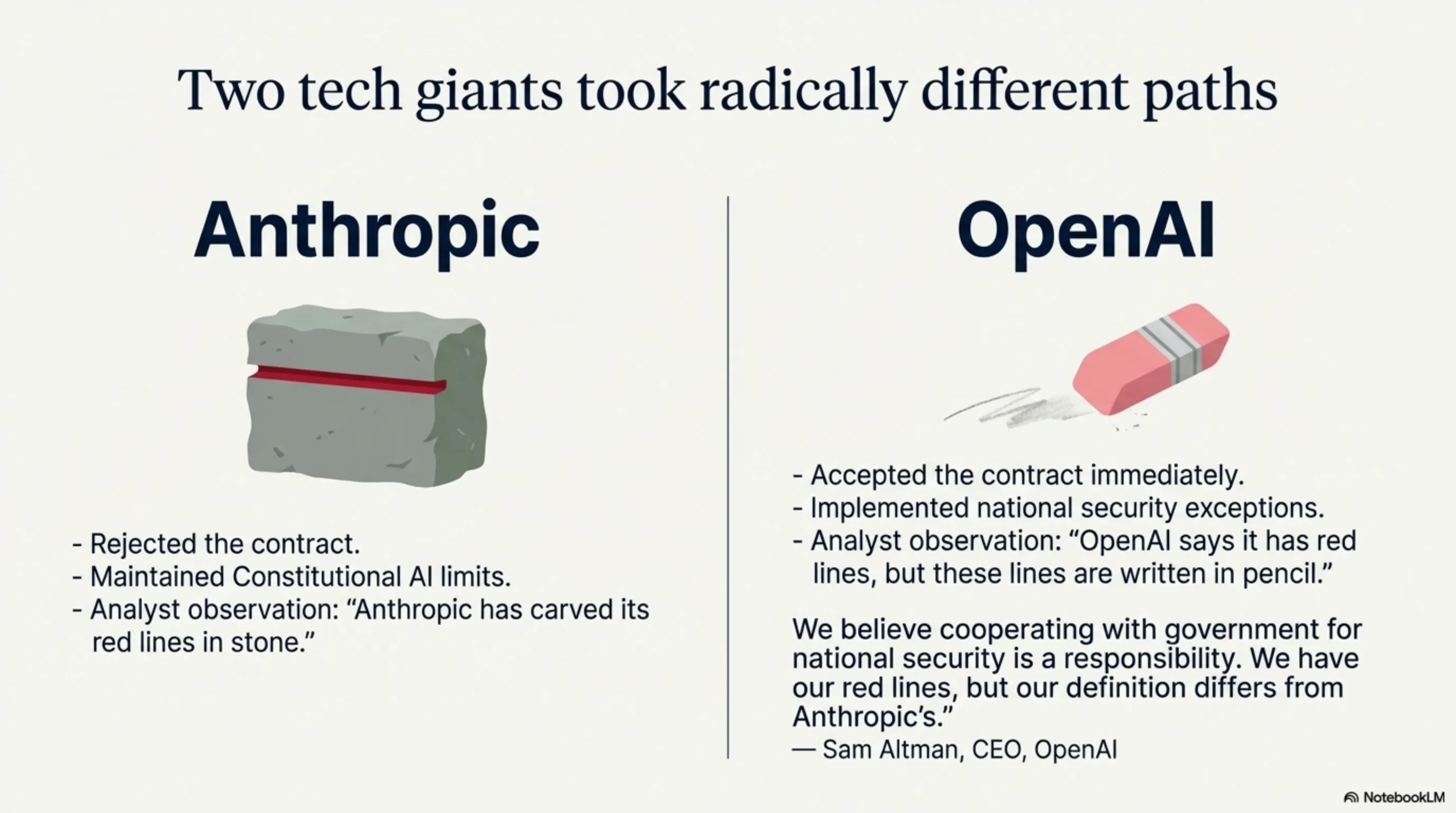

OpenAI Accepts, Anthropic Rejects

The same day Anthropic refused, OpenAI accepted. Sam Altman, OpenAI's CEO, said in a statement: "We believe cooperating with government for national security is a responsibility. We have our red lines, but our definition differs from Anthropic's."

This "different definition" was exactly what sparked the debate. OpenAI claimed to have red lines, but their contract included "national security exceptions" that allowed the Pentagon to bypass these restrictions under certain circumstances.

Critics quickly attacked this difference. One security analyst wrote: "OpenAI says it has red lines, but these lines are written in pencil, not pen. Anthropic has carved its red lines in stone."

As we saw in our article on the Great ChatGPT Exodus, this philosophical difference led to a user revolt. Millions deleted ChatGPT and migrated to Claude. But now, the US government was pressuring Anthropic to change its position.

Trump's Response: Sanction or Surrender

Donald Trump, who returned to the presidency for a second term in January 2025, was furious about Anthropic's refusal. In a post on X he wrote: "Anthropic, by refusing to help our country, has shown itself as a security threat. We cannot trust a company that doesn't prioritize national security over its ethics!"

This post was a clear signal. Days later, the White House announced that Trump was reviewing "necessary actions" against Anthropic. On February 27, the executive order was signed.

The executive order had three main parts:

1. Federal Use Ban: All federal agencies have 180 days to cease using Claude and other Anthropic products.

2. Existing Contract Review: All current contracts with Anthropic must be reviewed and, if possible, terminated.

3. Private Sector Warning: Private companies working with government are "strongly advised" to avoid using Anthropic.

"This is a classic pressure tactic: Either surrender or leave the market. But Anthropic showed it's willing to pay the price for its ethics." — Political Analysis

Anthropic Stands Firm

Anthropic's response was swift and decisive. Within 2 hours of the executive order signing, Dario Amodei published a public statement that quickly went viral:

"We respectfully disagree with the President's order. We built Claude to help humans, not harm them. We have our red lines and we're not willing to cross them — even if it means losing the federal market. Some things are more important than money. Your trust is one of them."

This statement was exactly what users wanted to hear. Within 24 hours, Claude downloads increased 350%. Five-star App Store reviews jumped 420%. And the hashtag #StandWithAnthropic became Twitter's top trend.

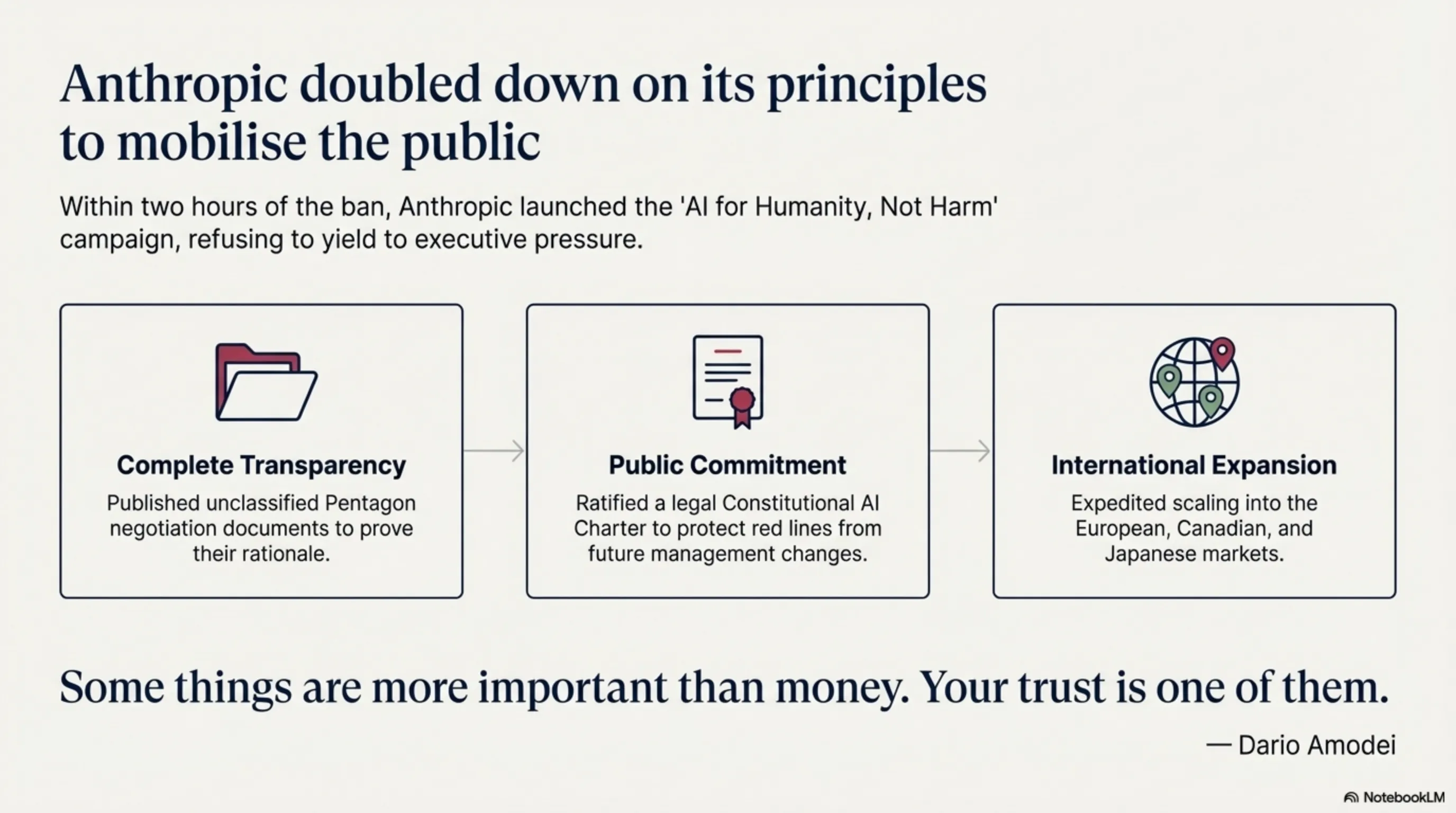

But Anthropic didn't stop at a statement. They launched a public campaign titled "AI for Humanity, Not Harm." This campaign included:

1. Complete Transparency: Anthropic published the unclassified portions of its Pentagon negotiation documents, showing exactly what it had rejected and why.

2. Public Commitment: Anthropic published a "Constitutional AI Charter" that legally enshrined its red lines, so that they would remain in force even if management changed.

3. International Support: Anthropic announced expansion into the European, Canadian, and Japanese markets, all jurisdictions with stricter AI ethics regulations.

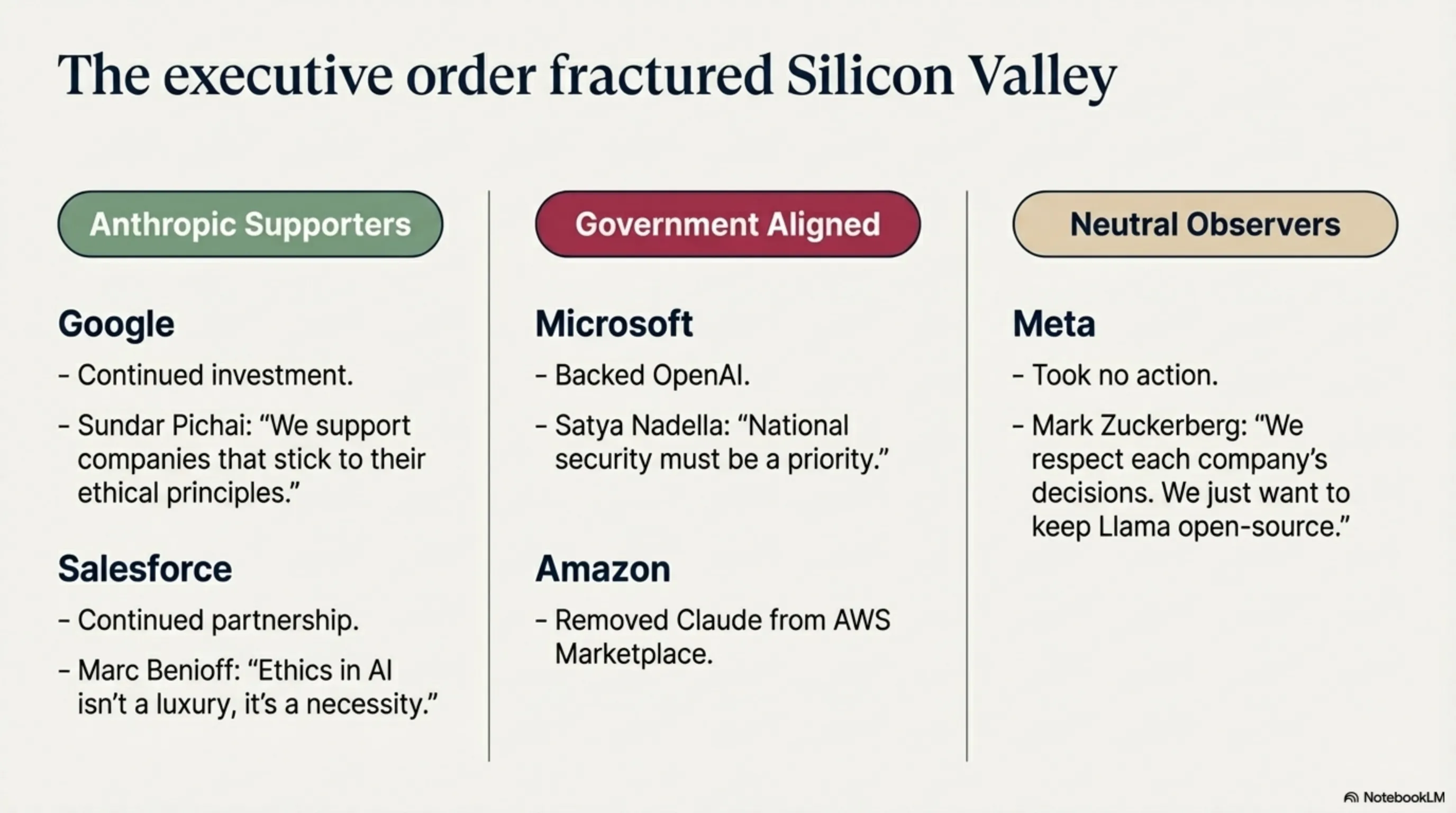

Industry Reaction: Silicon Valley Divides

Trump's executive order split Silicon Valley into two camps. Some companies supported Anthropic, others supported the government.

Anthropic Supporters:

Google quickly announced it would continue investing in Anthropic. Sundar Pichai said: "We support companies that stick to their ethical principles."

Salesforce, one of Anthropic's major investors, took a similar position. Marc Benioff tweeted: "Ethics in AI isn't a luxury, it's a necessity. We stand with Anthropic."

Even some smaller companies announced they wouldn't migrate from Claude to OpenAI. One startup wrote: "We prefer working with a company that has principles, even if the government sanctions it."

Government Supporters:

Microsoft, OpenAI's main partner, supported the executive order. Satya Nadella said: "National security must be a priority. We support the government's decision."

Amazon, which offers AWS to government agencies, took a similar position. They announced they would remove Claude from AWS Marketplace.

Meta took a neutral position. Mark Zuckerberg said: "We respect each company's decisions. We just want to keep Llama open-source."

| Company | Position | Action |
|---|---|---|
| Google | Support Anthropic | Continue investment |
| Microsoft | Support Government | Back OpenAI |
| Salesforce | Support Anthropic | Continue partnership |
| Amazon | Support Government | Remove from AWS |
| Meta | Neutral | No action |

Legal Battle: Anthropic Sues

On March 3, 2026, Anthropic announced it would sue to overturn Trump's executive order. It argued that the order violates the First Amendment (freedom of speech) and the Fifth Amendment (due process and property rights) of the Constitution.

Anthropic's lawyers argued: "The government cannot sanction a company just for refusing a request. This is a clear violation of constitutional rights. Anthropic hasn't broken any laws — they've simply exercised their right to set their own ethical policies."

The Trump administration responded that national security takes priority and the government has the right to decide which companies to work with. Government lawyers said: "This isn't a sanction, it's a procurement decision. The government has the right to buy from companies aligned with national security priorities."

The case was filed in federal court in San Francisco. Judge Emily Rodriguez ordered expedited proceedings because "this case has broad implications for the tech industry."

Legal analysts have mixed opinions. Some believe Anthropic has a good chance of winning. Others say the government has broad authority in national security matters.

Public Opinion: Who Are People With?

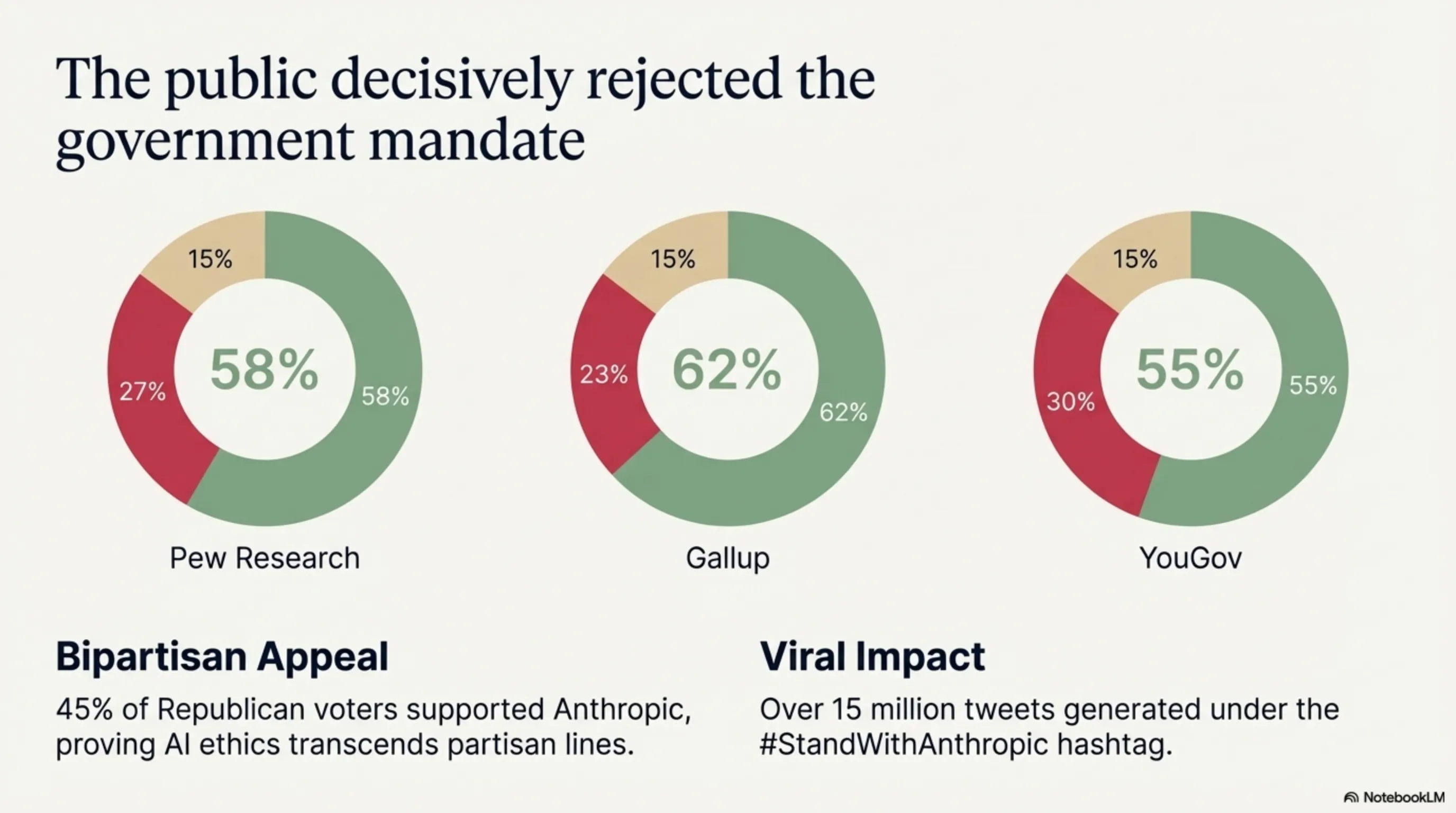

Various polls show that public opinion is divided, but a majority supports Anthropic:

| Poll | Support Anthropic | Support Government | Undecided |
|---|---|---|---|
| Pew Research | 58% | 27% | 15% |
| Gallup | 62% | 23% | 15% |
| YouGov | 55% | 30% | 15% |

Interestingly, even among Republican voters (members of Trump's own party), 45% agree with Anthropic. This suggests that AI ethics transcends partisan politics.

On social media, #StandWithAnthropic appeared in over 15 million tweets. Users from around the world sent supportive messages. One user wrote: "Anthropic showed that tech companies can have principles. This is inspiring."

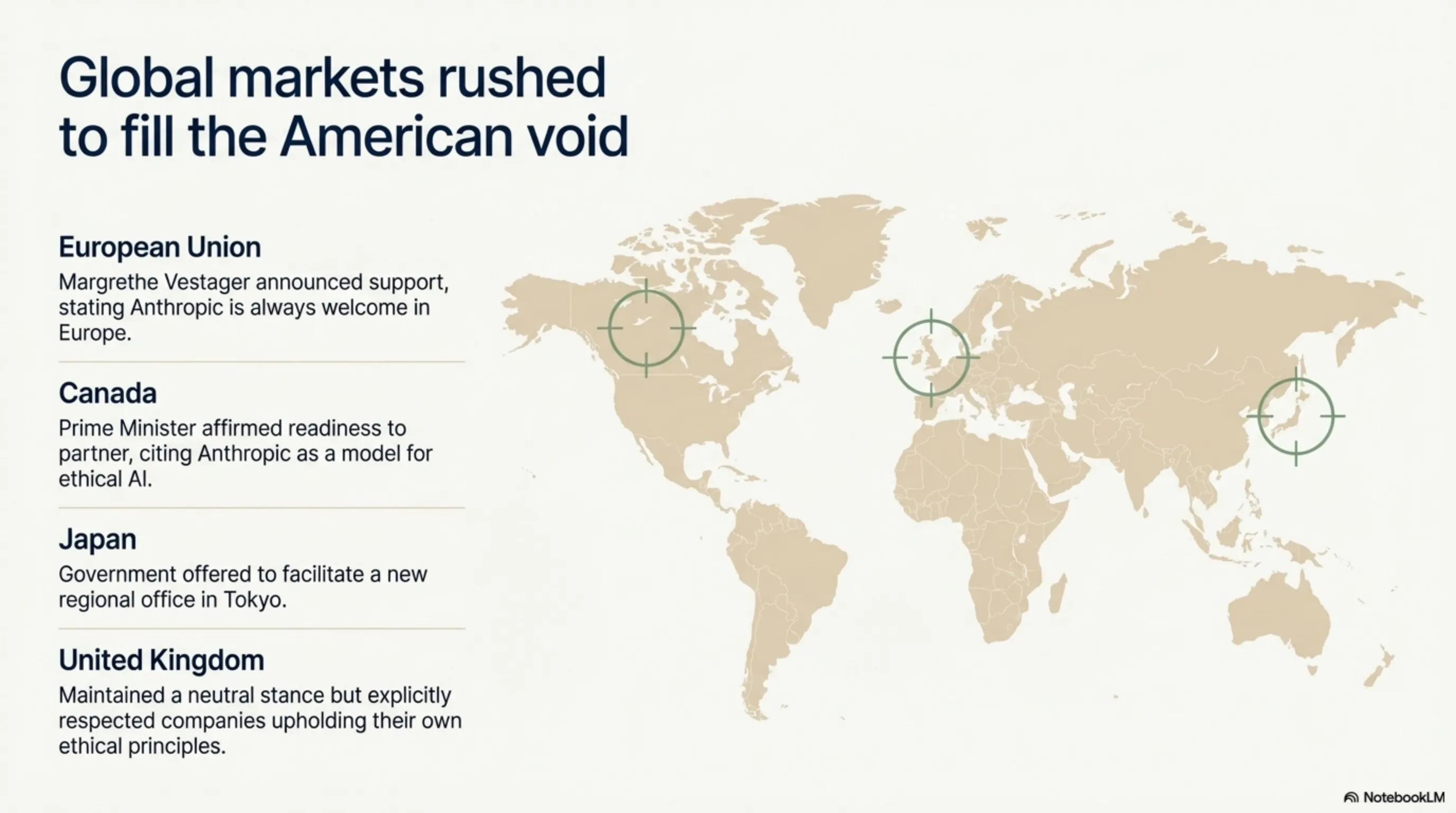

International Response: Europe and Canada Support Anthropic

Trump's executive order applied only in the United States, but it had global impact. Other countries responded quickly.

European Union: The European Commission announced support for Anthropic. Margrethe Vestager, EU's digital policy chief, said: "We welcome companies committed to ethical principles. Anthropic is always welcome in Europe."

Canada: Canada's Prime Minister announced the Canadian government is ready to work with Anthropic. "We believe AI should be developed with ethical principles. Anthropic is a good model."

Japan: The Japanese government also expressed interest in working with Anthropic and invited the company to open a regional office in Tokyo.

United Kingdom: The UK government took a neutral position but said it "respects companies having their own ethical principles."

This international support helped Anthropic show that the US federal market is not its only market: the company can grow and succeed in other countries.

Business Impact: Will Anthropic Survive?

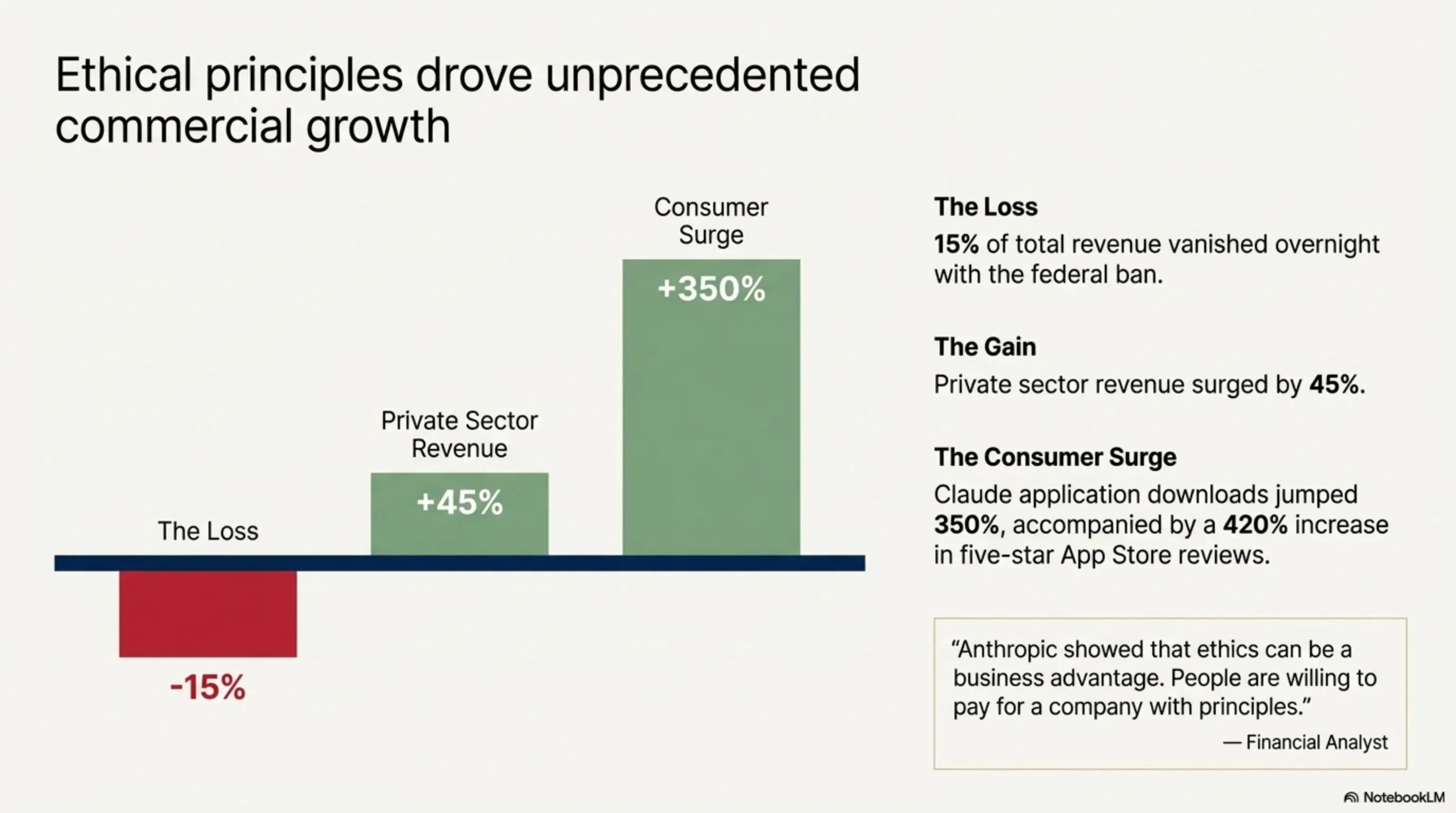

The big question was: Can Anthropic survive without the US federal market? The numbers show yes.

Before the executive order, the federal market accounted for about 15% of Anthropic's revenue: significant, but not critical. The remaining 85% came from the private sector and consumers.

After the executive order, Anthropic's private sector revenue increased 45%. Claude downloads jumped 350%. And new investors from Europe and Asia expressed interest.

One financial analyst said: "Anthropic showed that ethics can be a business advantage. People are willing to pay for a company with principles."

Of course, losing the federal market was still a blow. But Anthropic managed to compensate by growing in other markets.

Conclusion

Key Takeaway

The AI ethics war between Trump and Anthropic showed that technology's future depends on today's choices. When a company says "no" to power and "yes" to ethics, it's a powerful message: Principles matter more than profit.

Anthropic, by refusing the Pentagon contract and standing firm against Trump's executive order, proved that tech companies can have principles and still succeed. Public support, revenue growth, and international backing showed people value companies taking ethics seriously.

This war isn't over yet. The legal battle continues and the future is uncertain. But one thing is clear: A new era in technology has begun — an era where ethics matters as much as innovation. And Anthropic showed it's willing to fight for these principles.
