In this strategic report dated April 27, 2026, Tekin Guide explores the deepest layers of the AI hardware war. As NVIDIA solidifies its dominance with the Blackwell X100 architecture, tech giants (the UXL Coalition) have united to break the CUDA monopoly through open-source software. We dissect the hidden costs, TSMC's production bottlenecks, and the phenomenon of "Sovereign AI".

🚀 Tekin Guide: Battle for the Heart of AI

Today is April 27, 2026. The real AI war isn't being fought between intelligences but on lifeless pieces of silicon. Tonight, we go beyond news analysis: together, we'll walk through the hardware engineering in simple terms to understand why the world is addicted to NVIDIA's chips.

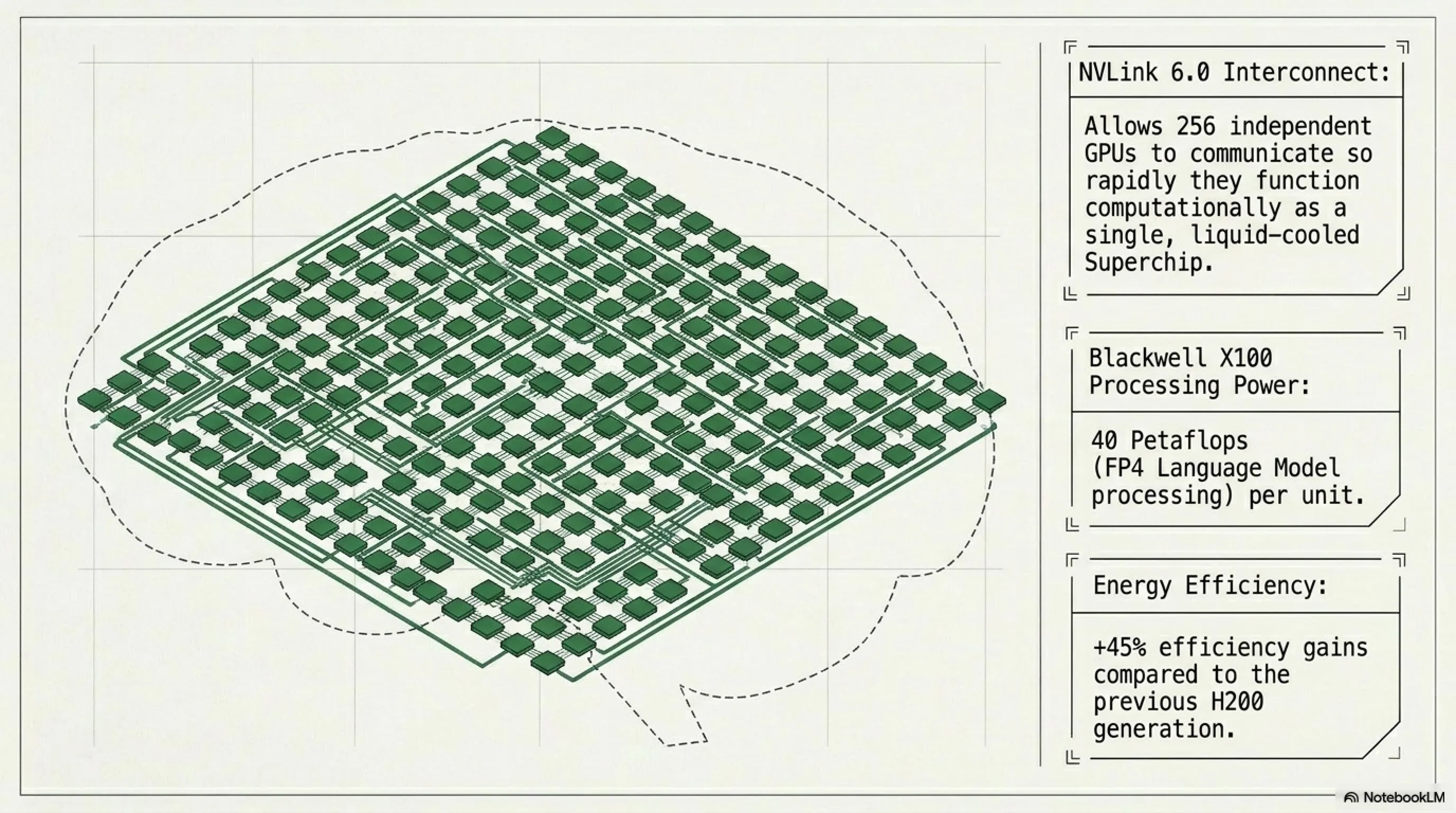

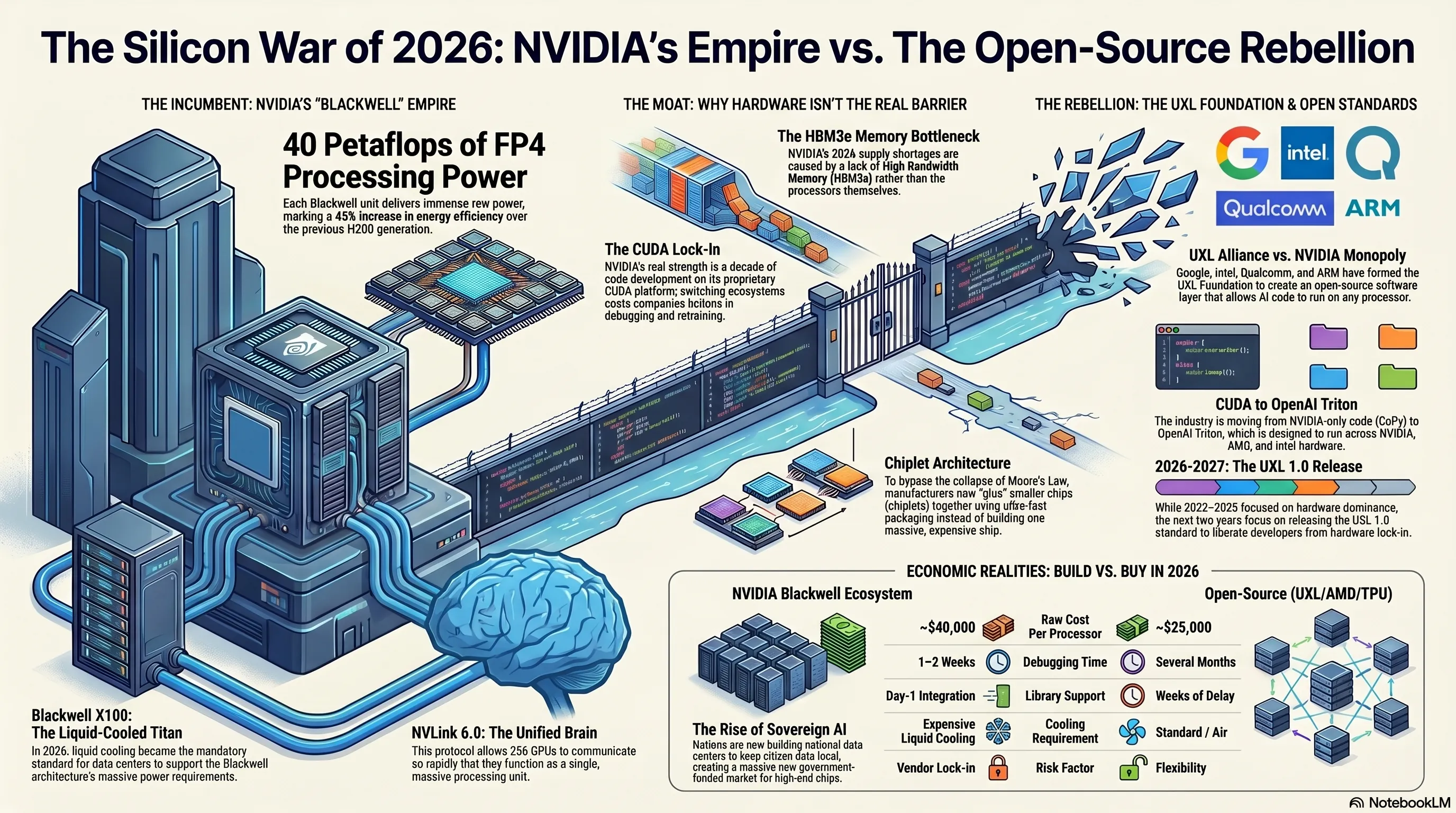

1. The Blackwell X100 Monster: Why Is NVIDIA Still Invincible?

With the introduction of the Blackwell X100 architecture in early 2026, NVIDIA turned the "Liquid-Cooled Superchip" into a mandatory standard for datacenters. However, the real secret of this chip's power lies not in its transistor count but in the NVLink 6.0 interconnect. This technology lets 256 GPUs exchange data so quickly that they function as a single "unified brain."
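What does it mean for GPUs to "function as one"? During training, a workhorse operation is the all-reduce: every GPU contributes its gradient vector and every GPU ends up with the element-wise sum. NVIDIA's NCCL library runs collectives like this over NVLink, using ring and tree algorithms among others. The sketch below is a plain-Python simulation of the classic ring all-reduce, not NCCL code; list entries stand in for GPUs and their gradient buffers.

```python
from typing import List


def ring_allreduce(buffers: List[List[float]]) -> List[List[float]]:
    """Simulate a sum all-reduce with the ring algorithm.

    buffers[i] is worker i's local vector. Every worker ends with the
    element-wise sum of all vectors. For simplicity, the vector length must
    be divisible by the number of workers.
    """
    n = len(buffers)
    length = len(buffers[0])
    if any(len(b) != length for b in buffers) or length % n != 0:
        raise ValueError("all buffers must share a length divisible by the worker count")
    chunk = length // n
    data = [list(b) for b in buffers]  # copy so the caller's lists are not mutated

    def idx(c: int) -> range:
        """Element indices belonging to chunk c."""
        return range(c * chunk, (c + 1) * chunk)

    def ring_step(chunk_for, combine) -> None:
        # Every worker i sends one chunk to its right-hand neighbor (i + 1) % n.
        # Snapshot all outgoing messages first, since real sends happen simultaneously.
        msgs = [(i, chunk_for(i), [data[i][k] for k in idx(chunk_for(i))]) for i in range(n)]
        for i, c, payload in msgs:
            dst = data[(i + 1) % n]
            for k, v in zip(idx(c), payload):
                dst[k] = combine(dst[k], v)

    # Phase 1, reduce-scatter: after n-1 steps worker i holds the full sum of chunk (i + 1) % n.
    for s in range(n - 1):
        ring_step(lambda i: (i - s) % n, lambda old, new: old + new)
    # Phase 2, all-gather: pass the finished chunks around so every worker has all of them.
    for s in range(n - 1):
        ring_step(lambda i: (i + 1 - s) % n, lambda old, new: new)
    return data
```

For example, `ring_allreduce([[1, 2, 3, 4], [10, 20, 30, 40]])` returns `[[11, 22, 33, 44], [11, 22, 33, 44]]`. The ring is chosen for bandwidth efficiency: each worker sends 2(n-1) chunks of size L/n, just under 2L values in total no matter how many GPUs join, so the speed of each individual link, which is what NVLink raises, sets the pace.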

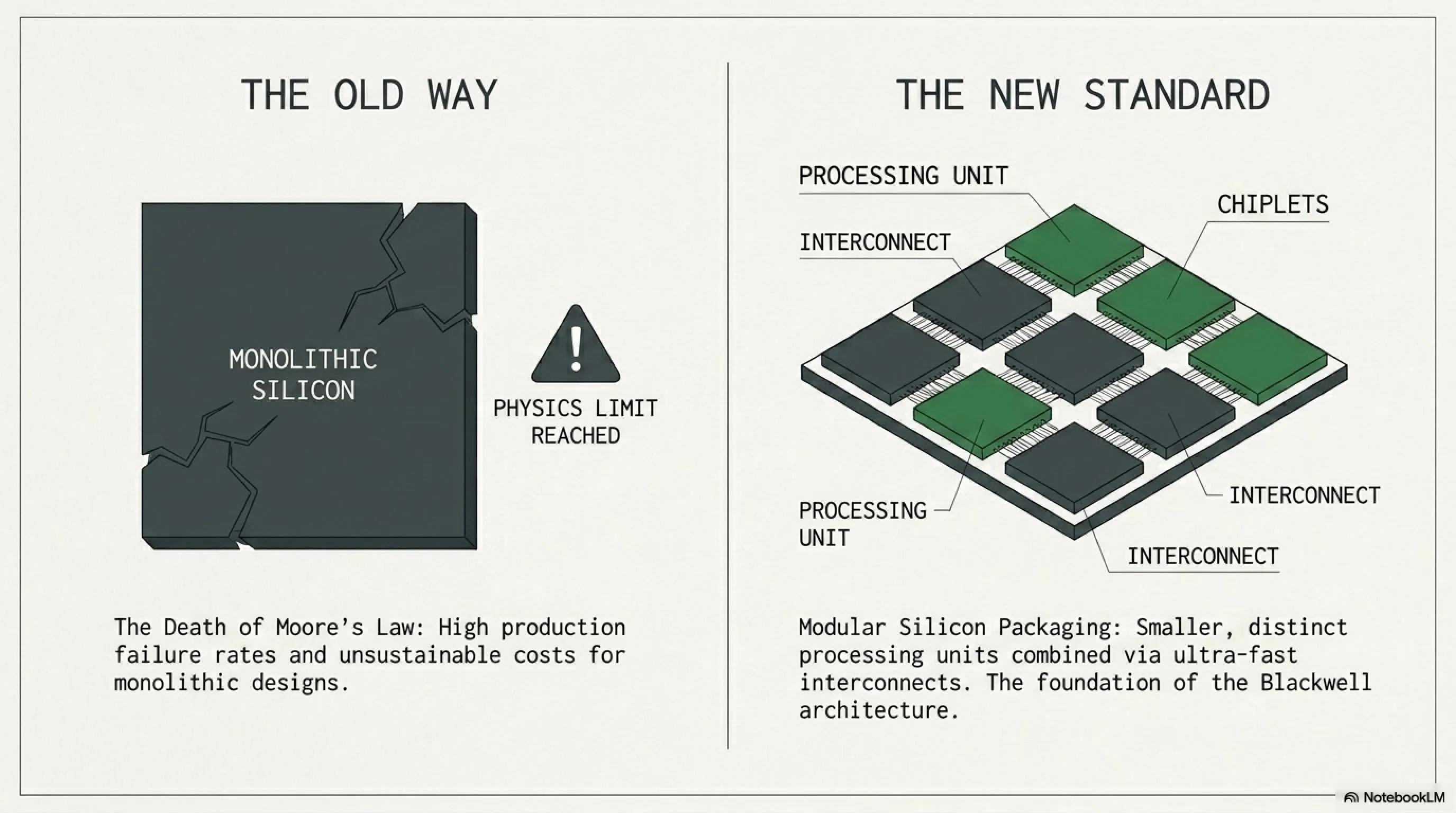

2. Chiplet Architecture and the End of Moore's Law: Snapping Silicon Together

As you may know, "Moore's Law" (the observation that the number of transistors on a microchip doubles roughly every two years) is breaking down as silicon approaches its physical limits. In 2026, NVIDIA and AMD are turning to chiplet architectures to keep building more powerful processors.
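Put as a back-of-the-envelope formula, with $N_0$ the transistor count today and $t$ measured in years, the rule says:

$$N(t) = N_0 \cdot 2^{t/2}$$

Over a decade, that compounds to $2^{10/2} = 2^5 = 32$ times as many transistors, which is the growth curve chiplets are now being used to keep up with.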

📚 Inspector's Hardware Dictionary

- What is a Chiplet?

  - Instead of manufacturing one massive (monolithic) chip, where a single defect can scrap the whole die and costs are astronomical, companies build smaller chips (chiplets) and connect them with ultra-fast advanced packaging. Blackwell itself is built from multiple dies linked together.
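Why do smaller dies cost less? A common first-order model of manufacturing yield is the Poisson model, Y = e^(-A·D0), where A is die area and D0 is defect density. The sketch below compares one large die against the same silicon split into smaller chiplets. The 8 cm² total area and 0.1 defects/cm² are hypothetical, and the model assumes each die is tested before packaging (so only bad pieces are discarded) while ignoring packaging cost and packaging yield.

```python
import math


def poisson_yield(die_area_cm2: float, defect_density_per_cm2: float) -> float:
    """Fraction of dies with zero defects under the Poisson model Y = exp(-A * D0)."""
    return math.exp(-die_area_cm2 * defect_density_per_cm2)


def silicon_cost_per_good_product(total_area_cm2: float, num_dies: int,
                                  defect_density_per_cm2: float,
                                  cost_per_cm2: float = 1.0) -> float:
    """Silicon cost of one working product whose logic is split into num_dies equal dies.

    Assumes known-good-die testing (defective dies are discarded individually)
    and ignores packaging cost, packaging yield and wafer-edge losses.
    """
    die_area = total_area_cm2 / num_dies
    y = poisson_yield(die_area, defect_density_per_cm2)
    # On average each good die costs die_area / y worth of wafer; the product needs num_dies.
    return num_dies * die_area * cost_per_cm2 / y


# Hypothetical comparison: 8 cm^2 of logic at 0.1 defects/cm^2.
monolithic = silicon_cost_per_good_product(8.0, 1, 0.1)
four_chiplets = silicon_cost_per_good_product(8.0, 4, 0.1)
```

With these numbers, one monolithic die yields about 45% (e^-0.8) while each quarter-size chiplet yields about 82% (e^-0.2), so `monolithic` is roughly 17.8 cost units versus roughly 9.8 for `four_chiplets`. Real products give some of that saving back in packaging cost, which this sketch ignores.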

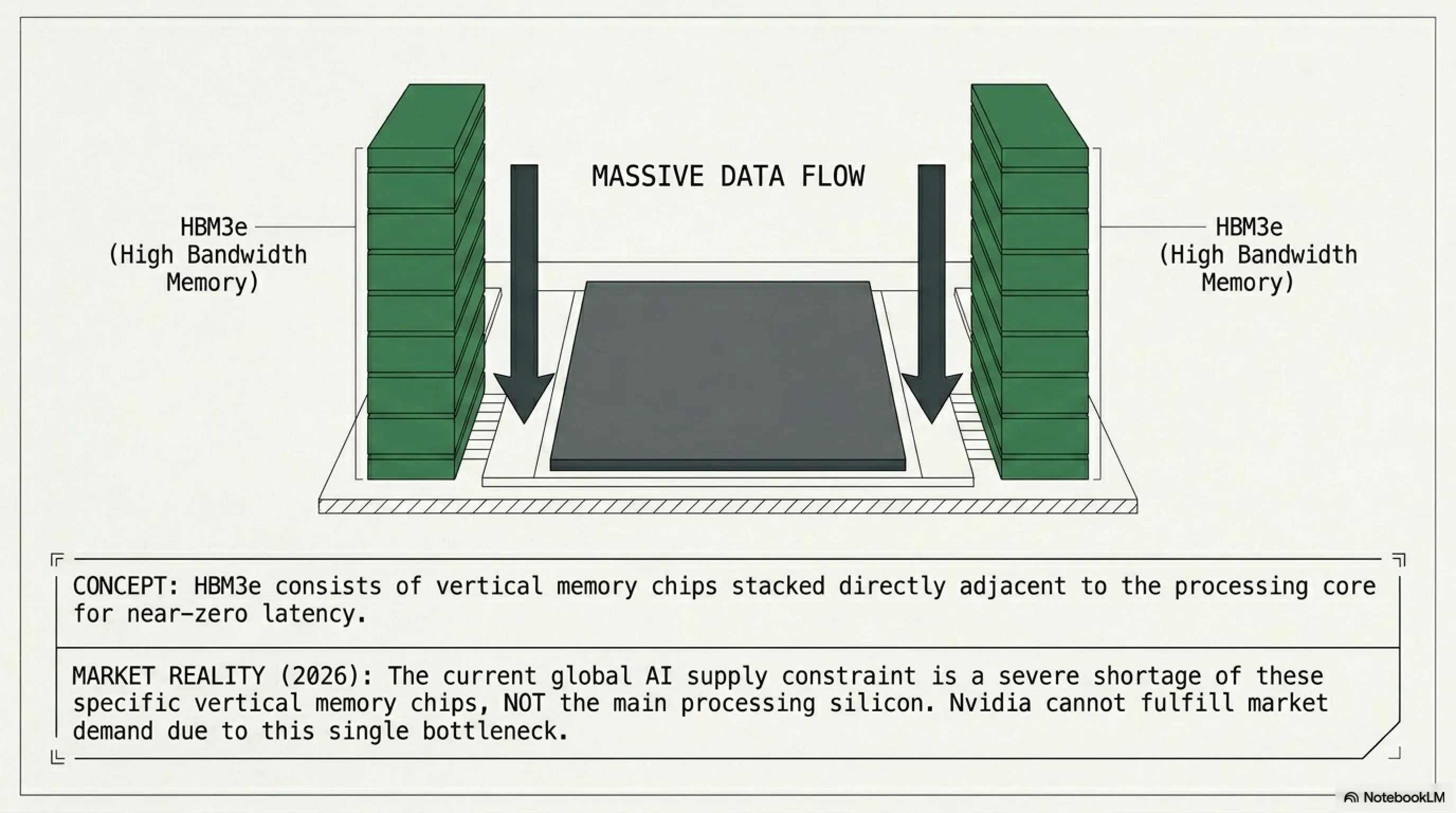

- HBM3e (High Bandwidth Memory)

  - Stacks of vertically layered DRAM dies placed right beside the processor dies on the same package. In 2026, it is the shortage of *these* memory stacks, not of the processor itself, that keeps NVIDIA from meeting market demand!
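The reasoning behind "the memory, not the processor, is the bottleneck" is a simple minimum: a finished accelerator needs one processor package plus several HBM stacks, so output is capped by whichever input runs out first. A minimal sketch, in which the supply figures and the 8-stacks-per-accelerator ratio are hypothetical:

```python
from typing import Tuple


def shippable_accelerators(processor_packages: int, hbm_stacks: int,
                           stacks_per_accelerator: int) -> Tuple[int, str]:
    """Return how many complete accelerators can be built and which input limits output.

    Each accelerator needs one processor package plus `stacks_per_accelerator`
    HBM stacks, so output is the minimum of what each input can support.
    """
    by_processor = processor_packages
    by_memory = hbm_stacks // stacks_per_accelerator  # leftover stacks can't form a unit
    if by_memory < by_processor:
        return by_memory, "HBM"
    return by_processor, "processor"


# Hypothetical quarter: plenty of processor packages, not enough memory stacks.
units, bottleneck = shippable_accelerators(1_000_000, 6_000_000, 8)
```

Here `units` is 750,000 and `bottleneck` is `"HBM"`: a quarter of a million processor packages sit idle waiting for memory.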

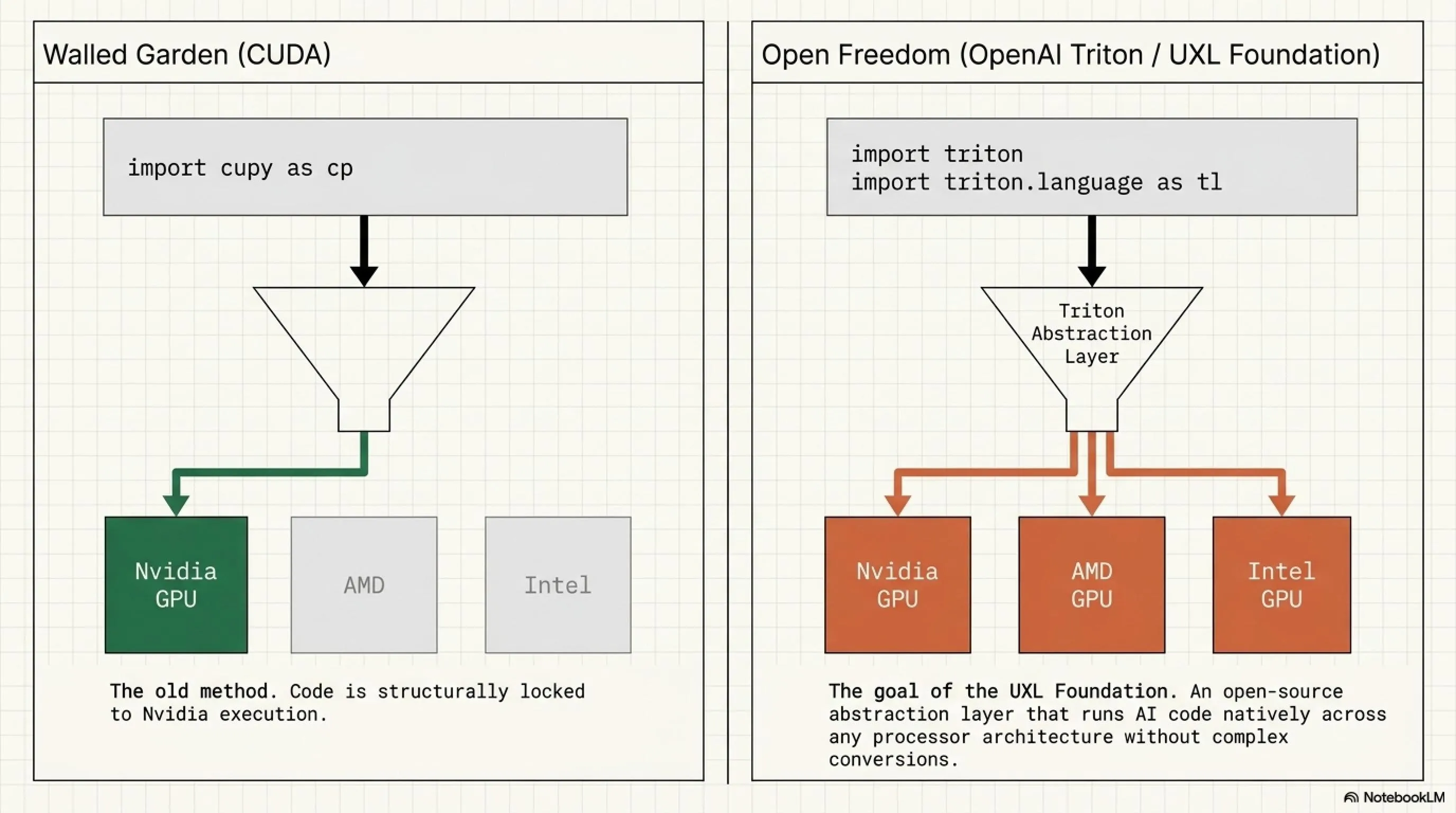

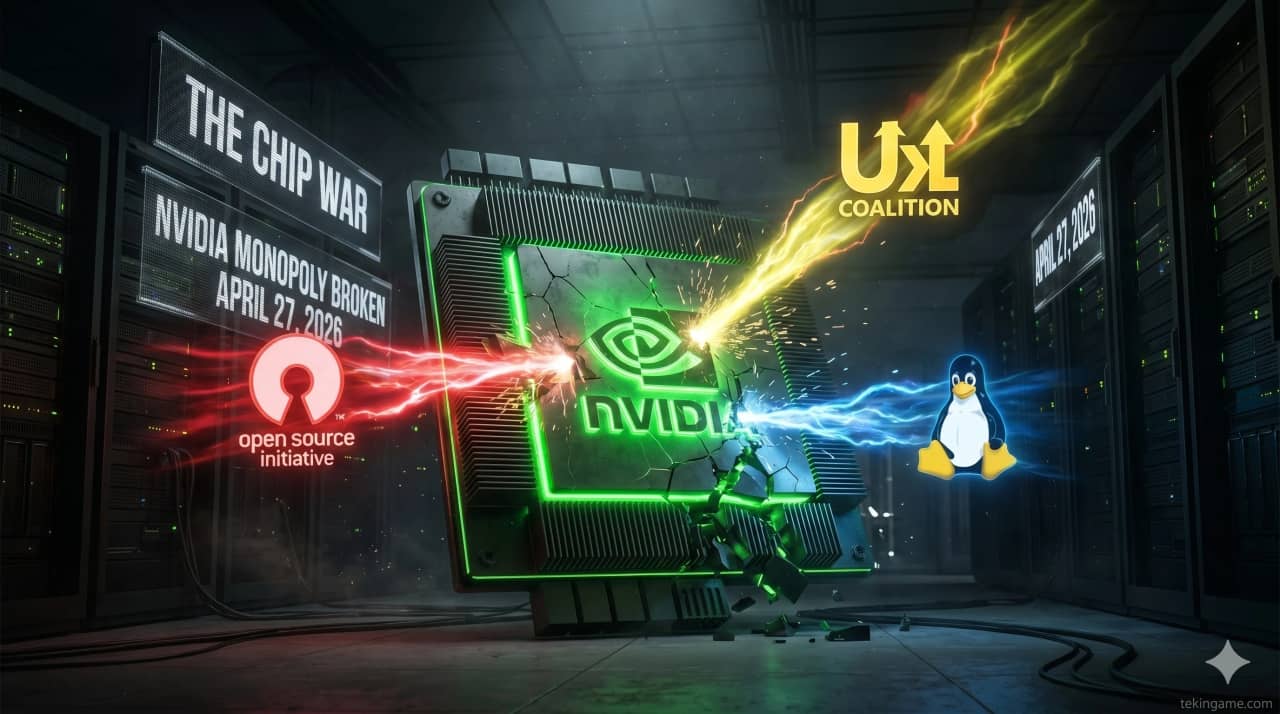

3. The Open-Source Rebellion: How Does the UXL Coalition Plan to Break NVIDIA's Monopoly?

The tech industry hates depending entirely on a single company. That's why the UXL Foundation, whose founding members include Google, Intel, Qualcomm, and Arm, was formed to build an open-source software layer. Their goal? To let AI code run on any processor (be it AMD, Intel, or Google's custom chips) without complex porting work.
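The core idea of a portability layer like the one UXL is pursuing can be sketched as a dispatch table: application code calls one operation name, and each hardware vendor registers its own implementation behind it. This is a hypothetical illustration in plain Python, not UXL's actual API; the names `register` and `dispatch` and the backend labels are invented for this example, and the "amd" backend is a stand-in that simply reuses the CPU math.

```python
from typing import Callable, Dict, List, Tuple

Matrix = List[List[float]]

# Hypothetical registry: backend name -> {operation name -> implementation}.
_BACKENDS: Dict[str, Dict[str, Callable]] = {}


def register(backend: str, op: str) -> Callable[[Callable], Callable]:
    """Decorator a (hypothetical) vendor plugin uses to provide an operation."""
    def wrap(fn: Callable) -> Callable:
        _BACKENDS.setdefault(backend, {})[op] = fn
        return fn
    return wrap


def dispatch(op: str, *args, preferred: Tuple[str, ...] = ("nvidia", "amd", "intel", "cpu")):
    """Run `op` on the first preferred backend that implements it."""
    for backend in preferred:
        impl = _BACKENDS.get(backend, {}).get(op)
        if impl is not None:
            return backend, impl(*args)
    raise RuntimeError(f"no backend implements {op!r}")


@register("cpu", "matmul")
def _cpu_matmul(a: Matrix, b: Matrix) -> Matrix:
    # Portable reference implementation on the host CPU.
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


@register("amd", "matmul")
def _amd_matmul(a: Matrix, b: Matrix) -> Matrix:
    # Stand-in only: a real plugin would launch a kernel on the accelerator.
    return _cpu_matmul(a, b)


# Application code: identical no matter which vendor plugins are installed.
backend, result = dispatch("matmul", [[1, 2], [3, 4]], [[5, 6], [7, 8]])
```

Because the caller only ever writes `dispatch("matmul", a, b)`, adding or removing a vendor plugin changes where the work runs without touching application code. Here the call returns `("amd", [[19, 22], [43, 50]])`, since "amd" is the highest-preference backend that is registered.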

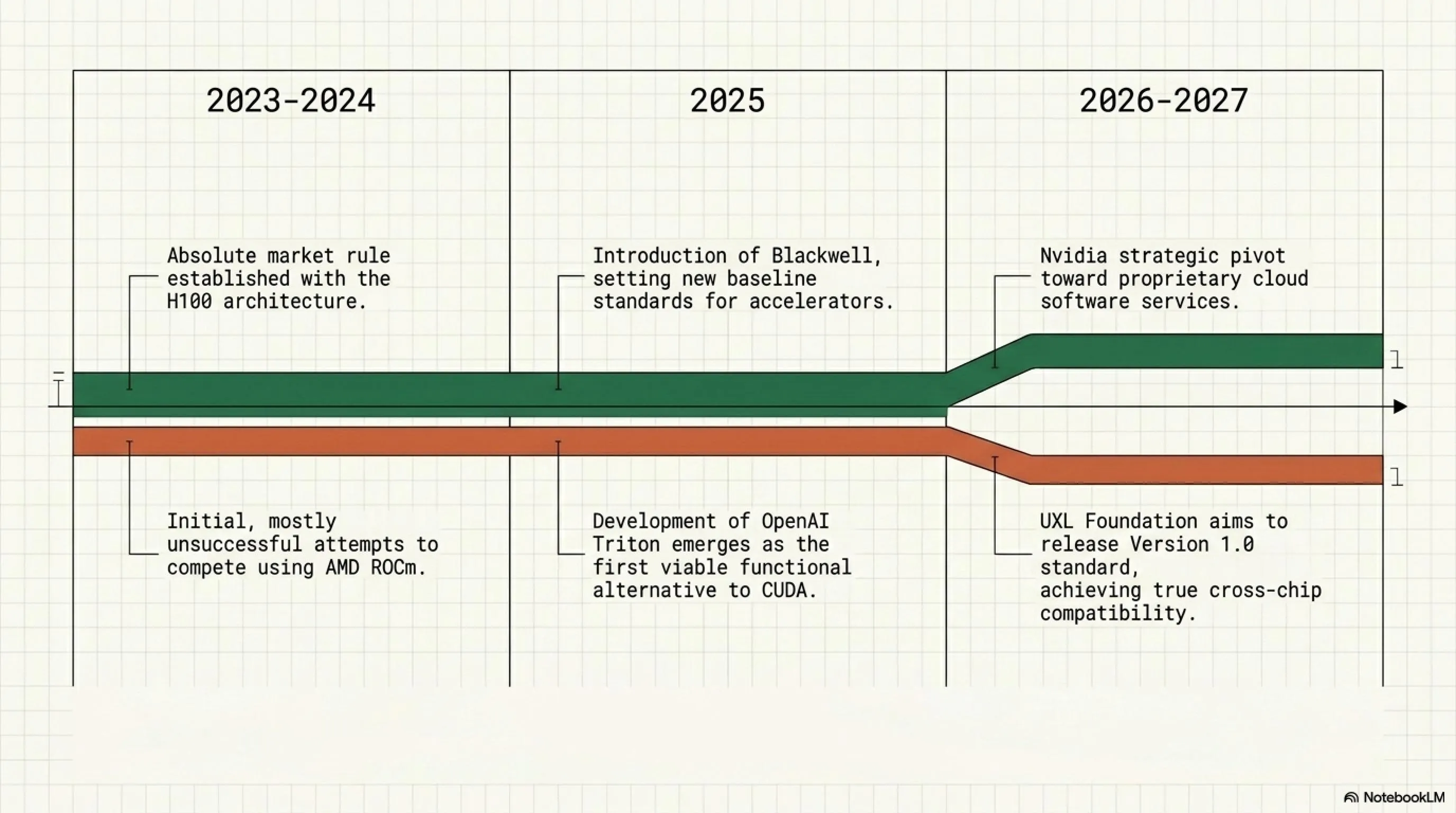

| Year | NVIDIA Monopoly Status | Open-Source Consortium Countermeasure |
|---|---|---|
| 2023-2024 | Absolute Dominance with H100 | Unsuccessful attempts by AMD ROCm |
| 2025 | Introduction of Blackwell (Next-Gen Accelerator) | Development of OpenAI Triton as a CUDA alternative |
| 2026-2027 | Focus on Proprietary Cloud Software Services | Release of UXL Standard v1.0 for all silicon |

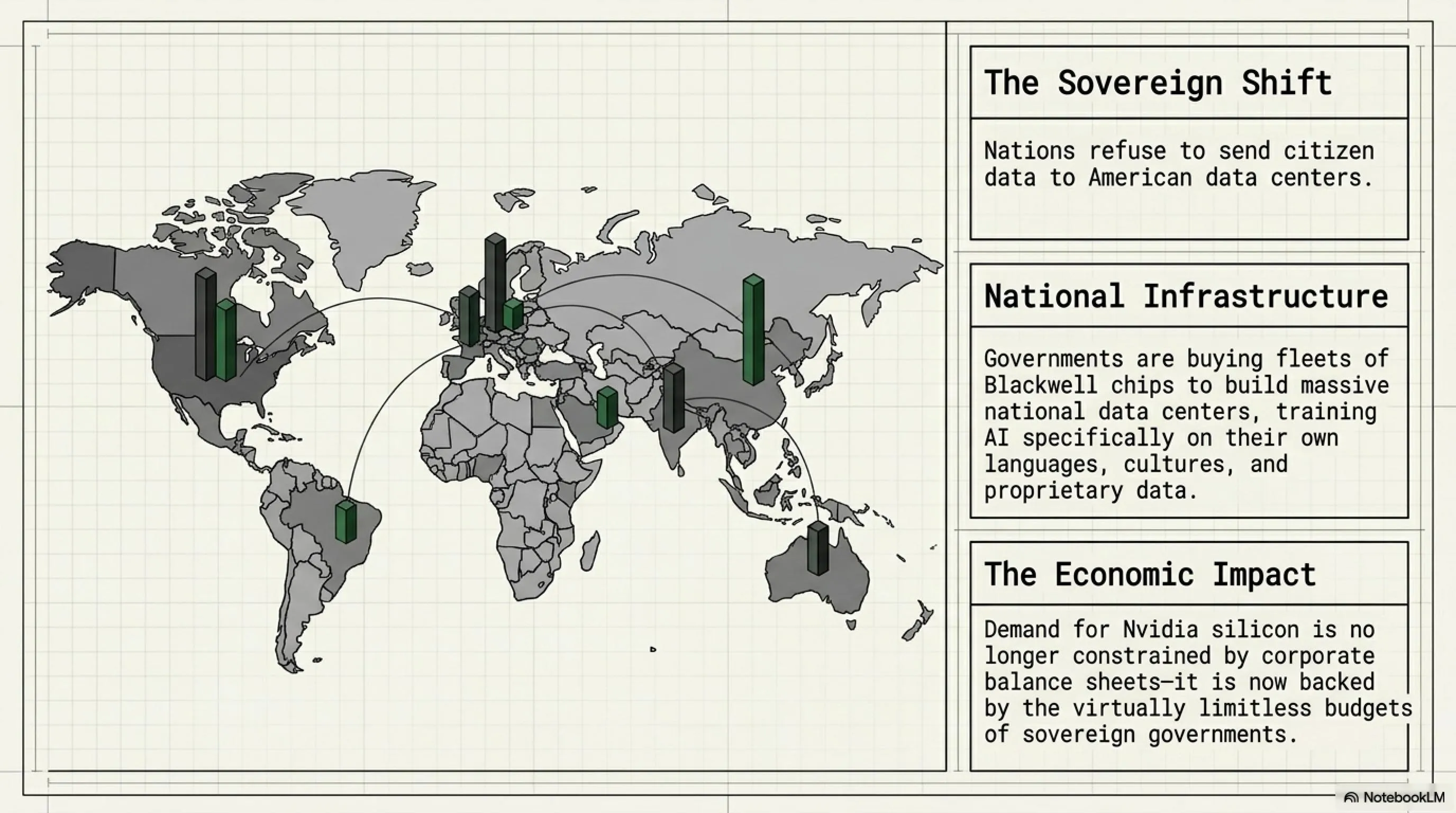

4. Sovereign AI: When Governments Enter the Game

In 2026, a new concept known as "Sovereign AI" has gained immense momentum. Countries no longer want to send their citizens' data to American datacenters. They are building massive national datacenters utilizing Blackwell chips to train proprietary AI models reflecting their own language and culture. This means the demand for NVIDIA chips is no longer just from corporations, but is fueled by bottomless government budgets!

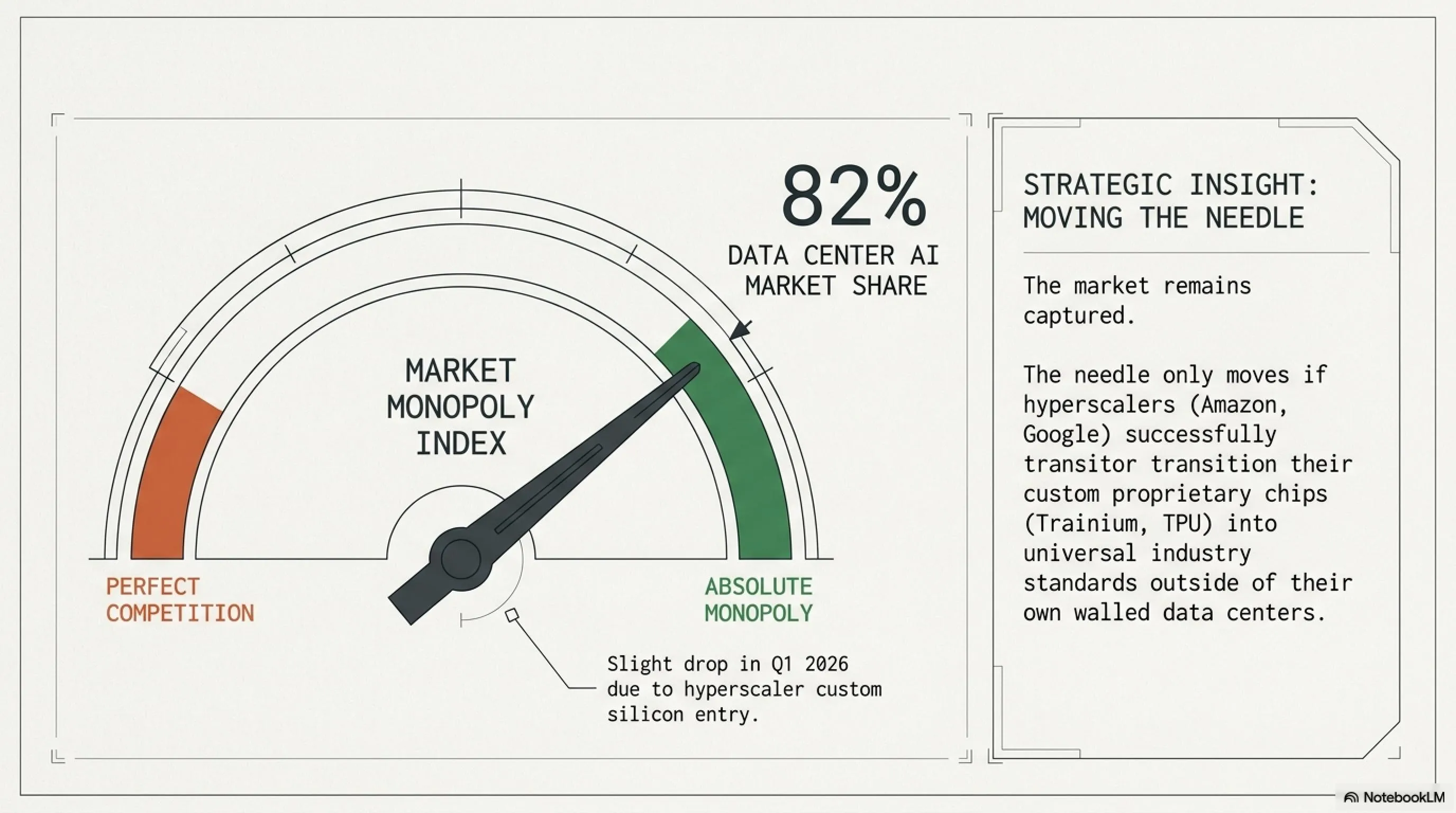

📊 Market Monopoly Index (Vendor Lock-in)

Tekin's Forecast: Until companies like Amazon and Google can turn their proprietary chips (such as AWS Trainium and Google TPU) into an industry standard outside their own cloud environments, this index's arrow will not move to the left (toward competition).

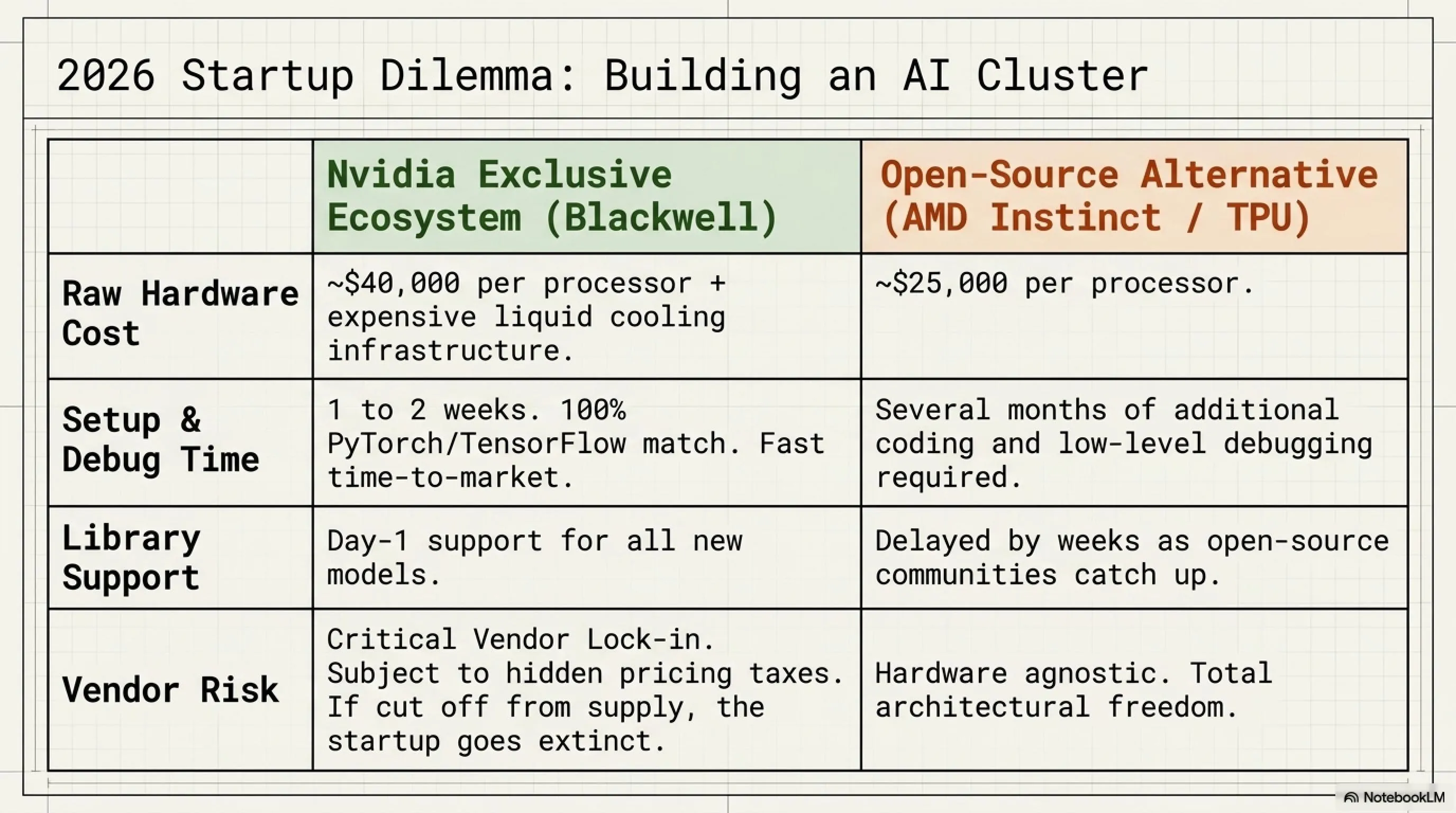

5. The Cost Battle: How Much to Build an AI Cluster?

If a startup wants to train its own AI model in 2026, it faces two paths: buying NVIDIA's integrated turnkey systems (exorbitantly expensive, but with flawless software support) or taking the open-source hardware route (cheaper hardware up front, but months of extra coding and debugging).

| Comparison Metric | NVIDIA Proprietary Ecosystem (Blackwell) | Open-Source Consortium Alternative (AMD / TPU) |
|---|---|---|
| Raw Hardware Cost Per Unit | ~$40,000 | ~$25,000 |
| Time Required for Code Debugging | 1 to 2 Weeks | Several Months |
| Support for Cutting-Edge Libraries | Day-1 Support | Delayed by weeks |
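To see why the table above describes a real trade-off rather than an obvious win for either side, here is a back-of-the-envelope total-cost sketch. Only the per-unit prices come from the table; the cluster size (64 accelerators), the team size (4 engineers), the $20,000 monthly cost per engineer, and reading "1 to 2 Weeks" as 0.5 months and "Several Months" as 4 months are all hypothetical assumptions. The model ignores power, cooling, networking, and the opportunity cost of launching later.

```python
from dataclasses import dataclass


@dataclass
class ClusterOption:
    name: str
    unit_price_usd: float  # per-accelerator price (approximate, from the table)
    debug_months: float    # engineering time spent porting/debugging (assumed)


def total_cost(opt: ClusterOption, num_units: int, engineers: int,
               monthly_cost_per_engineer: float) -> float:
    """Hardware purchase plus engineering labor spent getting code to run.

    Ignores power, cooling, networking and time-to-market effects.
    """
    hardware = opt.unit_price_usd * num_units
    labor = opt.debug_months * engineers * monthly_cost_per_engineer
    return hardware + labor


def break_even_units(premium: ClusterOption, budget: ClusterOption,
                     engineers: int, monthly_cost_per_engineer: float) -> float:
    """Cluster size above which the cheaper hardware pays for its extra debugging time."""
    saving_per_unit = premium.unit_price_usd - budget.unit_price_usd
    if saving_per_unit <= 0:
        raise ValueError("budget option must be cheaper per unit")
    extra_labor = ((budget.debug_months - premium.debug_months)
                   * engineers * monthly_cost_per_engineer)
    return extra_labor / saving_per_unit


# Hypothetical scenario: 64 accelerators, 4 engineers at $20k/month each.
nvidia = ClusterOption("NVIDIA Blackwell", 40_000, 0.5)
alternative = ClusterOption("AMD / TPU", 25_000, 4.0)
```

Under these assumptions, `total_cost(nvidia, 64, 4, 20_000)` comes to $2.6M, `total_cost(alternative, 64, 4, 20_000)` to $1.92M, and `break_even_units(nvidia, alternative, 4, 20_000)` is about 18.7 units. The exact numbers matter less than the shape: cheaper hardware wins on paper once a cluster is large enough, while what the sketch leaves out, the months of delay before a model ships, is exactly the time-to-market cost the article warns about.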

✅ Advantages of the NVIDIA Route

- Unrivaled interconnect and memory bandwidth thanks to NVLink and HBM3e

- First-class upstream support in PyTorch and TensorFlow

- Significantly faster Time-to-Market for finished AI models

❌ Risks of Falling into the NVIDIA Trap

- You become a hostage to NVIDIA's pricing power (Severe Vendor Lock-in)

- If NVIDIA allocates your chips to someone else, your company is starved of compute

- Requires extremely expensive, specialized liquid cooling infrastructure

🔥 Verdict of the Silicon War: Who Wins in 2026?

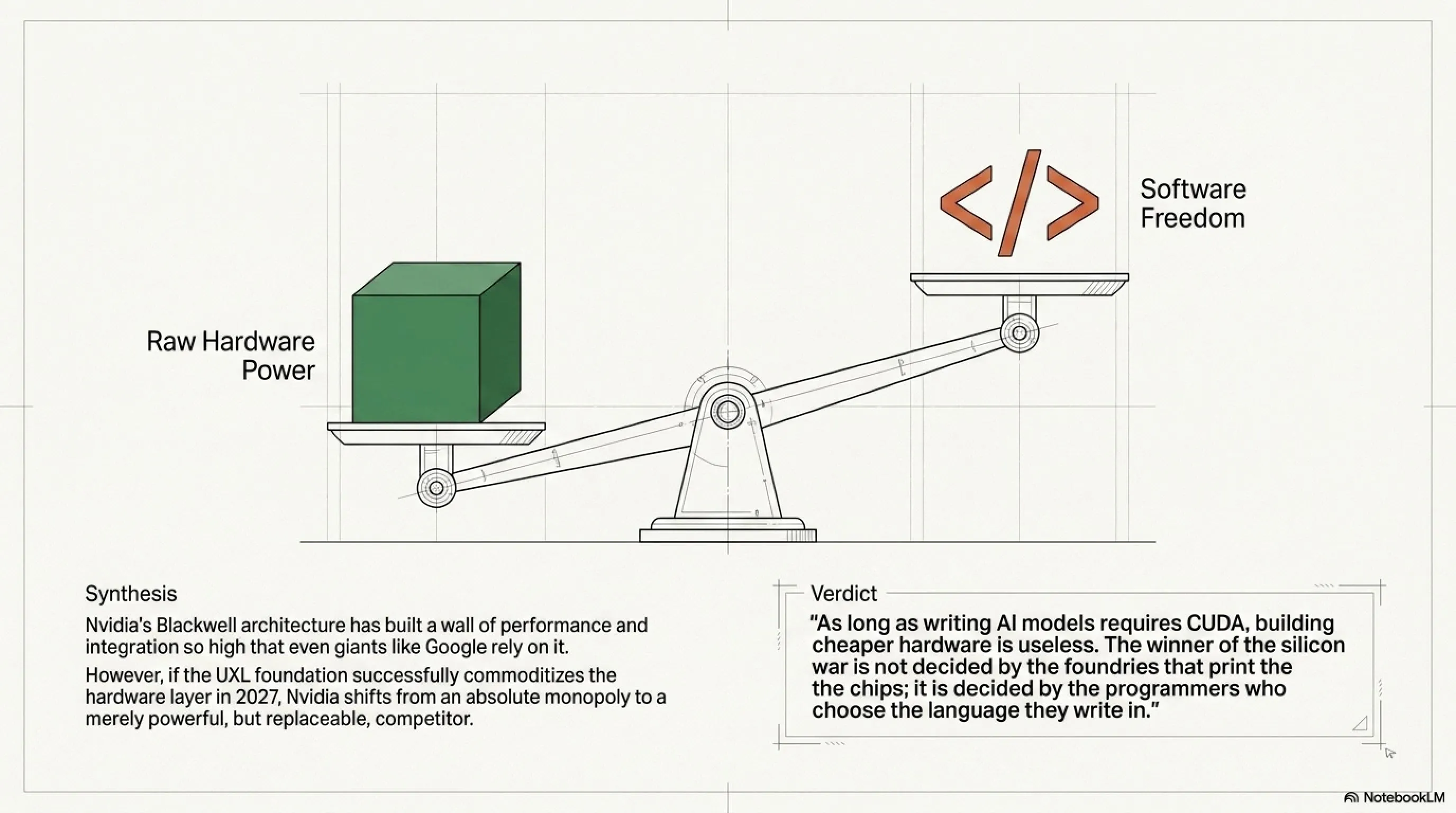

Dear Tekin Legion, the war between NVIDIA and the open-source coalition is a battle of "Brute Hardware Power" against "Software Freedom." With its Blackwell X100 architecture, NVIDIA has built such a high wall of performance and integration that even tech titans like Google and Apple would struggle without it.

However, NVIDIA's Achilles' heel lies in low-level open-source compilers (like OpenAI Triton). If the UXL coalition can establish a platform in 2027 on which AI code runs seamlessly on any silicon without performance penalties, NVIDIA's empire could shift from an absolute monopoly to merely a powerful competitor. But for now, the king of datacenters still wears a leather jacket!

💡 Inspector Tekin's Final Word

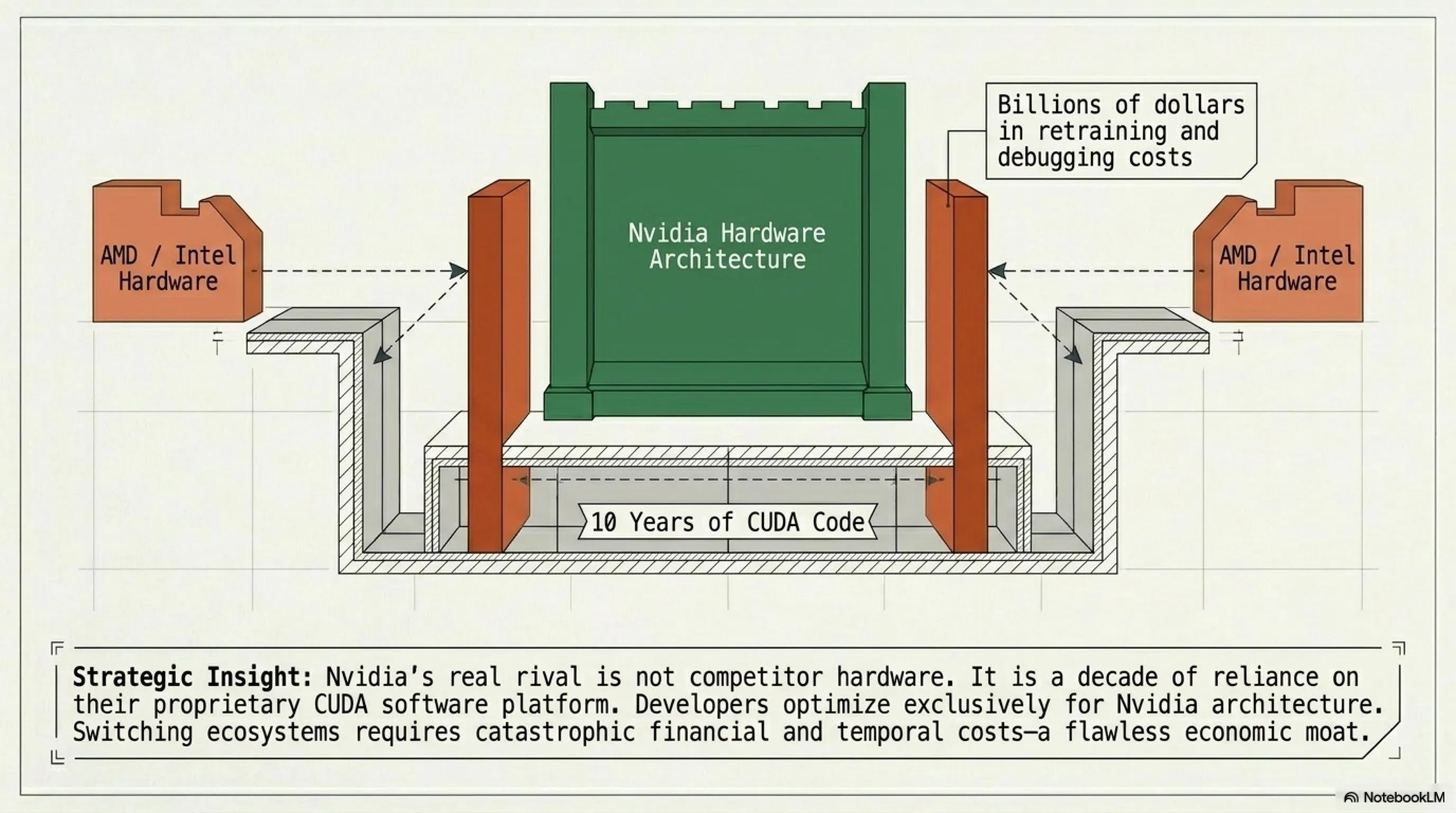

"As long as writing AI models demands NVIDIA's proprietary programming language (CUDA), competitors' attempts to build cheaper silicon are largely futile. The winner of this war won't be decided by hardware manufacturers; rather, it will be decided by developers choosing which language they write their code in."

🤔 Frequently Asked Questions (FAQ)

Could NVIDIA's monopoly stall the broader advancement of AI?

No, quite the contrary. NVIDIA's temporary monopoly has established a unified standard that lets enterprises iterate rapidly. The core issue is the exorbitant capital required, which prices out smaller startups.

Why is Apple largely absent from this specific cloud battle?

Apple is fiercely focused on "Edge AI" (on-device processing via its M4/A18 chips) and has zero interest in heavy datacenter warfare against NVIDIA; it prioritizes personalized, compact local models over cloud leviathans.


🌐 Stay Connected With Us

For the latest tech, gaming, and gadget news, follow us on social media:

📸 Instagram · 🆔 Telegram Arabia · 🆔 Telegram Global · 🆔 Telegram Iran · 💬 Direct Contact · 📧 majid@tekingame.com